前言

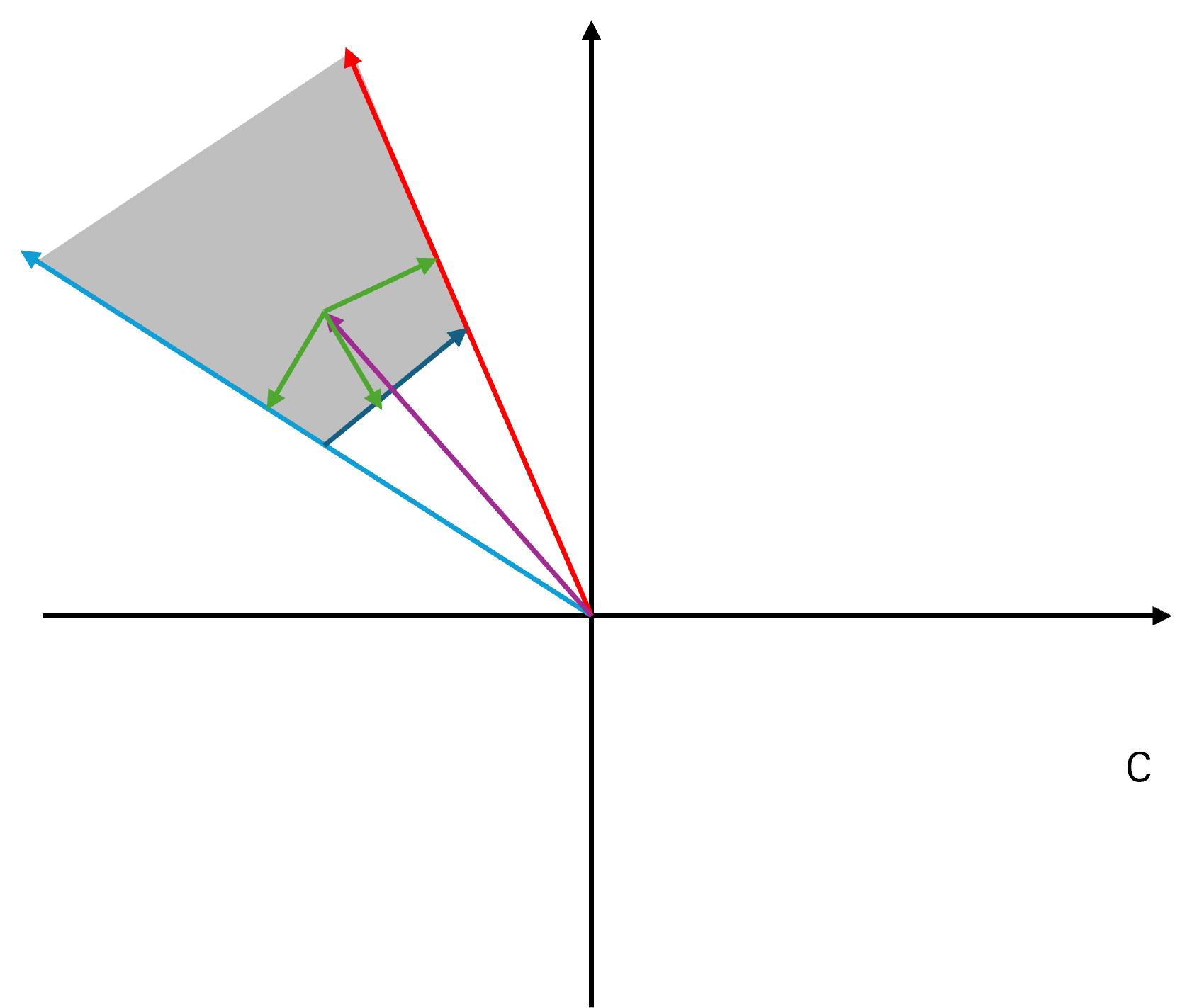

游戏服务端开发是一个非常神秘的软件开发工种,作为游戏开发的两大子类之一,其关注度远低于游戏客户端开发。常见的游戏开发书籍《游戏引擎架构》、《游戏编程精粹》等基本都是介绍客户端相关的渲染、物理、动画等内容。Bilibili上的知名游戏开发经验分享账号Games Webinar上传的五百多个视频里关于游戏服务端的一只手都数的过来,都集中在Games 104里。相对于汗牛充栋的游戏客户端开发书籍,关于游戏服务端开发的书籍可谓屈指可数,比较知名的只有《网络多人游戏架构与编程》。这些游戏服务端开发相关书籍大多在花一半以上篇幅来介绍网络相关的内容:如何使用基础socket来做网络通信,如何降低延迟,服务器与客户端之间的互联拓扑。由于这些内容与常规的互联网服务端开发重合度太高,同时又缺少类似于分布式一致性这类高端内容的介绍,给了读者一种游戏服务端开发没啥技术含量的错觉,以至于知乎上有这么几个提问:互联网服务端技术领先游戏服务端几十年?; 游戏服务端技术/人员整体水平是不是已经落后于互联网服务端?。

正所谓"指法无优劣,功力有高下",游戏服务端与互联网服务端两者要处理的业务有着很大的差异,无法直接对比技术栈。在上面的知乎问题的回答里,很多人都提到了这两者最大的差别:互联网服务是短连接、无状态、高延迟的,而游戏服务是长连接、有状态、低延迟的。但是这种过于干练的概括对于理解游戏服务端并没有很大的帮助。刚好那段时期本人用Python做了太多的游戏节日活动,有点烦躁,想通过自己从零搭建一些游戏服务端通用的组件来更好的理解隐藏在脚本接口之下的游戏服务端的核心业务。在看到这个知乎问题之后,萌生出了一个大胆的想法:用cpp做一个Demo性质的游戏服务端引擎mosaic_game来复刻当前使用的自研闭源游戏服务端引擎的核心功能,同时将这个引擎的功能设计与实现归纳整理为一本完整的书籍,为那些对游戏服务端有兴趣的开发人员提供一份比较系统且充满细节的参考资料。希望通过本书的介绍,能够让大家对游戏服务端开发有一个更全面的认识,了解其功能集合与职责所在。

随着后续工作的变动,逐渐开始接触到其他的商用游戏服务端引擎,最知名的就是BigWorld和Unreal Engine。我所在的大世界服务端组的业务重心就是将BigWorld核心的无缝大世界功能移植到Unreal Engine上,以提升Unreal Engine的单场景玩家承载能力。在做这个无缝大世界功能移植的过程中需要反复的去查阅这两个引擎的源代码,再加上我自己闭门造车的mosaic_game,发现虽然这三个游戏服务端引擎面对的问题相似,但是对应的解法却各有不同。同一个问题三种解法,着实有趣。

兴致起来了之后,我便修改了原有的开发计划,给mosaic_game增加了无缝大世界的支持。同时本书的内容也进行了大量的扩充,同一个业务主题会多个章节来分别介绍这三个引擎的解决方案,大致覆盖了下述内容:

- 通信管理,包括客户端与服务器之间,服务器与服务器之间的通信

- 会话管理,包括账号创建、登录、顶号、下线、断线重连等

- 数据持久化,包括玩家数据、场景数据、社交数据等

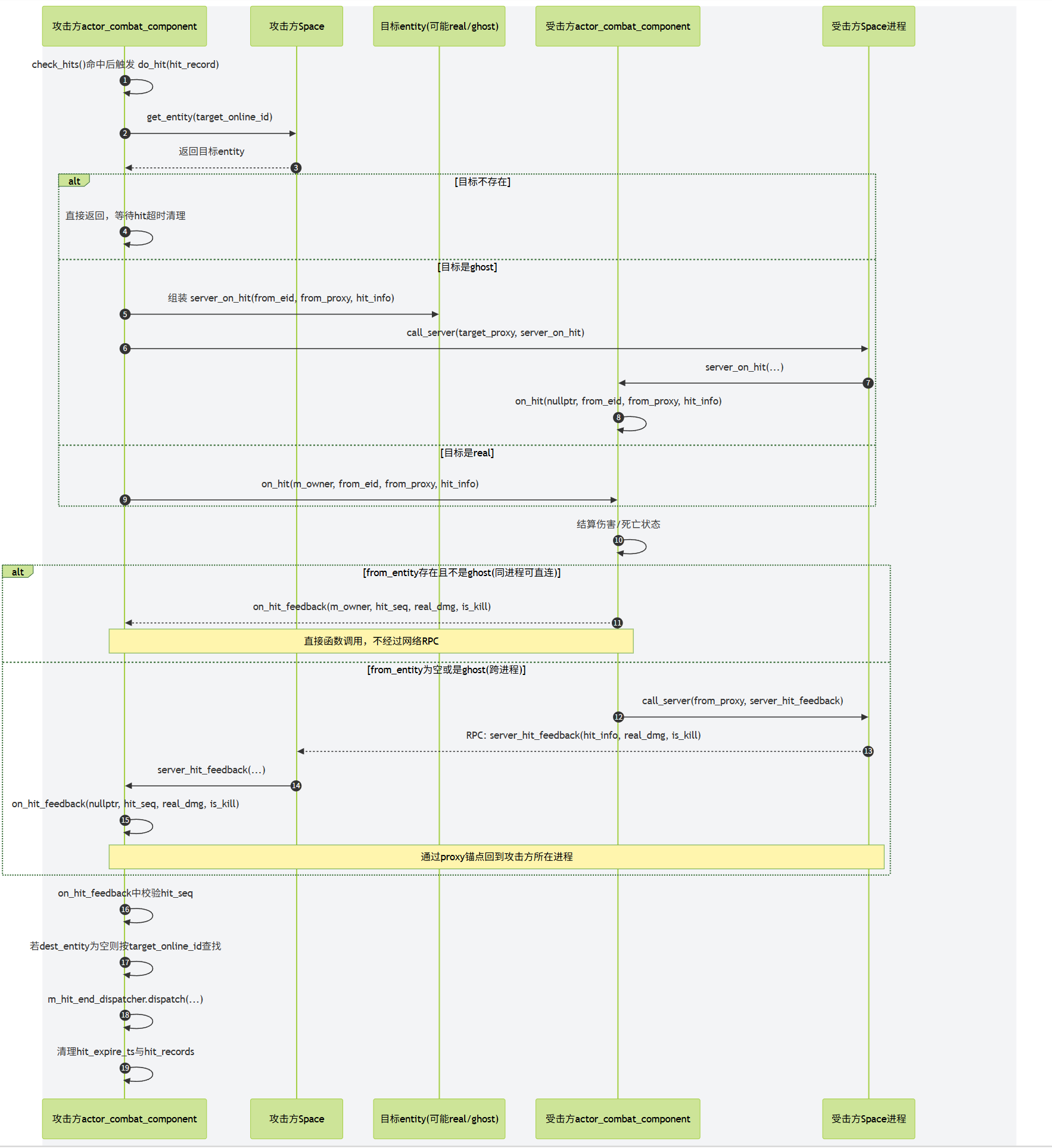

- 客户端请求响应,包括移动、聊天、社交、战斗、场景切换等

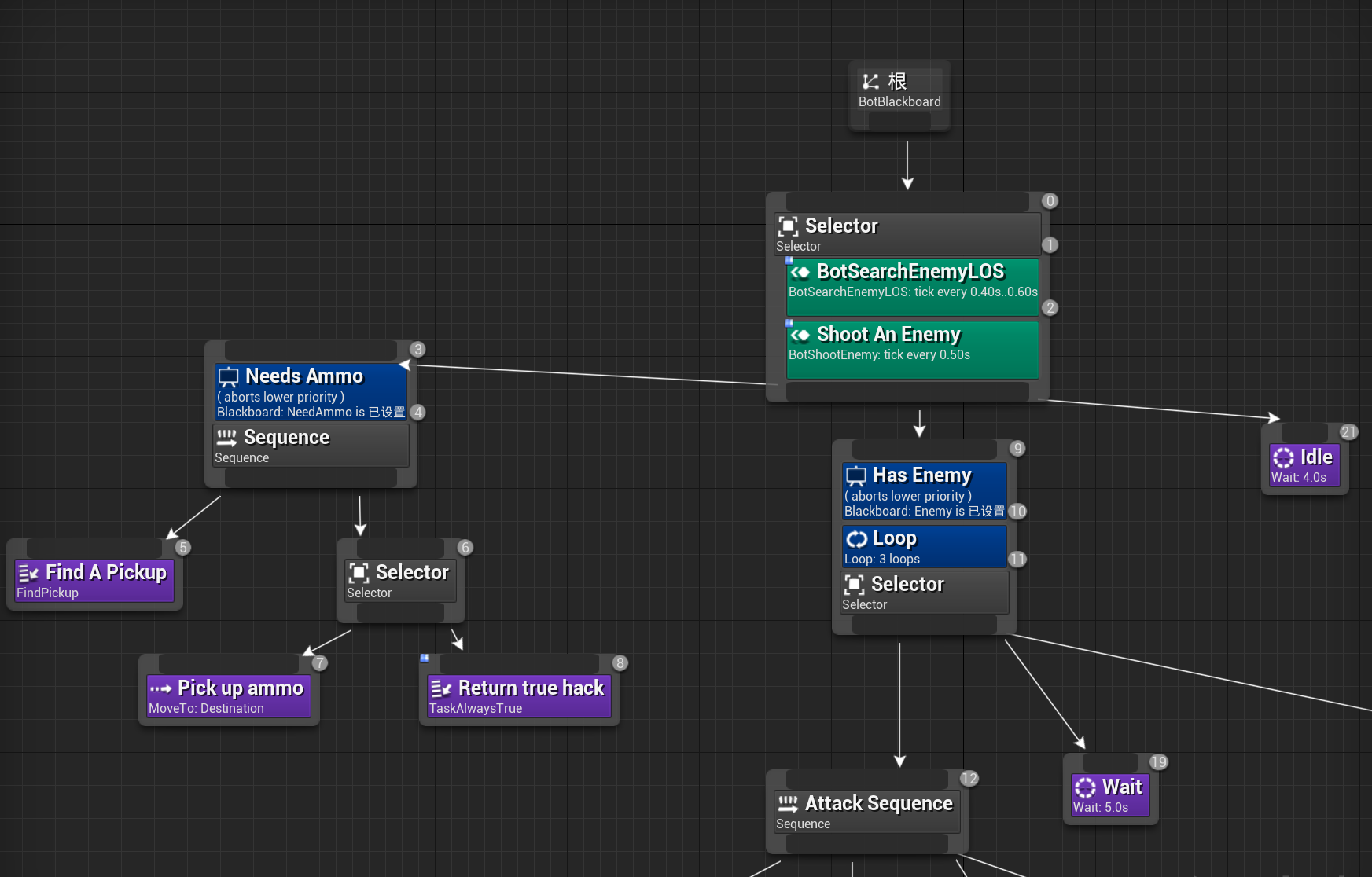

- 玩法流程控制,包括副本流程、怪物

AI等 - 实体状态同步,包括实体的位置同步、属性同步等,

- 分布式场景管理,包括分布式场景的动态扩缩容、无缝迁移等

在介绍这这些内容的时候,我都会附加这些内容的核心代码来方便读者去了解这些内容的实现细节。在章节膨胀和代码填充这两个因素的影响下,本书的篇幅也从原来的600页不到扩充到了现在的1500页。太多的代码贴在书中,会导致书籍的可读性下降。如果读者不想深究实现细节的话可以对这些代码进行跳过,对于不感兴趣的章节也可以跳过。

由于本人经验和精力有限,上述内容之外的一些游戏服务端的非常重要的业务并没有在本书中展开介绍,例如技能与战斗系统、脚本系统、日志收集系统、性能监控系统、运维部署系统、热更新系统、容灾系统等,欢迎对这些内容有深刻理解的游戏服务端开发人员来对本书进行补充。

游戏服务端架构介绍

游戏服务端架构演进

其实关于游戏服务端架构演进的内容,已经有很多前辈总结过了。本人从业没多少年,经历过的项目也是屈指可数,所以本章的存在只是为了全书的完整性,简单的介绍一下。对于更多细节感兴趣的读者可以去阅读skywind3000前辈写的游戏服务端架构发展史。

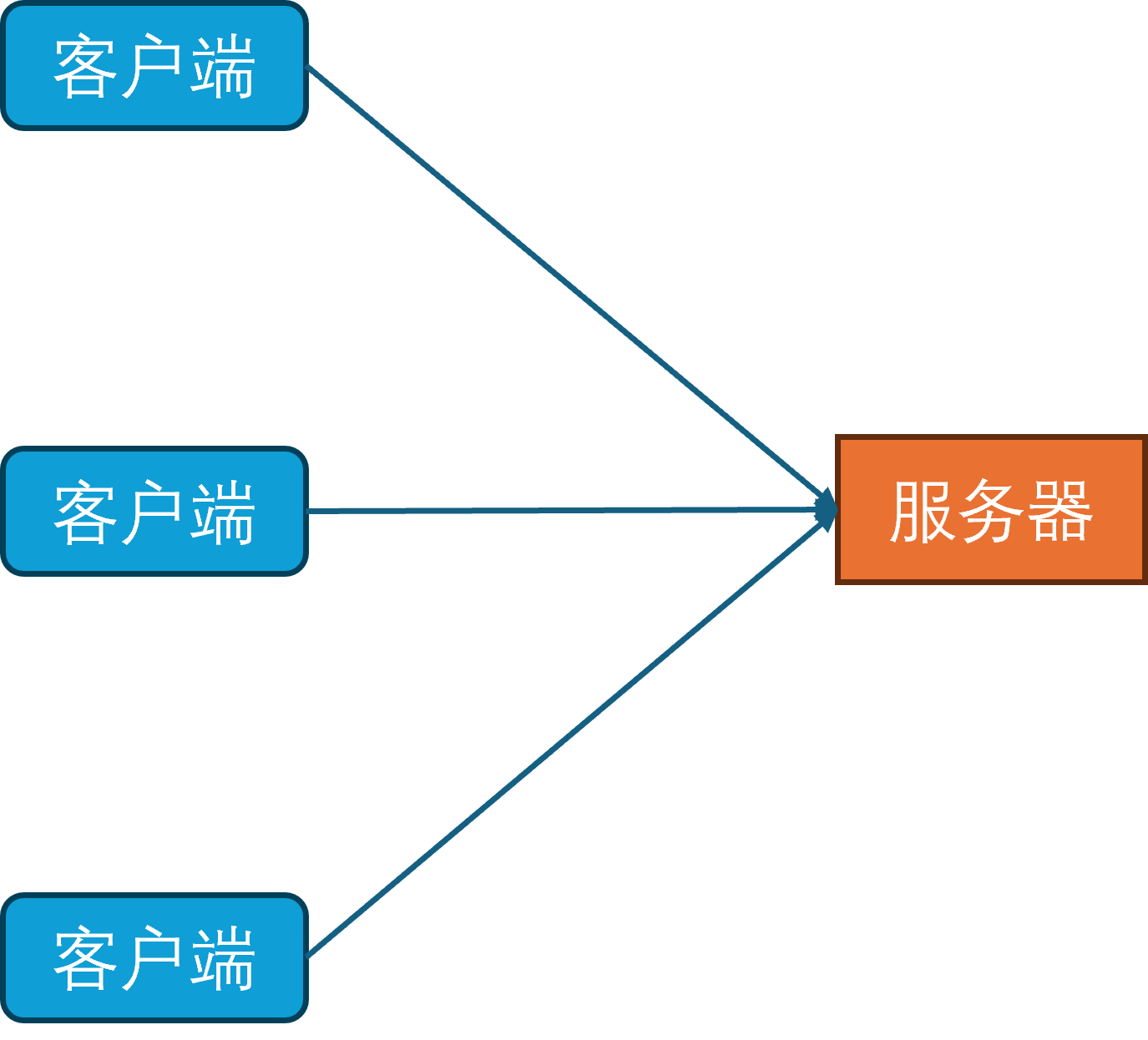

单进程架构

单进程架构是游戏服务端最简单也最久远的架构,起源于1978年诞生的游戏服务器Multi-User Dungeon,简称为MUD1。此时游戏服务器只有一个进程,玩家的客户端可以通过telnet协议连接到游戏服务器,然后就可以通过文本输入来控制自己的角色来进行游戏。

在MUD1中,服务器进程只有一个线程,网络收发使用非阻塞的网络IO模型,同时线程里会每隔1秒钟更新一次所有对象(网络收发,更新对象状态机,处理超时,刷新地图,刷新NPC)。同时玩家数据的持久化使用的是本地文件系统,每个玩家的数据都存储在一个单独的文件中。

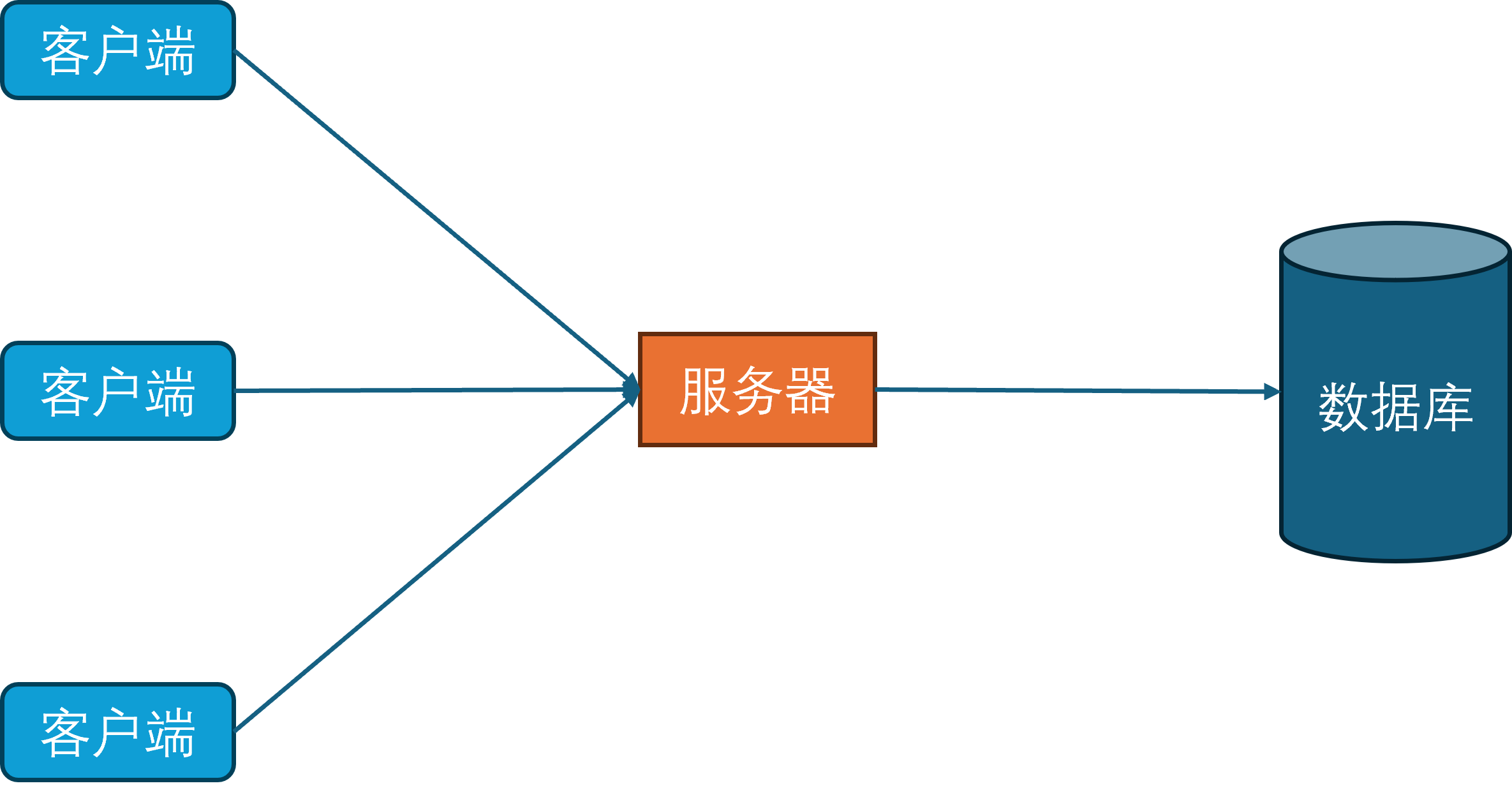

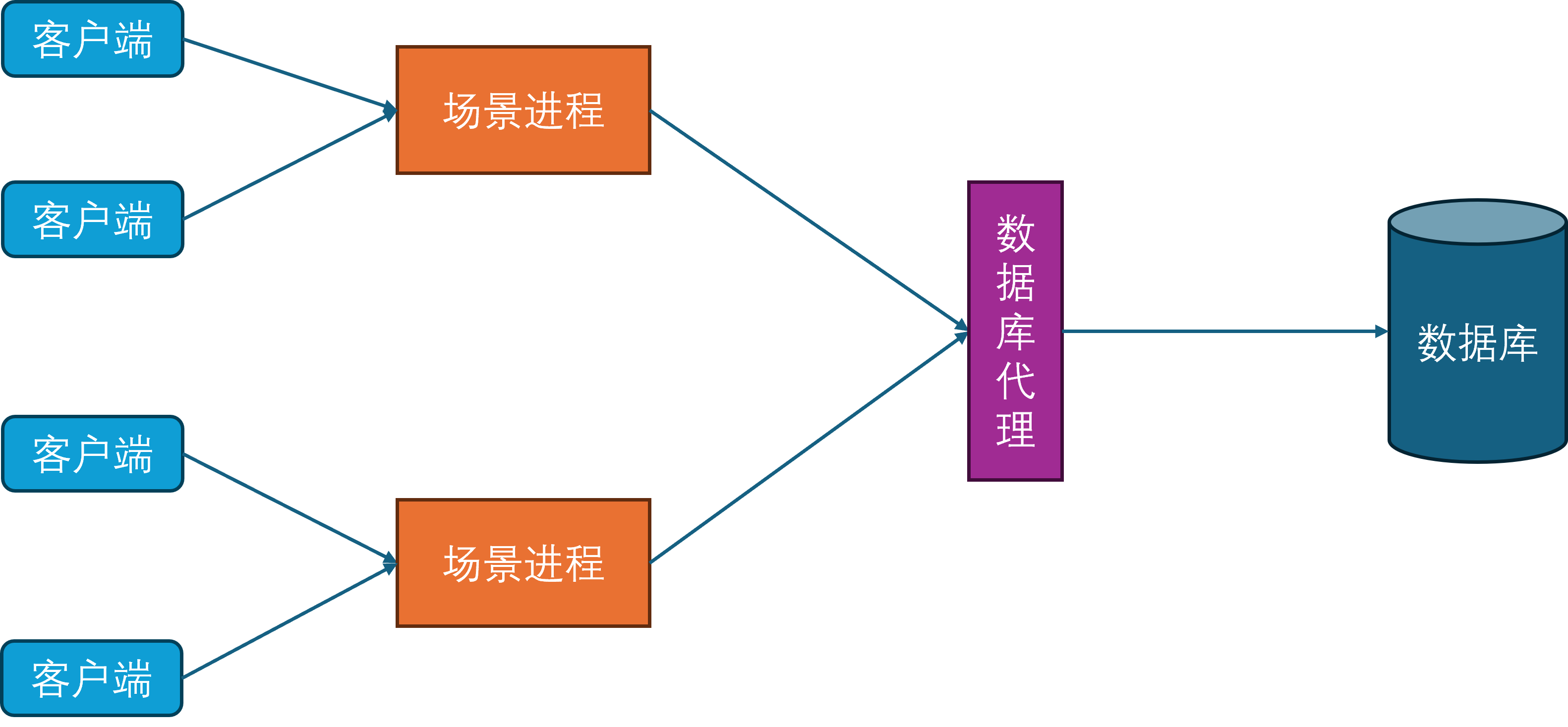

数据库进程

随着互联网和硬件的发展,游戏内容也从纯文字进化为了图形化。由于图形化界面相对于纯文本界面能携带更多的信息,就使得单个玩家的游戏数据量急速膨胀。同时由于网络的逐渐普及,游戏服务器要服务器的玩家数量也逐渐增多。在这两个因素的影响下,基于小文件的持久化系统已经力不从心,因此开始引入数据库作为新的持久化手段。

同时为了规范化游戏内对数据库的访问,开始引入数据库访问层。数据库访问层负责处理所有对数据库的访问请求,包括玩家数据的读取和写入。

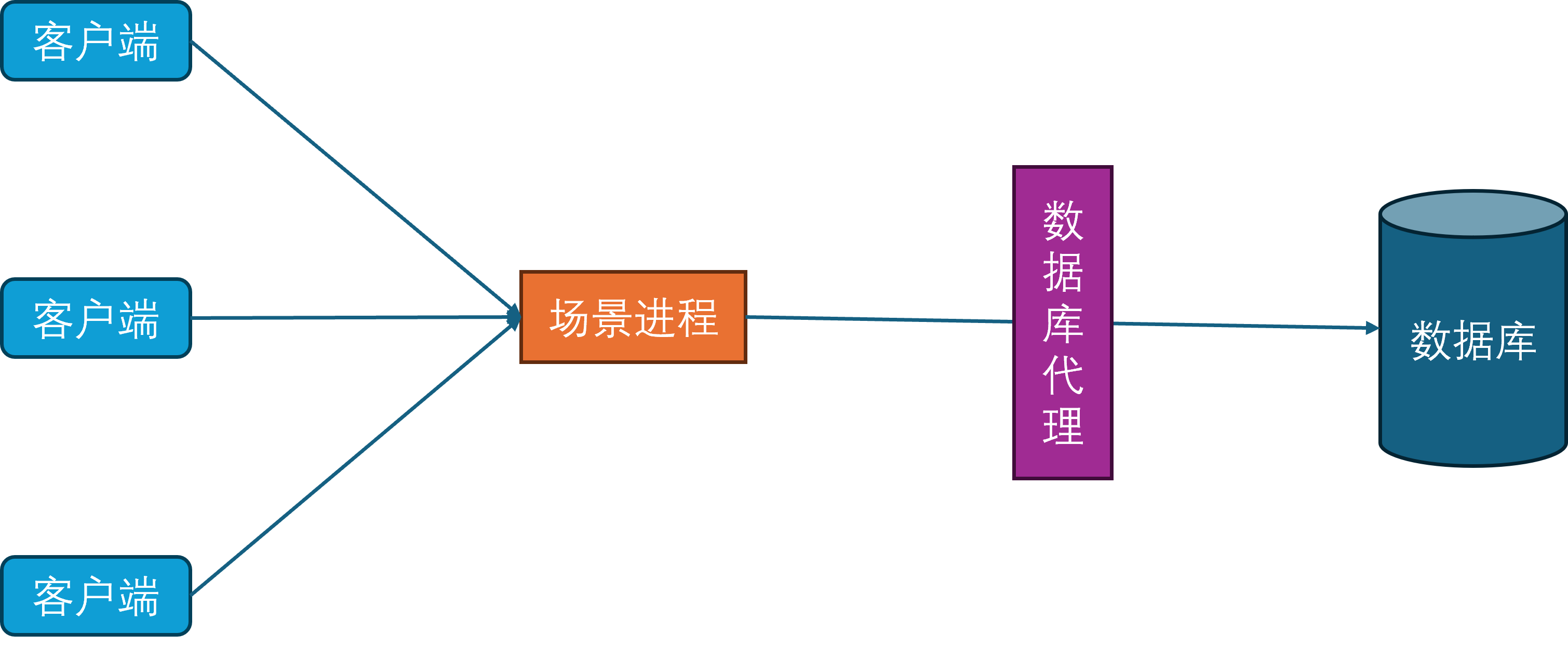

场景进程

当玩家在线数量持续增多时,当前的单场景进程的CPU就成为了瓶颈。为了提高游戏服务器的同时在线人数,开始对游戏场景进行水平划分,每个场景进程负责若干个独立的场景,每个场景负责管理一部分玩家。这种结构也就是常说的分区分服。

在这样的设计下,只要有无限的场景进程实例,就可以无限的扩展游戏服务器的同时在线人数。

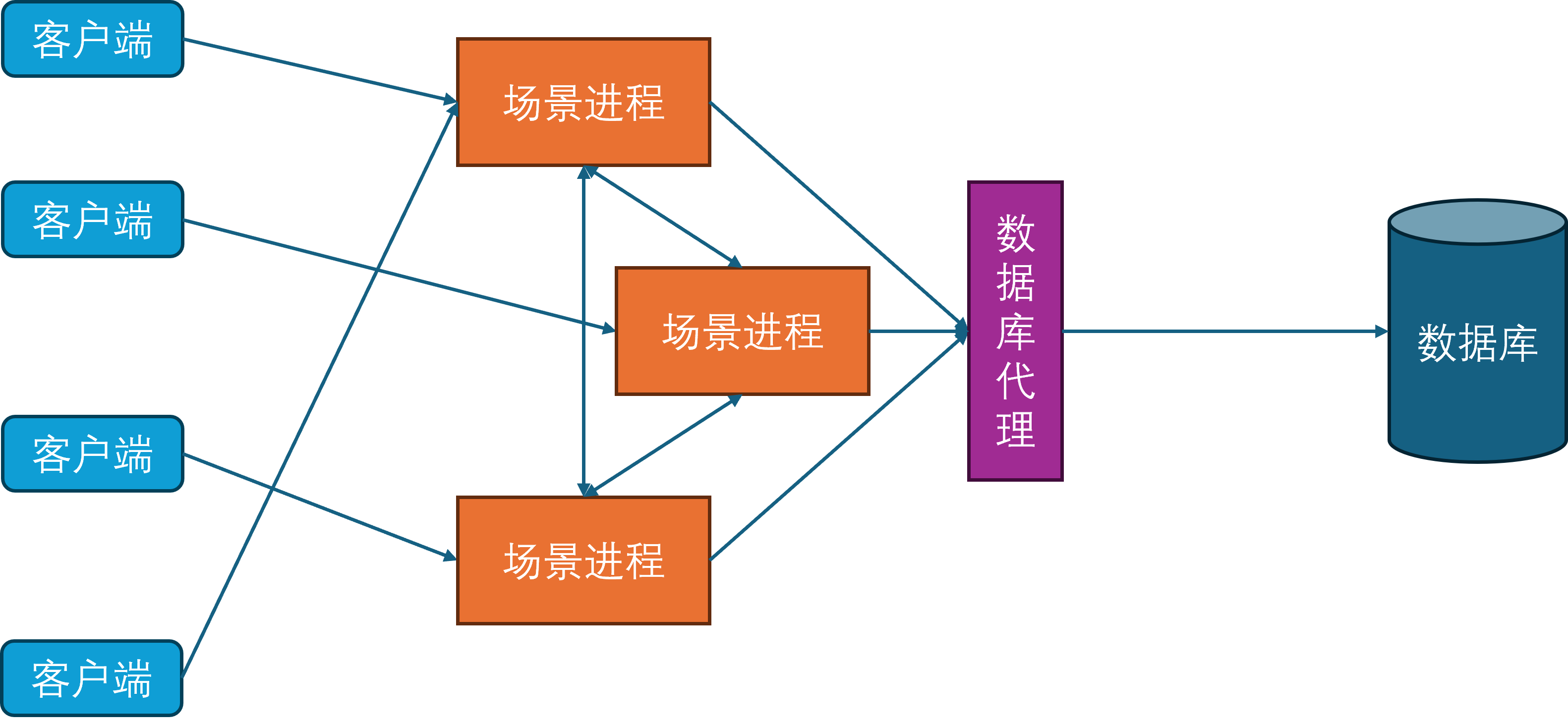

由于玩家不会一直呆在同一个场景里,但是不同的场景被不同的场景进程负责。此时最简单的方法就是从原有的场景进程中下线并断开连接,然后往新的场景进程发起登录请求,这个下线再上线的过程会涉及到非常多的状态修改和数据交互。为了避免这些操作对游戏的影响,不同的场景进程之间开始出现互连,这样玩家数据就可以从一个场景进程传递到另外一个场景进程,避免了存库再从数据库中进行拉取的操作,这个过程就叫做玩家的迁移(Migration)。此时客户端只需要在迁移完成之后根据角色所在的最新场景进程通信地址发起一个新连接,同时断开对老的场景进程的连接,即可恢复游玩。这样就避免了繁重的上下线流程,减少了切换场景进程的延迟。

网关进程

由于常见的网络协议都建立在TCP之上,而TCP连接的握手和关闭过程都比较冗长,这就给玩家切换场景进程带来了不少的延迟。为了进一步减少这种迁移延迟,开始引入网关进程。网关进程承担的角色与Nginx类似,作为反向代理中转场景进程与客户端之间的通信,因此网关进程会与所有的场景进程建立连接。

此时客户端不再连接场景进程,只连接网关进程。这样做的好处是当一个玩家切换场景服务器的时候,客户端连接是不会断的,只需要在网关进程上更新一下这个客户端对应的场景进程关联。同时由于网关进程的功能比较简单,基本只负责通信转发,所以其单进程承载量可以达到上万。网关进程的引入还有一个好处就是可以隐藏后面的场景进程,提供一个统一的接入点的同时,避免了内部服务器暴露在公网,从而减少了服务器被DDOS和入侵的风险。

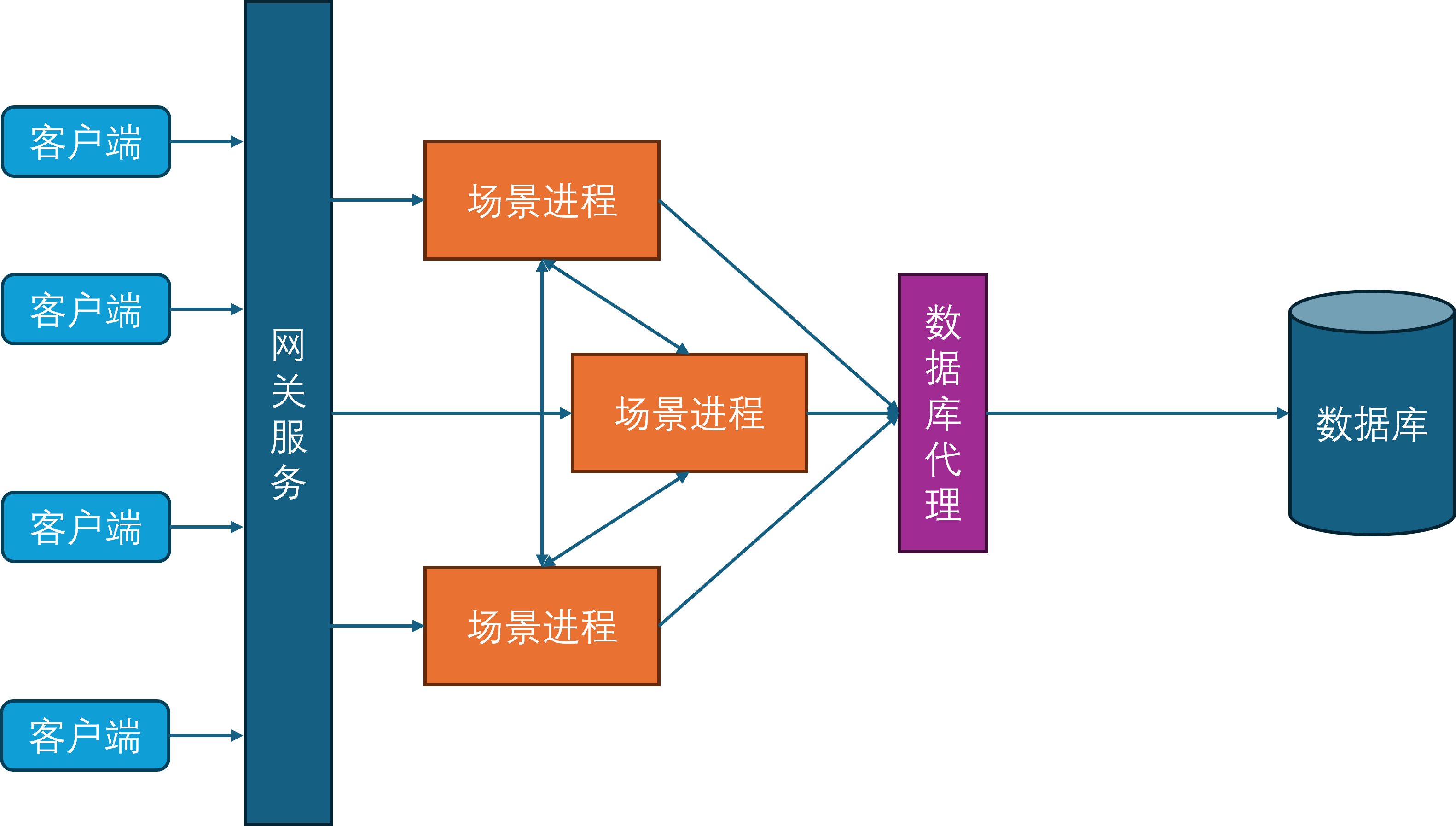

服务进程

随着游戏社交性的增强,游戏内玩家的交互对象不再仅限于同场景的其他玩家,还可以与其他场景的玩家进行交互,典型例子就是在副本内与其他不在当前副本里的好友聊天。为了支持这样的与场景无关的玩法需求,开始引入服务进程。服务进程负责处理所有与场景无关的游戏逻辑,比如登录服务、场景管理服务、玩家之间好友、组队、聊天等。

此时所有的场景进程都与服务进程建立连接,服务进程也会与其他的所有服务进程发起连接,因为服务之间可能出现相互调用,例如给好友发消息会先通过好友服务验证两者之间的好友关系是否存在,然后再通过聊天服务将聊天记录存库,最后再通过在线通知服务来提醒对方进行消息接收。

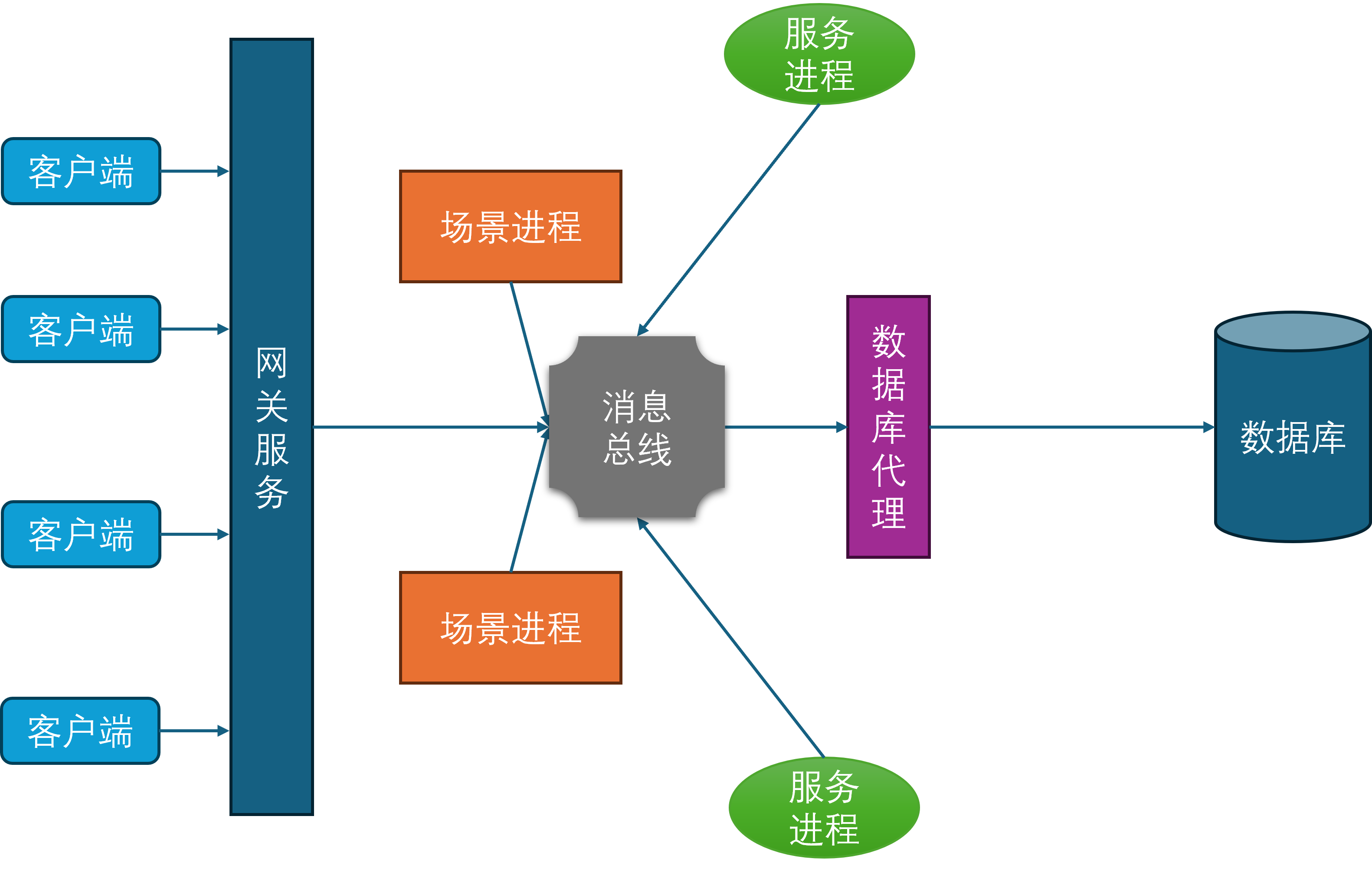

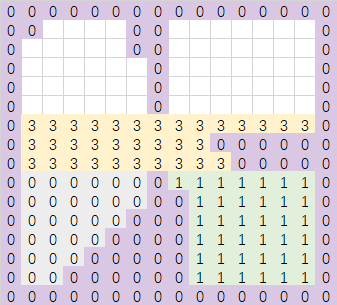

消息总线

在上面的服务器架构图中可以发现,场景进程与服务进程在某种程度上来说是等价的,对于包含所有场景进程的集合A和包含所有服务进程的集合B而言,A中的任意进程都需要维持到A+B这个集合中任意进程的连接,同时B中的任意进程也需要维持到A+B这个集合中任意进程的连接。所以A+B集合内的连接数量是的,是集合总大小的平方复杂度。虽然服务进程是有限的,但是场景进程基本上可以水平的无限扩充,同时场景进程还可以动态的扩缩容。在这种情况下,随着场景进程的数量逐渐增多,连接管理也会越来越复杂。为了减少连接管理的复杂度,隐藏进程间通信的细节,开始引入消息总线进程。

所有的场景进程、服务进程、网关进程、数据库代理进程都与消息总线建立连接,并通过消息总线来进行通信。这样就避免了每个进程都需要维护到其他所有进程的连接,同时也隐藏了进程间通信的细节,简化了开发和运维的复杂度。

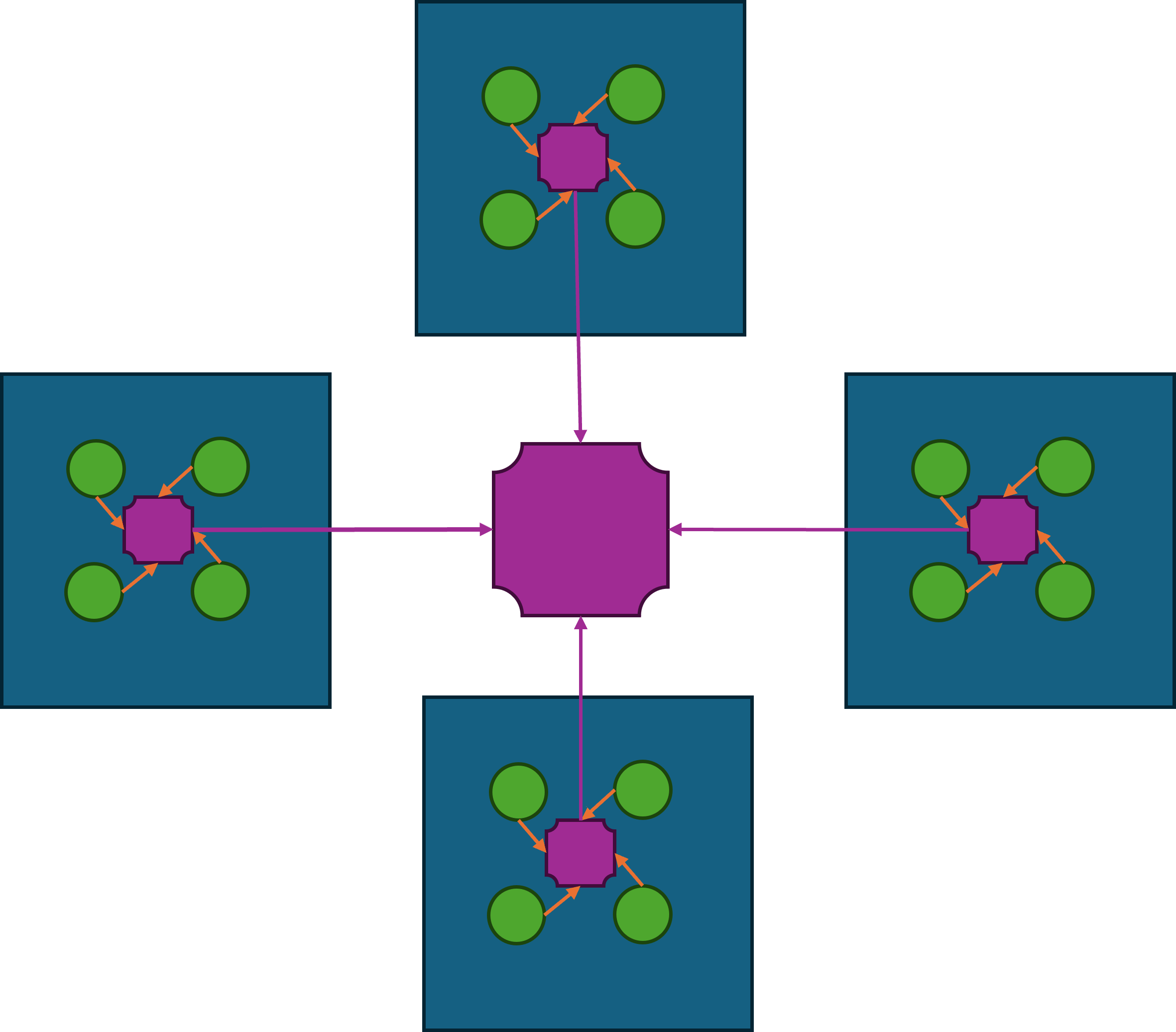

一般来说,消息总线相关的进程会在每台物理机器上部署一个,这台物理机上的所有其他进程都会发起到本机消息总线进程的连接。然后每台机器上的消息总线进程又会统一的连接到一个全局的消息总线分发器,这个分发器会负责将消息路由到对应机器的消息总线进程上,并由目标机器的消息总线进程进一步分发到业务进程中。这样设计相对于所有业务进程都直接连接到全局的消息总线分发器来说,能够有效的减少消息总线分发器的网络连接数量。虽然消息转发相对于直接通过消息总线分发器来转发多了两次通信,但是这两次通信都是本机通信,速度很快,因此不会带来显著的延迟。如果消息的目标在本机的话,则不需要经过消息总线分发器,直接可以通过本机的消息总线进程来直接投递。

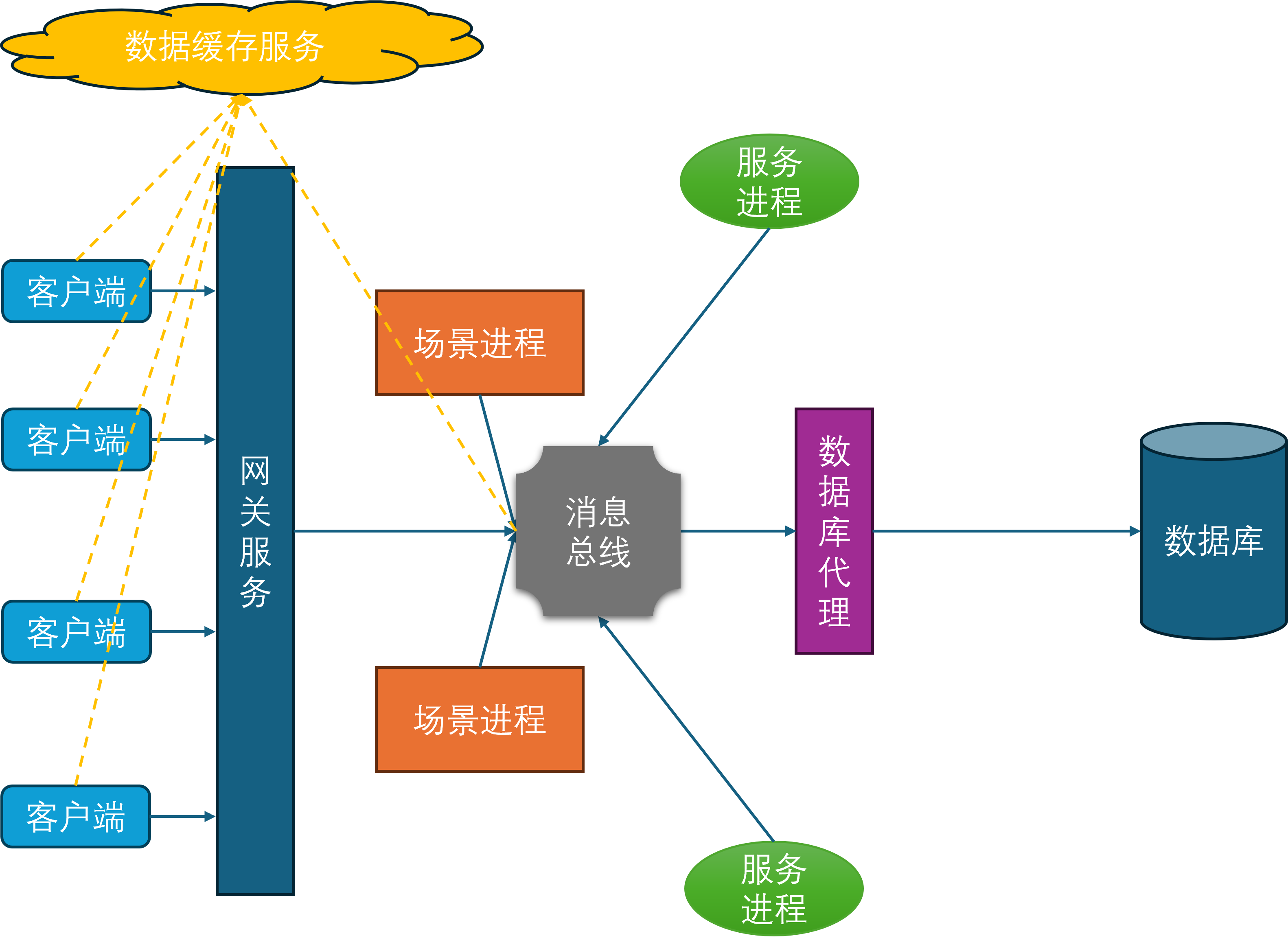

缓存服务

在当前设计下,客户端对于游戏数据的读取都需要经过网关->场景进程这两层中转,如果数据在服务进程上则会多加一层。对于一些变化频率不高但是拉取次数很多的数据,例如玩家的头像基本信息、定期结算的排行榜等,这些数据可以考虑放到游戏服务器之外去存储。这样客户端对这些数据的查询就可以完全不走前述的网关->场景进程这两层中转,同时有效的降低游戏服务器的CPU负载和流量压力。典型的数据缓存服务就是Redis集群,这个集群通过http的方式来暴露数据的查询与修改接口,这样客户端与服务端都可以很方便的与这个集群执行互操作。同时由于Redis集群的性能与吞吐量都很高,整体的机器收益是正的。而且这个Redis集群一般非常稳定,不需要跟随游戏服务器去更新部署,不会给服务端带来额外的维护负担。综合上述几个优点,基于Redis的数据缓存服务在游戏服务端非常流行。

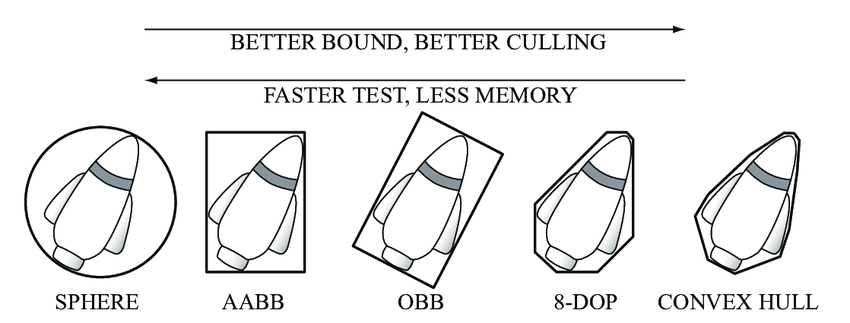

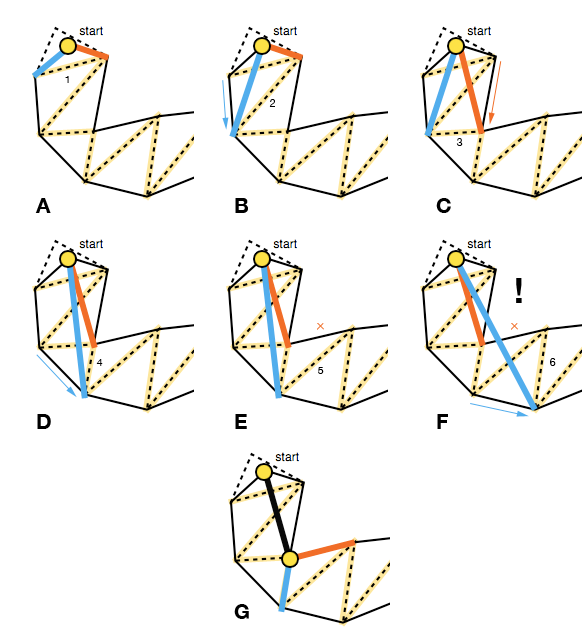

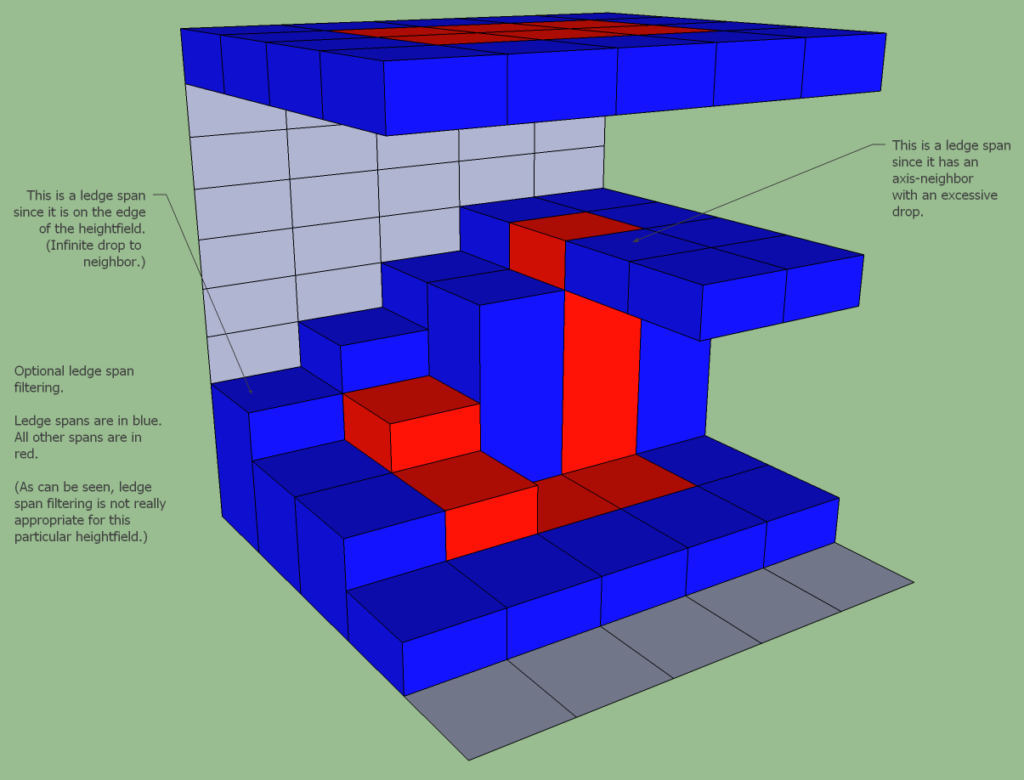

分布式场景

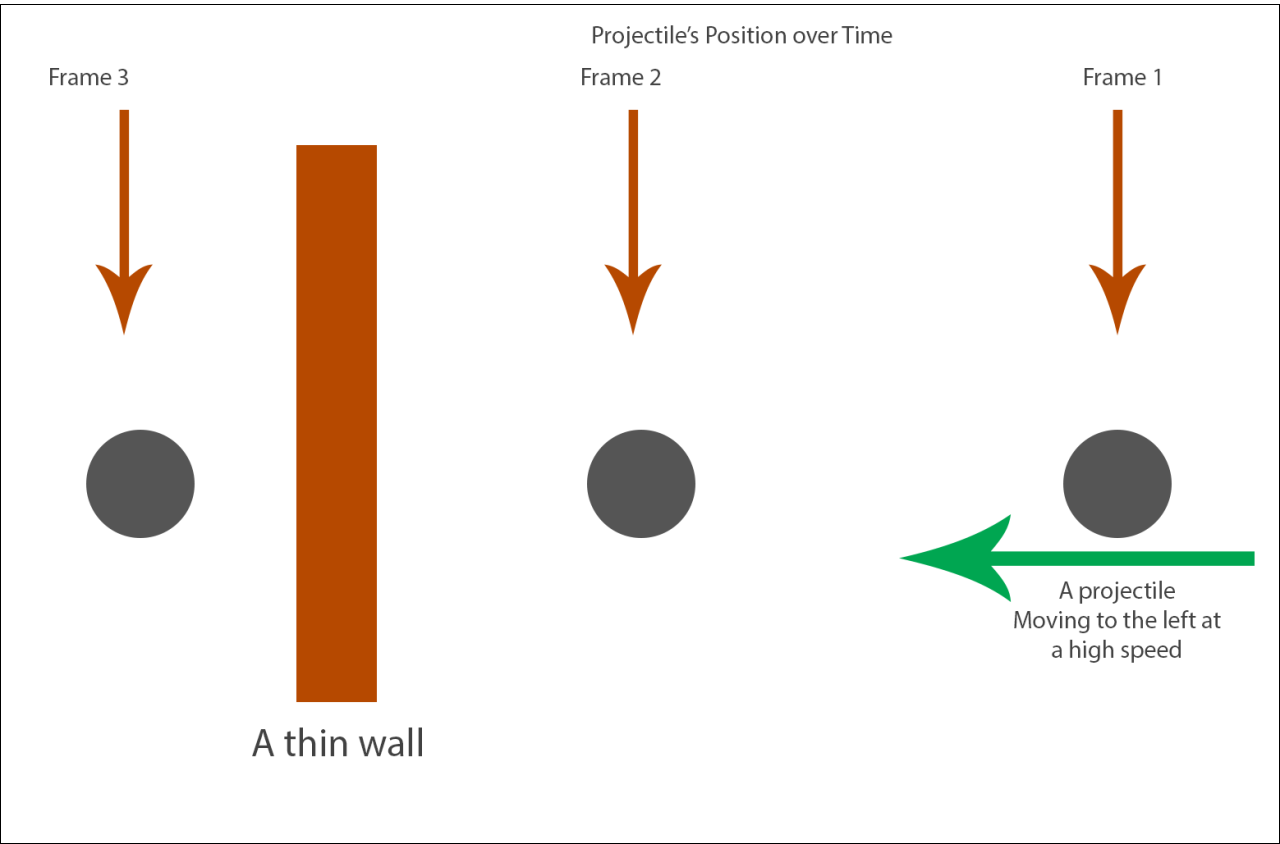

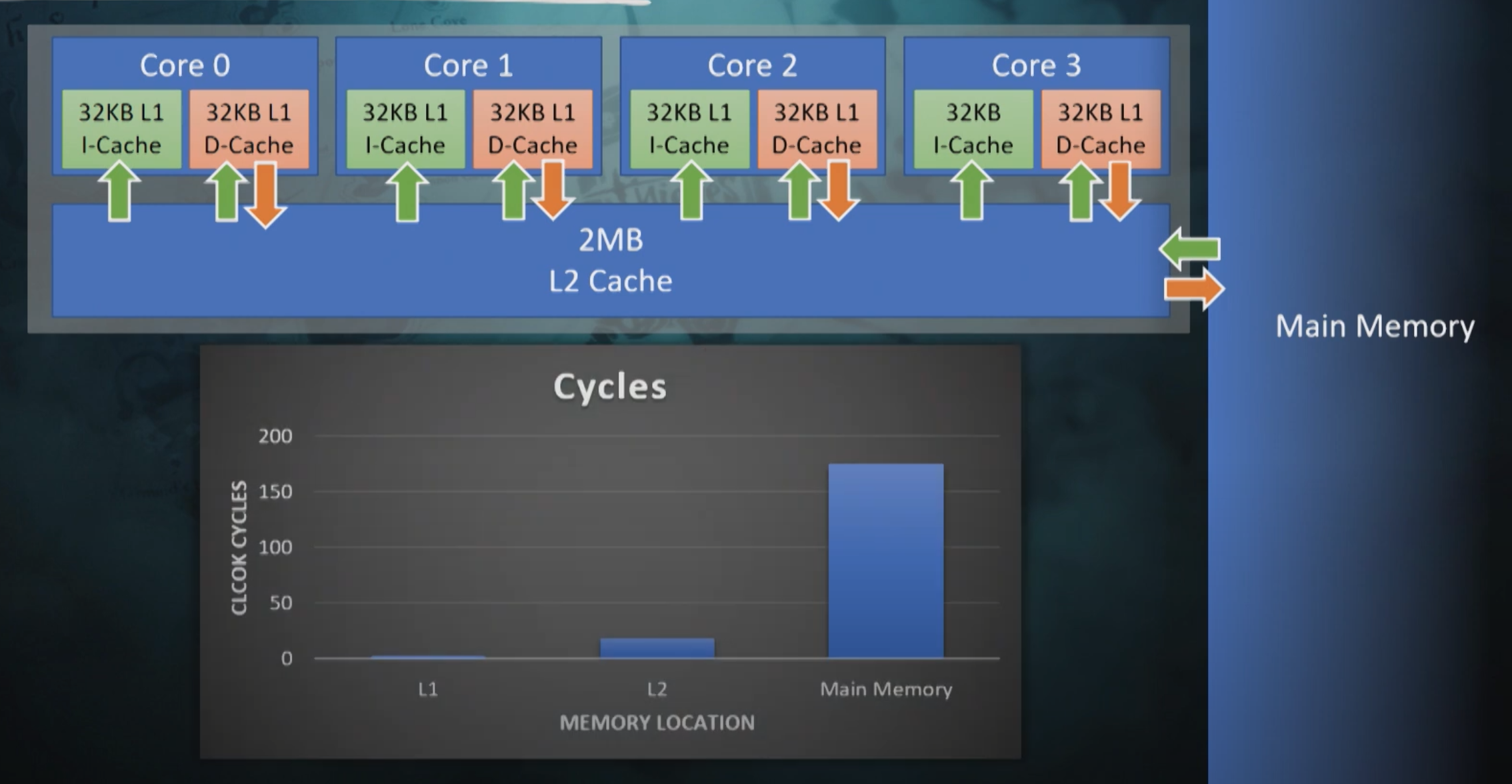

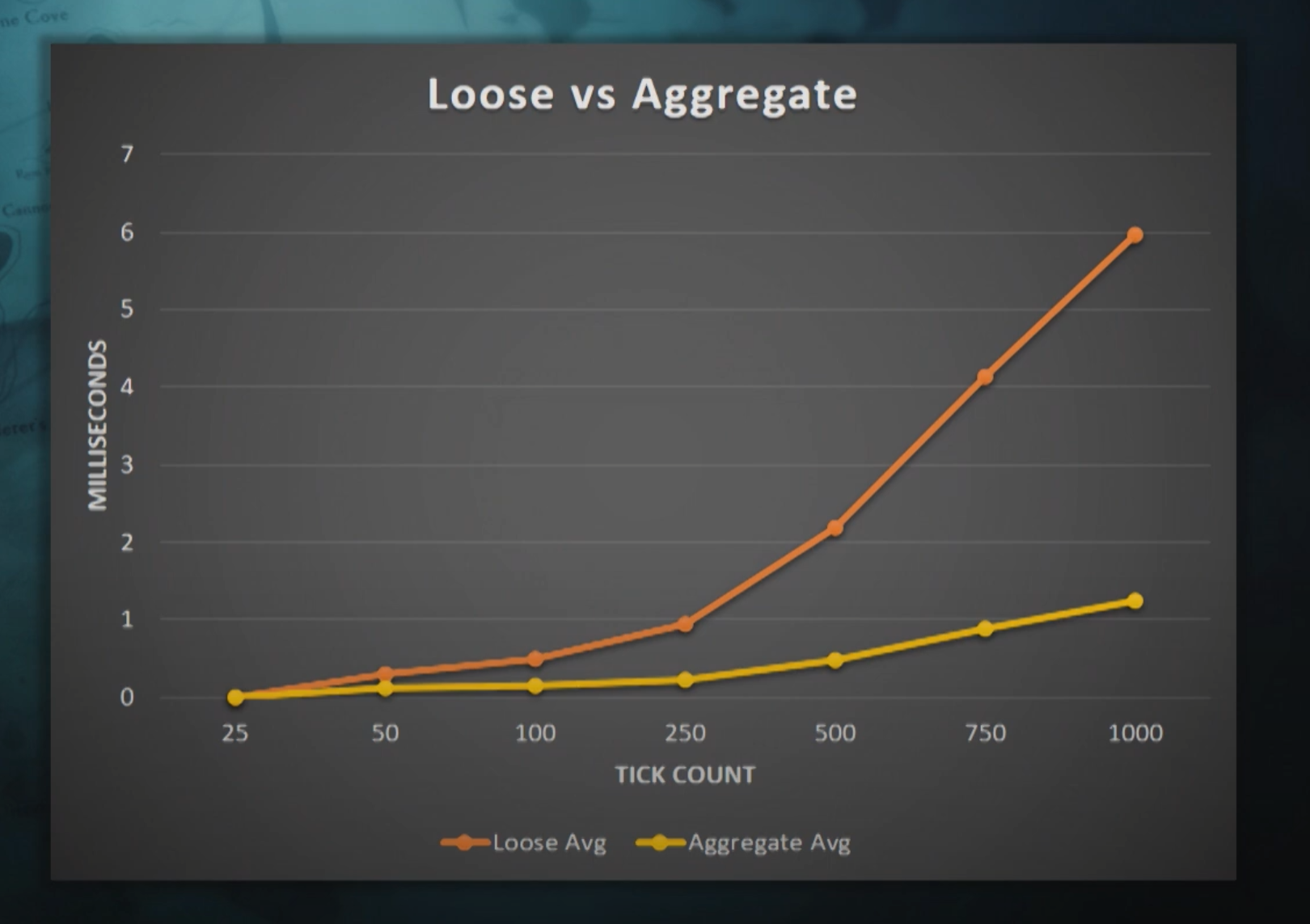

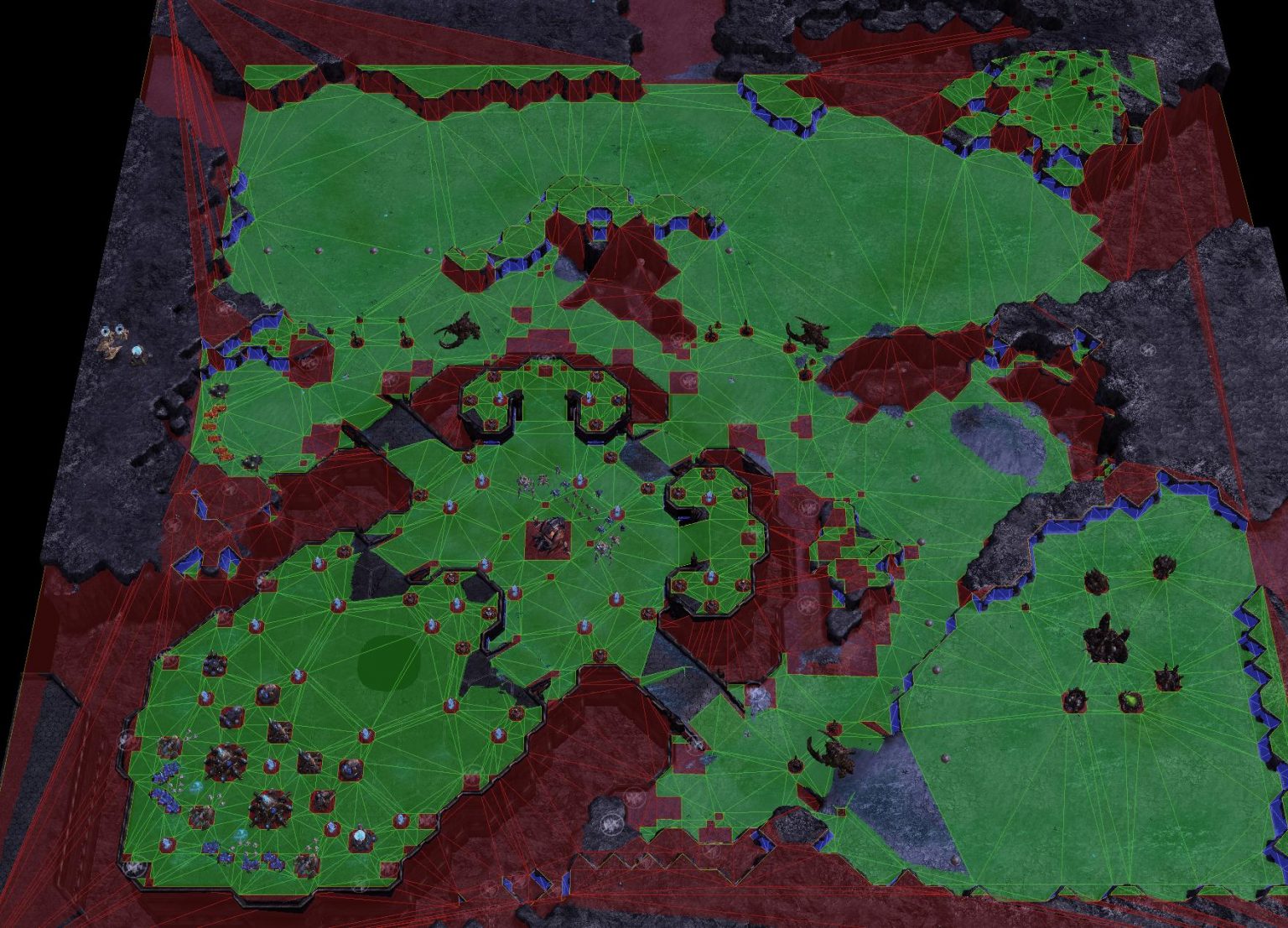

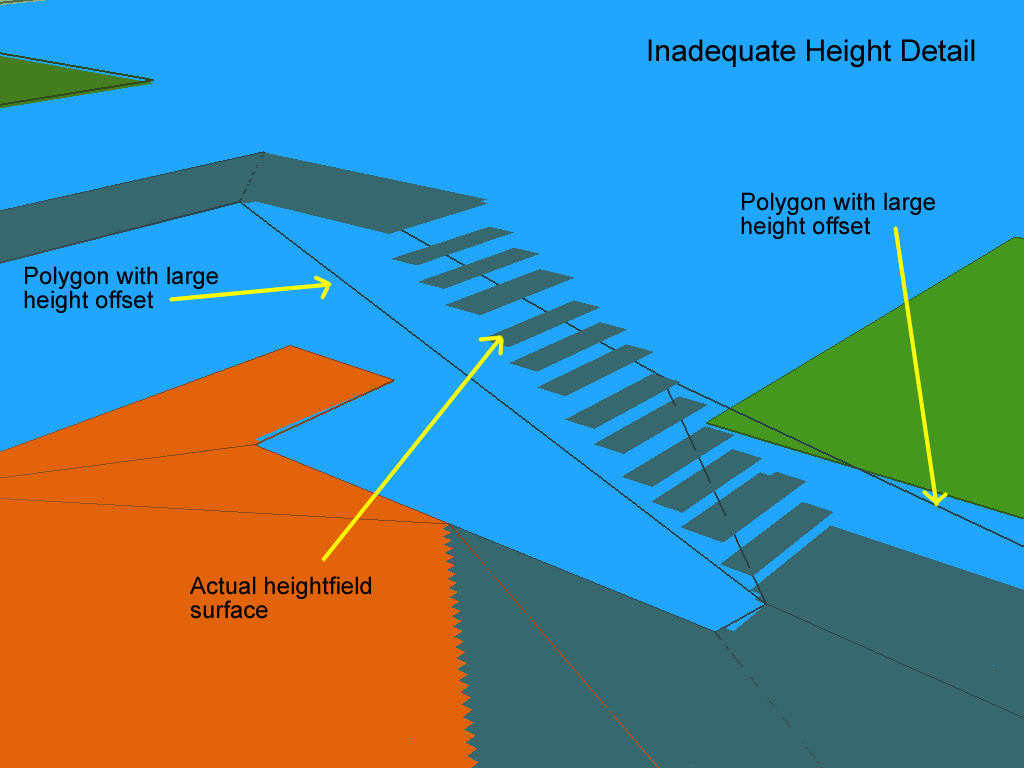

在上述架构中,一个场景只会存留在一个场景进程中,所以这个场景内的玩家数量就会受到单个进程的计算能力限制。为了提升单场景的玩家数量上限,场景进程开始引入多线程,将一些计算密集型的阻塞任务放到多线程中执行,例如寻路线程、物理线程、网络线程、AOI线程等。这样做的好处是可以充分利用多核处理器的性能,降低主线程的一些负载,从而可以提升主线程单场景的玩家数量。但是由于游戏内的绝大部分的业务逻辑都是线性的,不方便拆分为异步任务投递到其他线程中,所以主线程单场景的玩家数量提升终归有限。

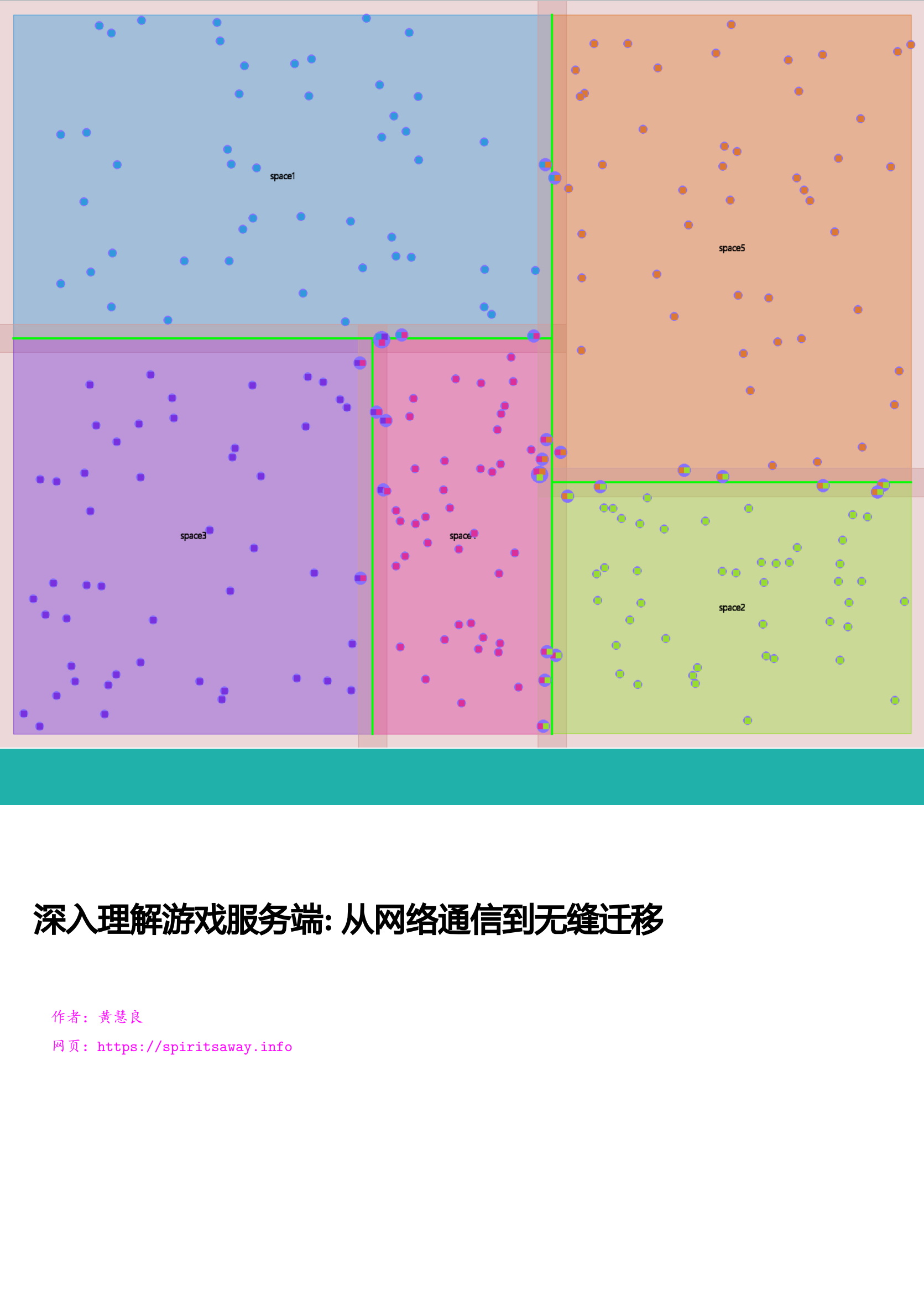

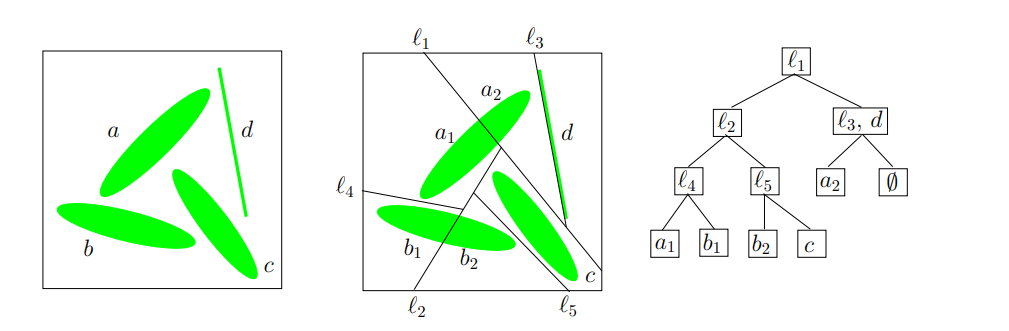

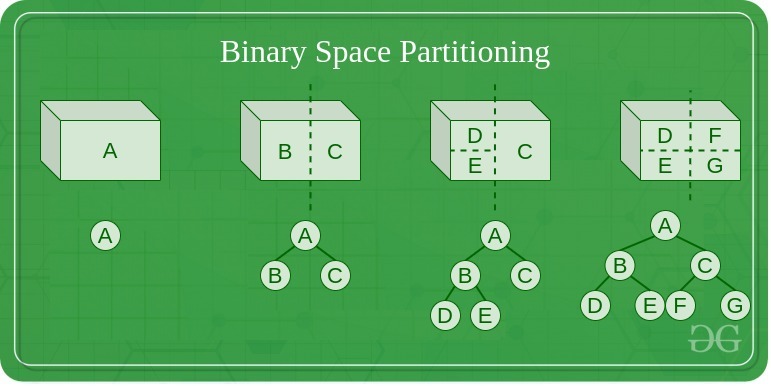

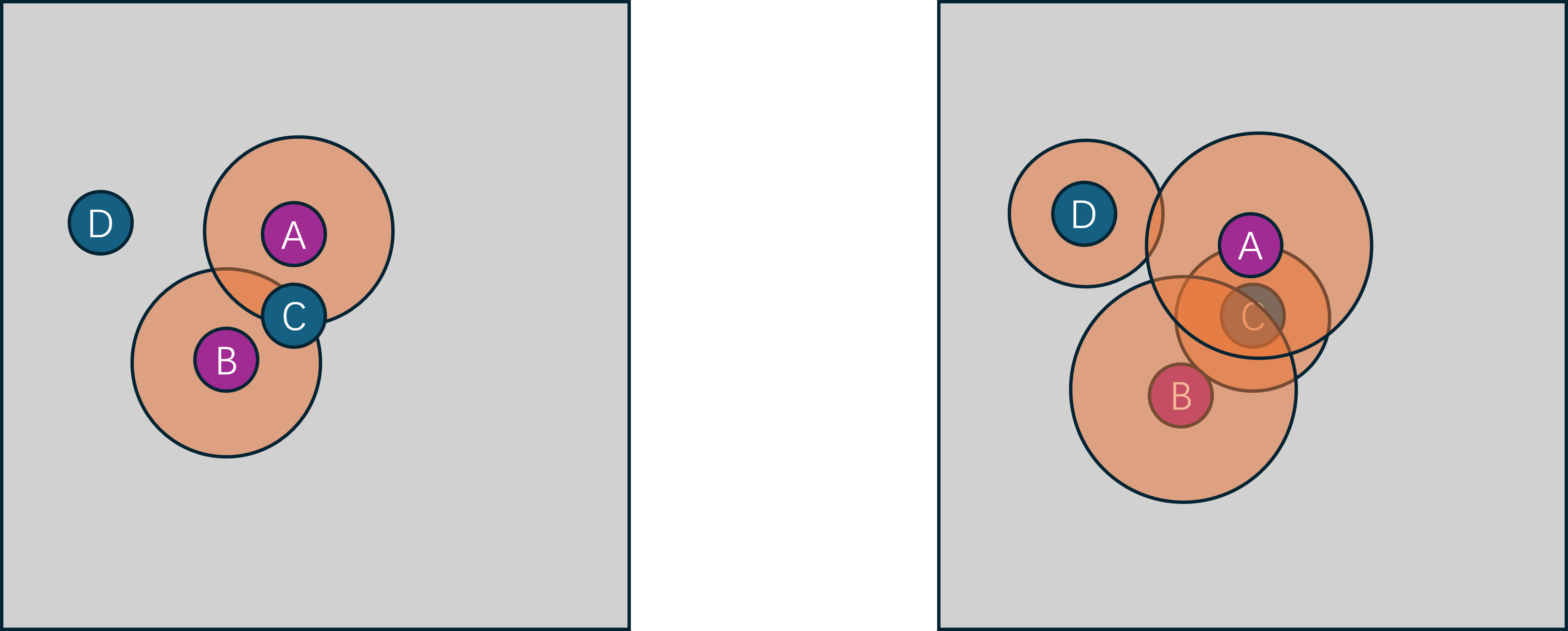

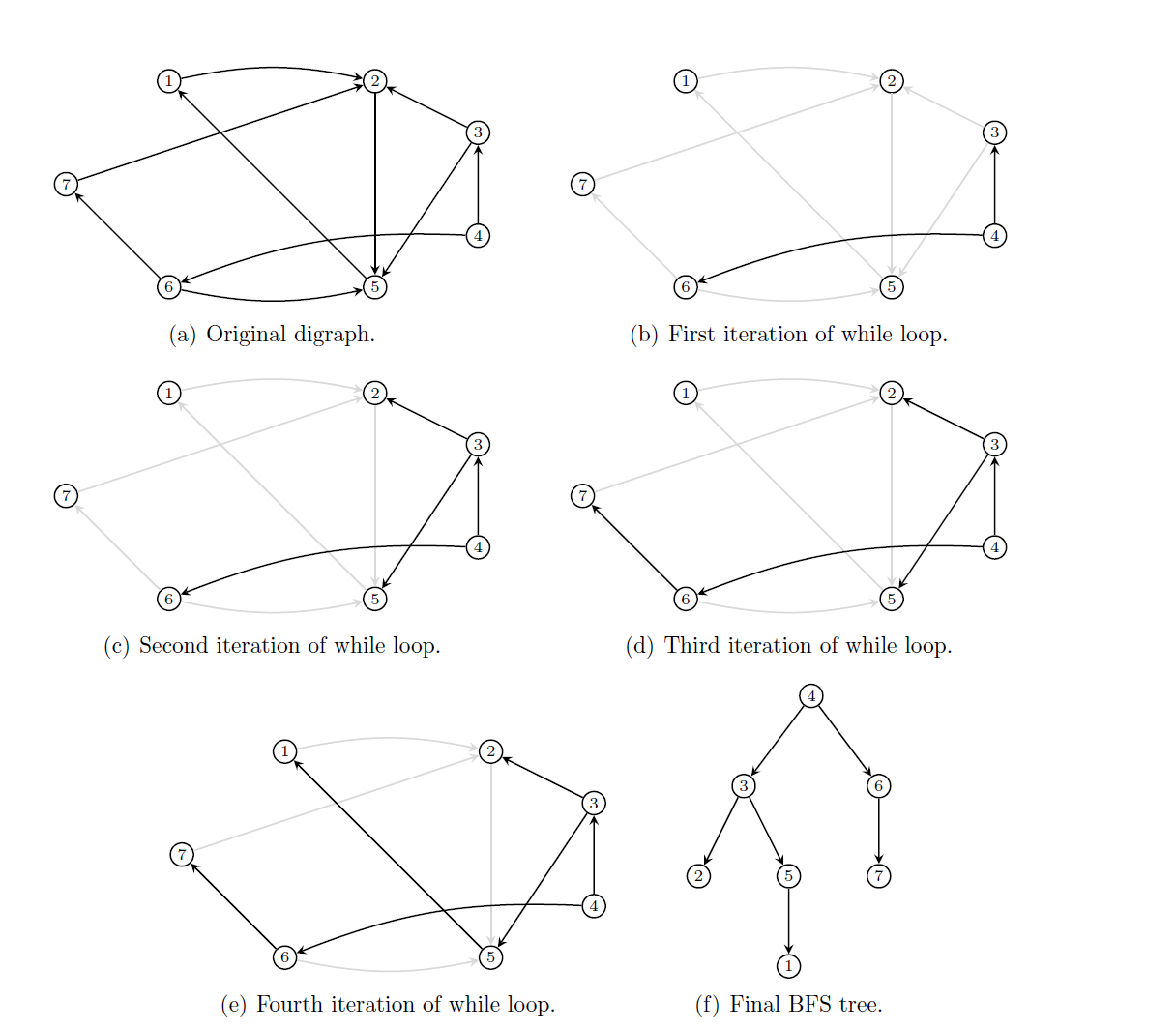

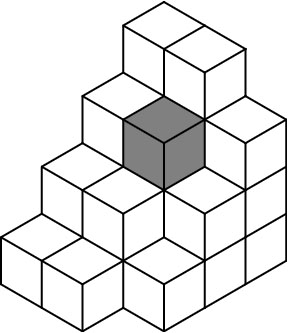

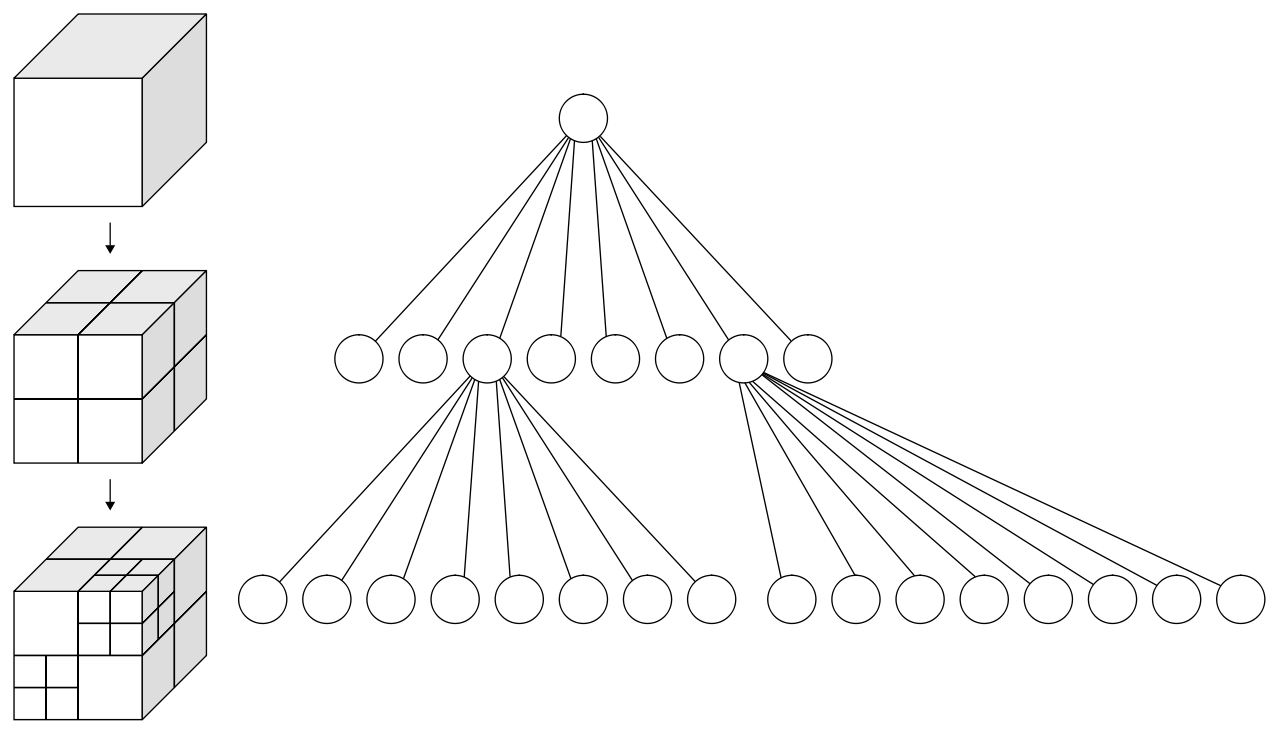

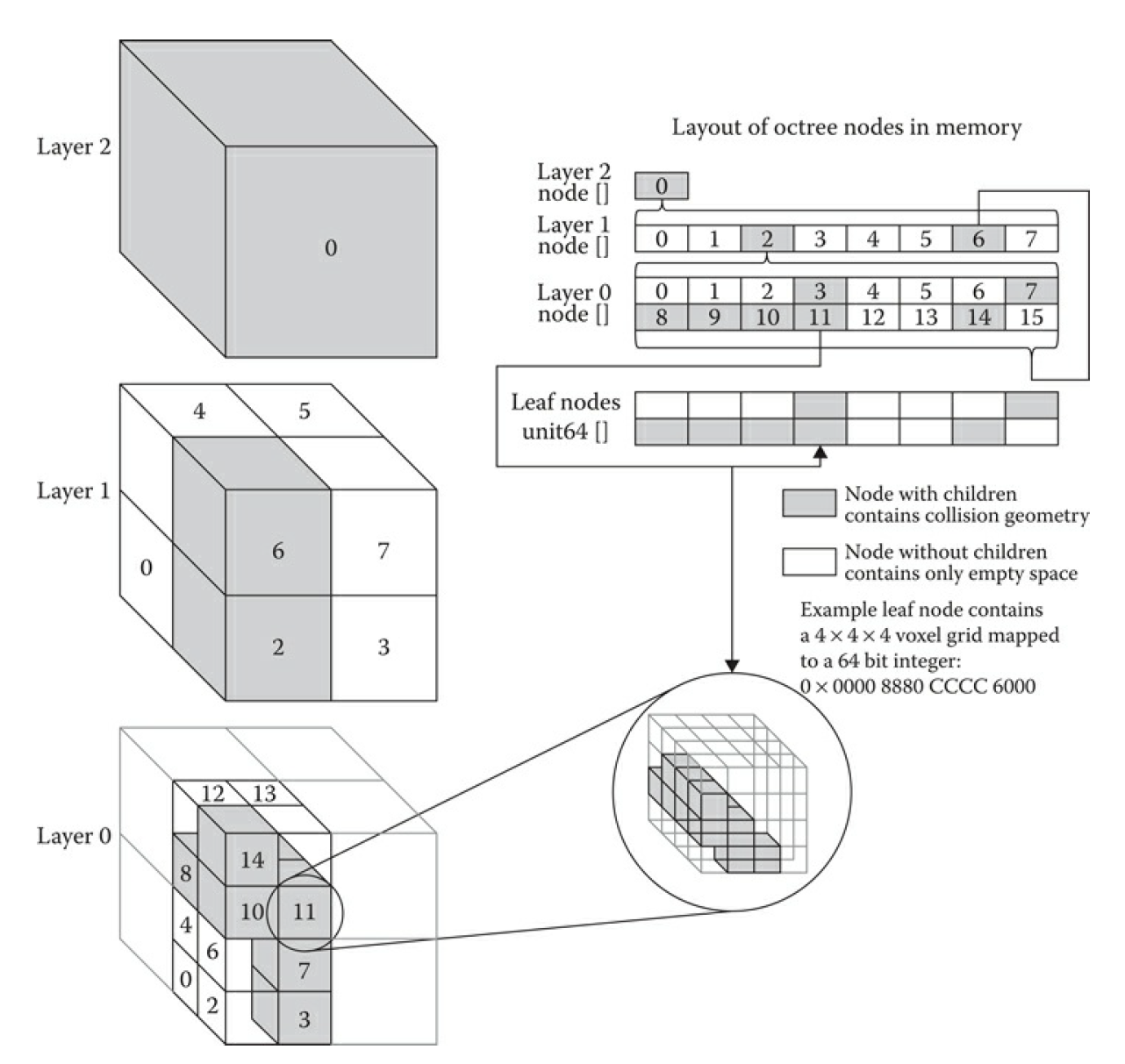

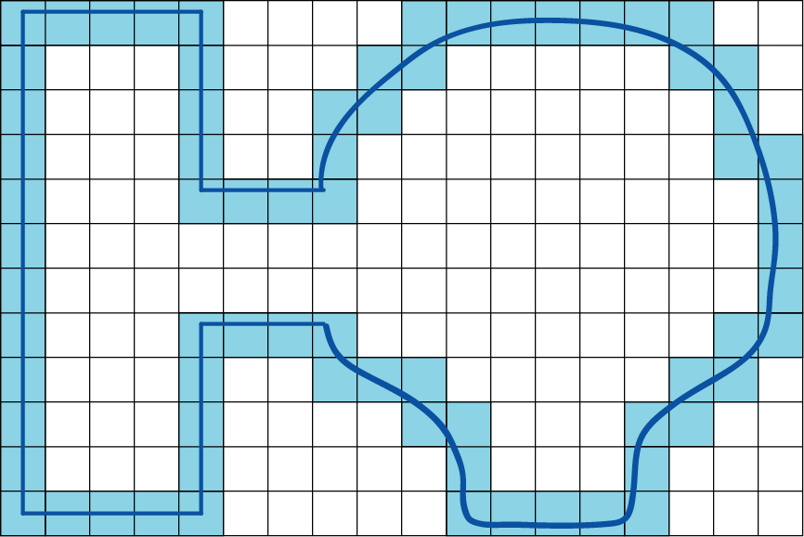

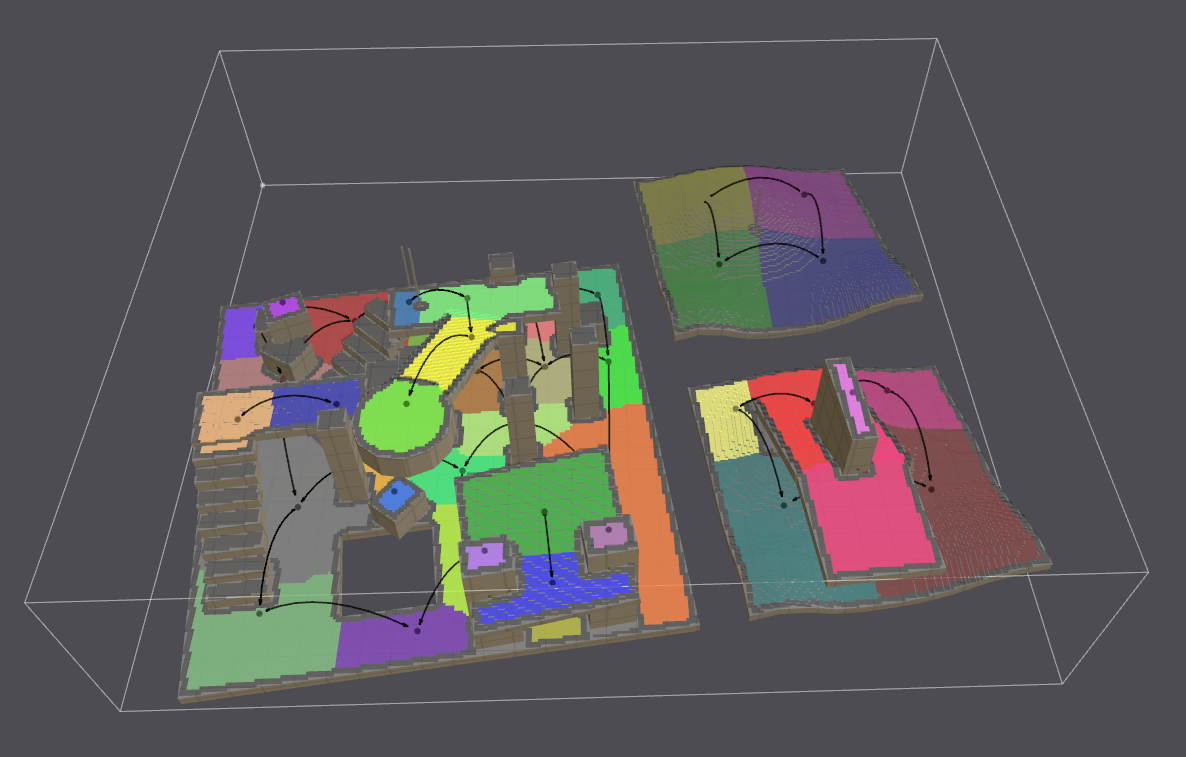

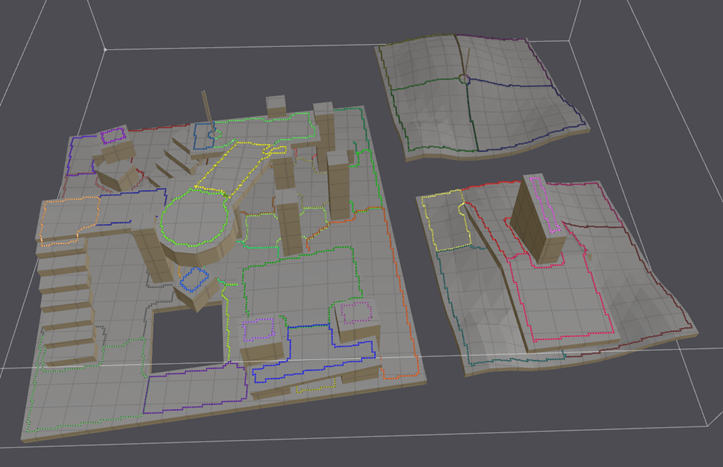

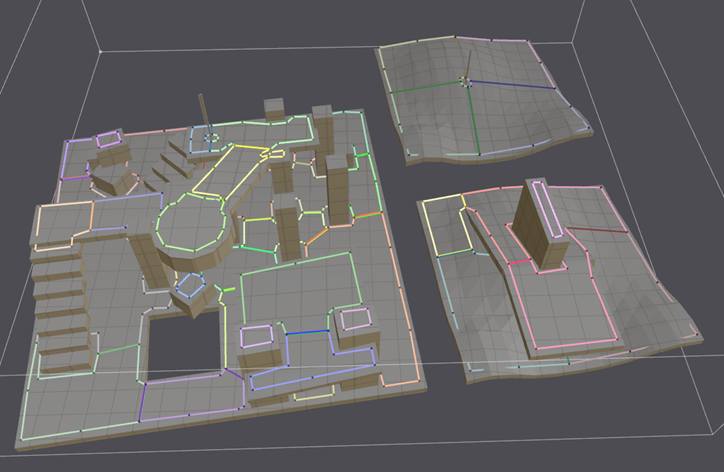

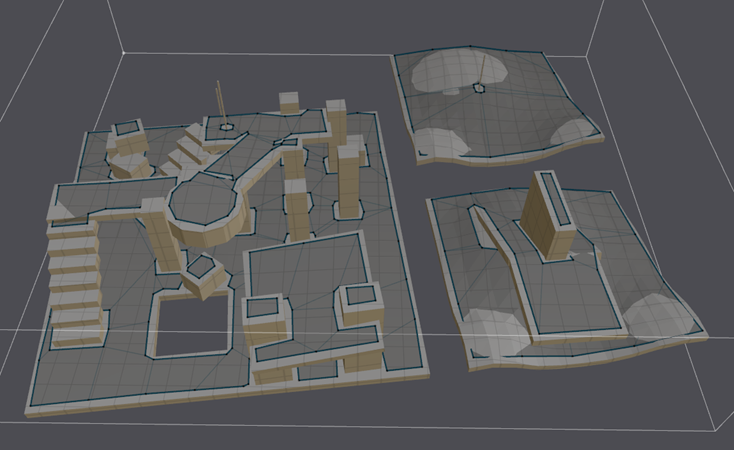

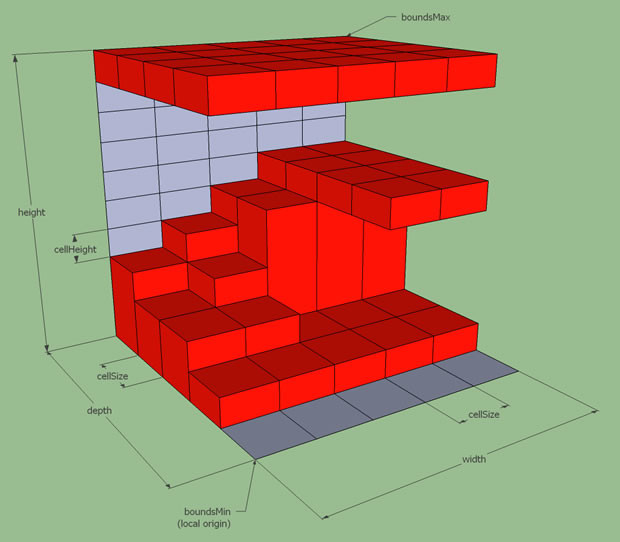

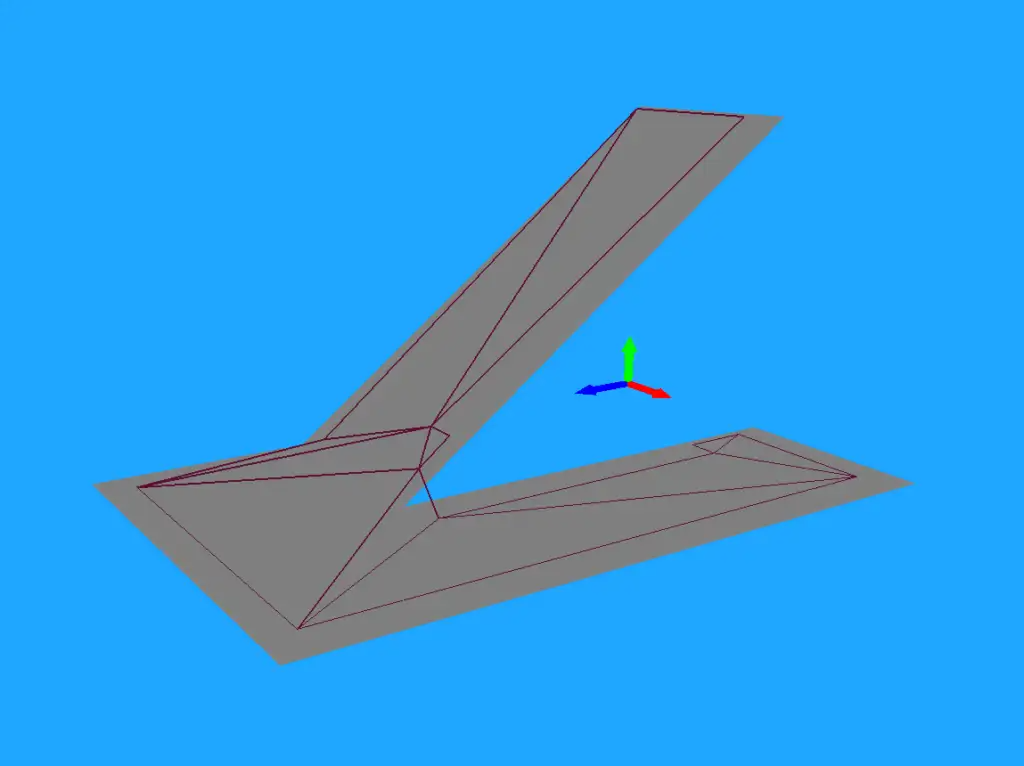

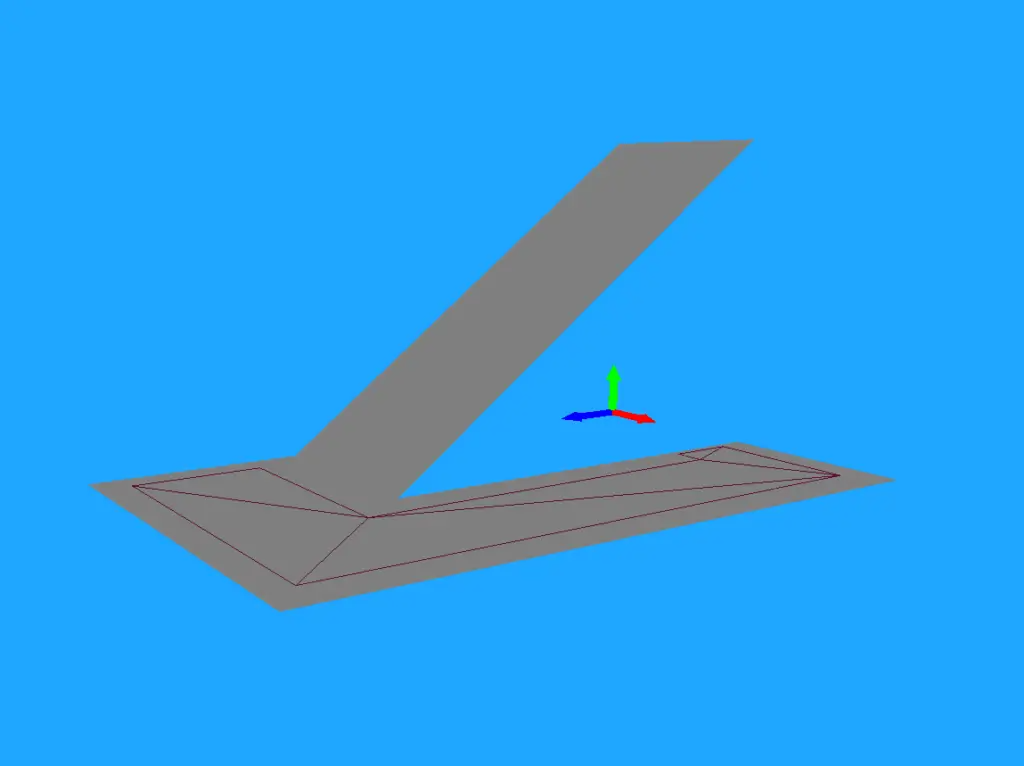

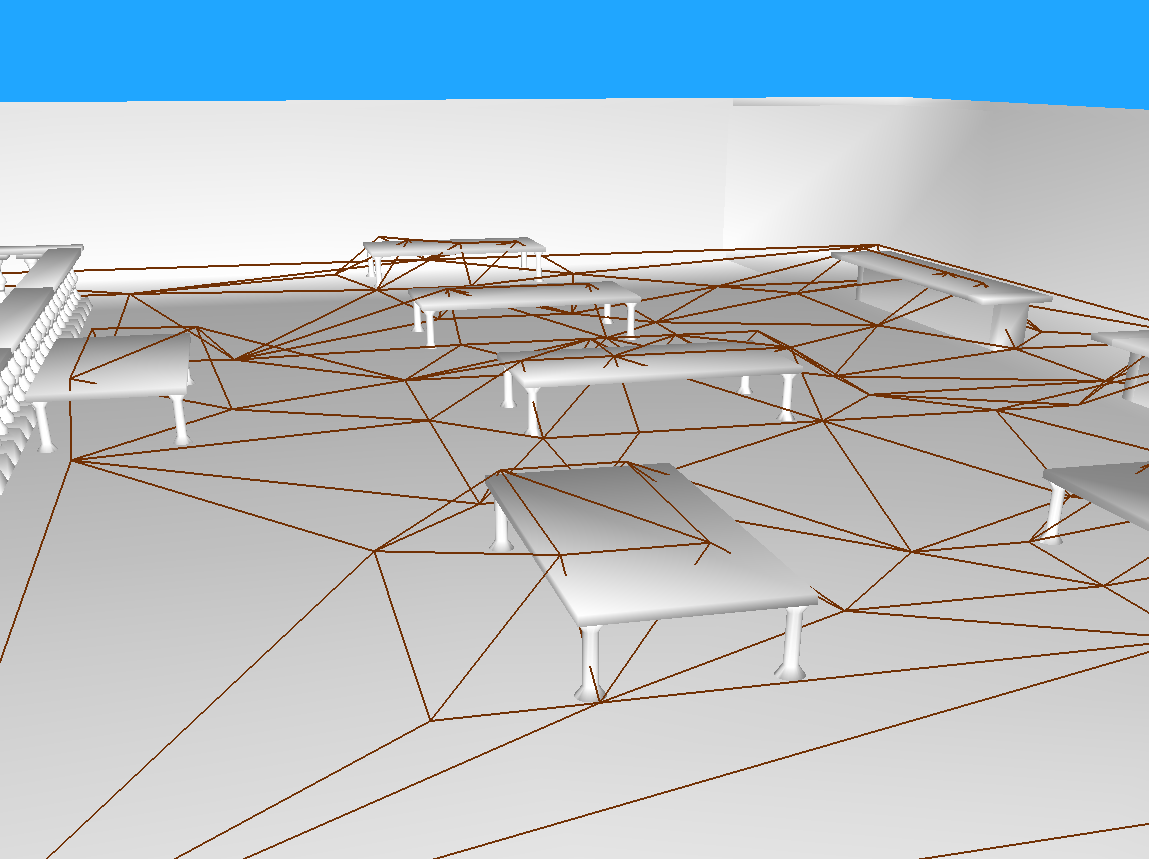

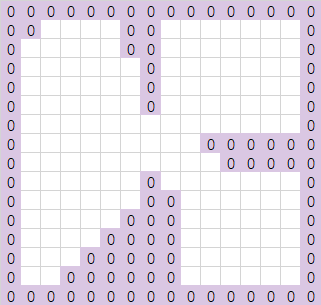

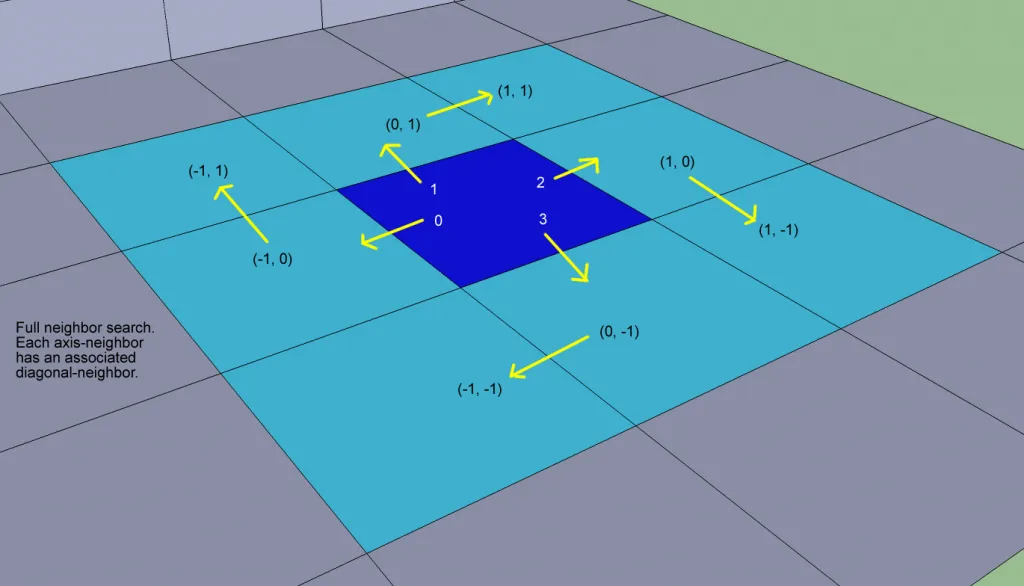

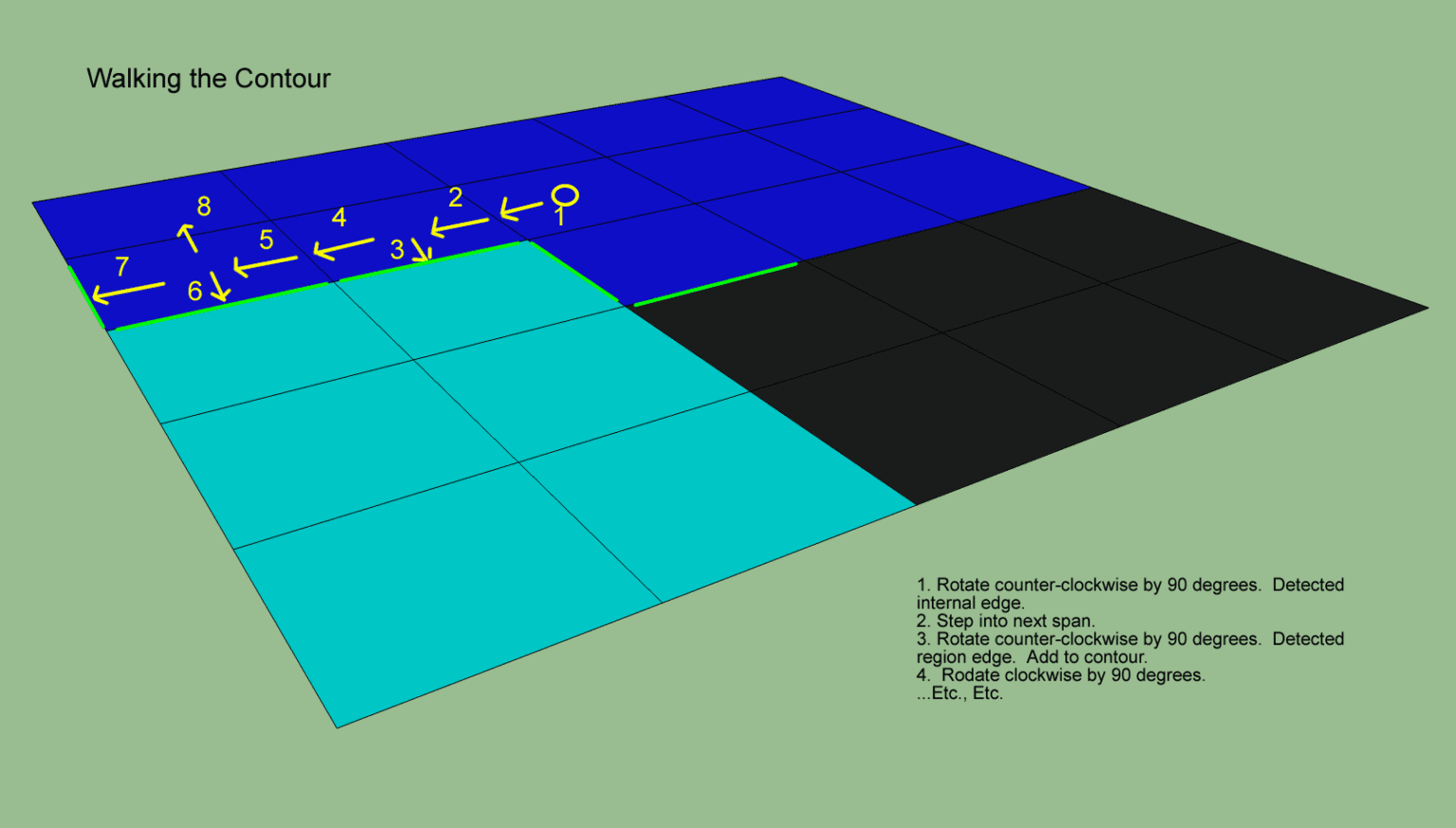

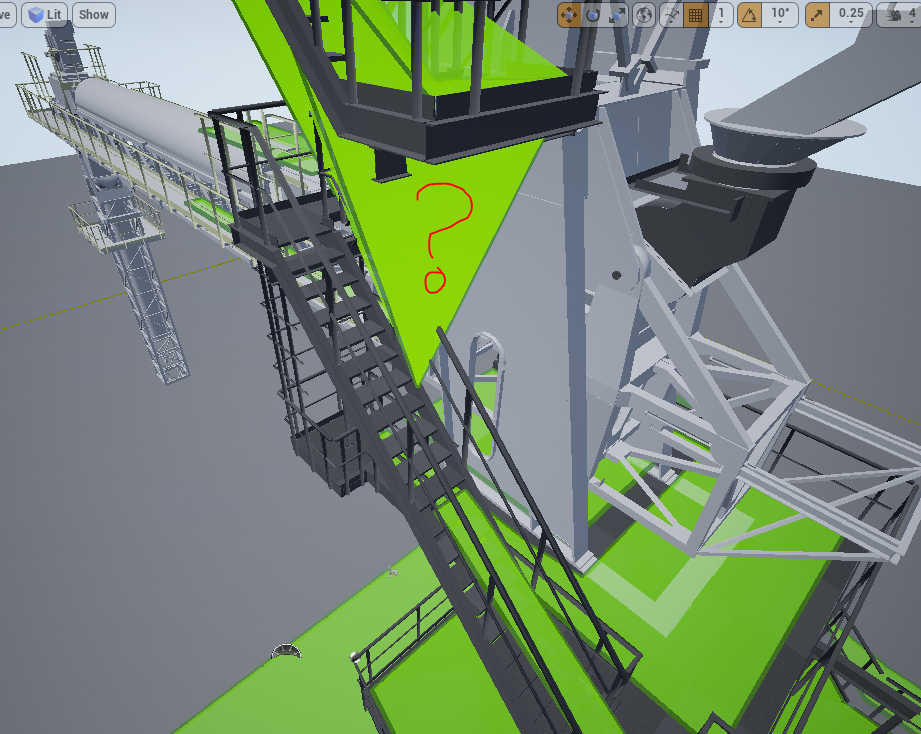

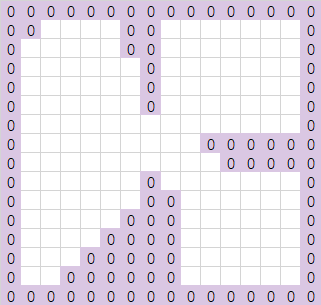

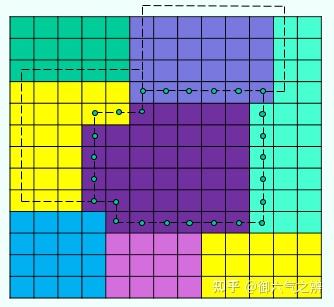

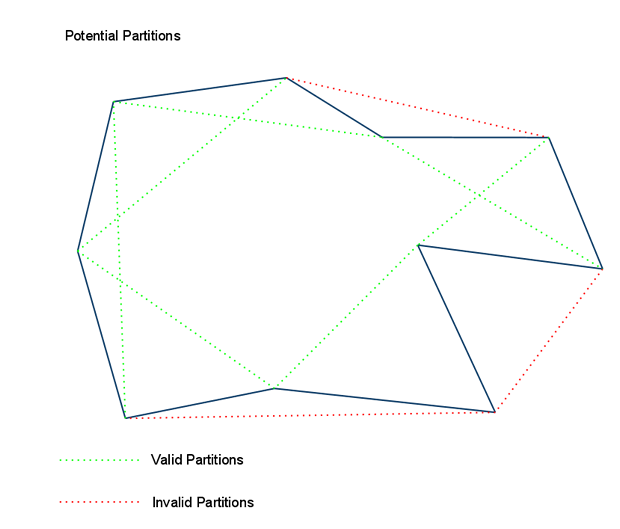

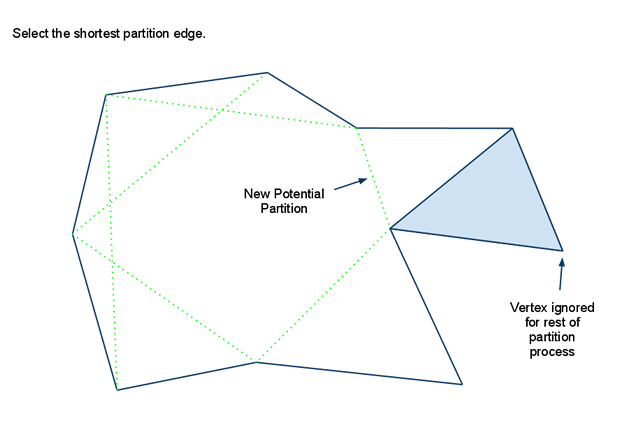

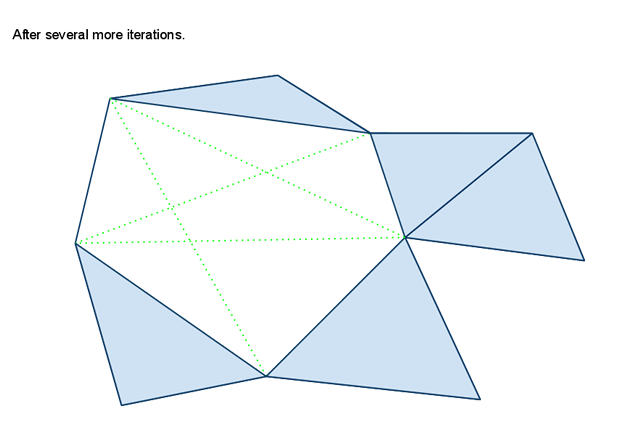

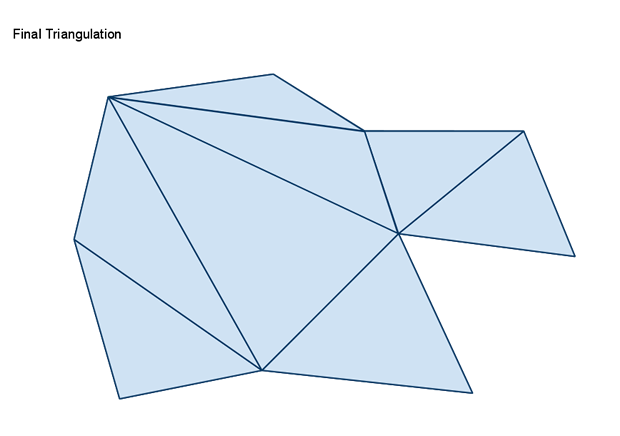

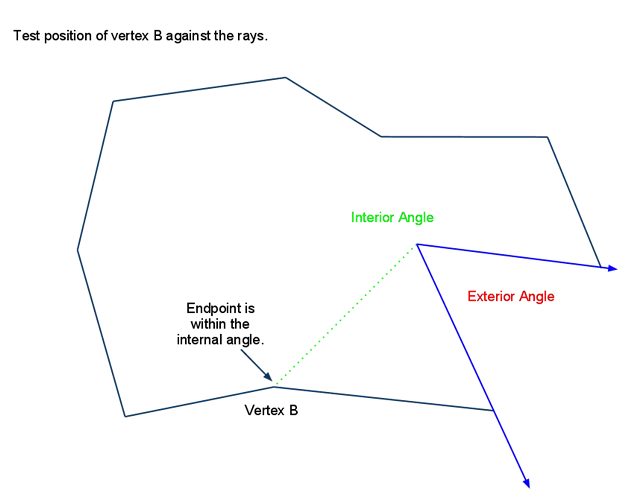

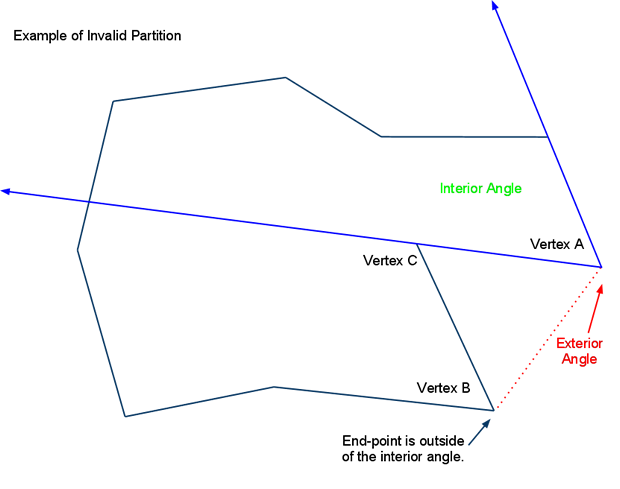

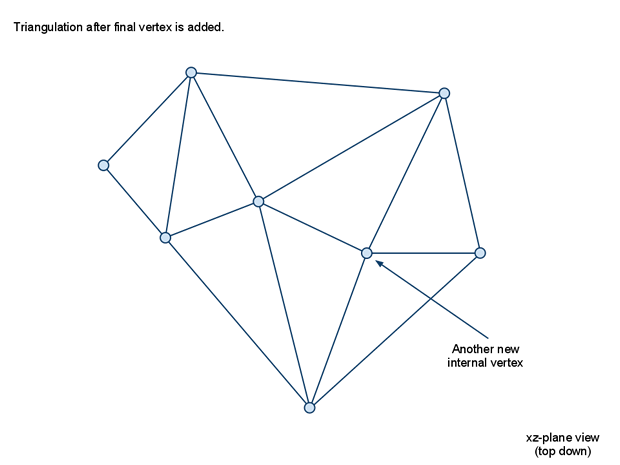

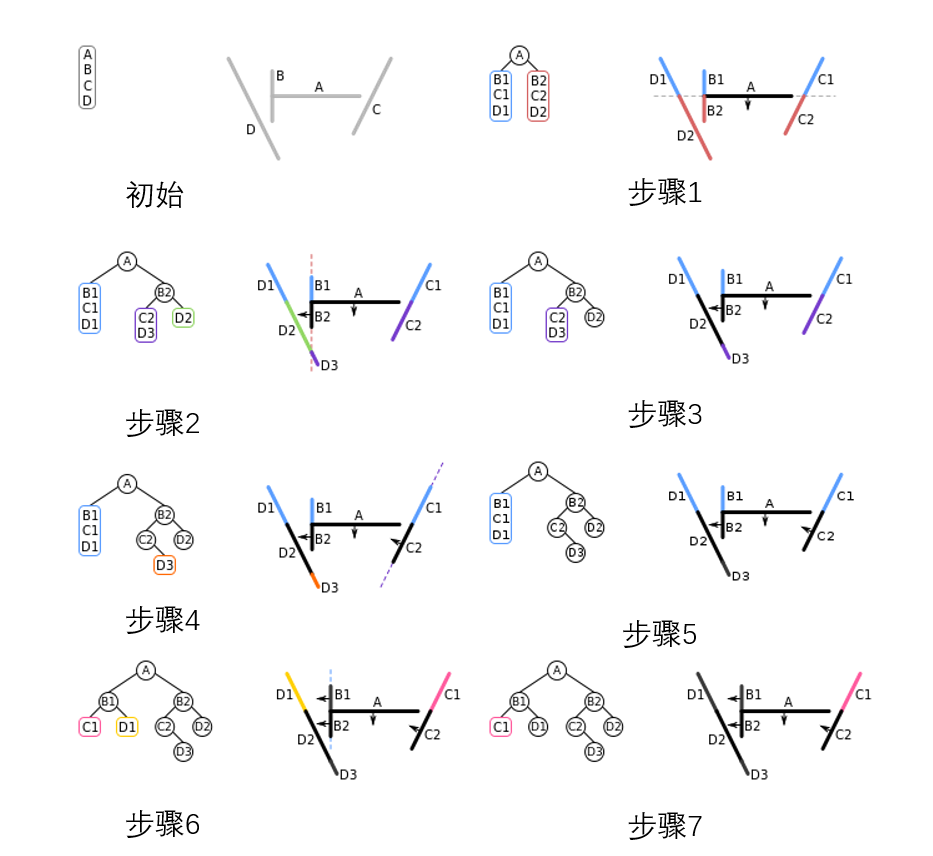

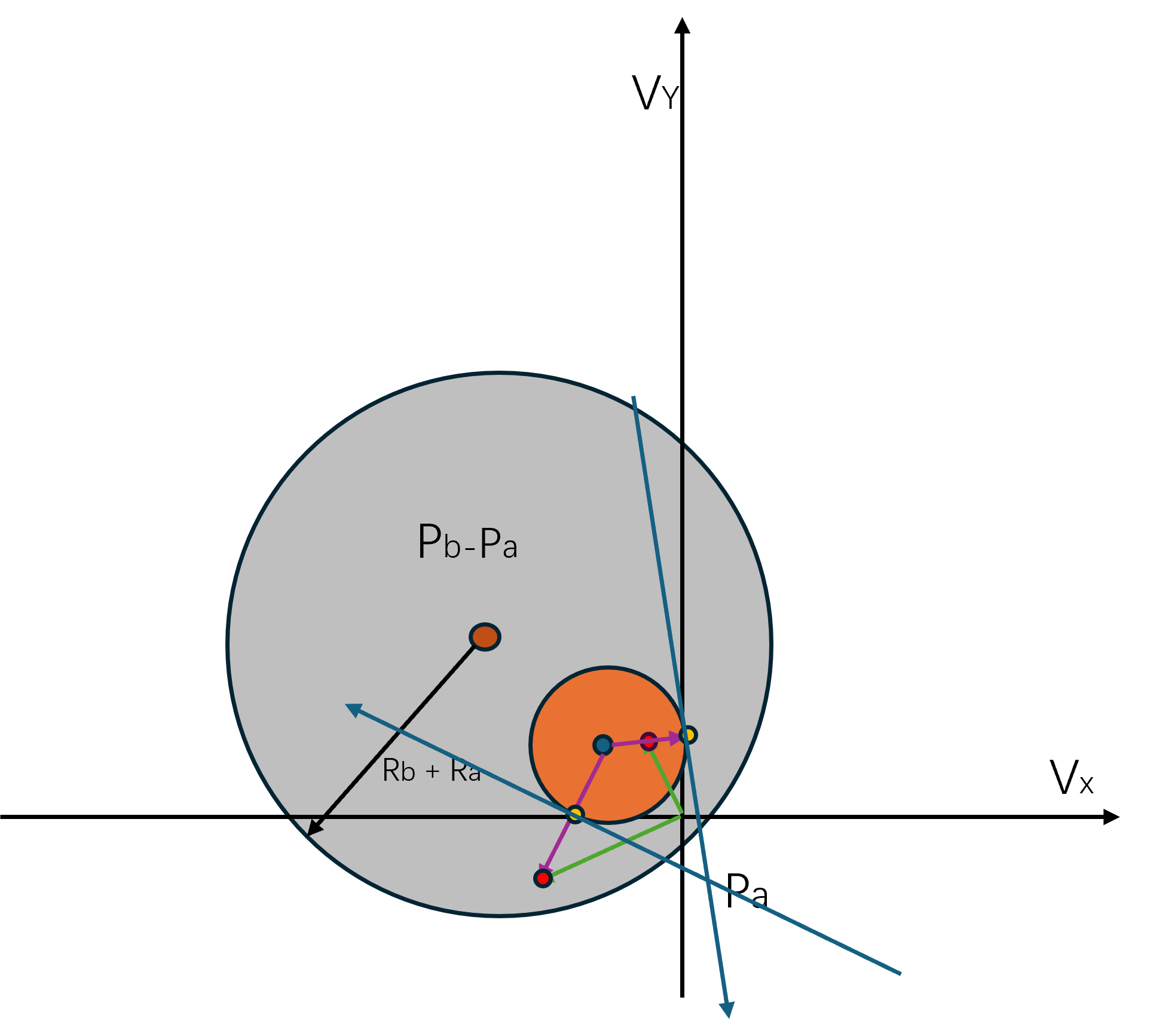

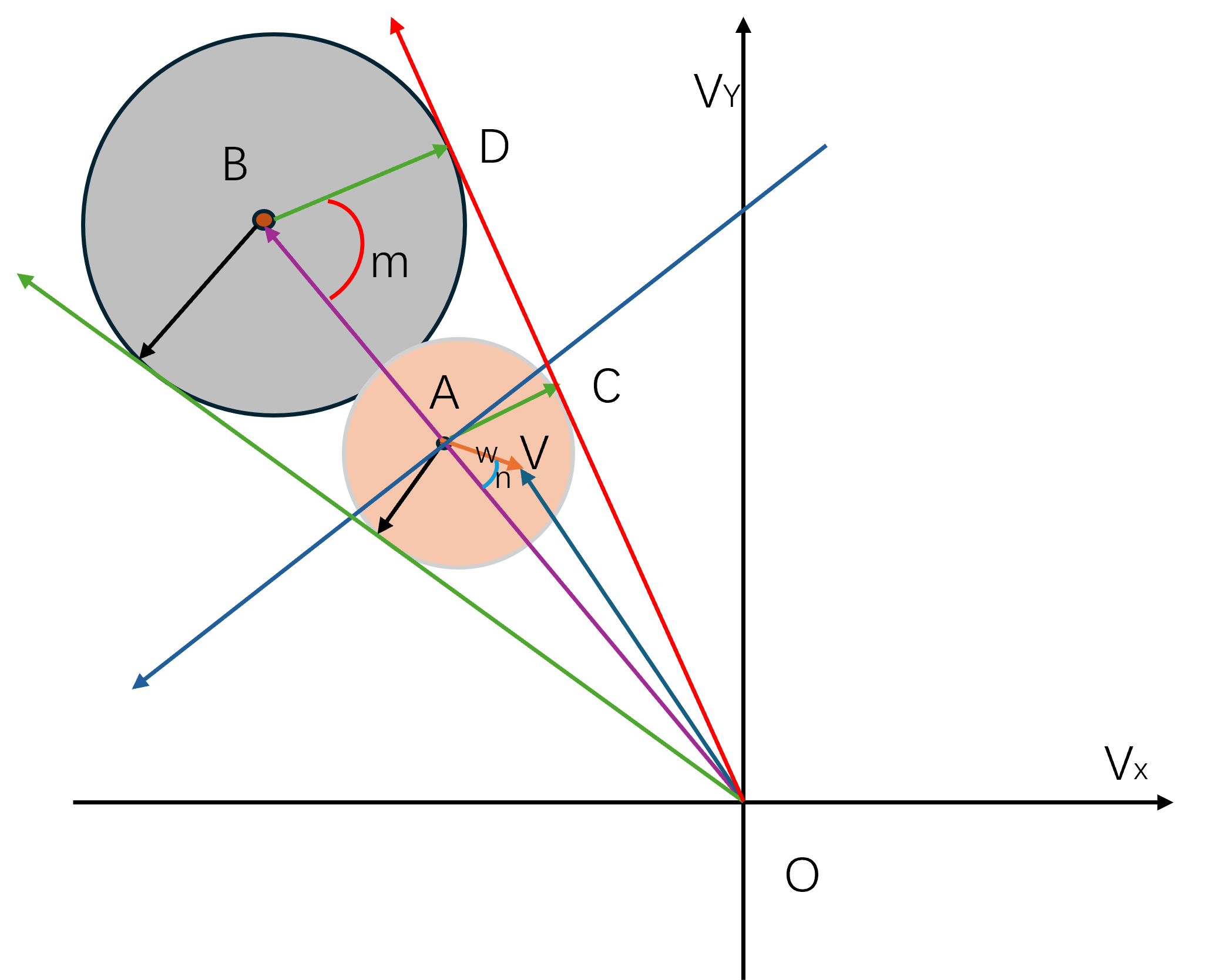

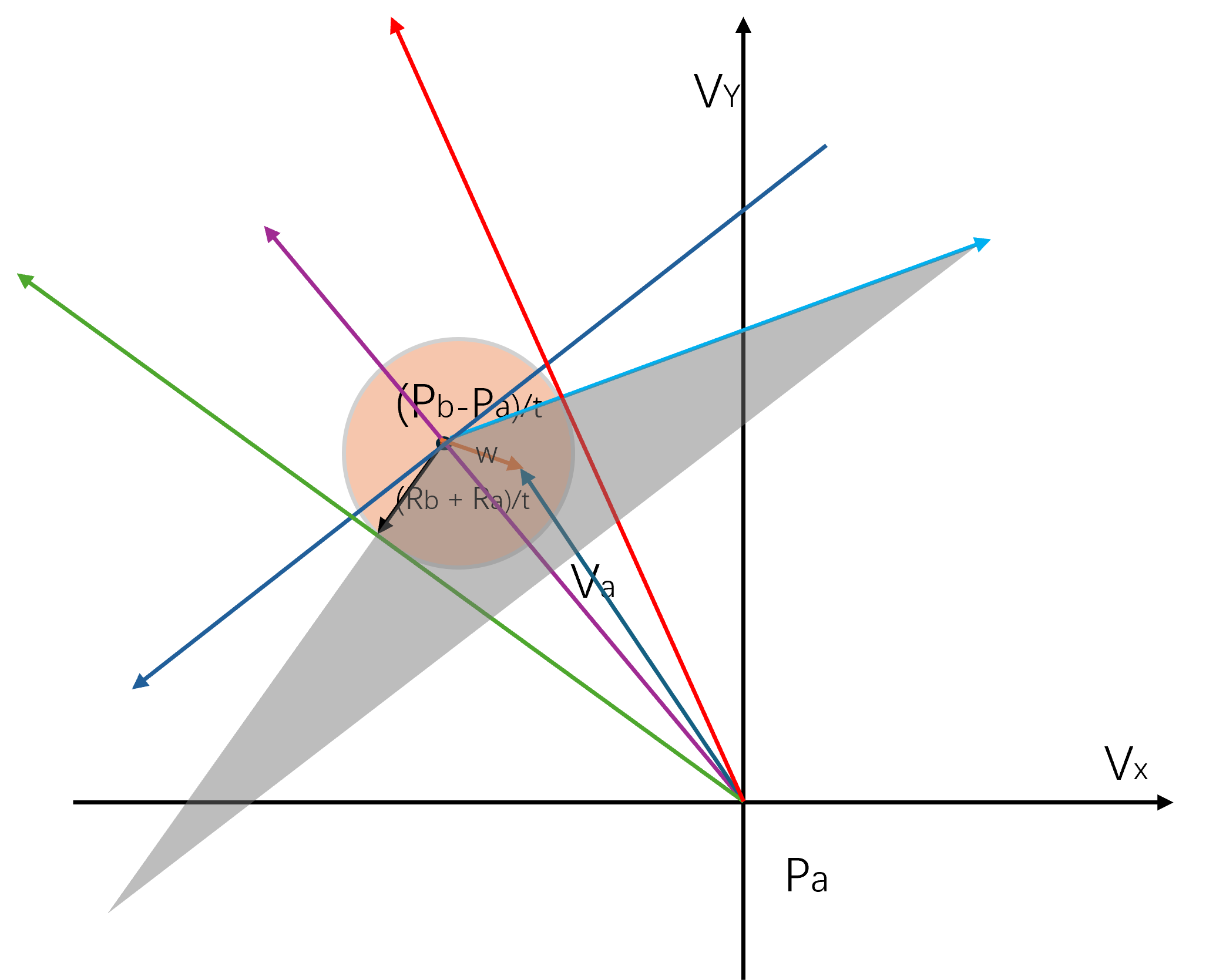

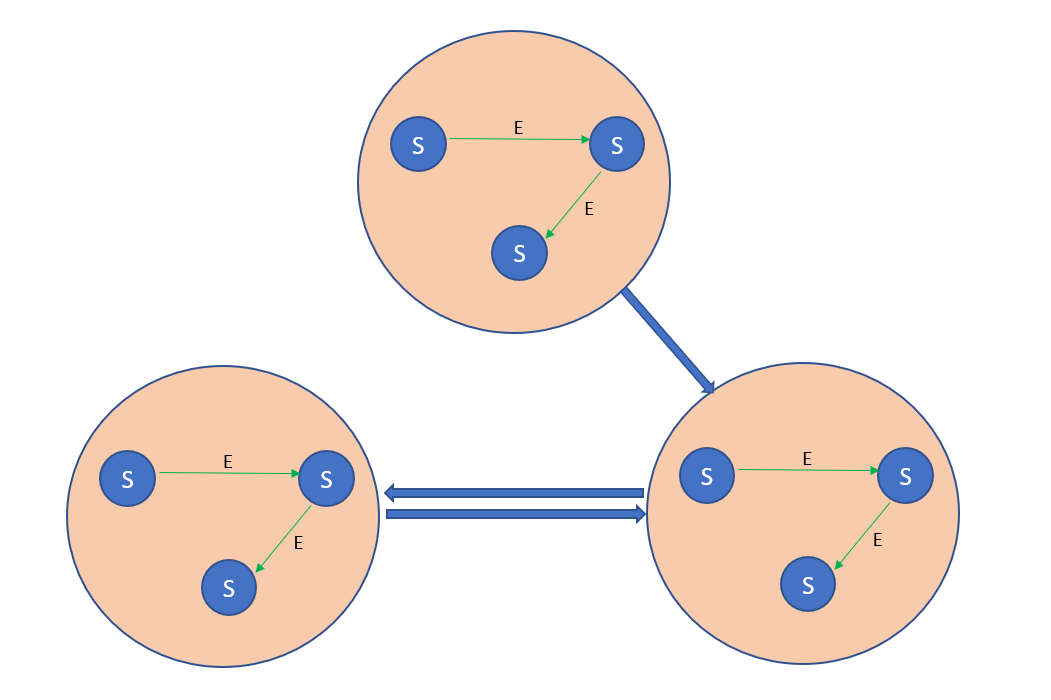

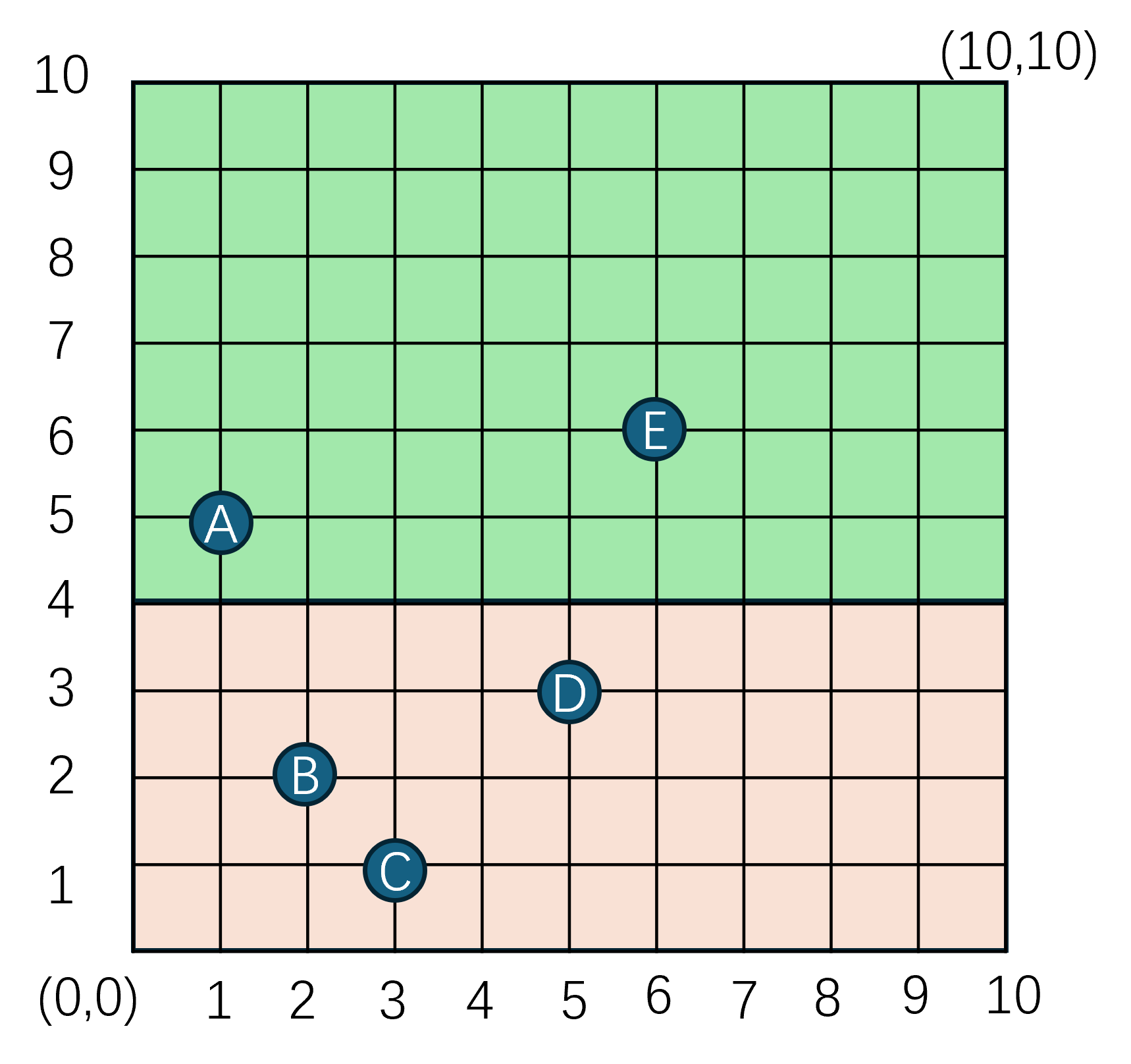

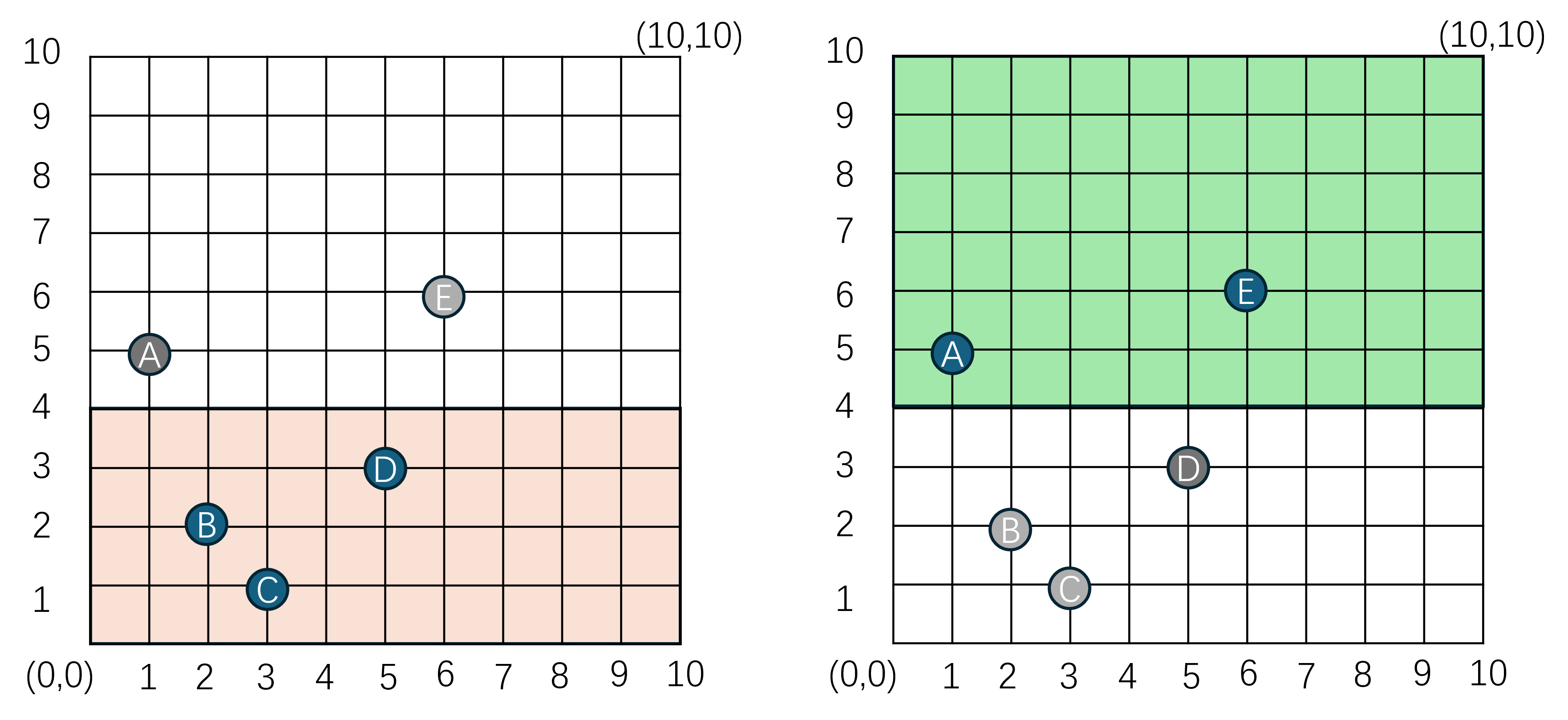

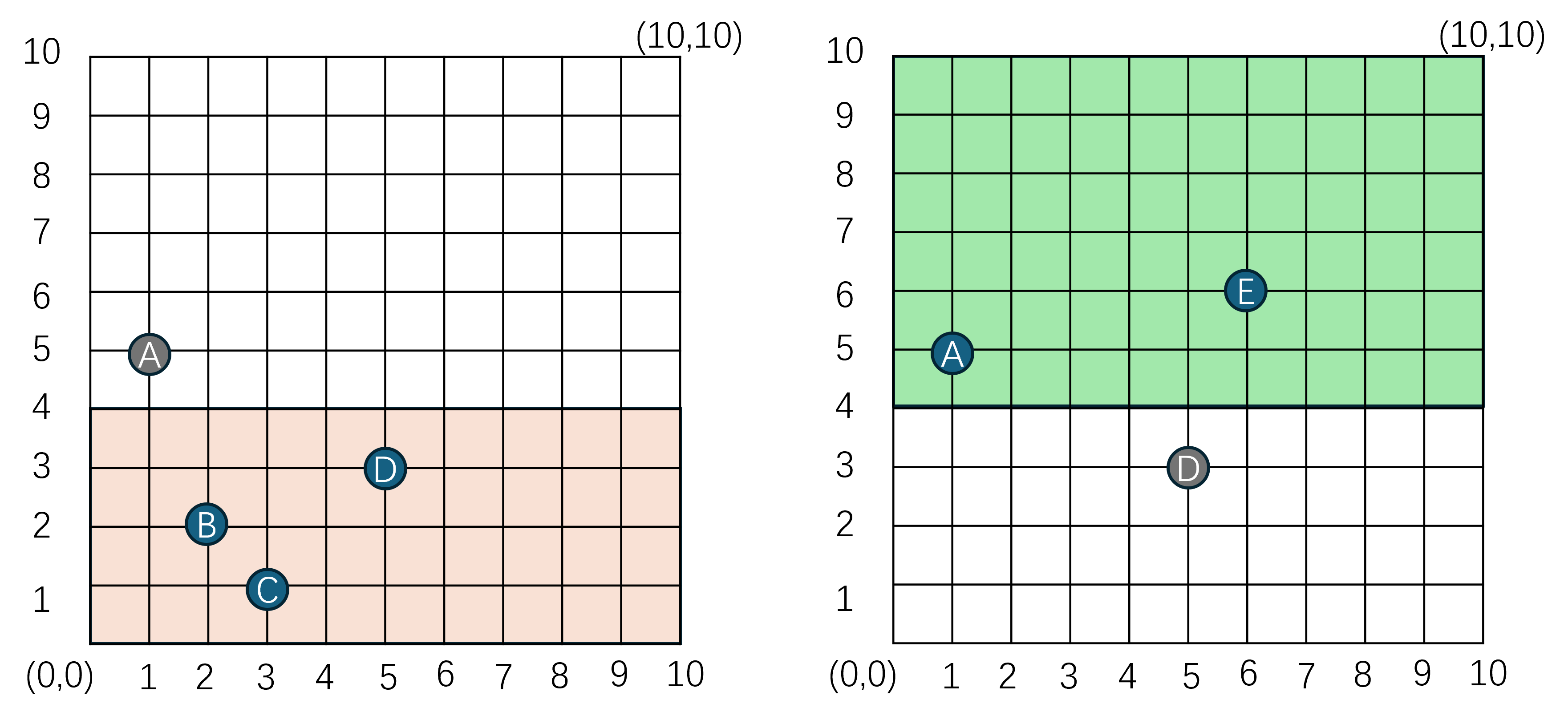

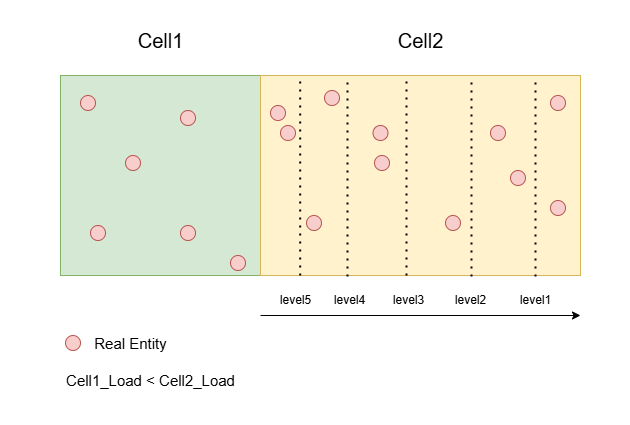

为了绕过这种由于主线程的性能瓶颈导致的单场景玩家数量上限问题,开始引入分布式场景。分布式场景的设计思想是将一个场景拆分成多个子场景,每个子场景都运行在一个独立的场景进程中。这样做的好处是可以将场景内的玩家数量分散到多个进程中,从而可以极大提升单逻辑场景的玩家数量上限。

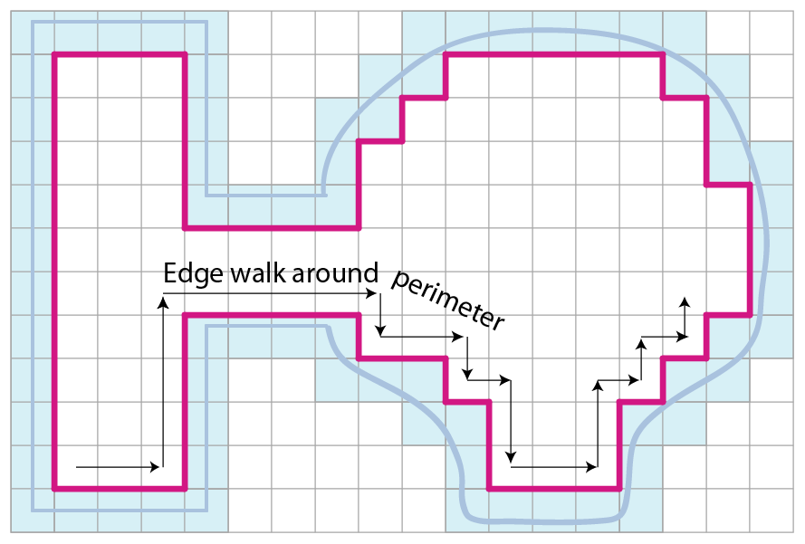

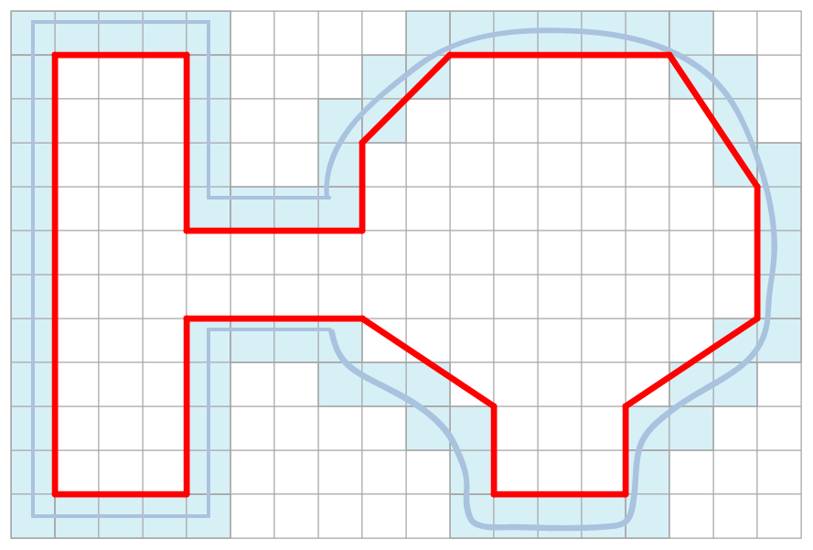

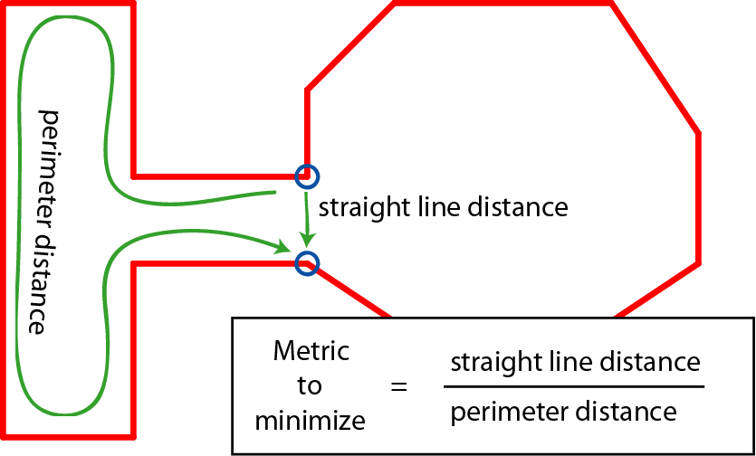

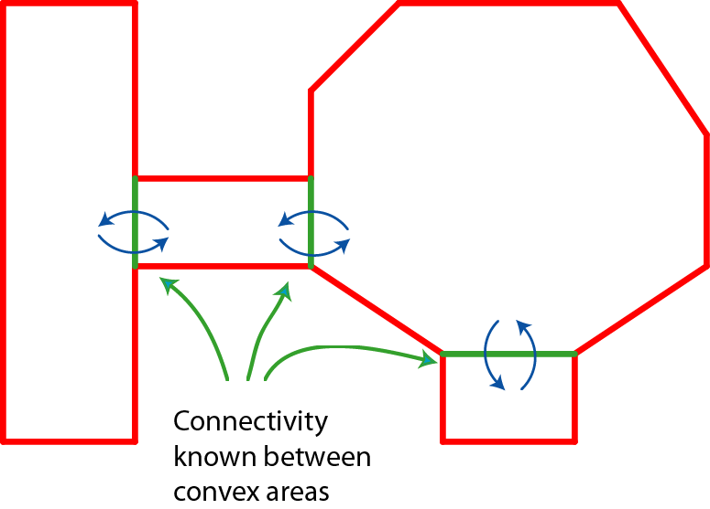

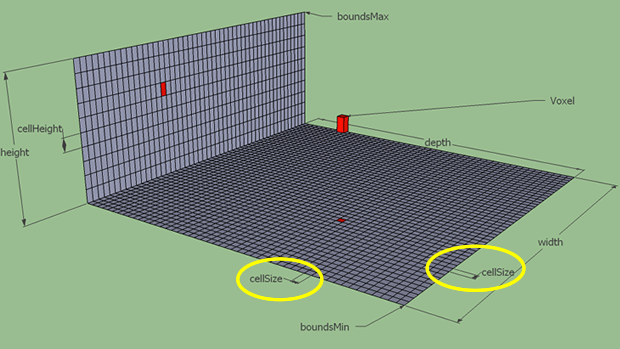

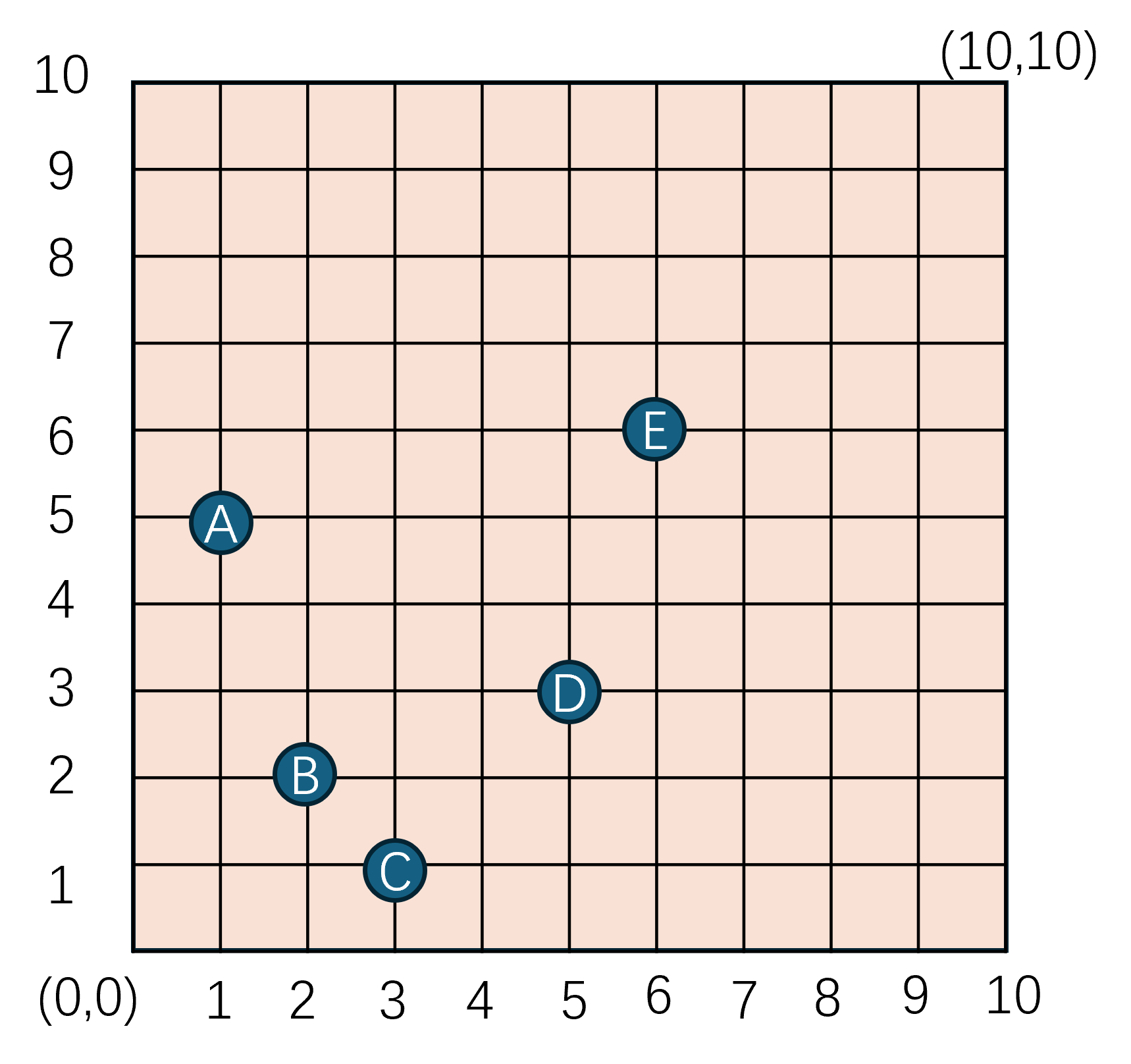

这个场景划分并不是预先指定好的,而是随着场景内玩家数量的变化而动态调整的。例如当场景内玩家数量超过了某个阈值时,就会将场景拆分成多个子场景。当场景内玩家数量减少到某个阈值时,就会将多个子场景合并成一个场景。这个动态调整子场景布局的概念就叫做动态分区(Dynamic Partitioning)。为了方便计算边界以及合并、拆分,这些子场景的形状一般都是矩形。

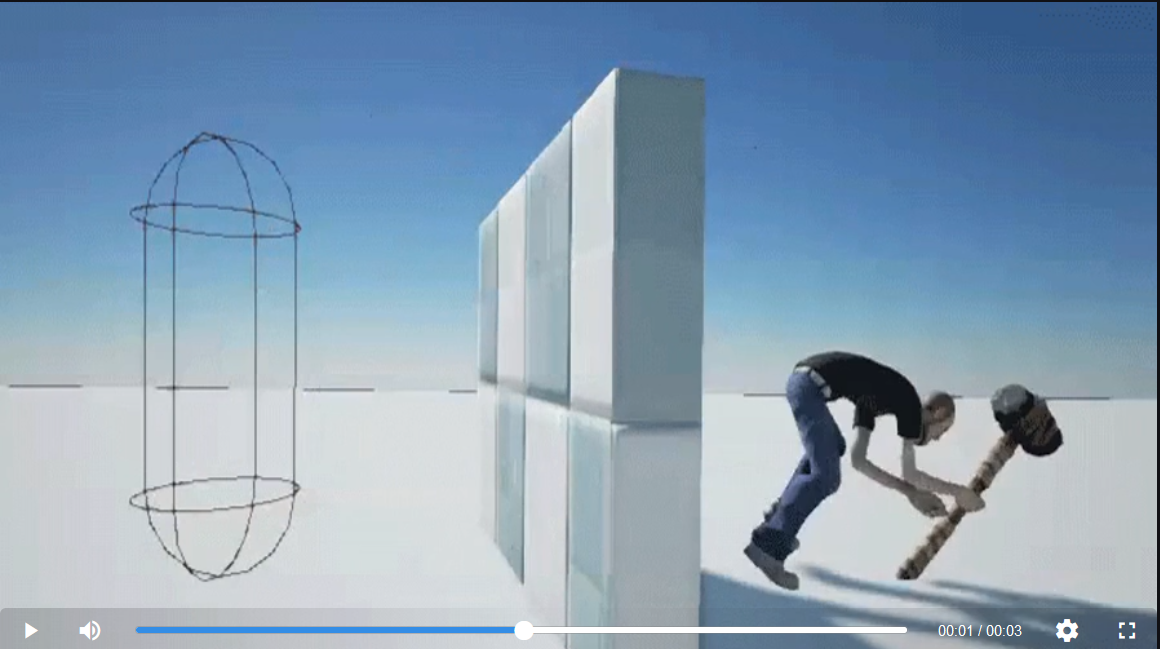

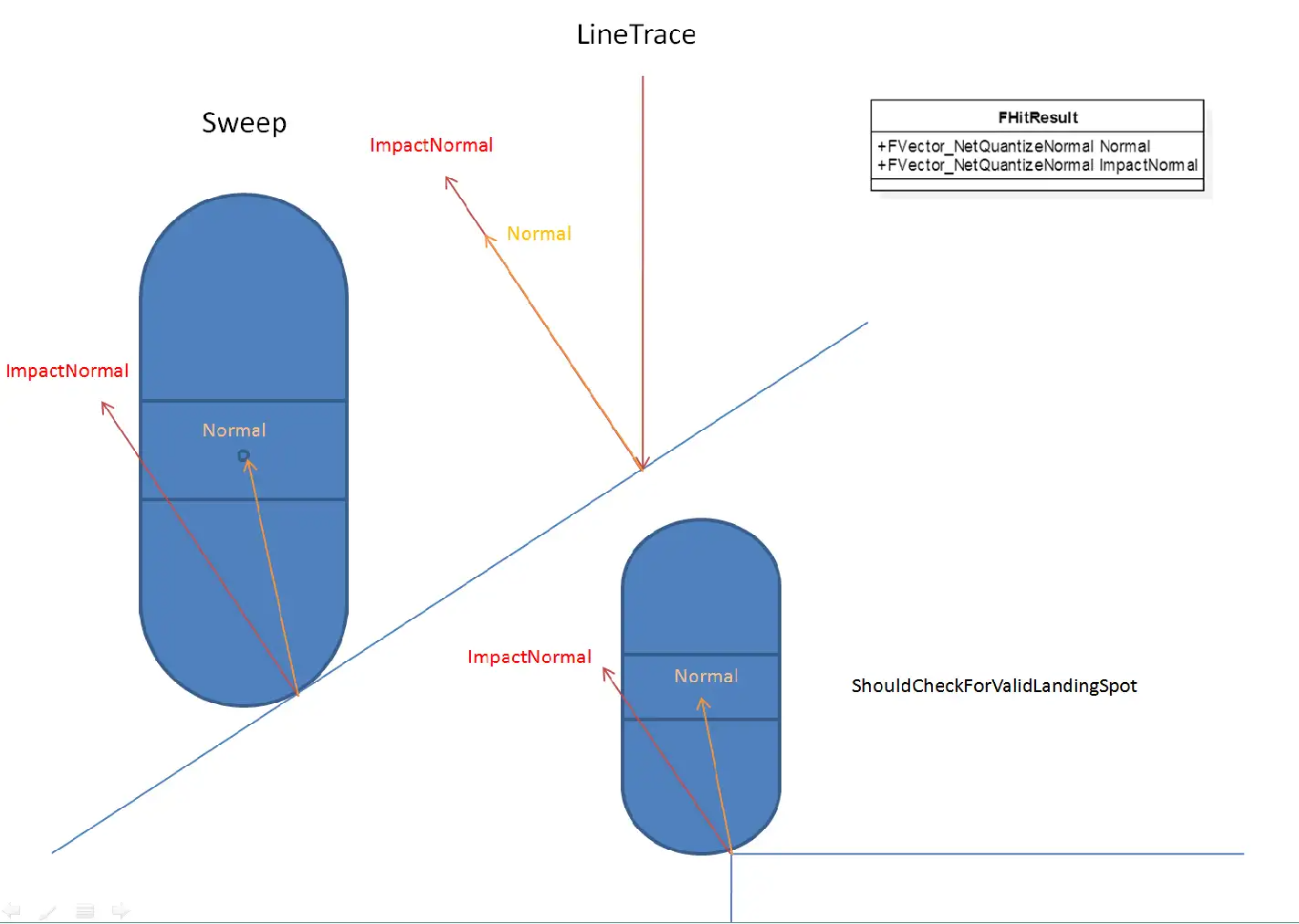

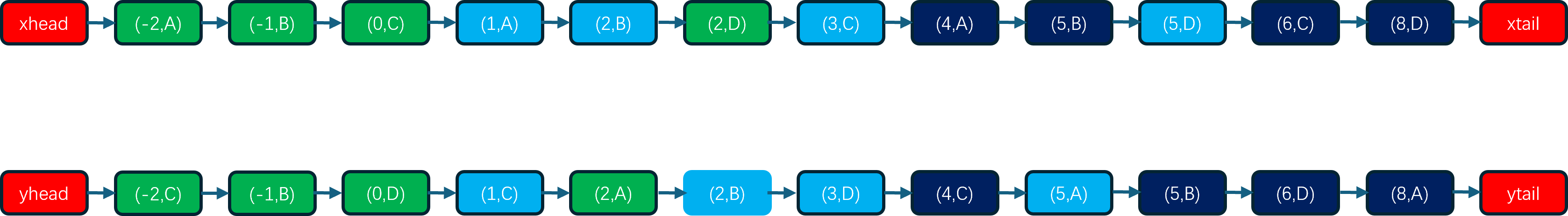

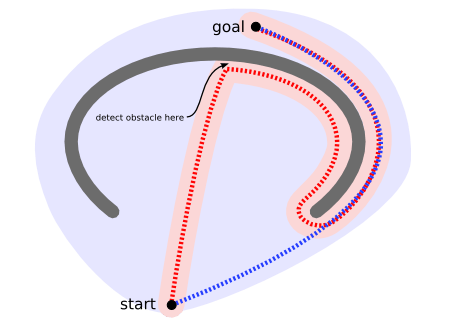

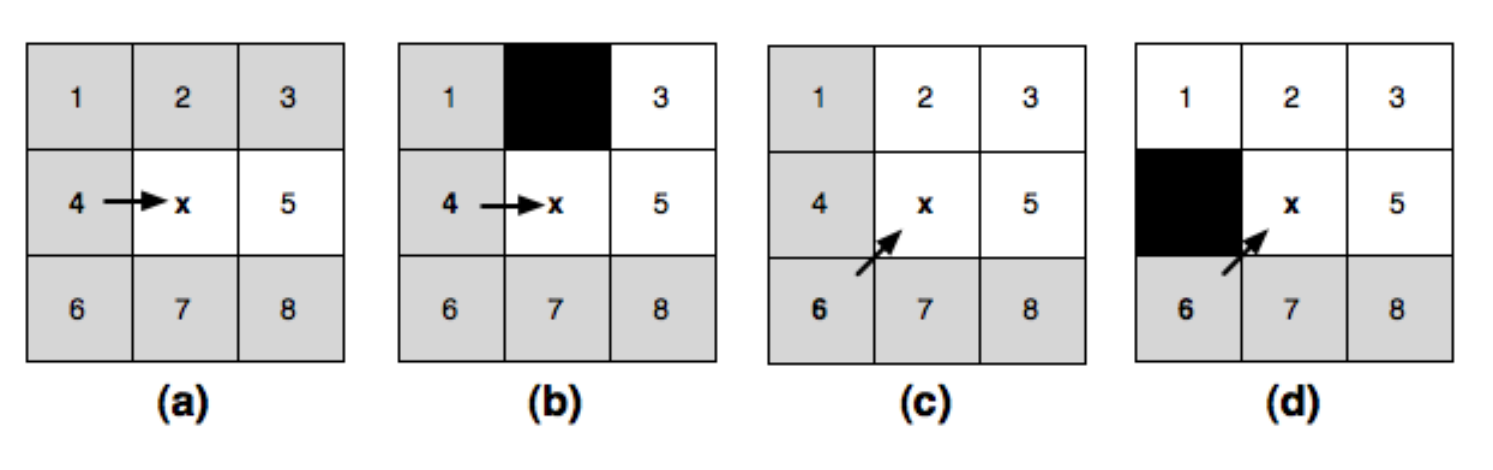

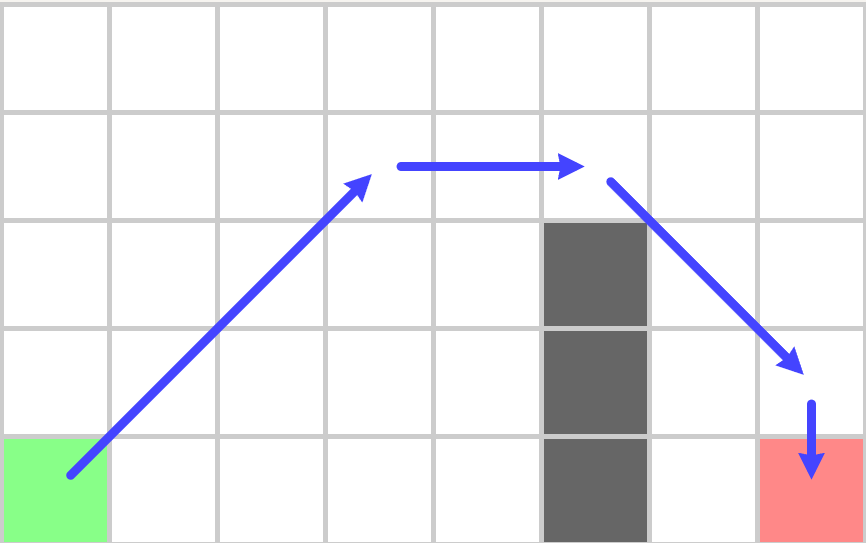

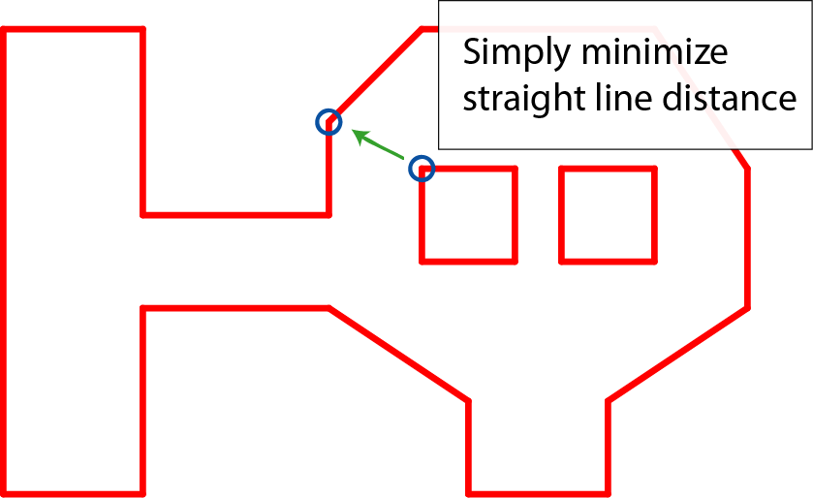

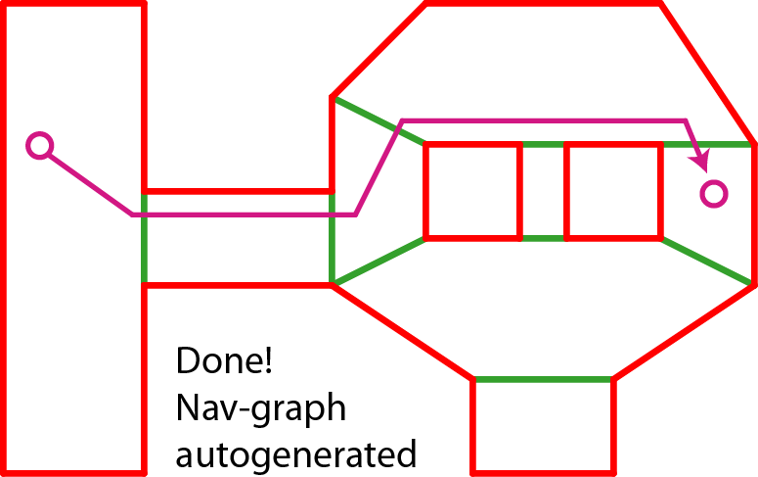

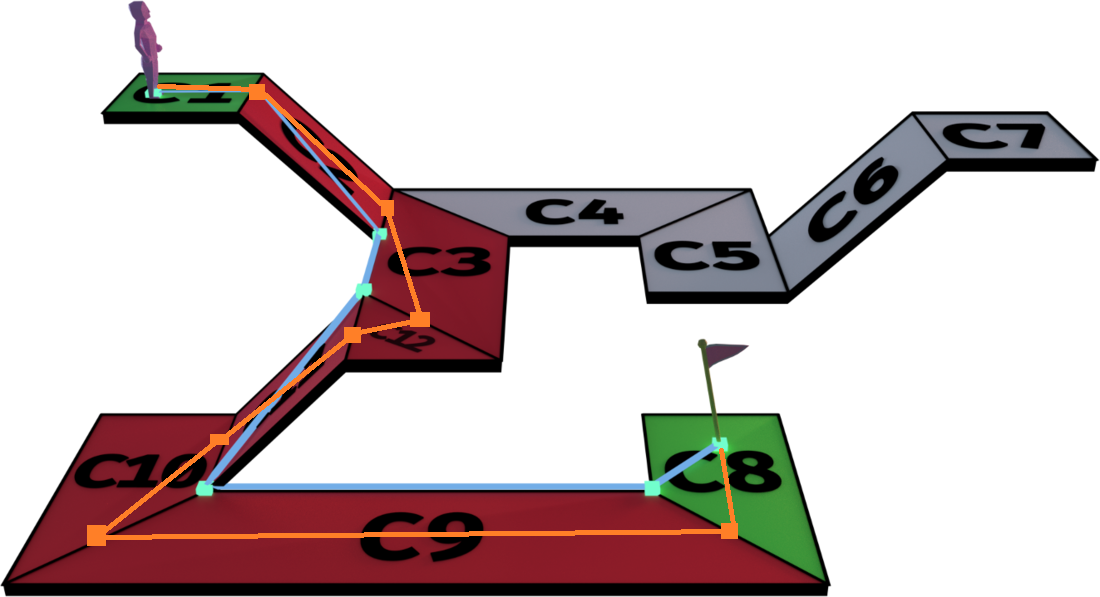

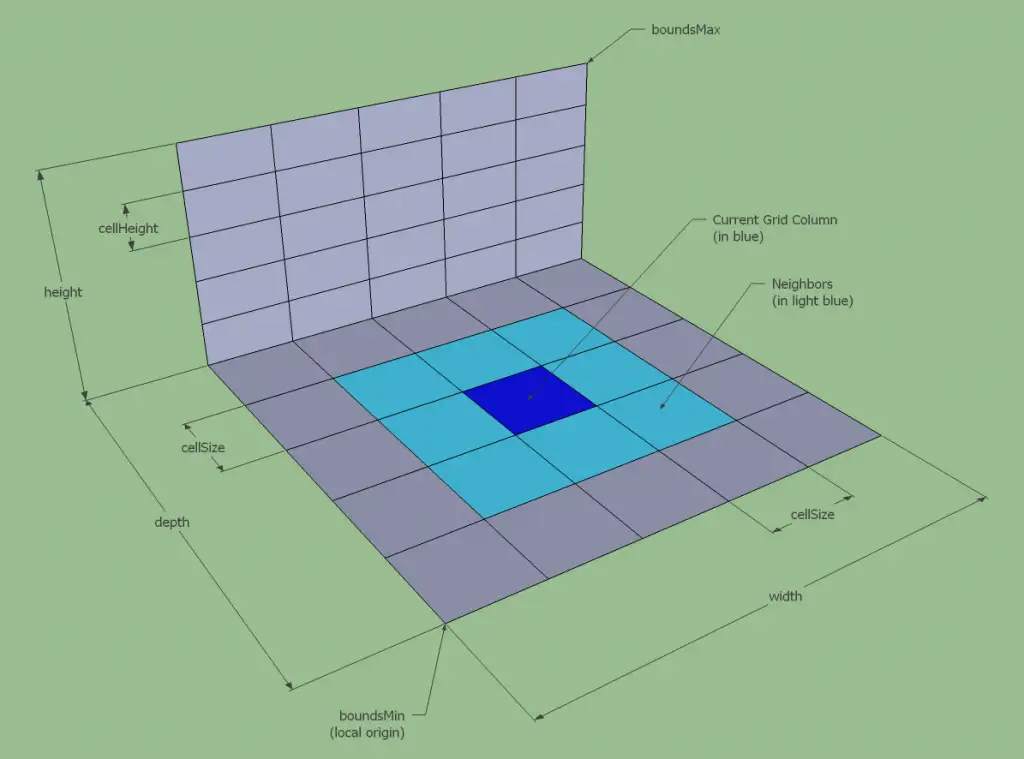

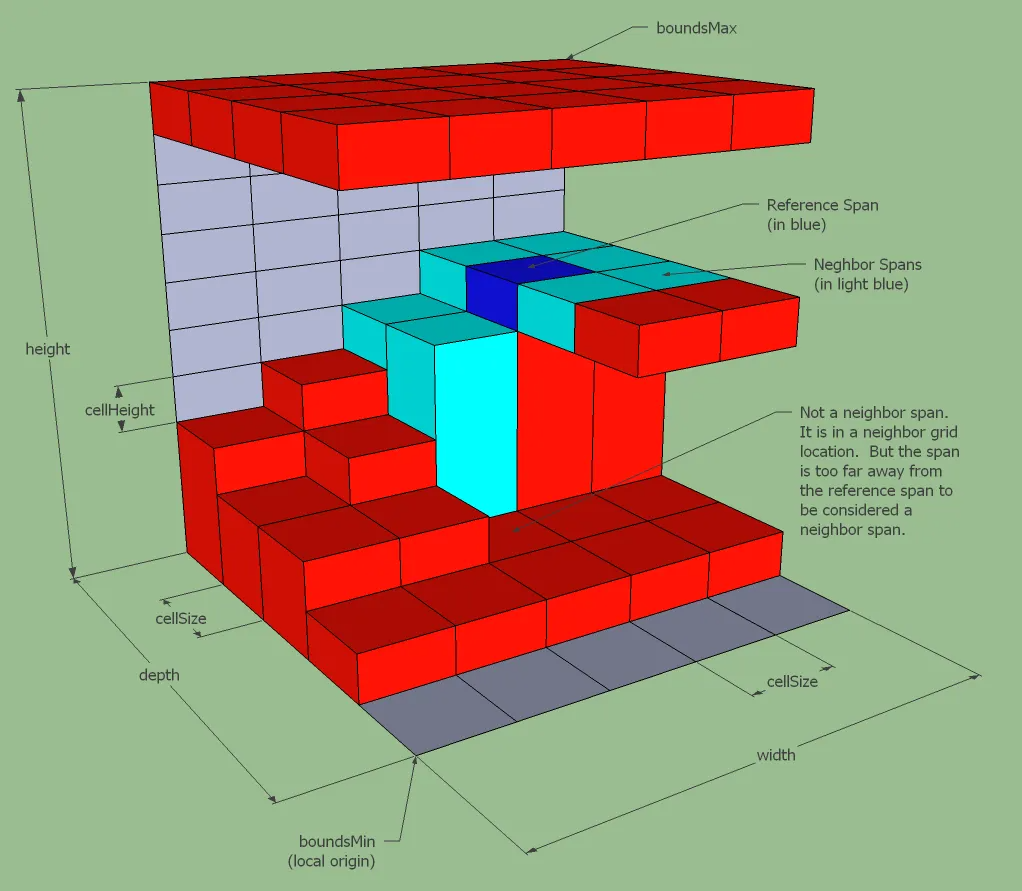

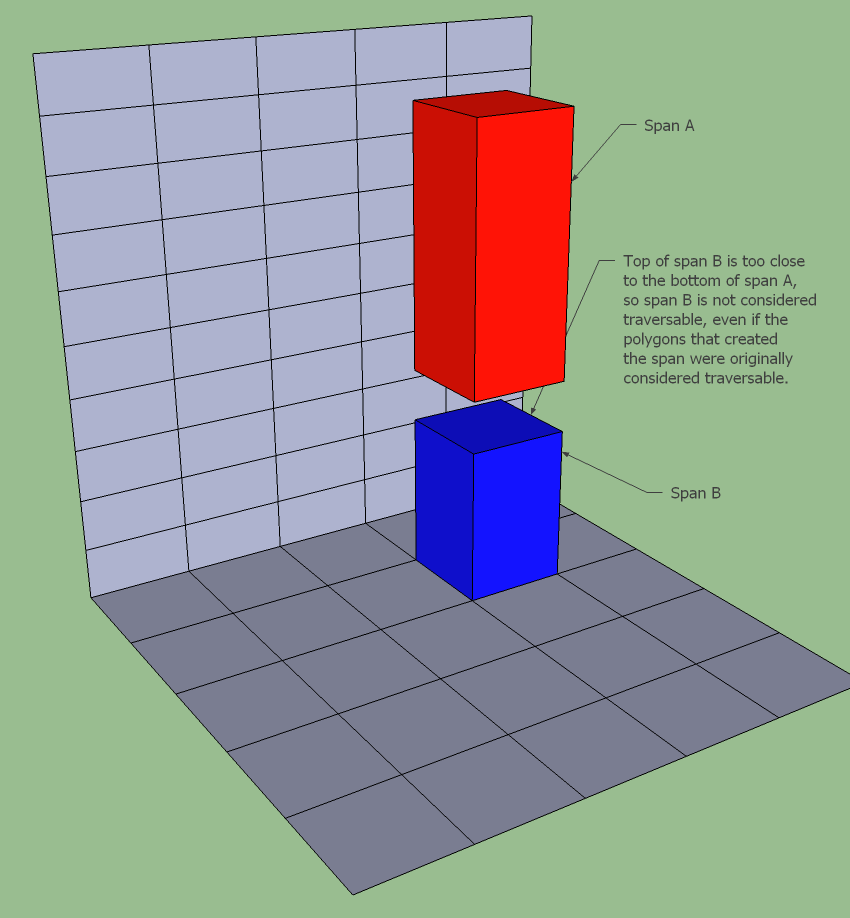

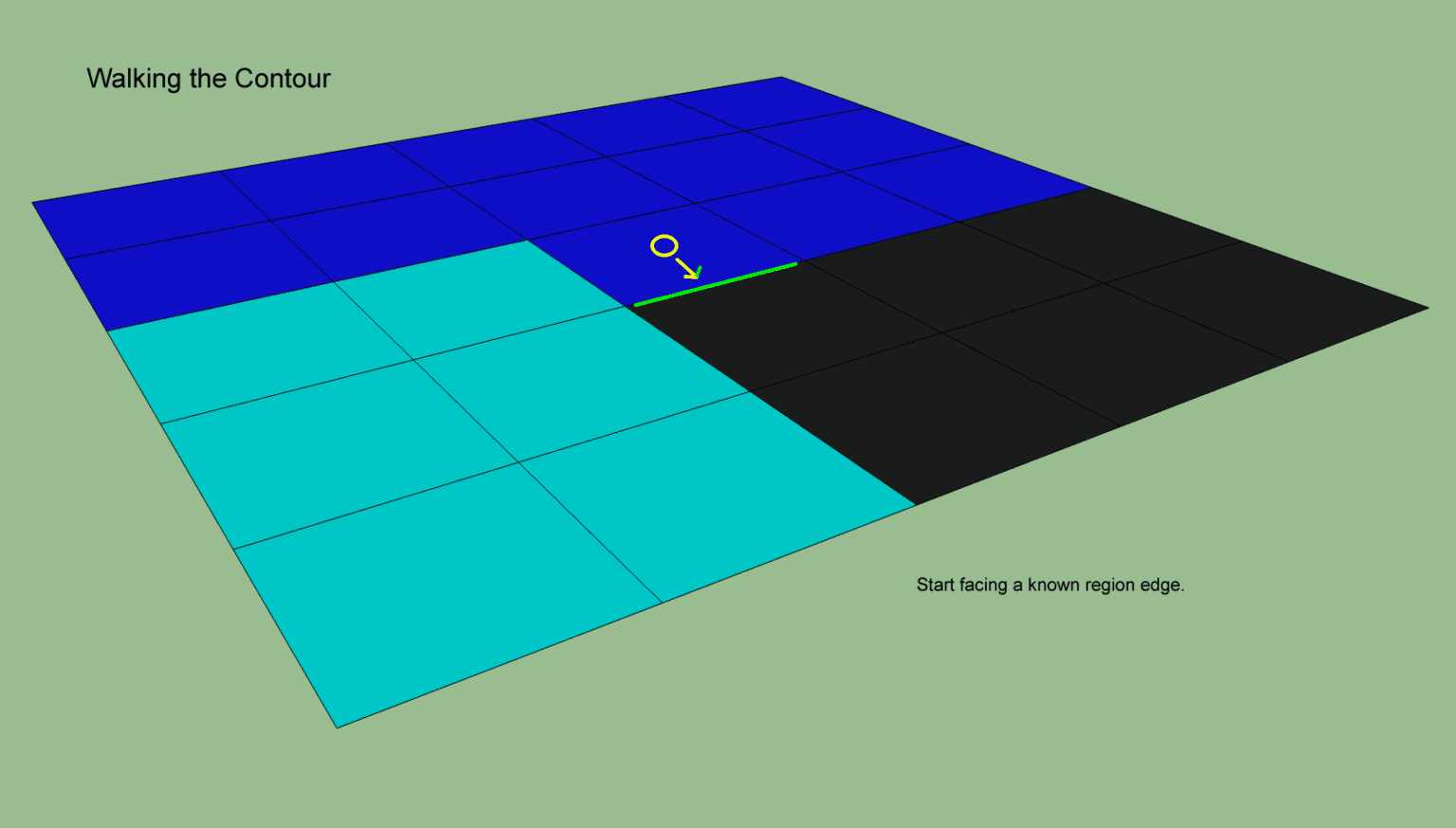

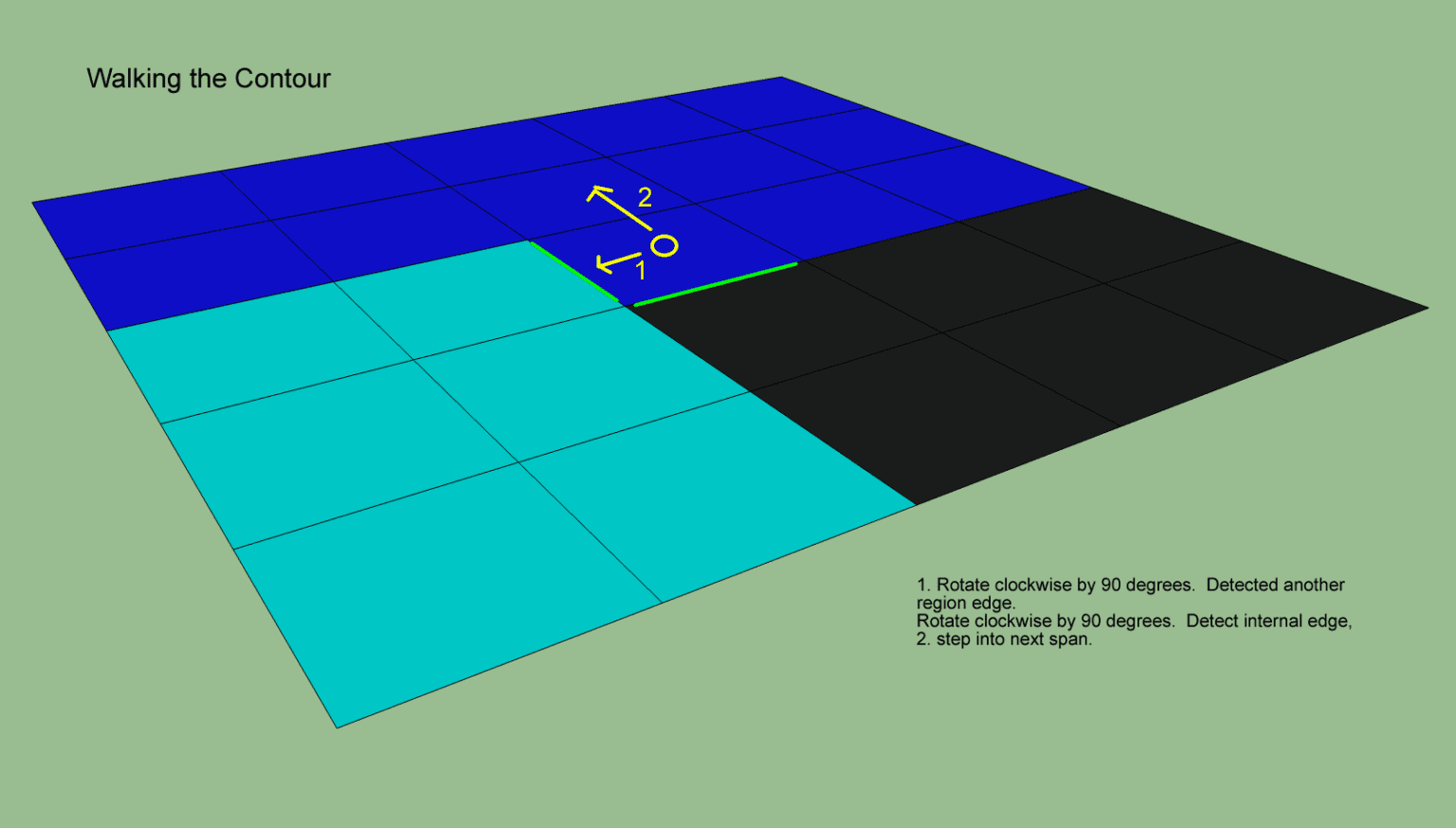

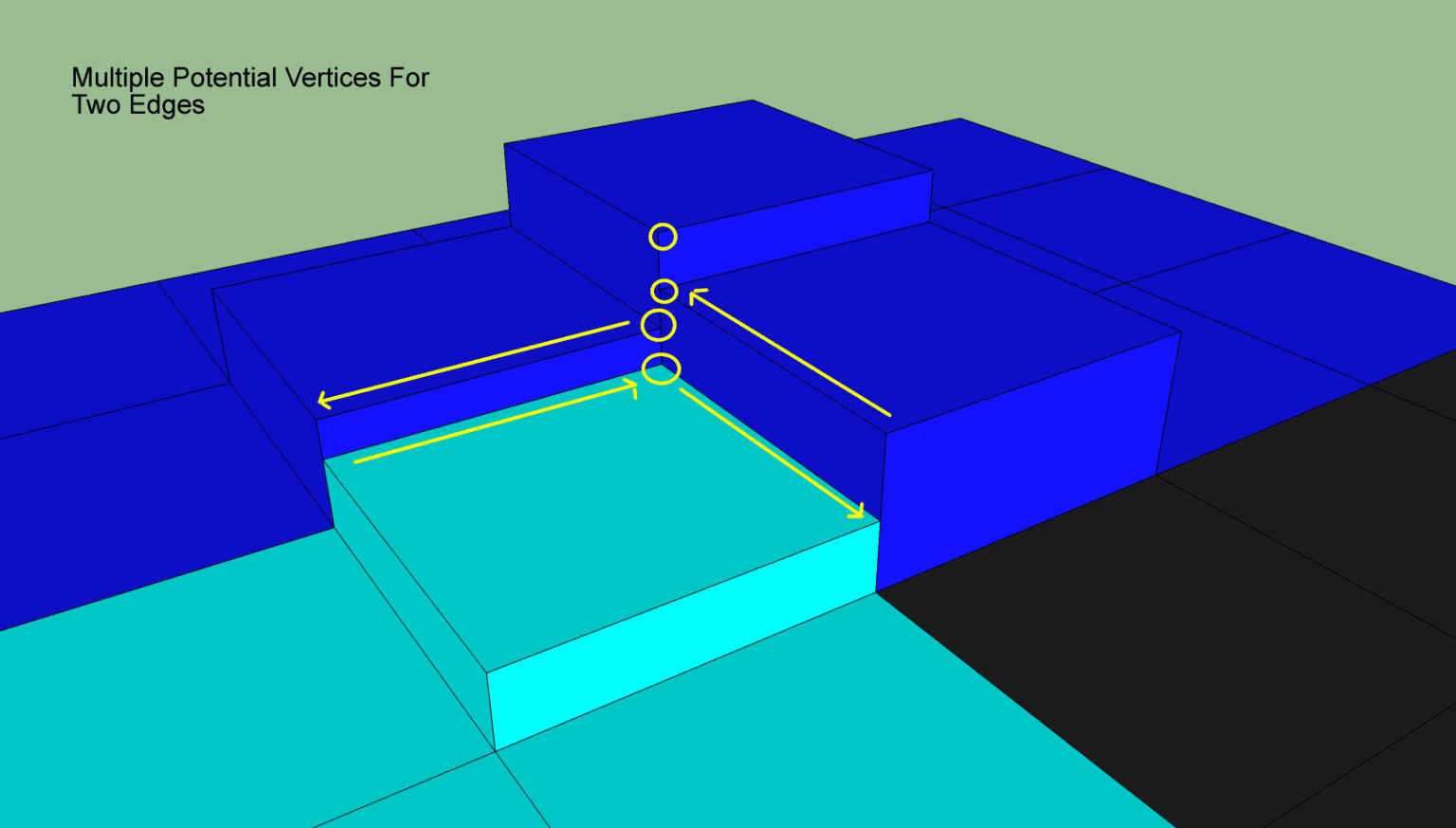

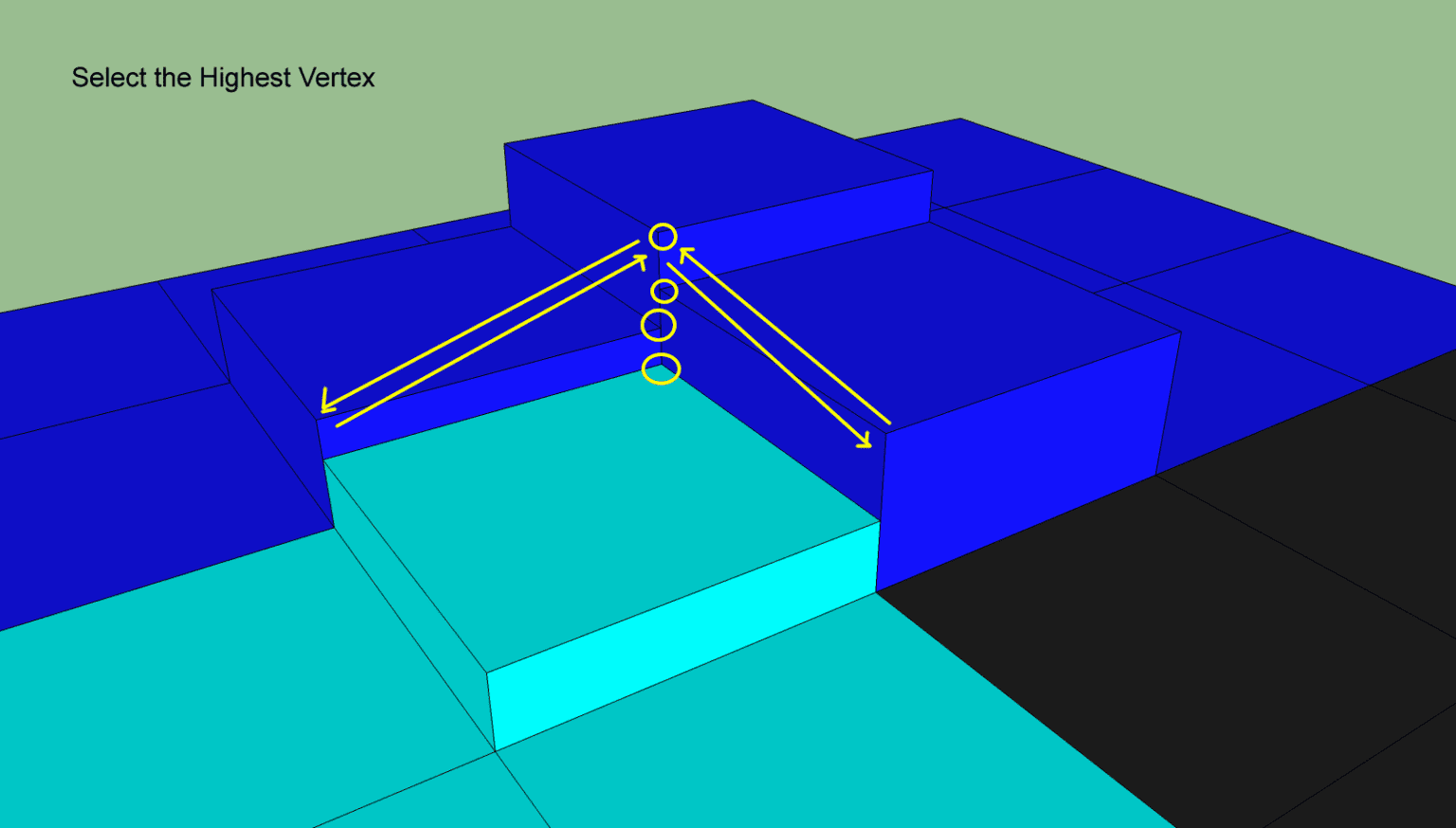

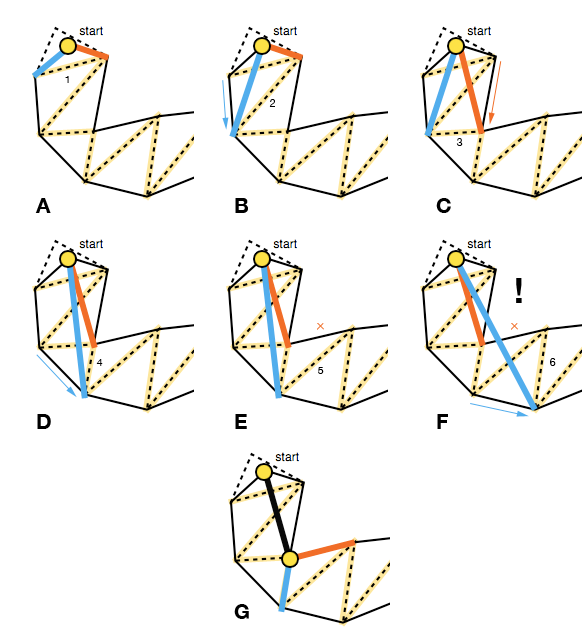

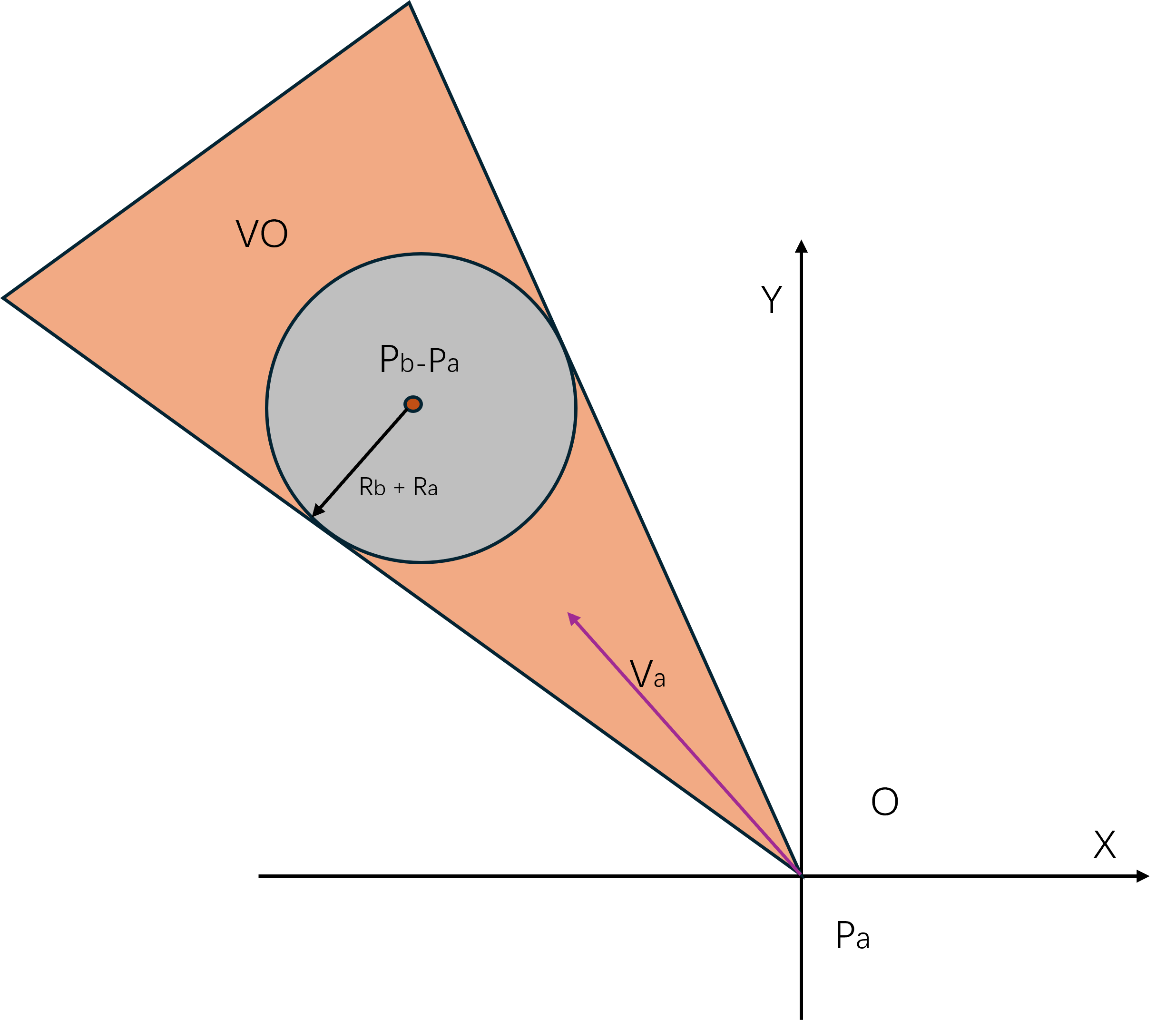

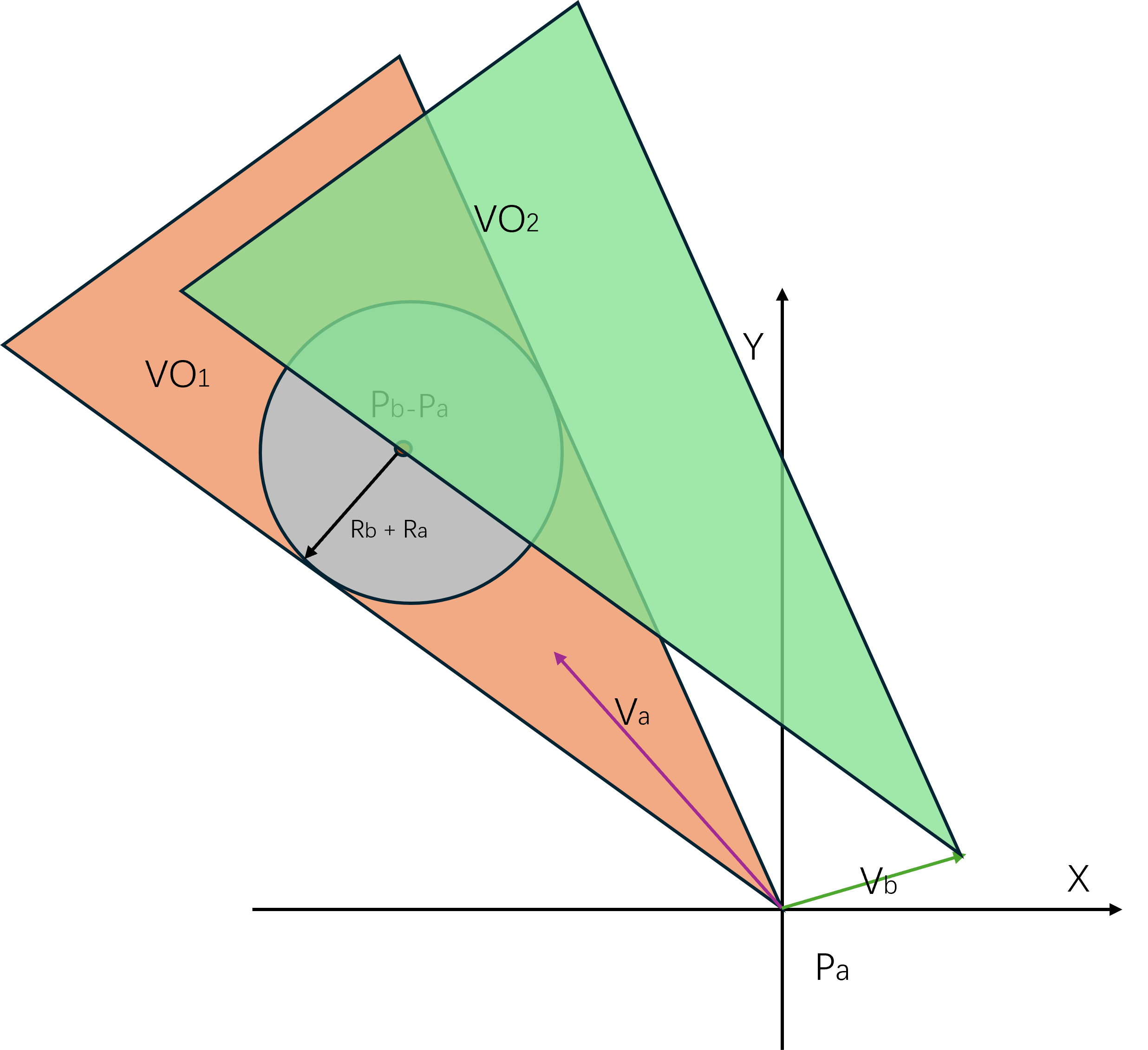

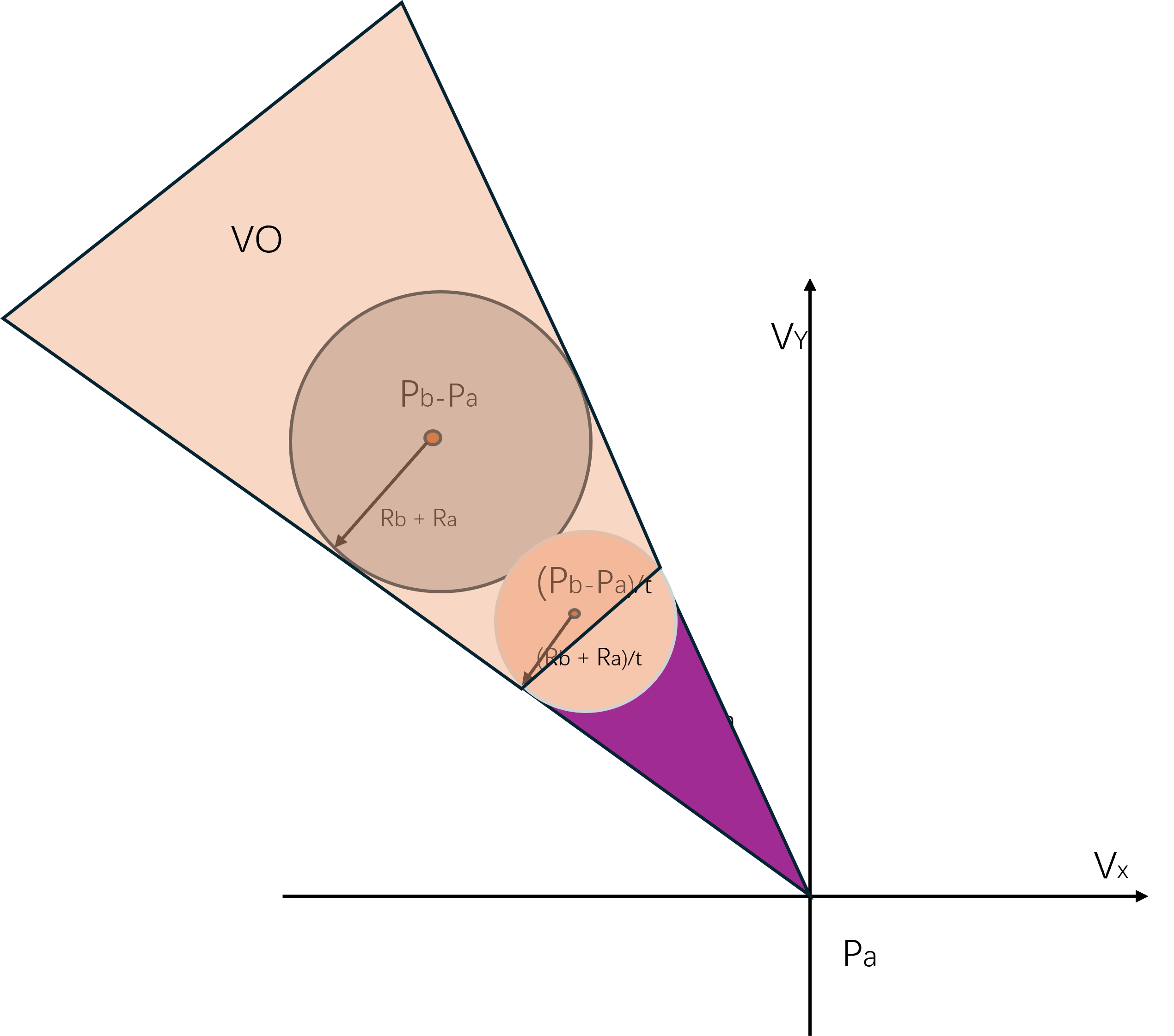

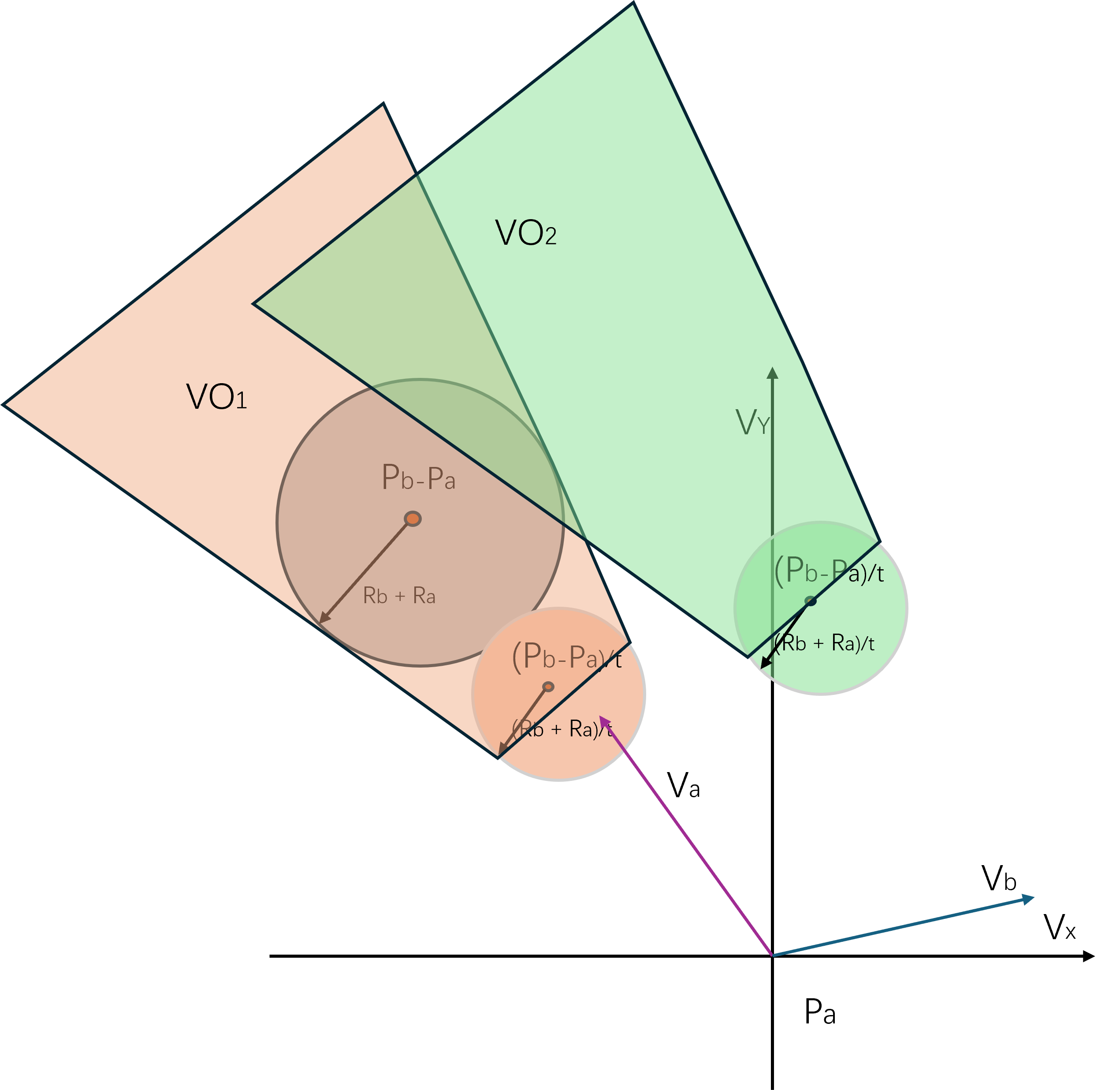

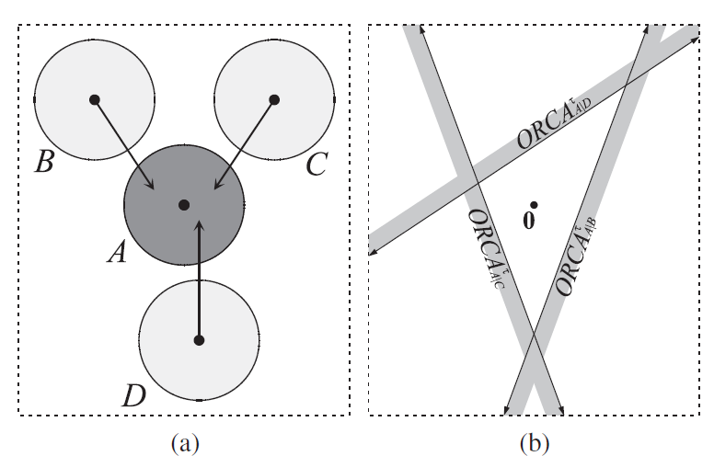

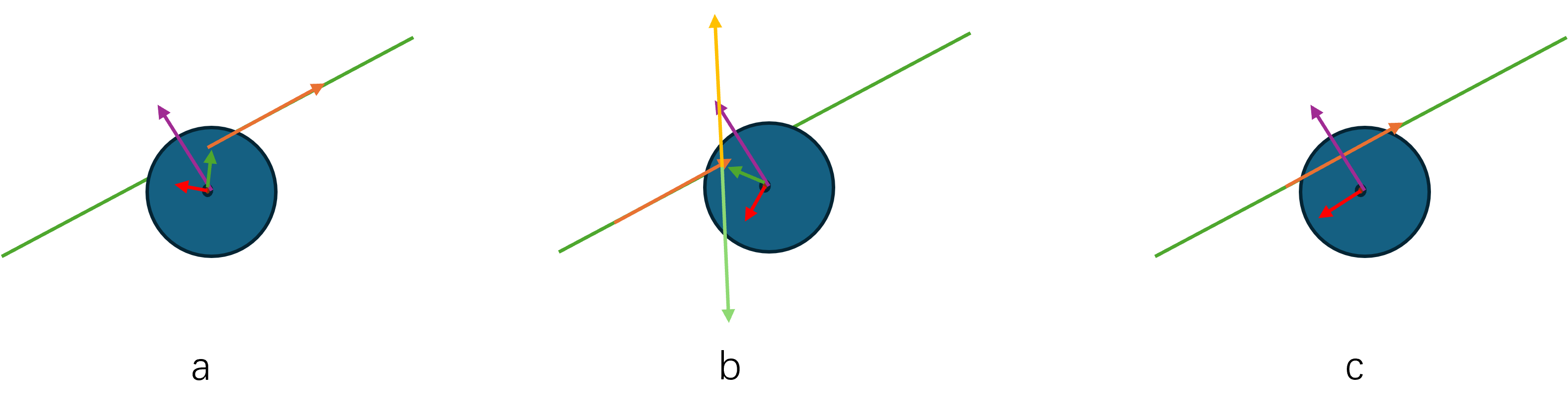

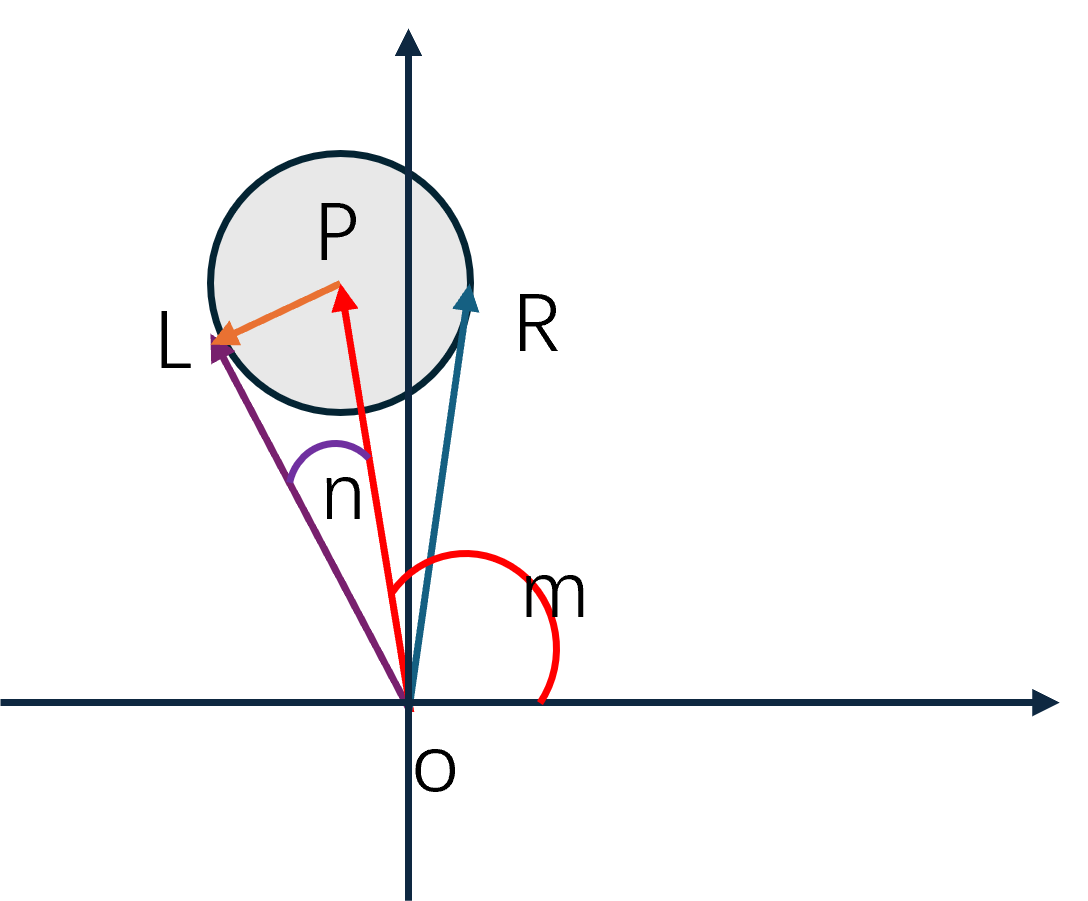

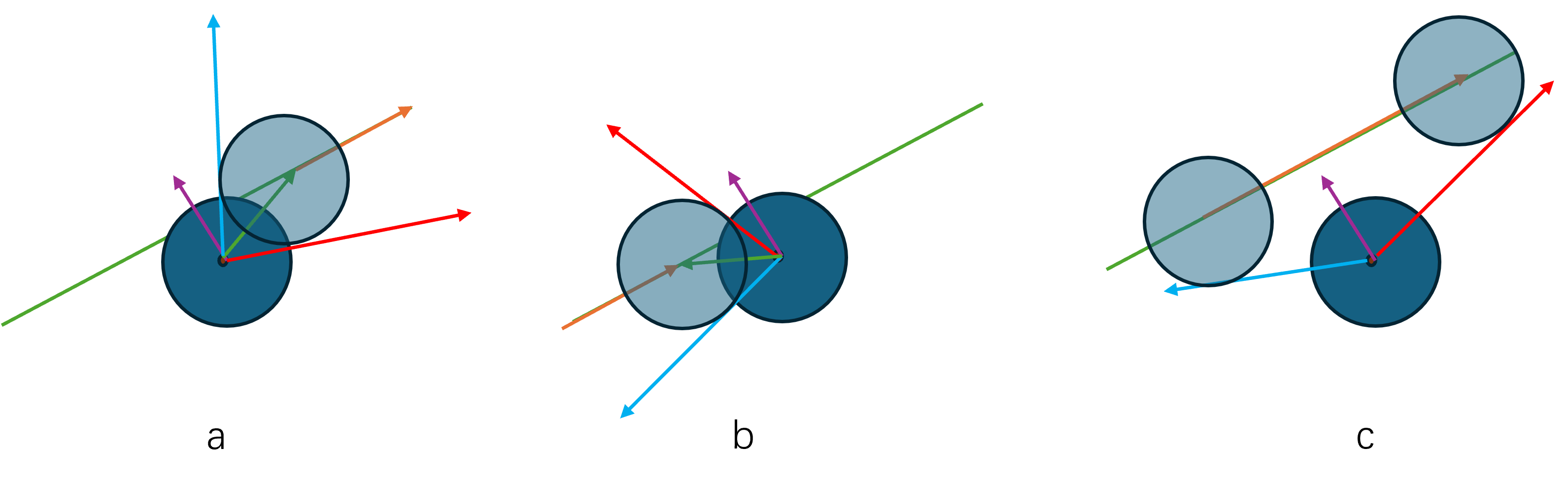

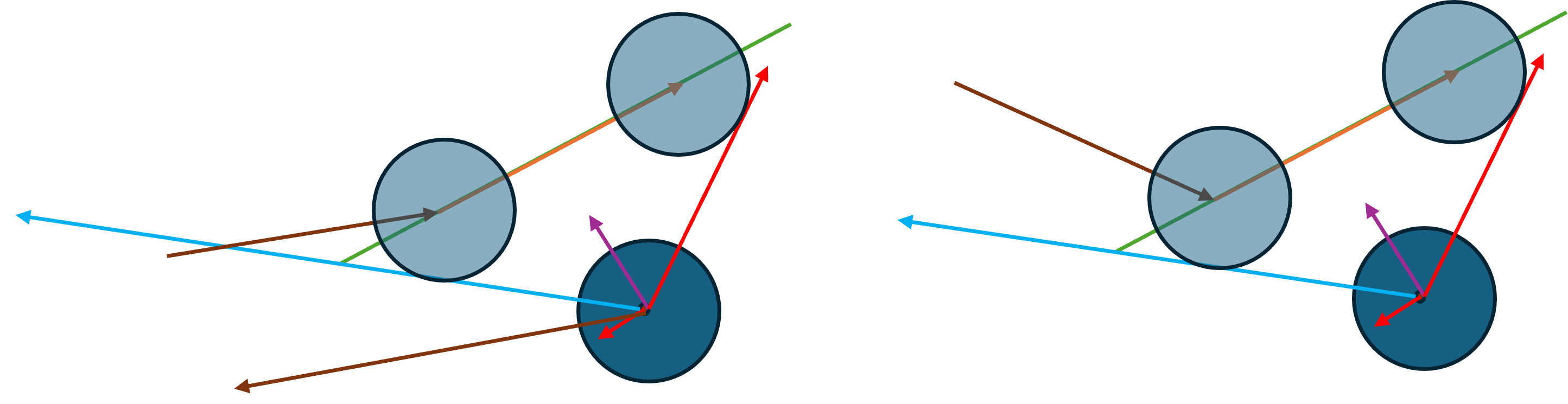

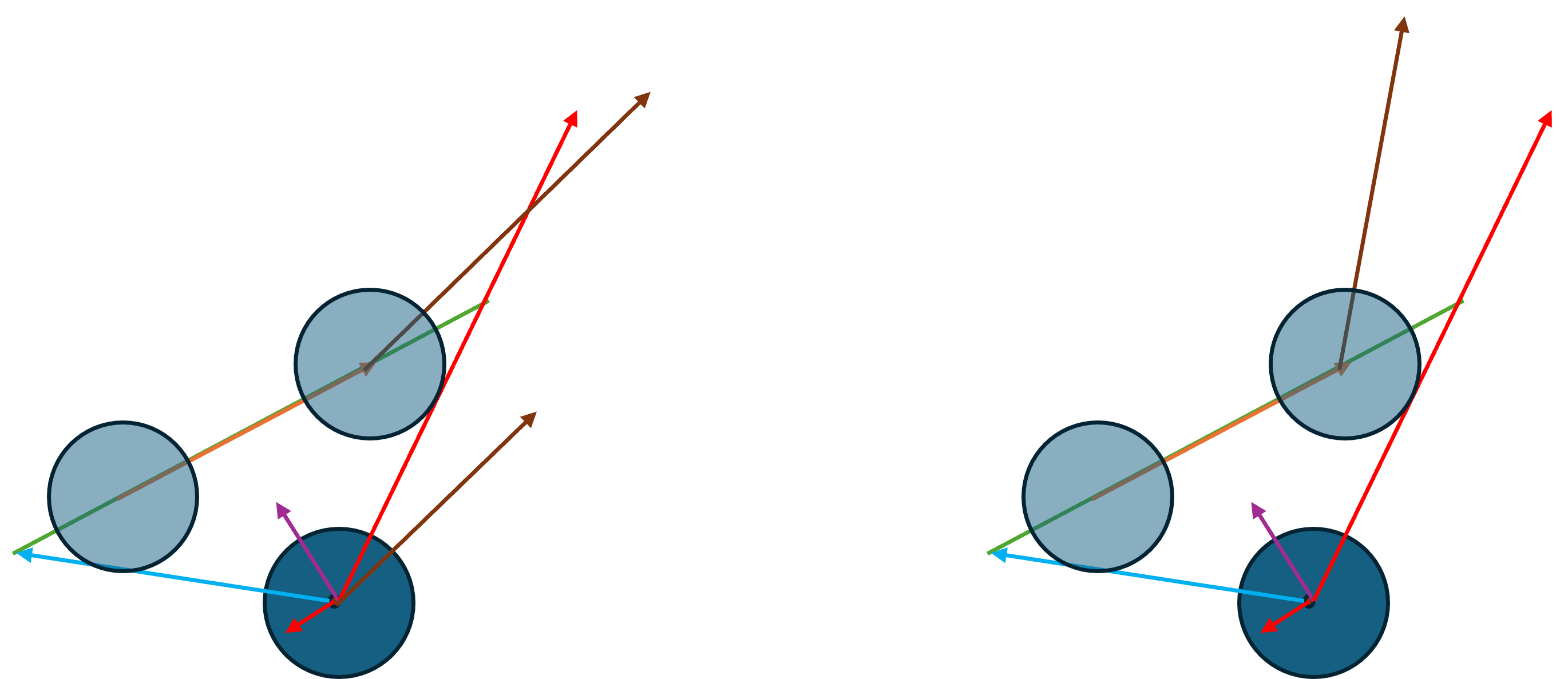

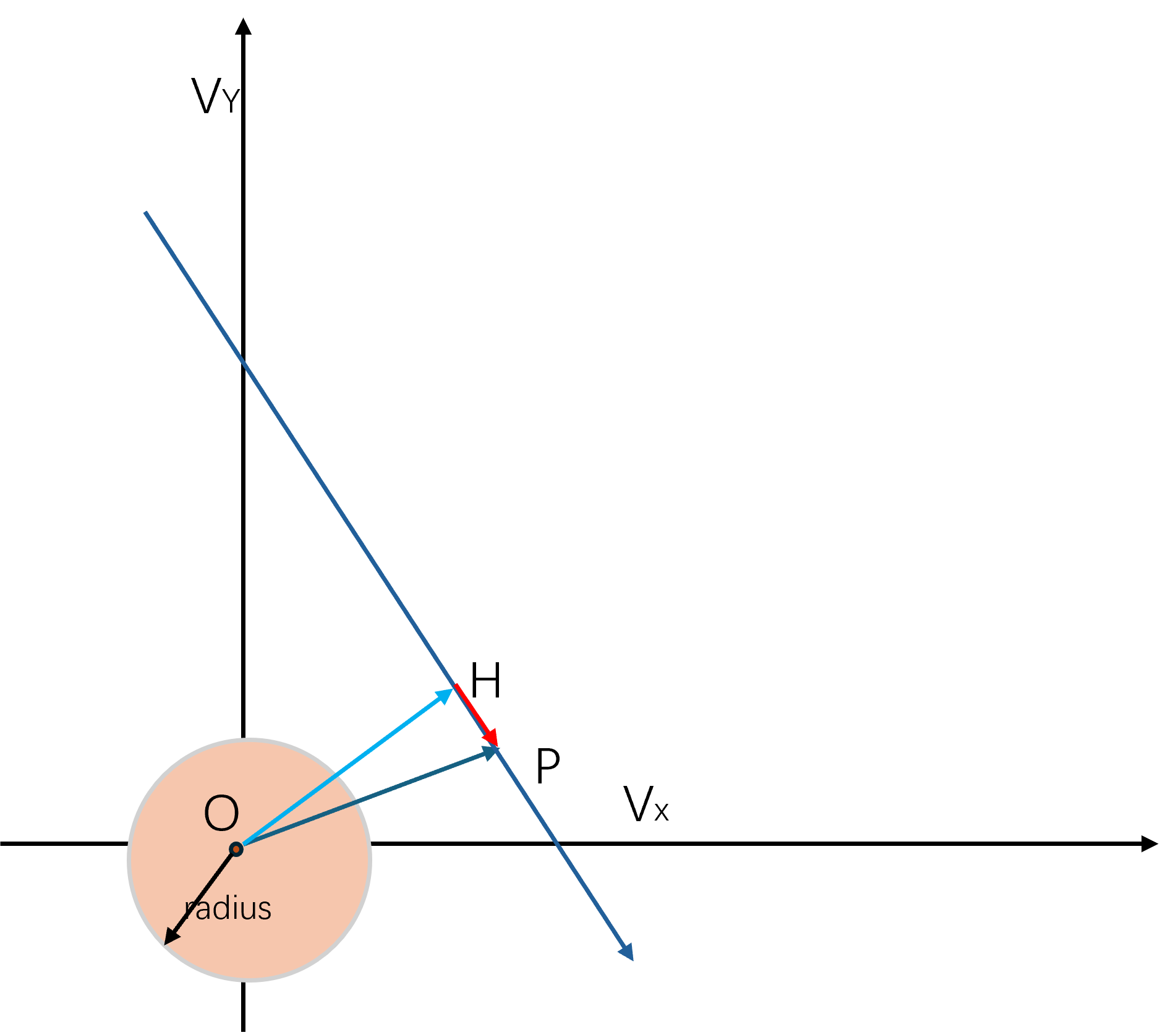

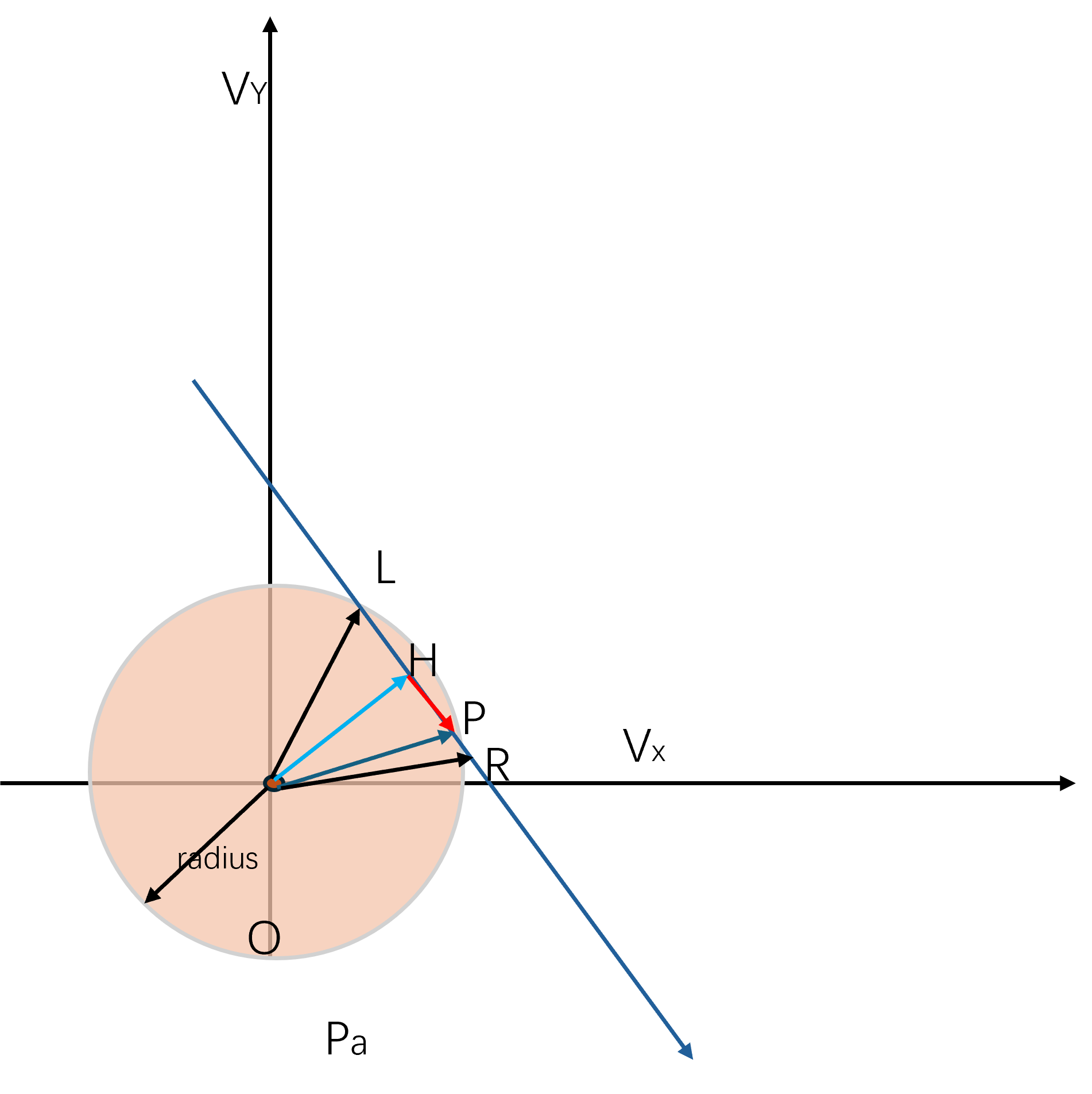

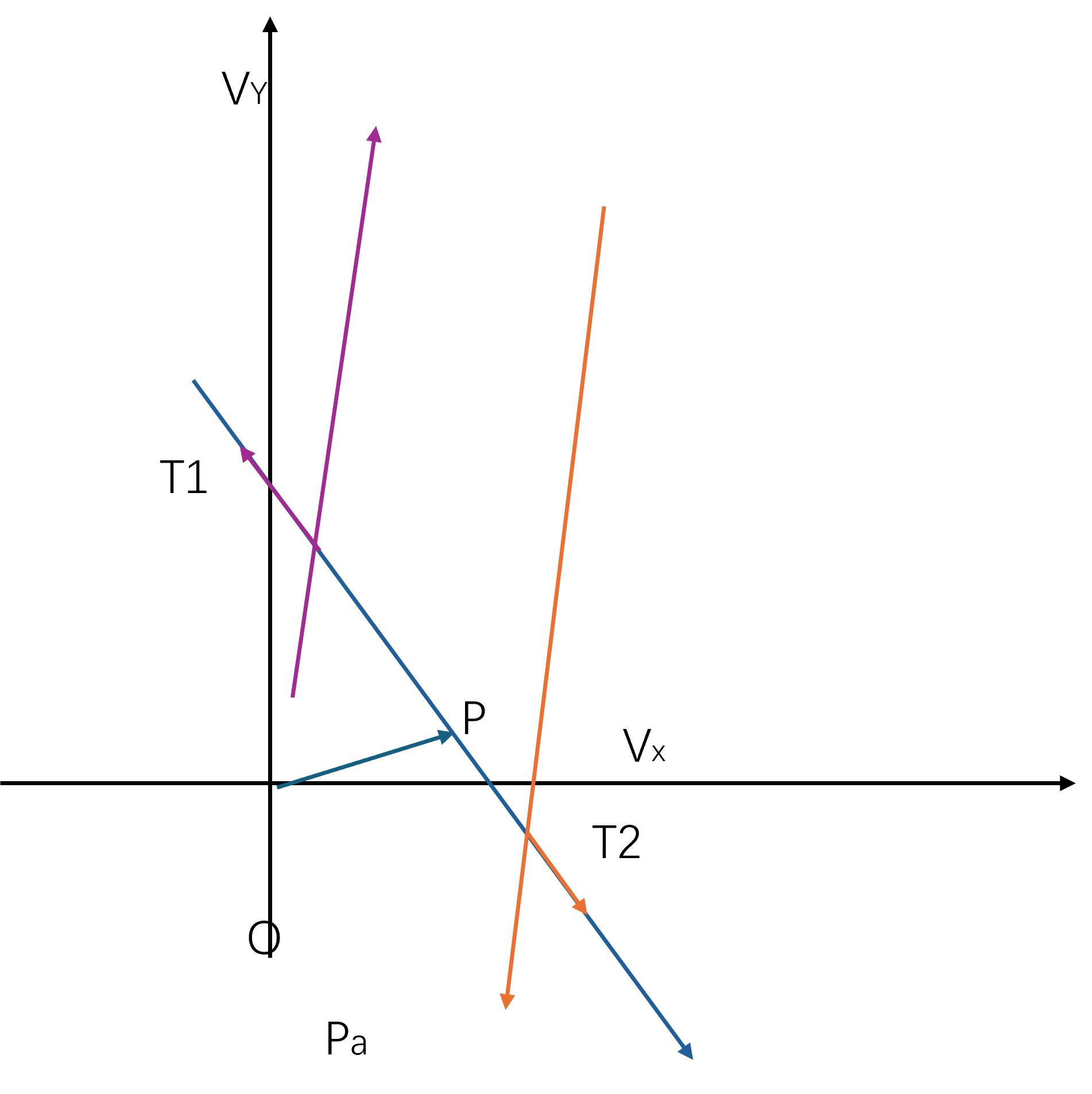

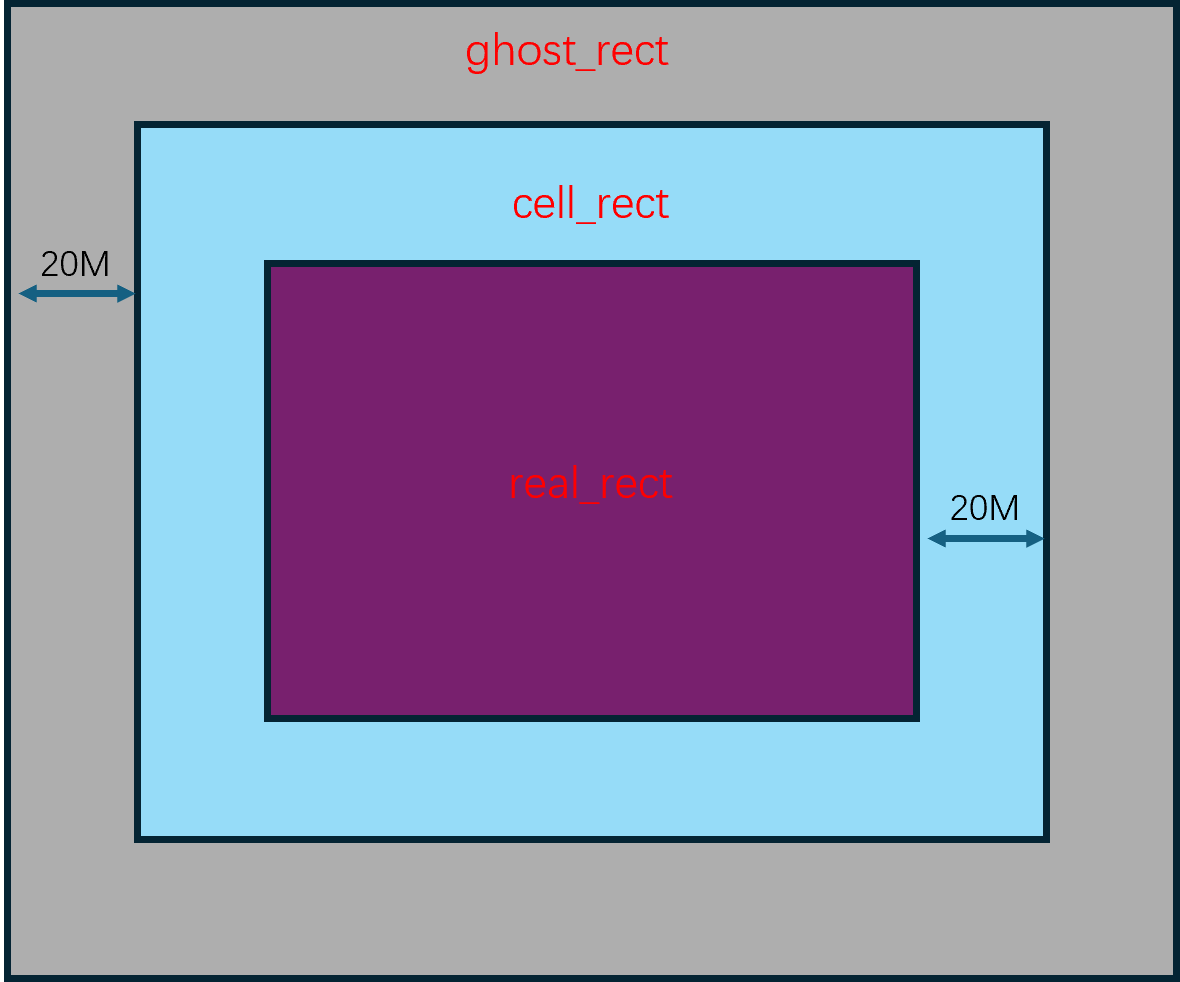

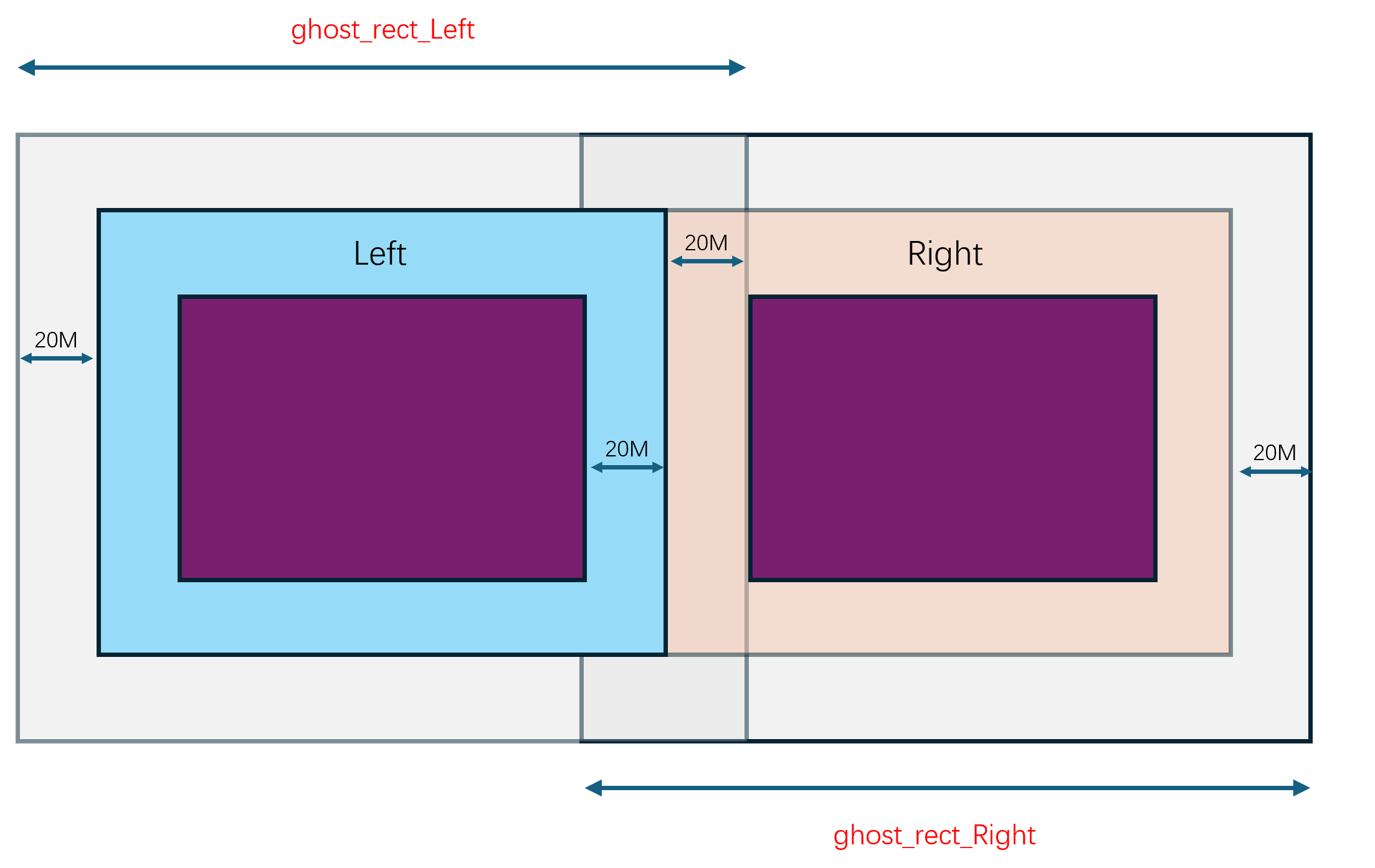

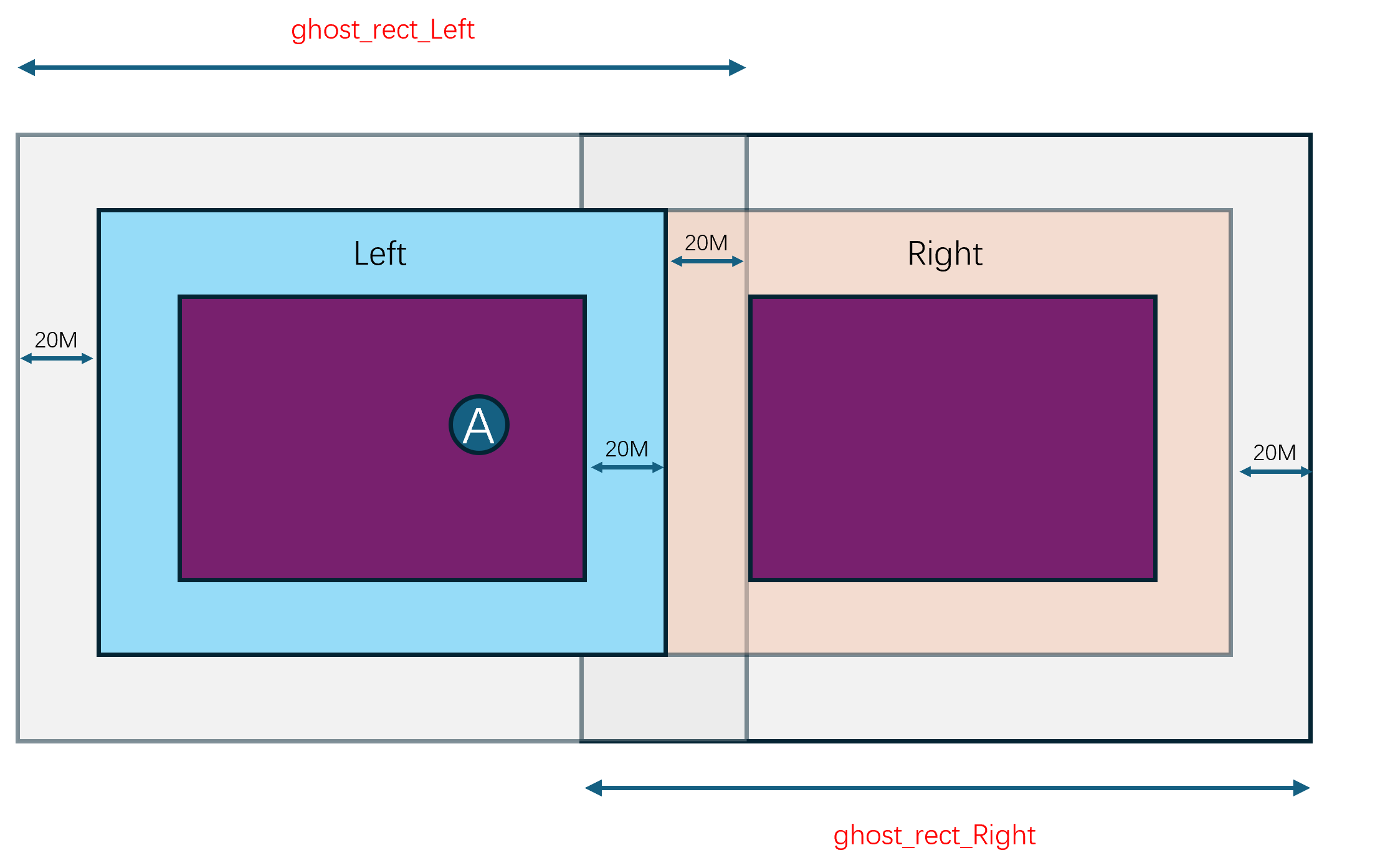

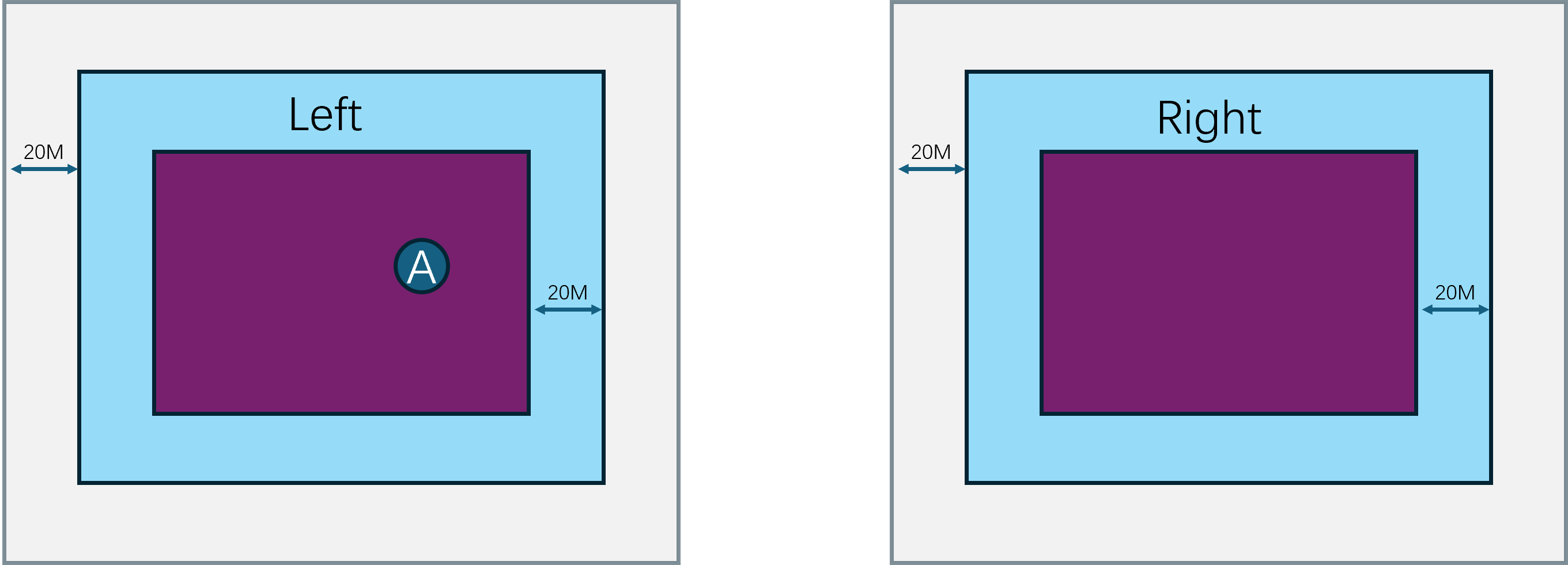

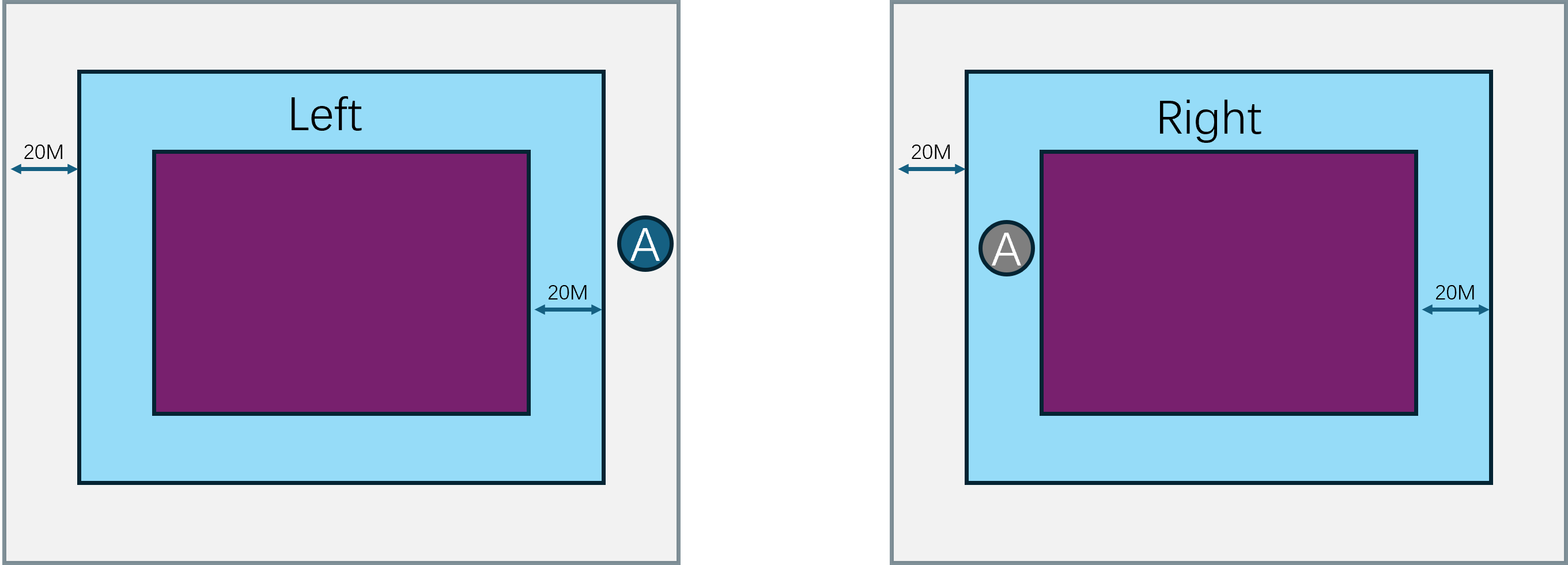

由于玩家能够自由的在不同的子场景之间移动,一旦玩家从一个子场景移动到另一个子场景,就需要将玩家从原场景进程中移除,并将玩家添加到目标场景进程中,也就是需要执行一次玩家的迁移(migration)流程。由于常规的状态同步设计里一个玩家只能看到单进程内的其他玩家,这种越过边界导致的进程切换就会让客户端出现非常明显的同步状态变化。即属于上一个场景的实体都会被销毁,同时会突然同步当前场景里可见的一些实体。这种突然且剧烈的状态变化会给游戏体验带来非常严重的割裂感,解决这种由于迁移导致的客户端同步状态剧烈变化的技术就是无缝迁移(Seamless Migration)。

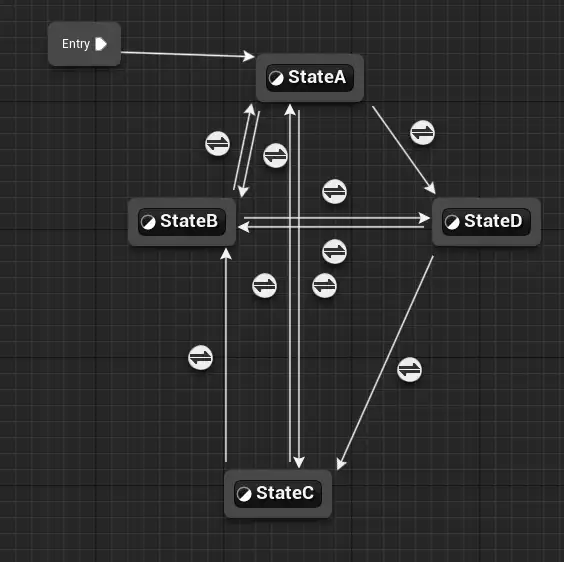

游戏服务端架构样例

在本书中,我将详细的介绍Bigworld、Unreal Engine、mosaic_game这三个游戏引擎在服务端的一些业务上的具体实现。为了方面读者理解后面章节中对那些充满了源代码的内容,在这里就先大致的介绍一些这三个引擎的基础设计以及一些核心概念。

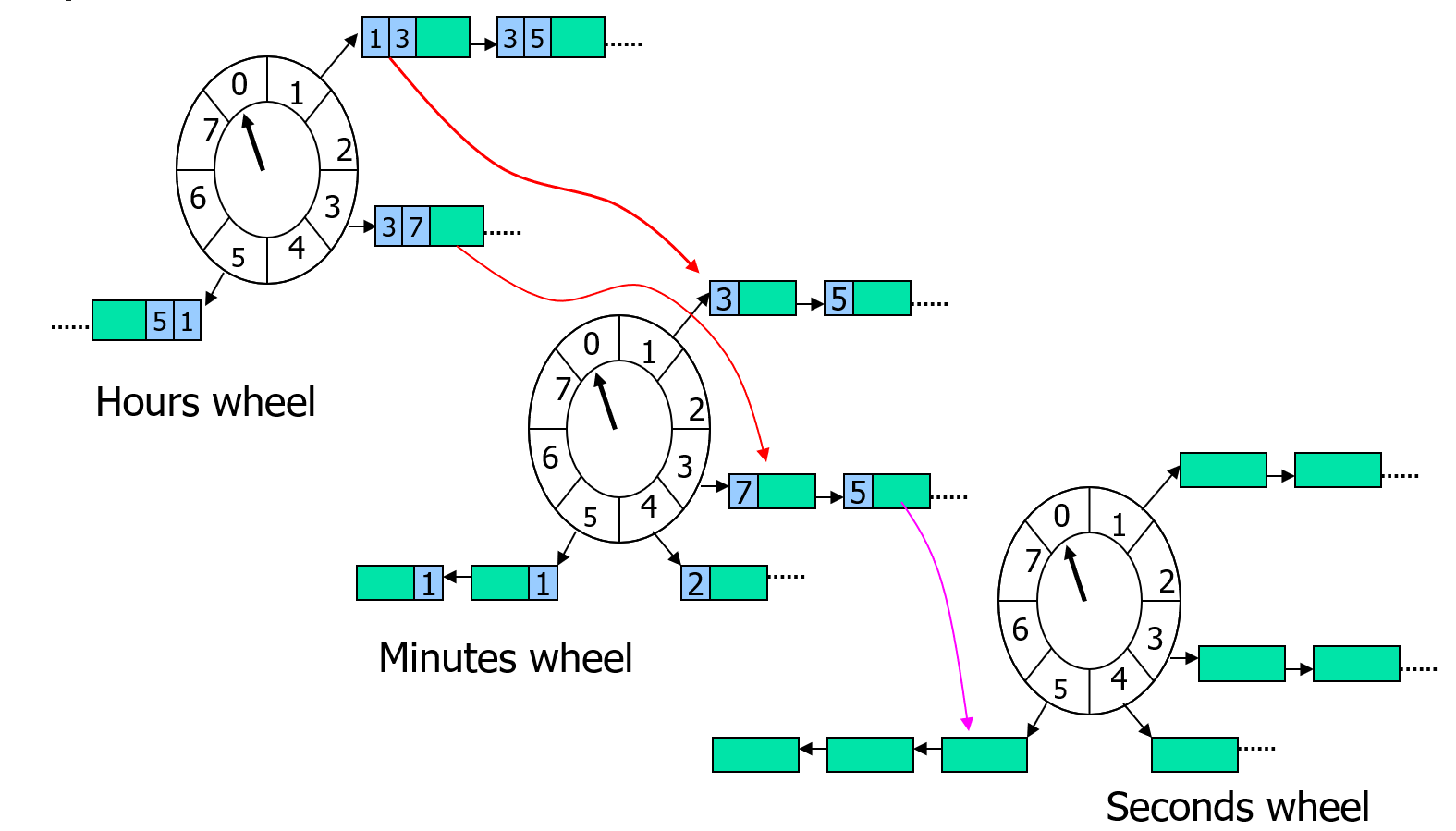

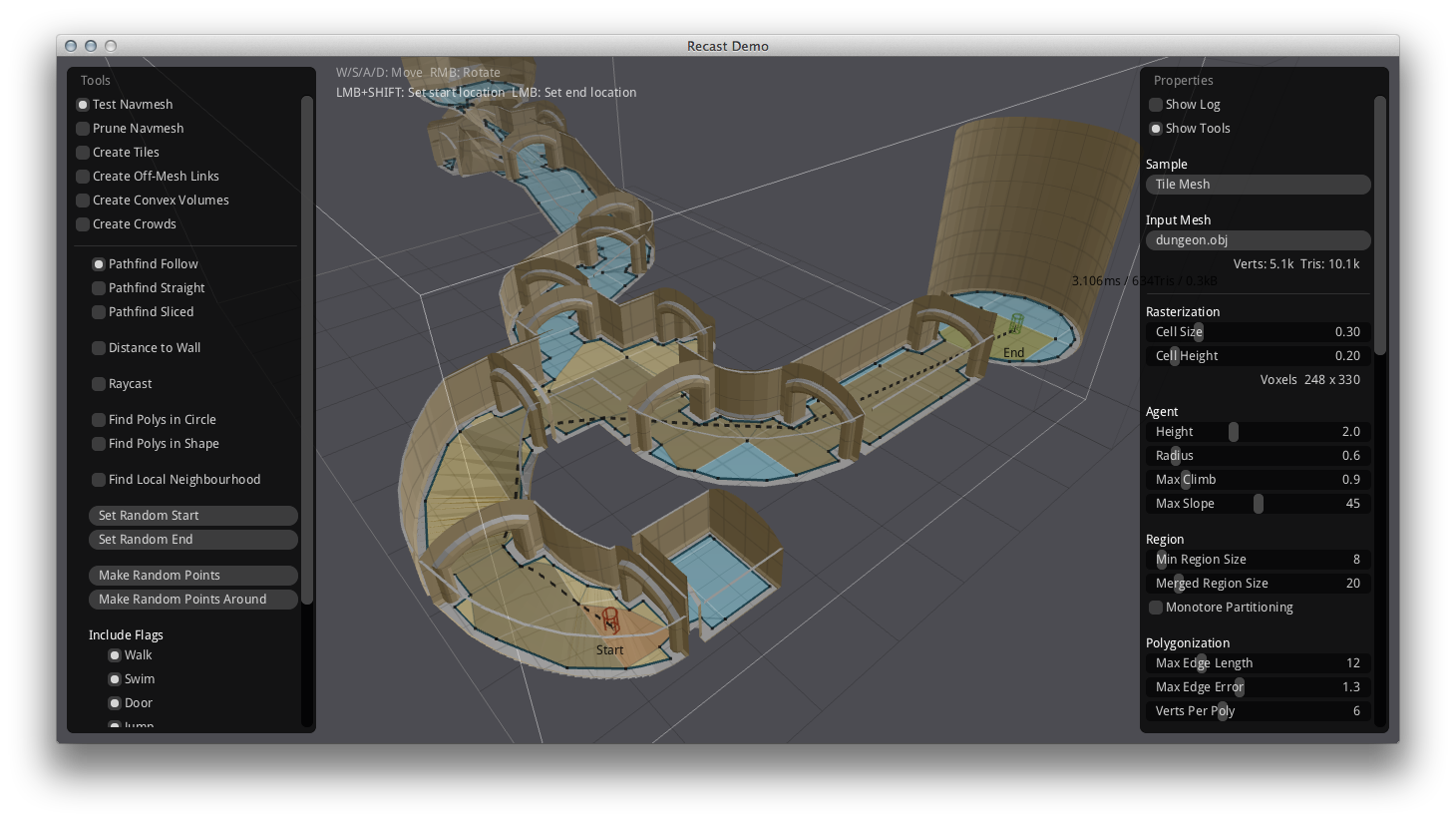

BigWorld 服务端介绍

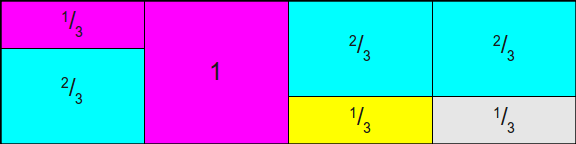

目前可以参考的服务端无缝大世界的资料只有BigWorld游戏引擎,使用这个引擎的游戏少之又少,其中最为知名的是坦克世界WorldOfTanks。根据其官方ppt6-years-of-bigworld-engine-evolution-caused-by-the-success-of-world-of-tanks,上面清楚的写着WorldOfTanks使用BigWorld引擎达到了一百万的ConCurrent User:

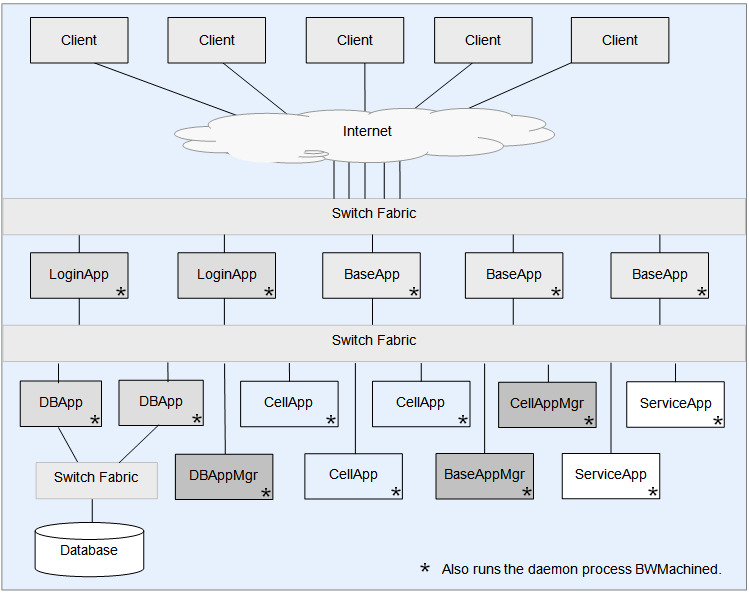

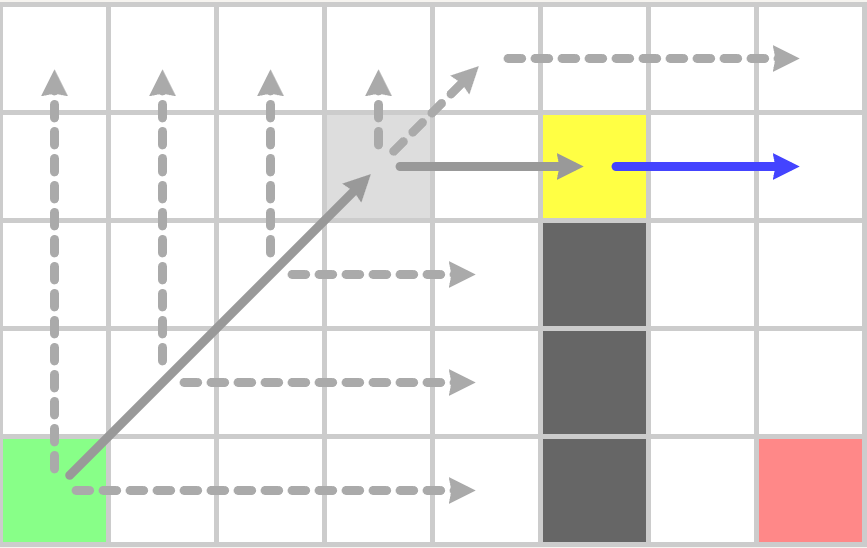

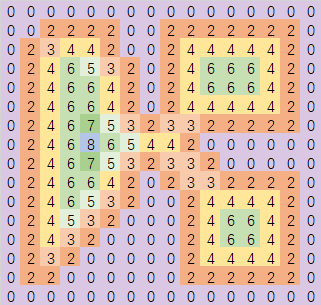

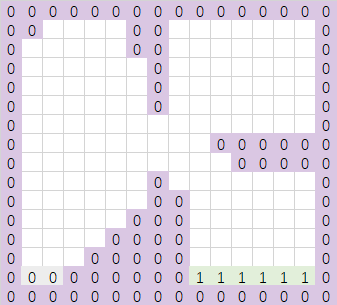

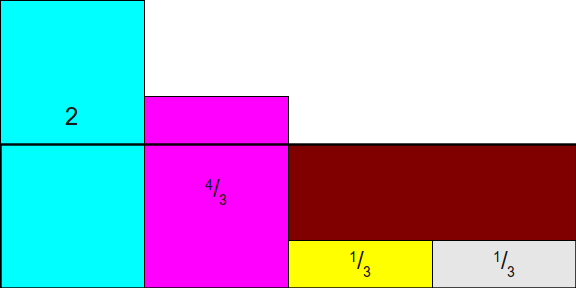

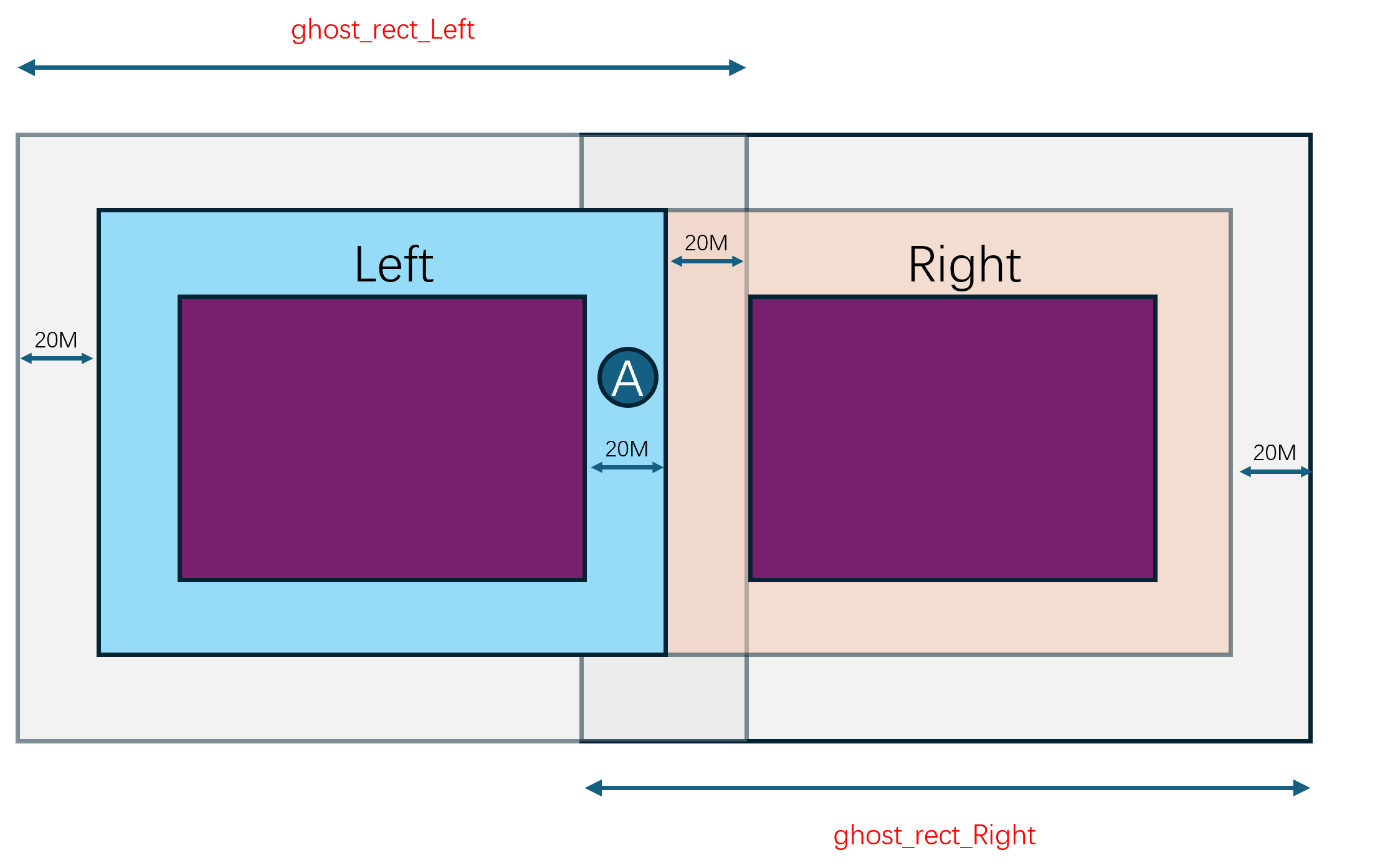

虽然使用这个引擎的游戏不多,幸运的是目前在Github上有这个引擎的开源代码,三年前公开在https://github.com/v2v3v4/BigWorld-Engine-14.4.1,我们可以通过这个公开源代码的版本来探究整体的服务端架构设计以及实现细节。下图就是bigworld服务端的整体架构:

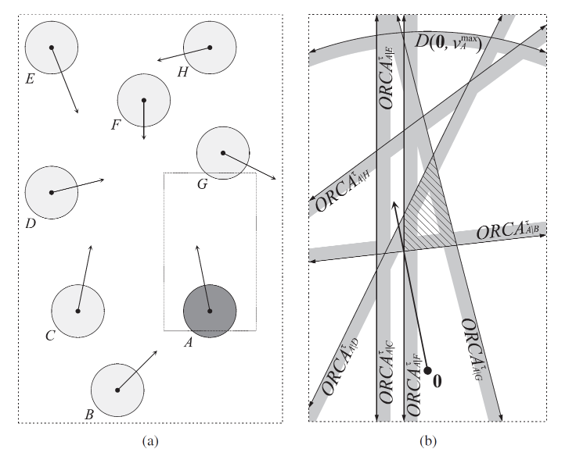

在这个架构图里,我们可以看到bigworld服务端主要由以下几种进程角色组成:

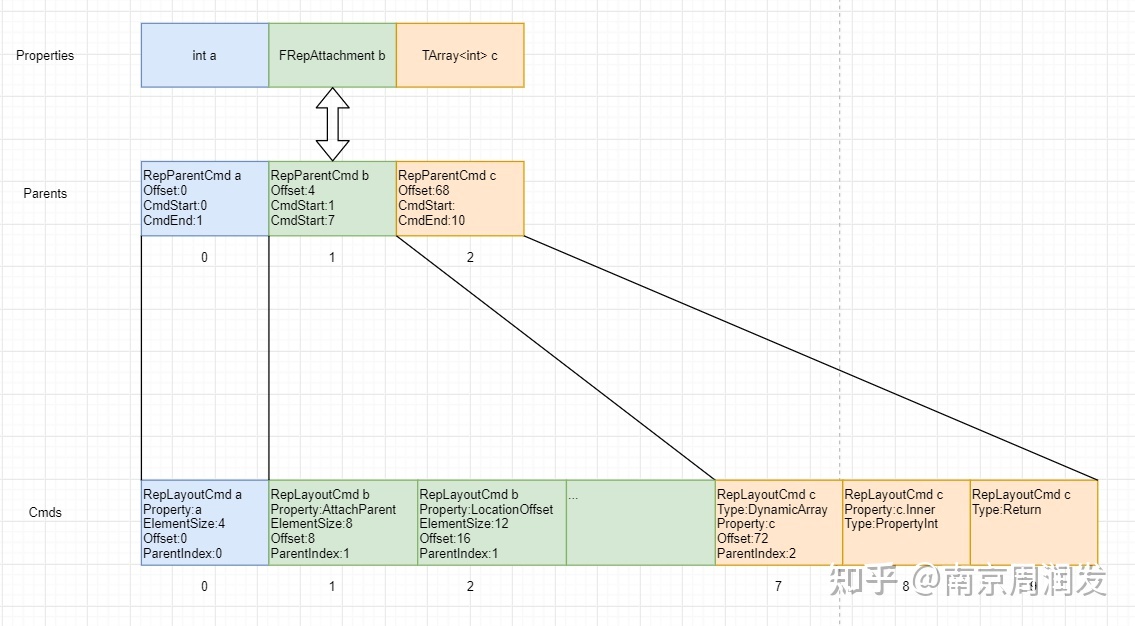

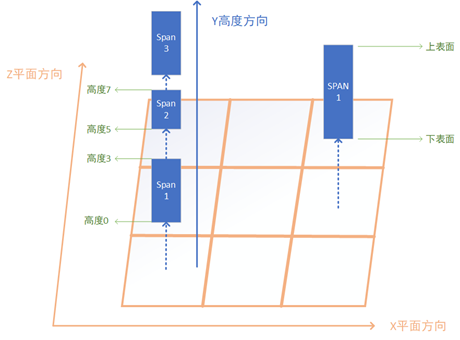

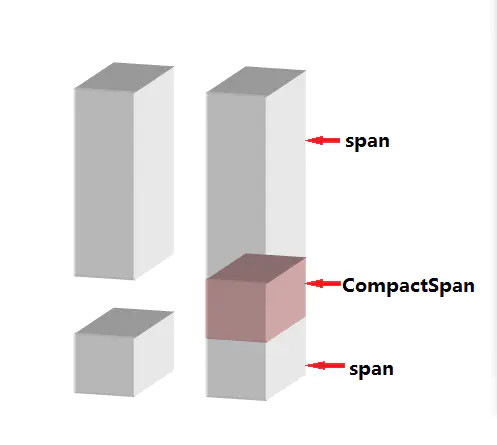

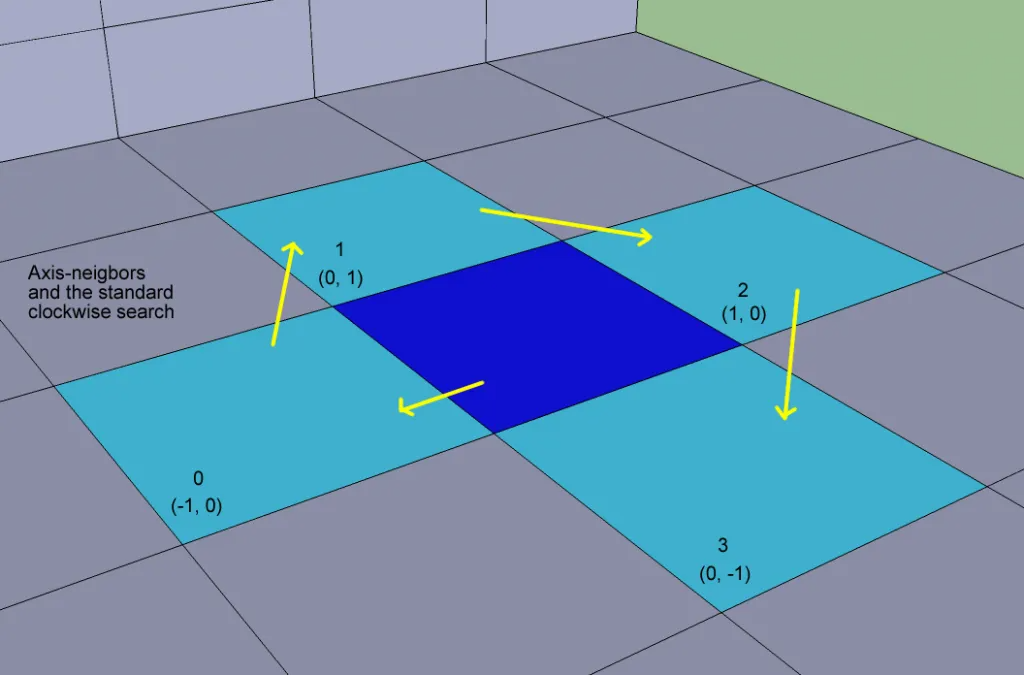

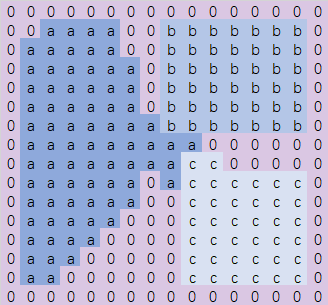

CellApp:场景服务器进程,每个进程负责管理一个或多个Cell。这里的每个Cell都对应一个分布式场景的矩形子区域。CellAppMgr:全局唯一的CellApp管理进程,负责维护所有CellApp的状态以及Cell的创建、销毁、迁移等操作。BaseApp: 实体服务进程,所有的可迁移实体都首先在BaseApp上建立,然后再根据其场景与位置信息往对应的CellApp上创建一个副本。同时对于玩家这种实体,BaseApp还会负责中转CellApp上的实体与对应客户端之间的消息交互。BaseAppMgr:全局唯一的BaseApp管理进程,负责维护所有BaseApp的状态以及BaseApp的创建、销毁、迁移等操作。LoginApp:登录服务器进程,负责处理玩家的登录、注册、验证等操作。DBMgr:全局唯一的数据库服务进程,负责代理所有对数据库的请求,。目前bigworld中使用的数据库是MySQL,但是DBMgr的设计是可以扩展到其他数据库的。

此外还有两个非常重要的进程角色没有在图中体现:一个叫做BWMachined进程,这个进程每个物理机上运行一个,其作用相当于消息总线和运维管理工具;另外一个叫做Reviver进程,这个全局只有一个,负责上述进程的心跳维持和崩溃时的重启。

在上述进程角色中,LoginApp和BaseApp是客户端可以直接连接的,其他进程则不允许客户端连接。因此官方说明书推荐LoginApp和BaseApp所在的物理机配置两个网卡,从而可以接入两个交换机:一个是外网交换机,负责将客户端的请求路由到对应的LoginApp或BaseApp上;另一个是内网交换机,负责将LoginApp或BaseApp上的请求路由到其他角色的进程上。

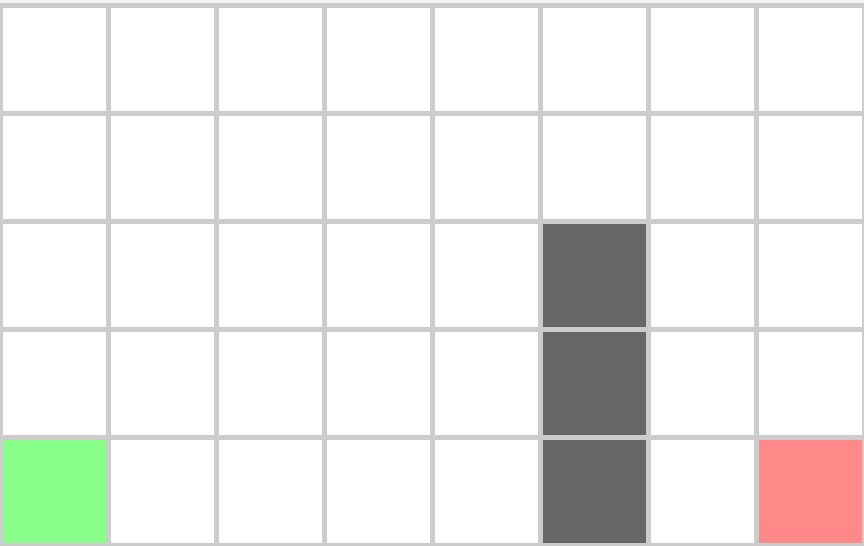

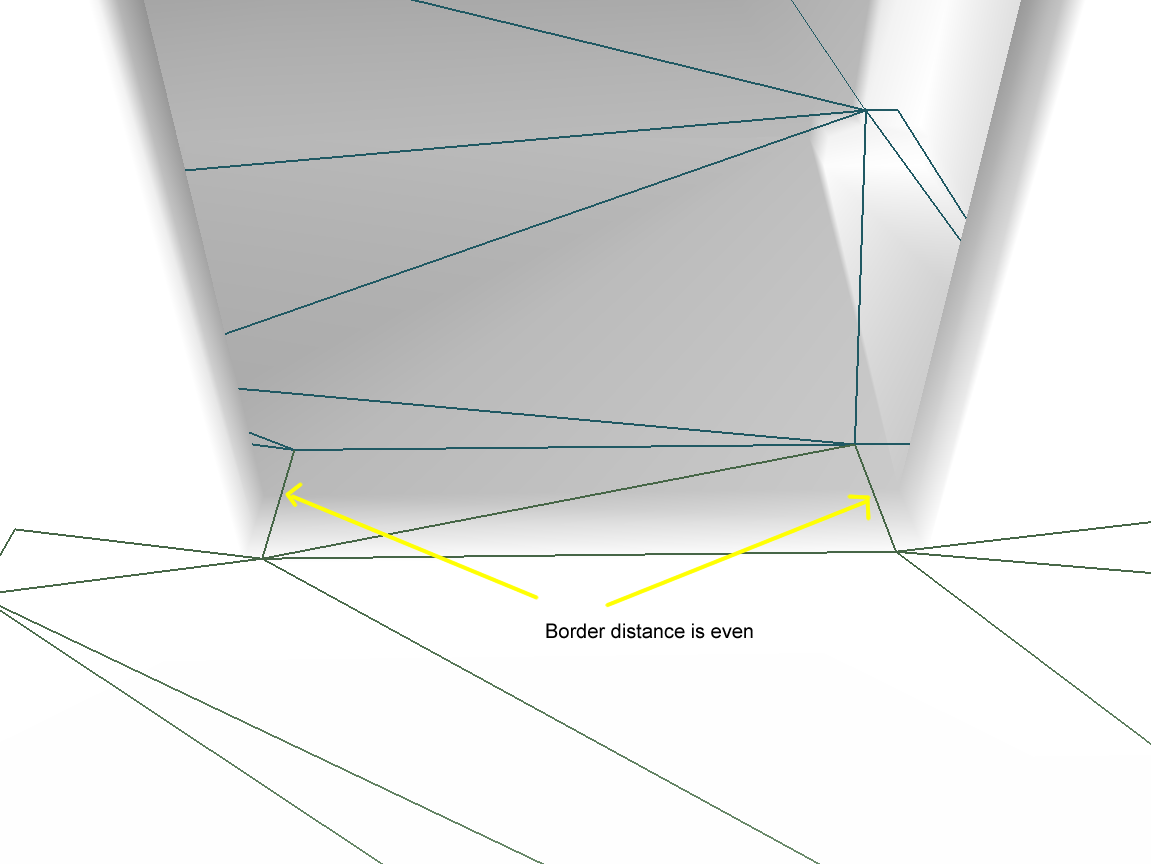

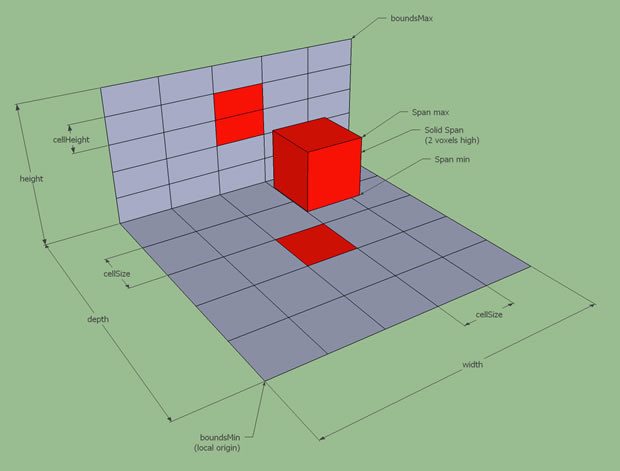

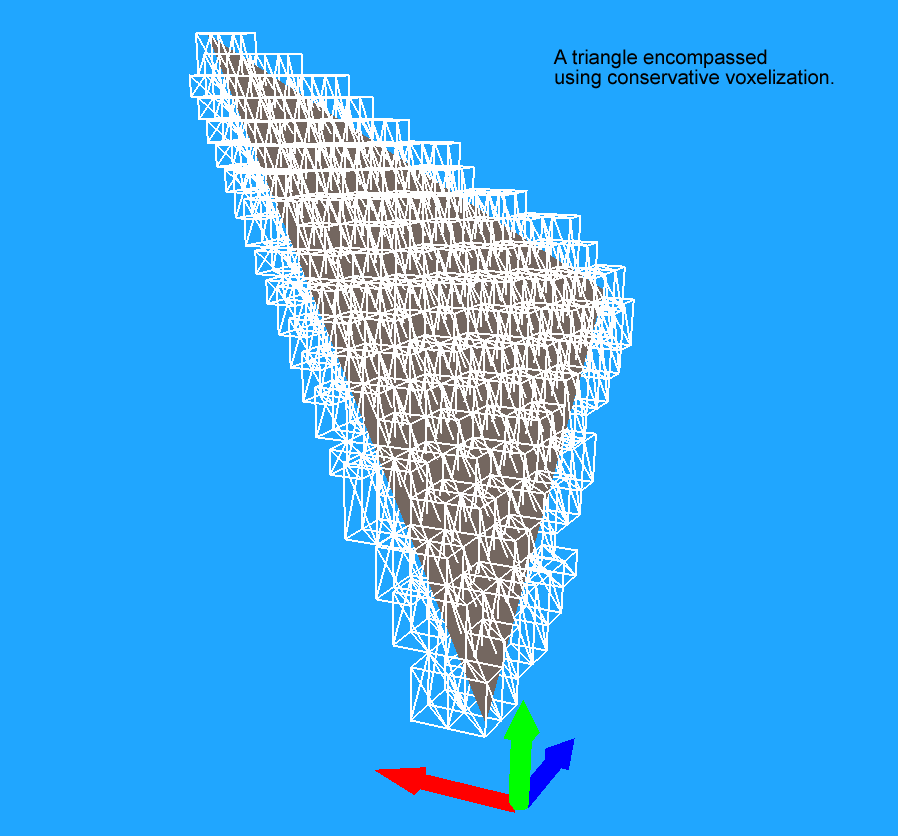

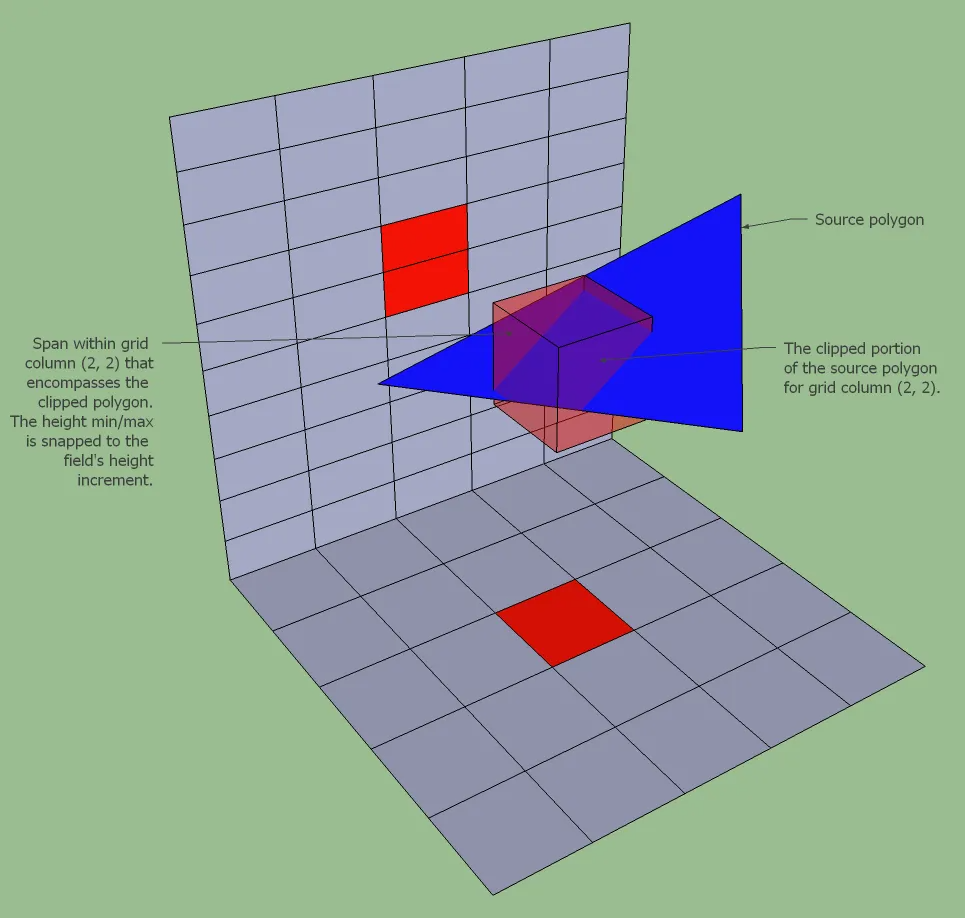

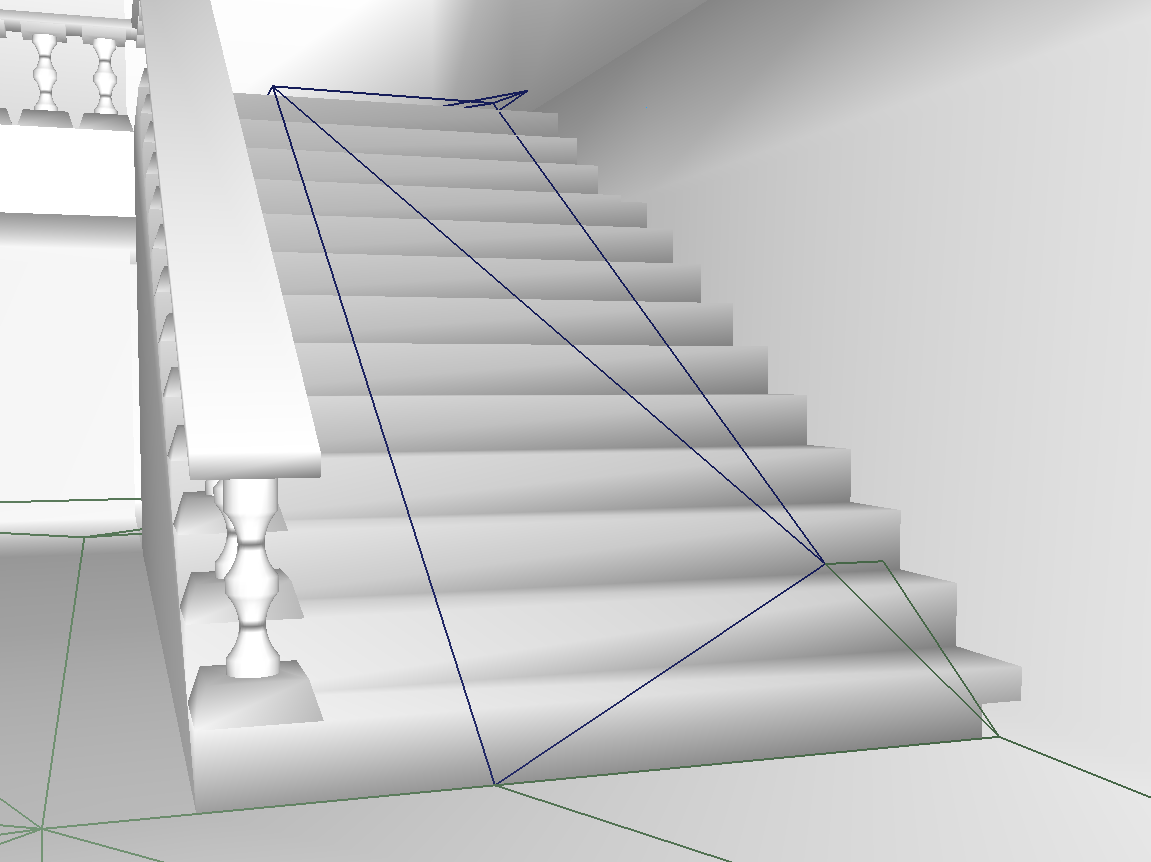

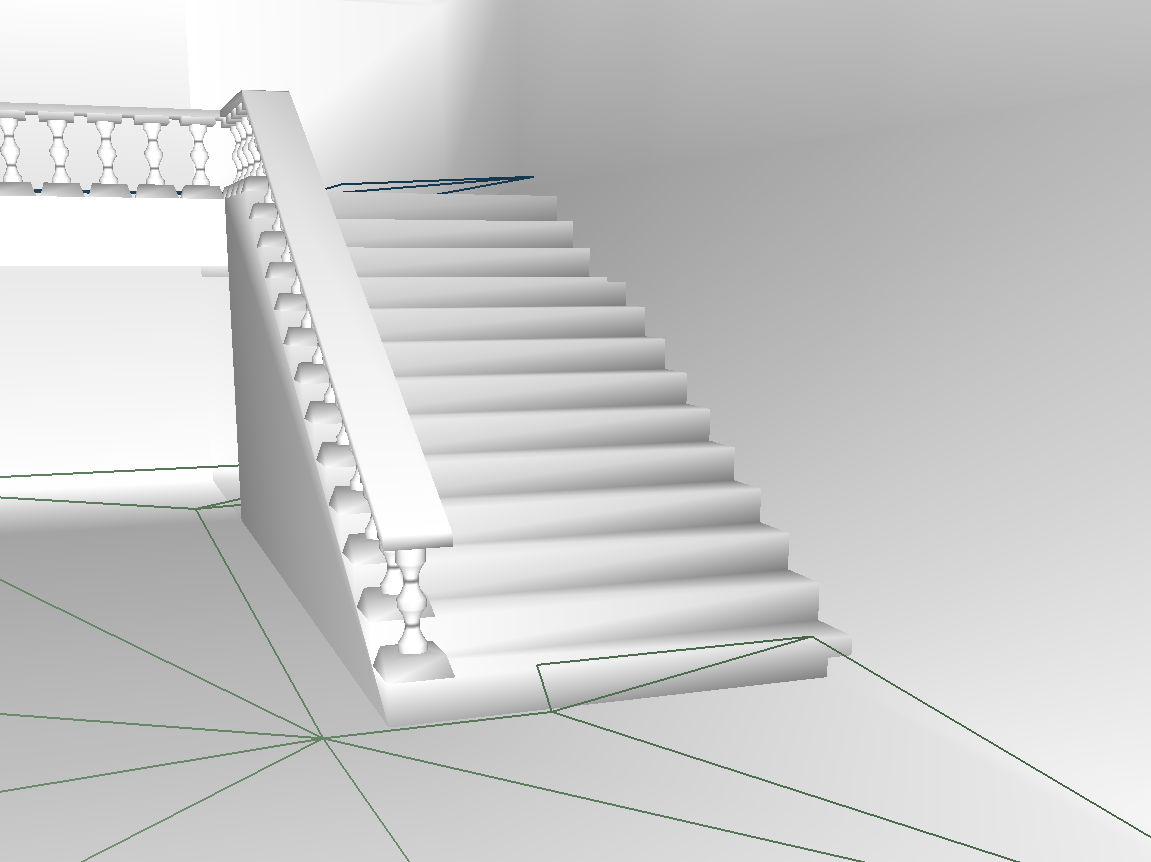

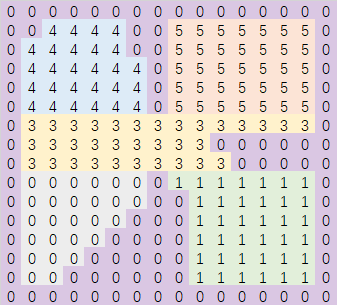

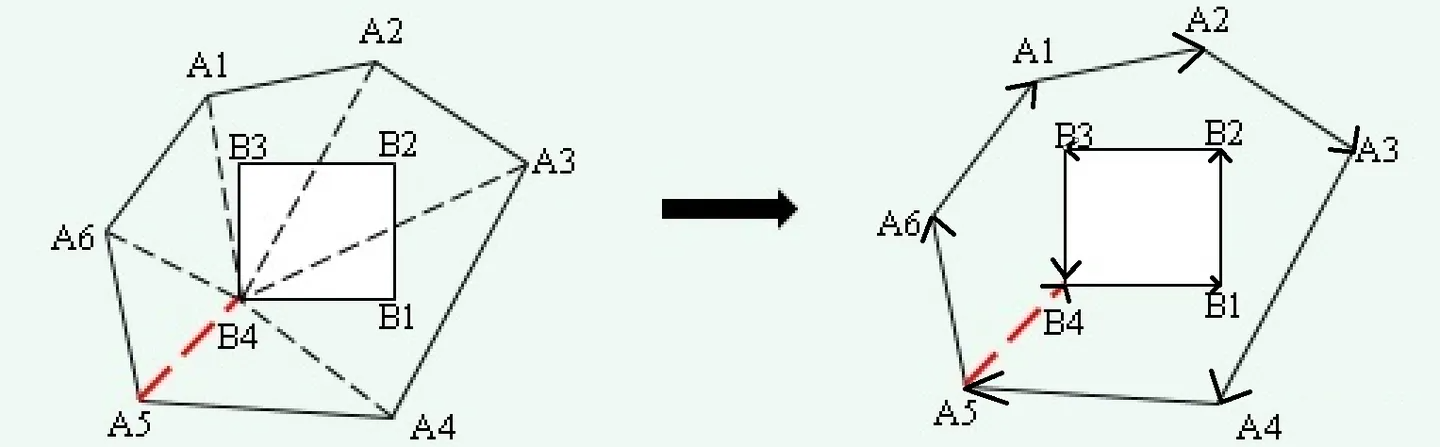

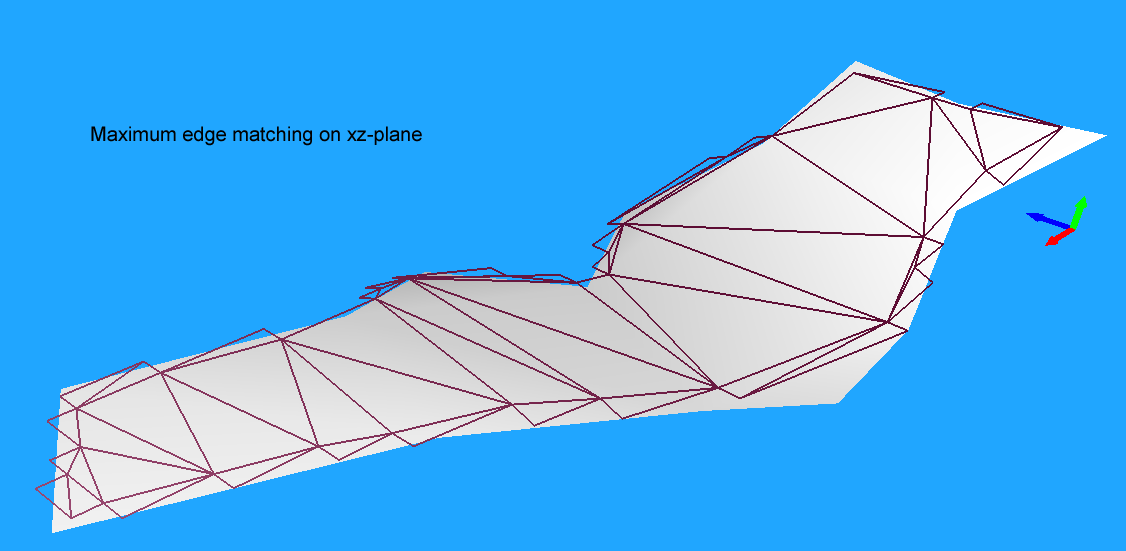

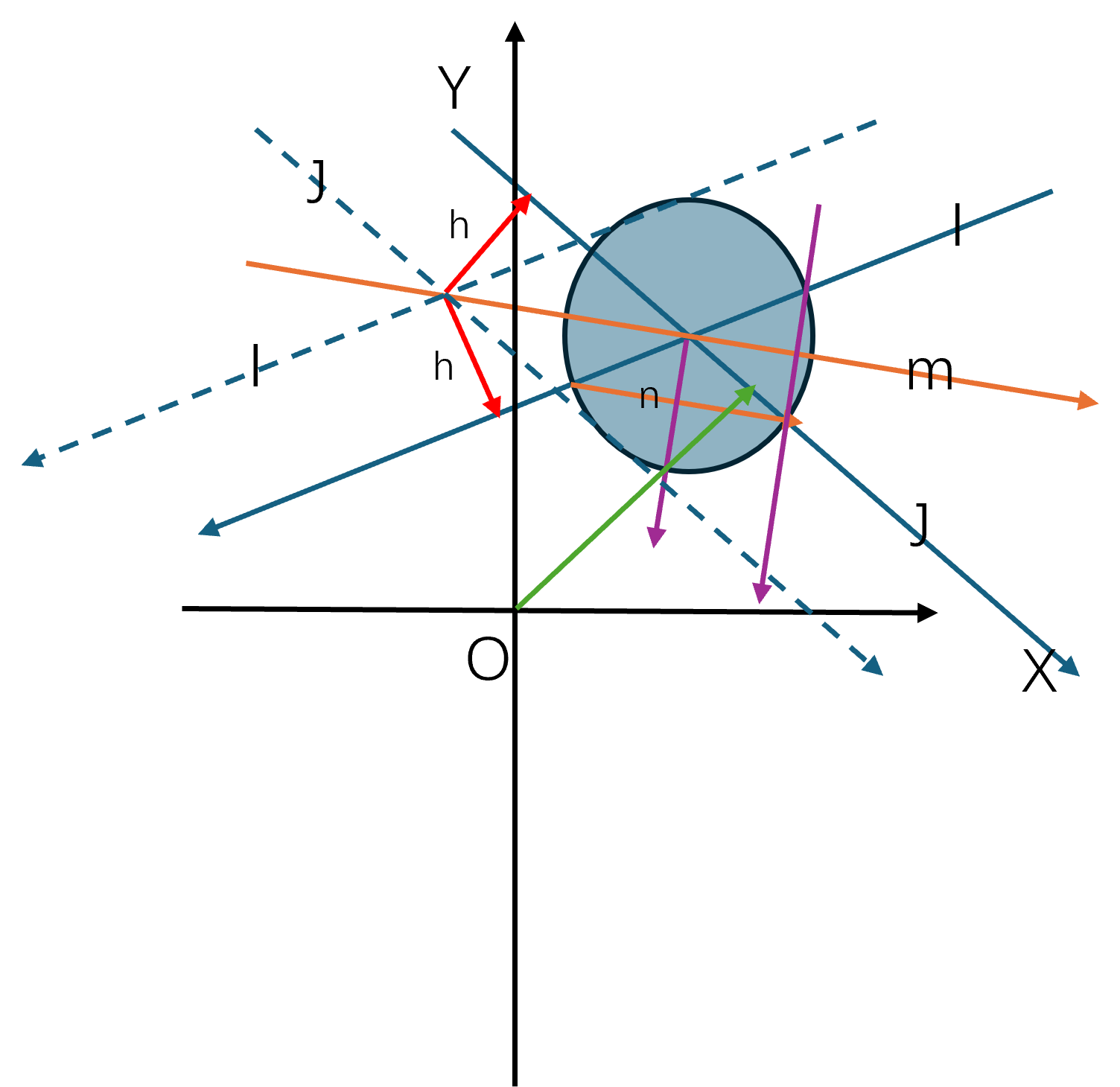

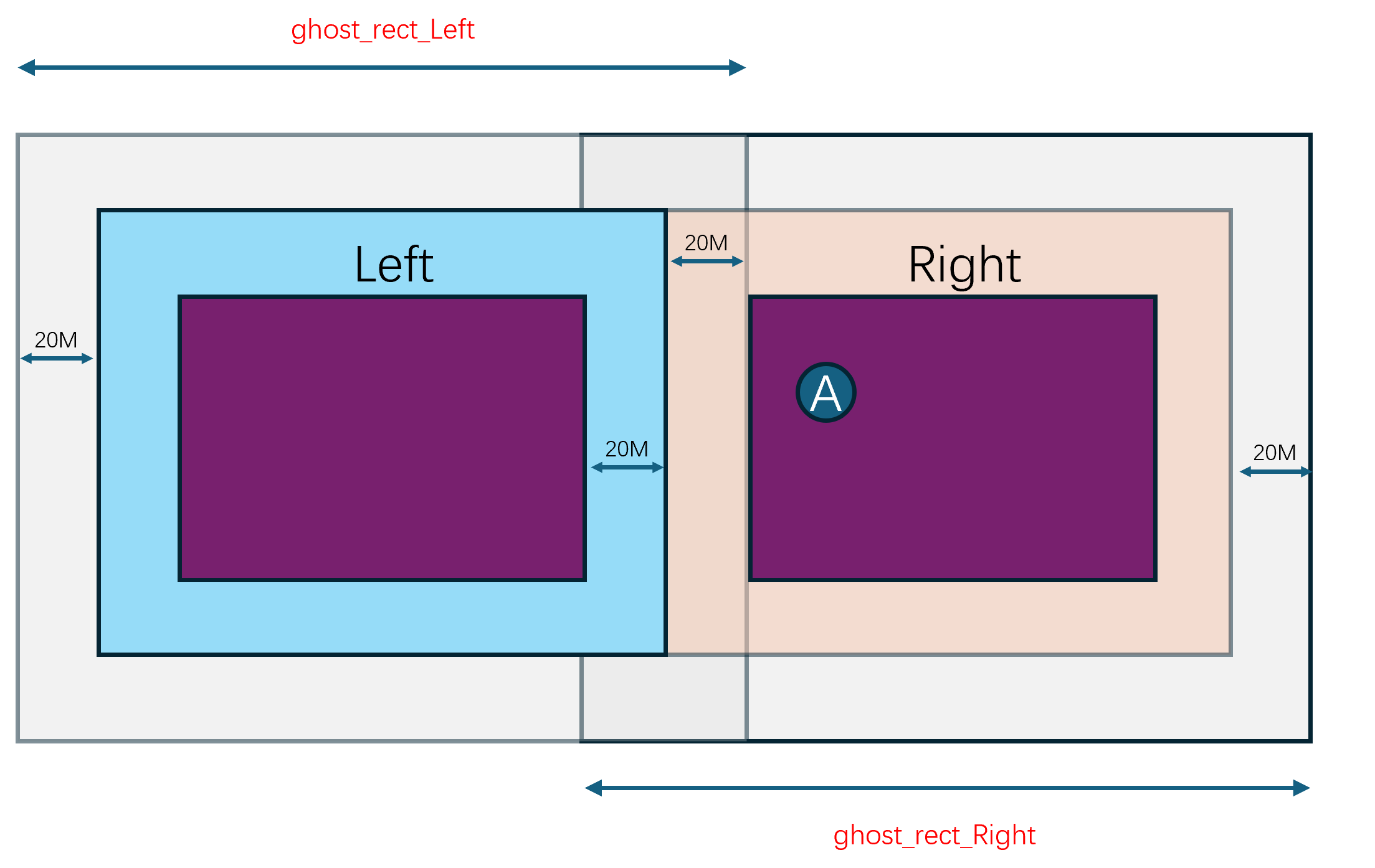

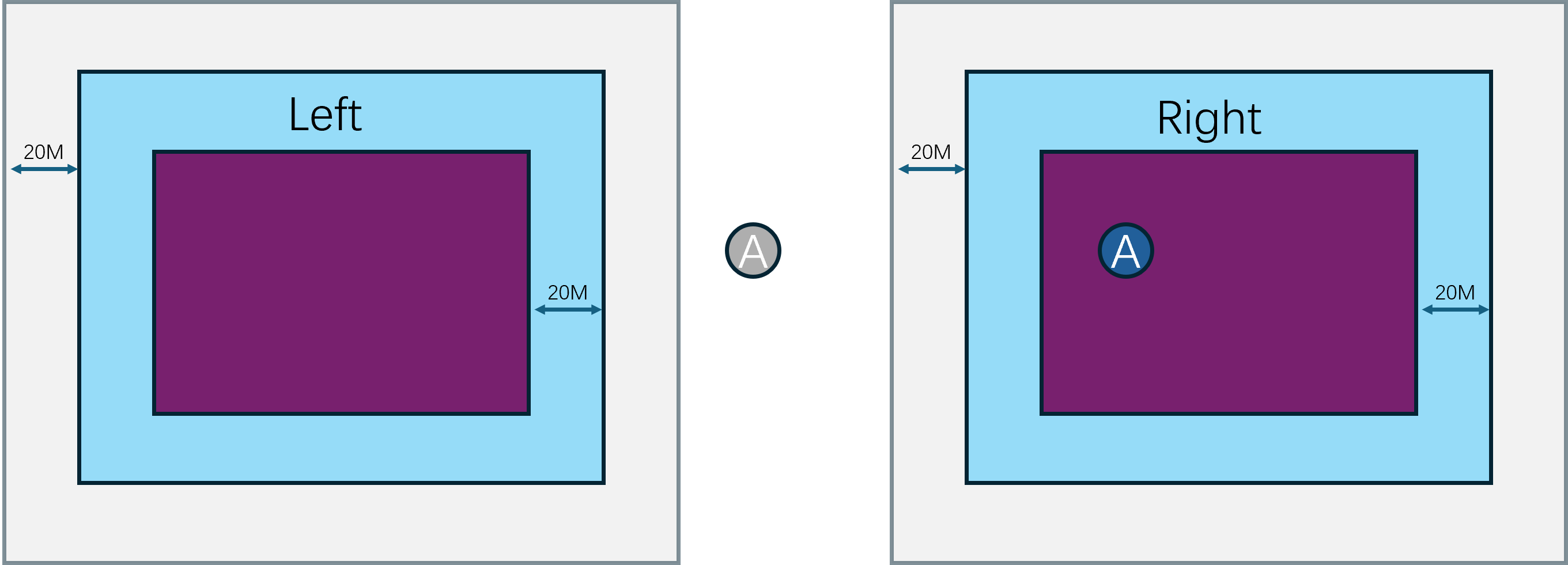

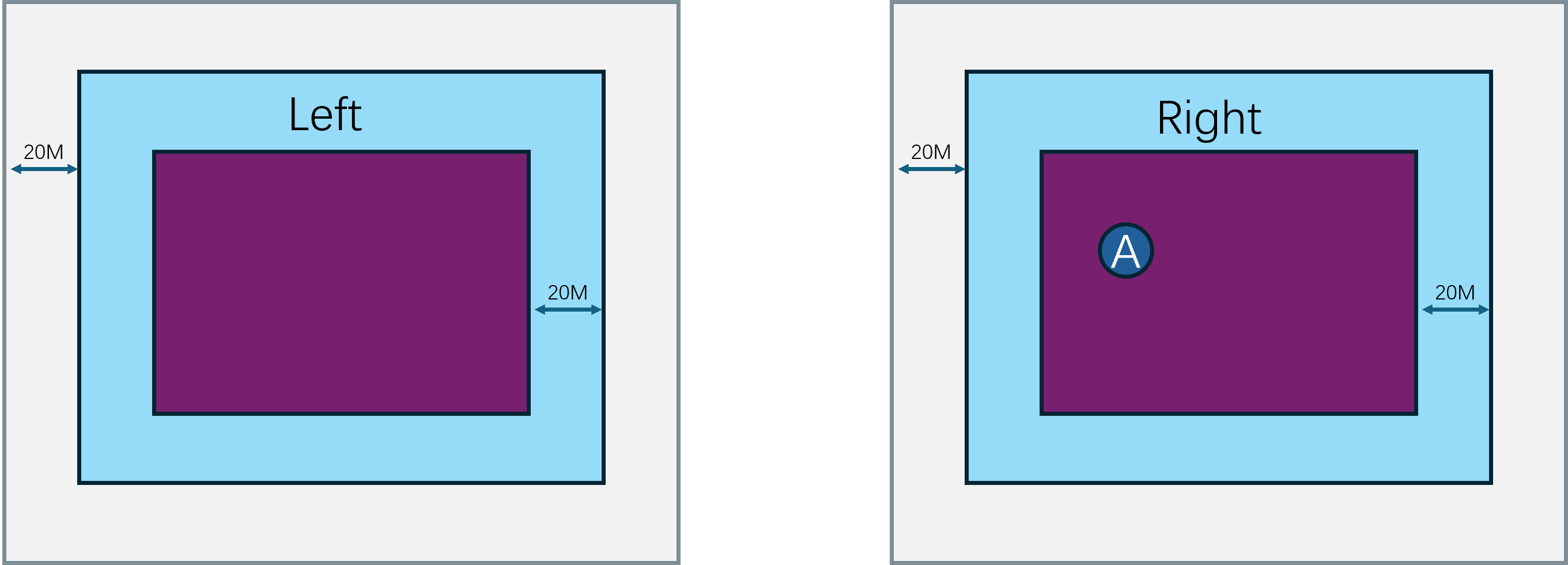

bigworld中使用一个Space类来表示表示一个完整地图,然后Space又根据负载情况动态分割成一个或多个矩形区域Cell。每个Cell负责Space的矩形区域是不会重叠的,且所有Cell的矩形区域的并集就是这个Space的完整区域。同时一个Cell的区域并不是固定的,会根据其相邻区域之间的负载分布情况来动态调整。全局唯一的CellAppMgr管理所有的CellApp,也就是场景服务器进程,一组服务器一般会有数十个CellApp。然后每个CellApp都会有不定个数的Cell在进程内执行。所以Space与Cell的相关信息都会经由CellApp上传到CellAppMgr上,通过这些信息就可以维护Cell的创建、销毁以及边界调整。

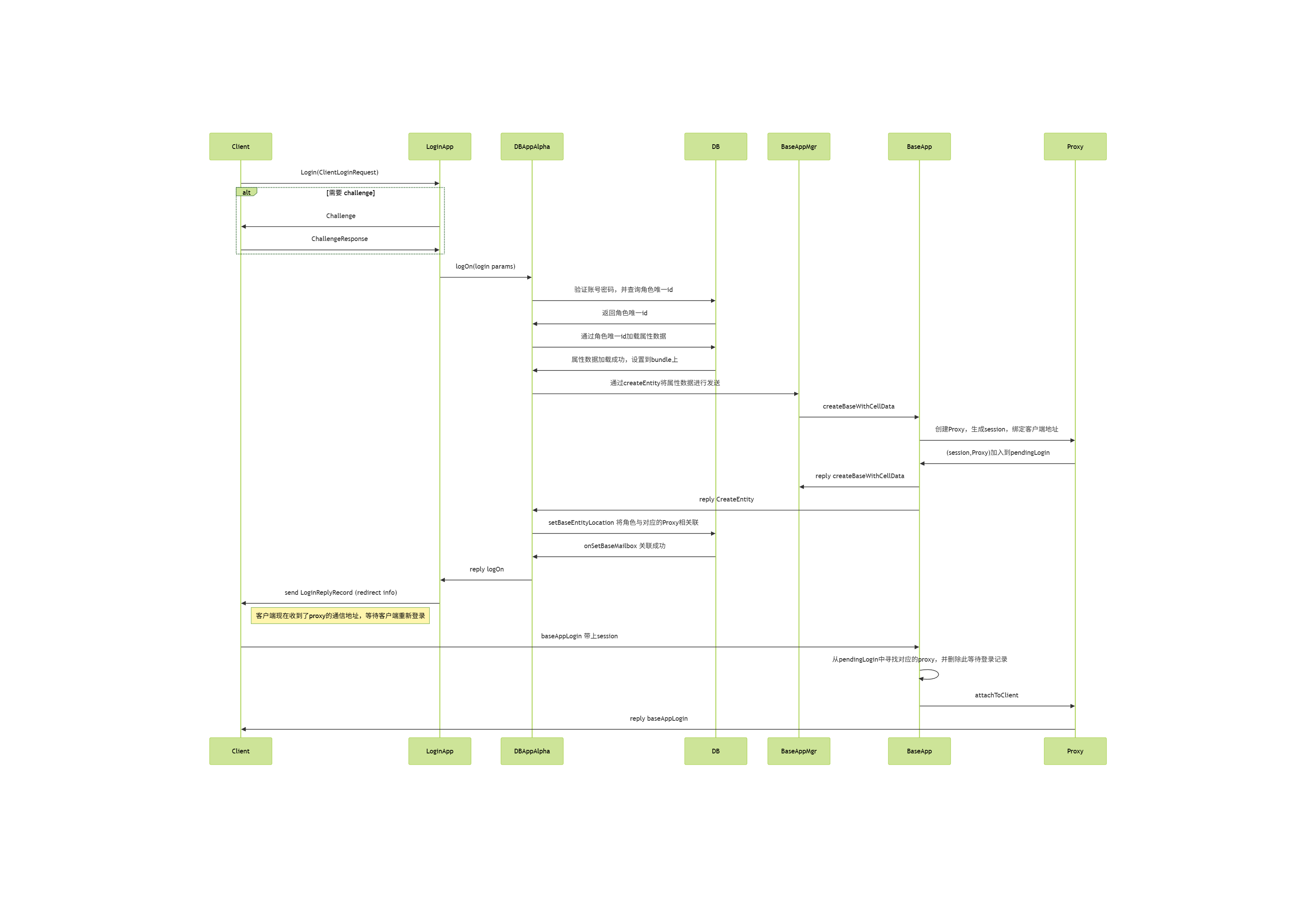

当一个客户端想连接到游戏服务器时,涉及到的流程以及进程角色如下:

- 首先会连接到

LoginApp上。LoginApp会验证客户端的登录信息, - 如果验证成功且通过

DBMgr从数据库里加载出来了对应的玩家数据,然后往BaseAppMgr发送这个玩家数据来请求创建对应实体。 BaseAppMgr接收到这个请求之后就会筛选出合适的BaseApp,并通知选定的BaseApp来创建一个proxy对象,代表对应的玩家。BaseApp创建proxy对象完成之后,就会反向通知BaseAppMgr操作完成,然后BaseAppMgr又会通知回LoginApp。- 此时

LoginApp就可以通知原来的客户端当前登录操作已经成功,且会附带上对应的proxy对象的地址。

客户端接收到登录成功的返回之后,就会以这个proxy对象地址去连接对应的BaseApp,请求将这个proxy对象绑定到当前的客户端连接,绑定的同时会下发这个玩家所有的客户端可见属性,让客户端创建一个对应的对象。

但是此时还没有进入场景,如果玩家想进入场景与其他玩家进行互动,流程链依然很长:

- 首先客户端发送一个进入场景的请求到

BaseApp上的对应proxy对象。 proxy对象就会以此为基础构造一个进入场景的请求,里面填充场景编号、位置数据以及当前proxy对象属性里的Cell可见属性,并将这个请求发送到CellAppMgr。CellAppMgr接收到这个请求之后就会根据传入的场景编号获取对应的Space, 然后再根据出生位置来计算出覆盖这个区域的Cell,并将这个进入场景的请求发送到这个Cell所在的CellApp。CellApp找到对应的Cell后,就会以请求里携带的玩家的属性数据来创建一个真正的RealEntity,并绑定proxy所在的通信地址为数据下行通道。创建成功之后会将这个RealEntity的唯一标识符返回给CellAppMgrCellAppMgr再附带这个Cell的地址返回给proxy对象。- 当

proxy对象接收到对应的RealEntity的地址之后,就会绑定对应RealEntity的通信地址为数据上行通道。

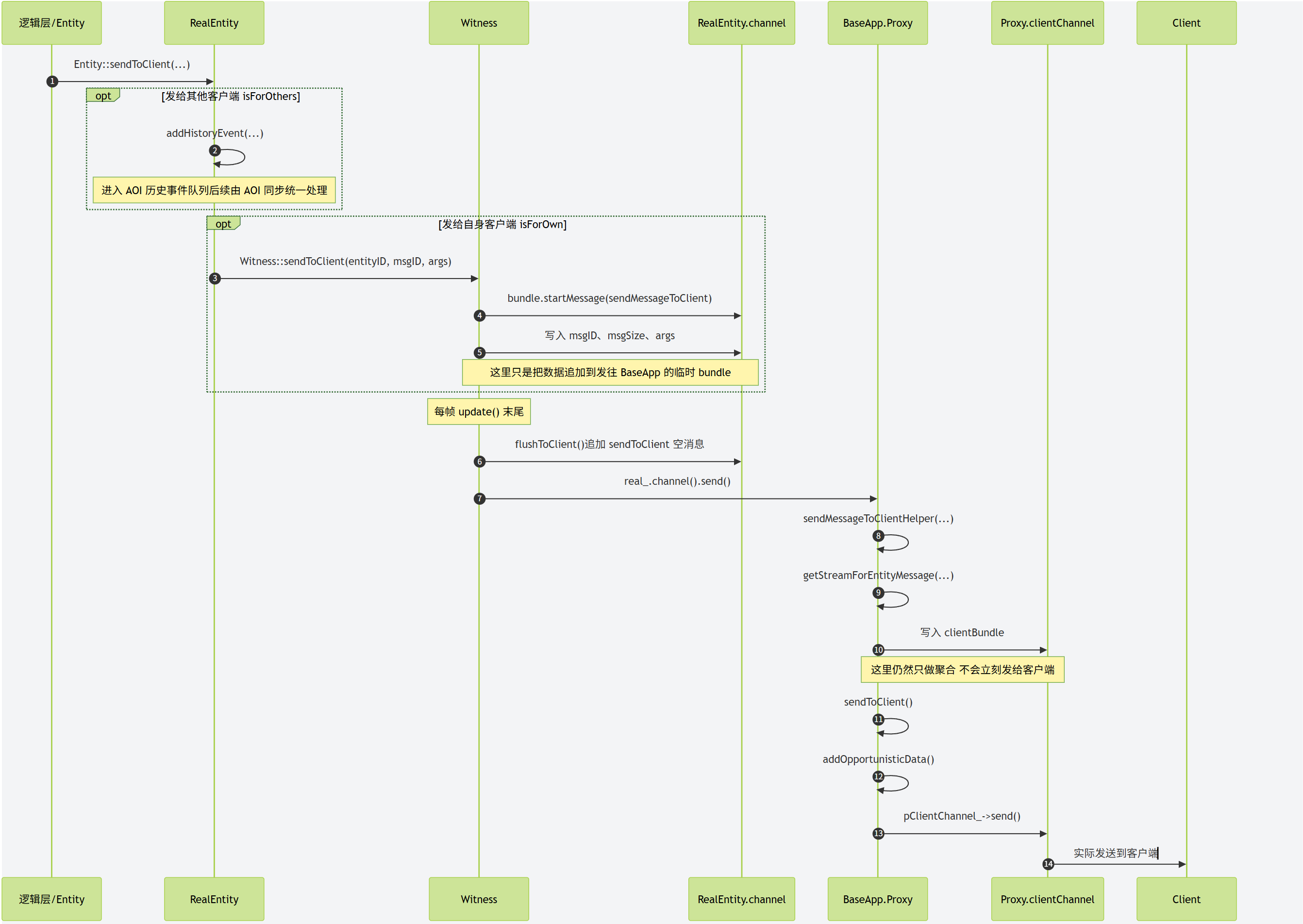

当RealEntity需要给对应的客户端发消息的时候,会先通过与对应proxy之间的通道来传递消息, proxy接收到消息之后,解析发现这是一个发往客户端的消息,就会转发到绑定的客户端连接。同时如果客户端的玩家对象想往对应的RealEntity发送消息,则也需要通过proxy进行中转。

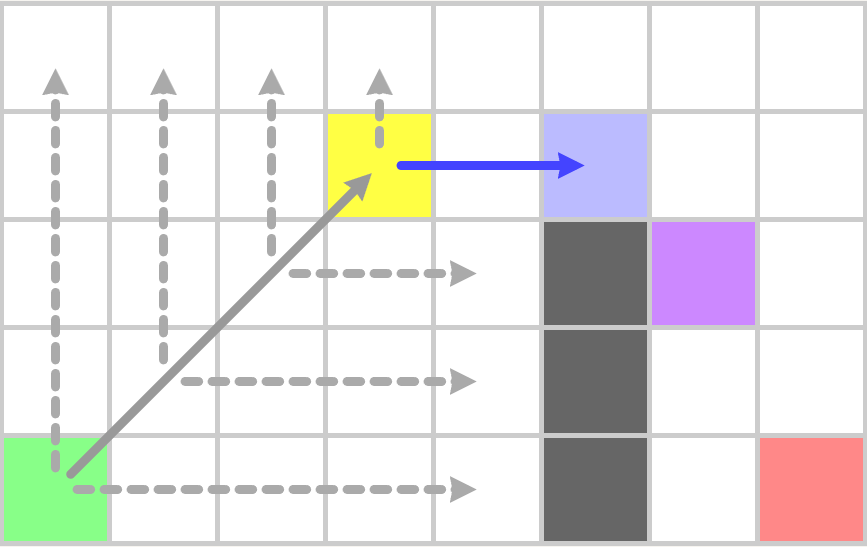

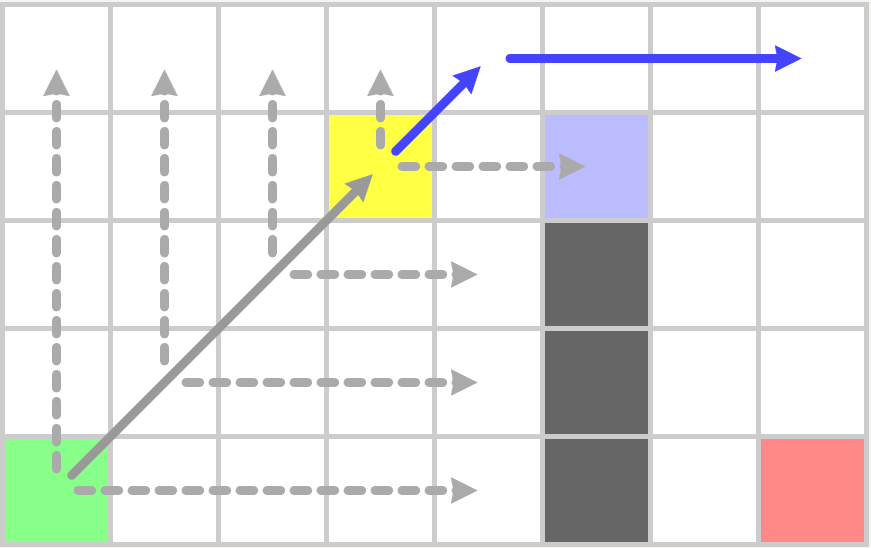

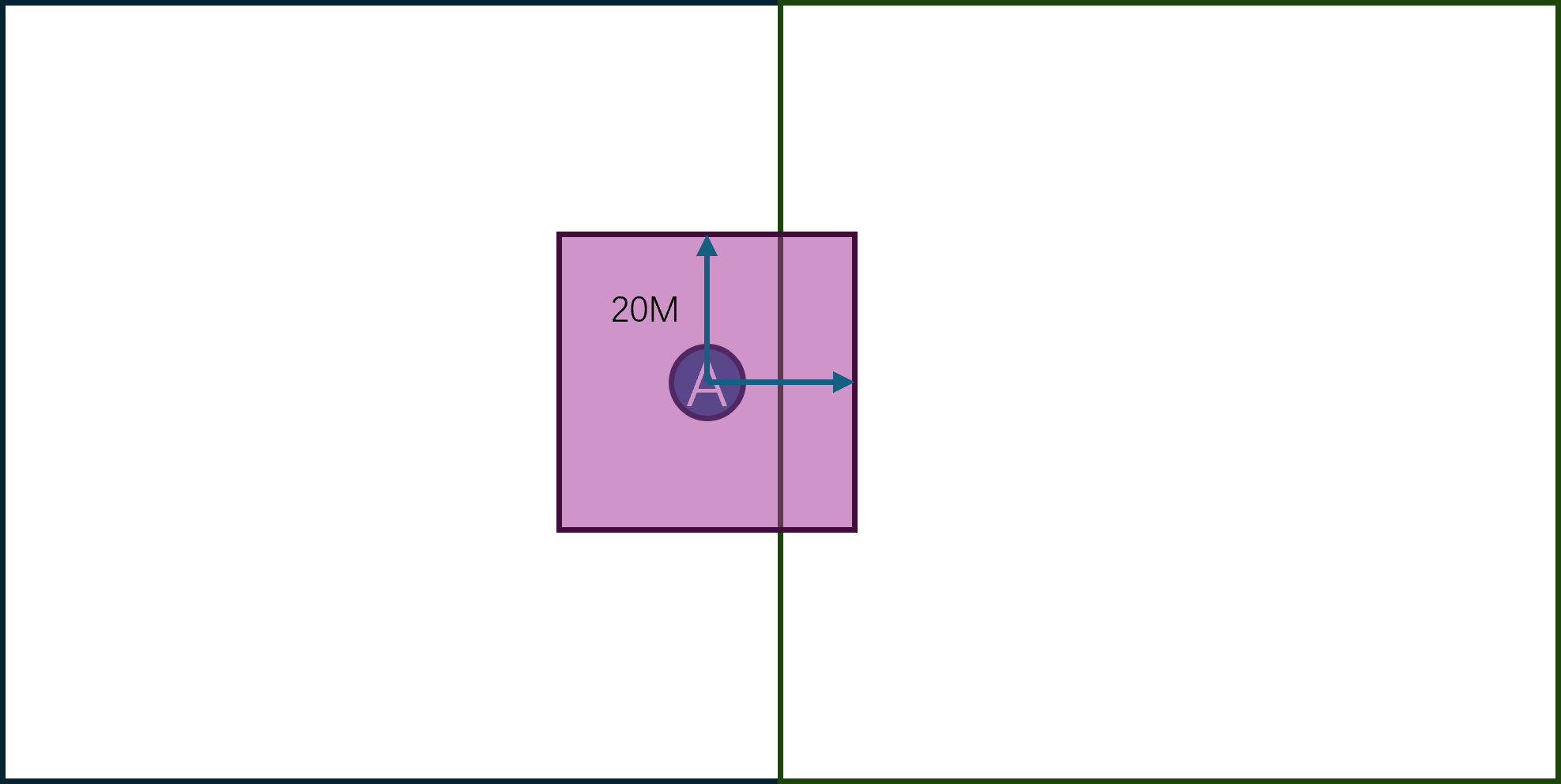

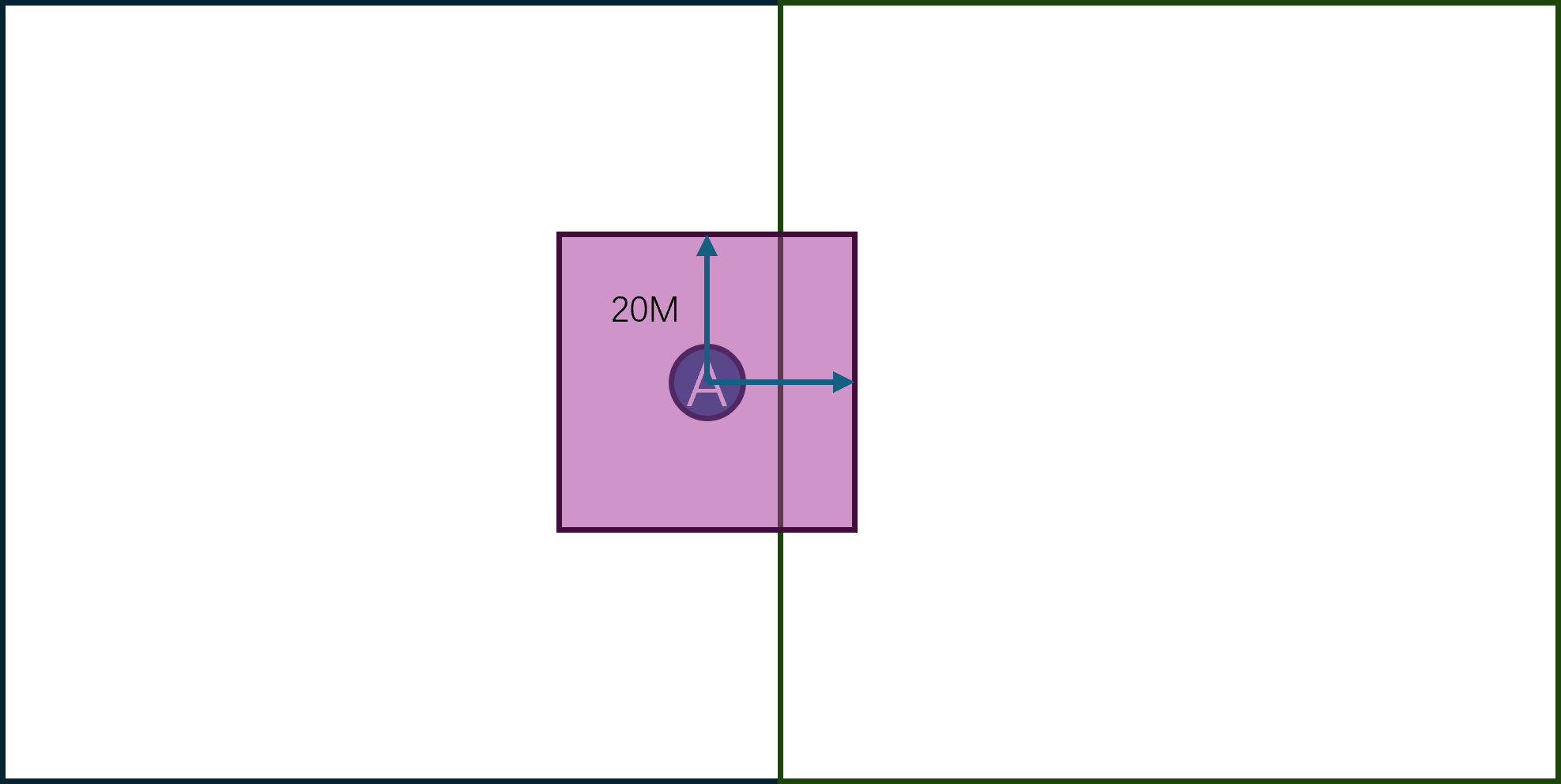

由于Cell的区域是动态调整的,所以Cell之间的边界是会发生变化的,同时玩家又是可以任意移动的,所以玩家的RealEntity并不是一直绑定在同一个Cell上。当一个RealEntity绑定到一个新的Cell时,就会通知新Cell来创建一个新的RealEntity,同时老的RealEntity退化为一个GhostEntity,并通知对应的proxy对象切换上行通道的地址为新的RealEntity。一个RealEntity上发生的所有客户端可见属性的变化都会广播到其管理的GhostEntity集合,同时一个RealEntity下发的其他Entity的状态数据不仅包括周围的RealEntity,还包括周围的GhostEntity。如何通过RealEntity/GhostEntity来将分布式的场景营造成一个支持无缝迁移的统一逻辑场景复杂度其实挺高的,这也是Bigworld的核心技术所在。

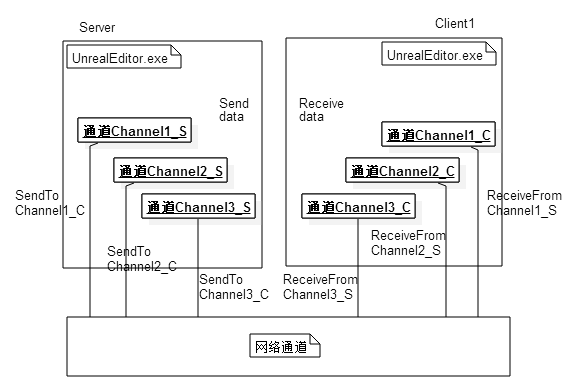

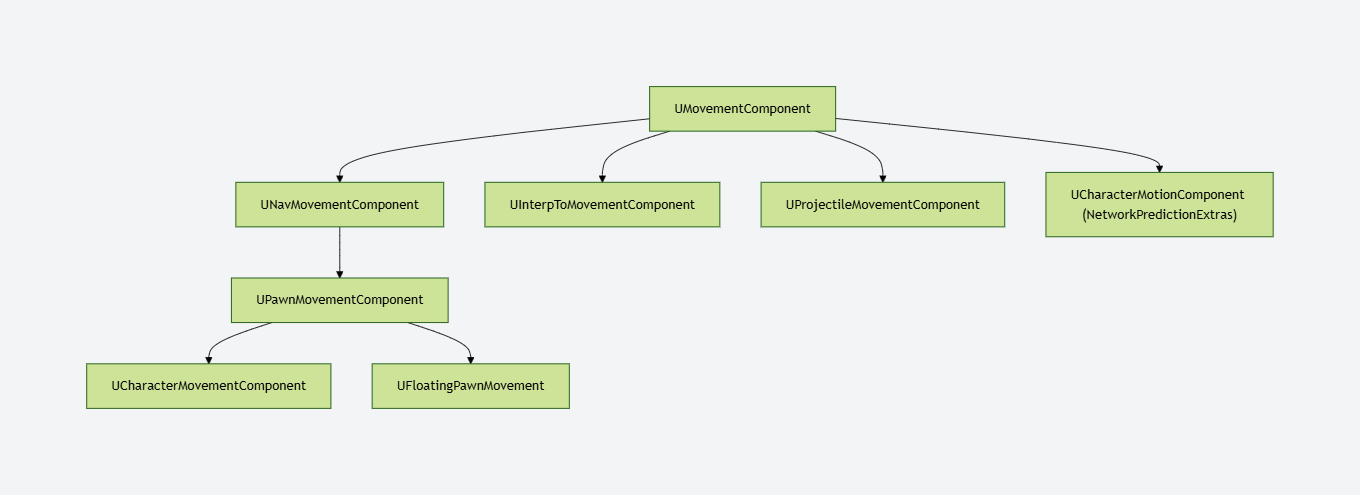

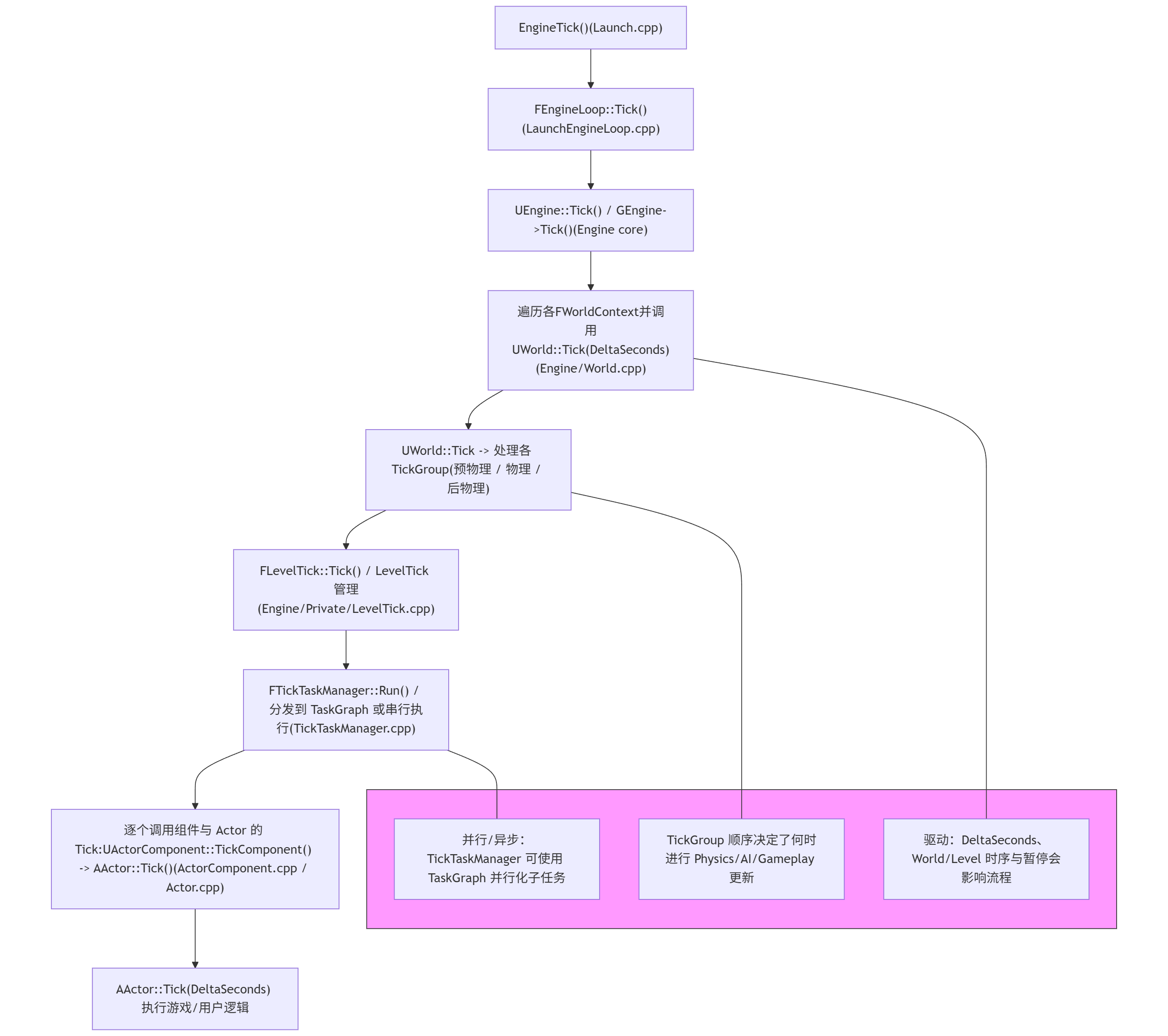

Unreal Engine 服务端介绍

Unreal Engine(后文中将简称UE)是由Epic Games开发的商用游戏引擎,最早于1998年发布,之后更新了多个版本。在2014年发布了虚幻四(后文简称UE4),并在Github上公开了其源代码,这种开放使用的策略大大的加速了UE的推广。然后在2022年进一步的发布了UE5,其黑客帝国Demo令人印象深刻,使得UE5次世代大世界这个词组成为了一个不可分割的整体。使用UE开发的游戏有很多,近年来比较知名的有PUBG、堡垒之夜、黑神话悟空、三角洲行动等。而且UE不仅仅能做游戏,还在虚拟现实、增强现实、电影特效、建筑可视化等各种实时互动内容制作里大显身手。

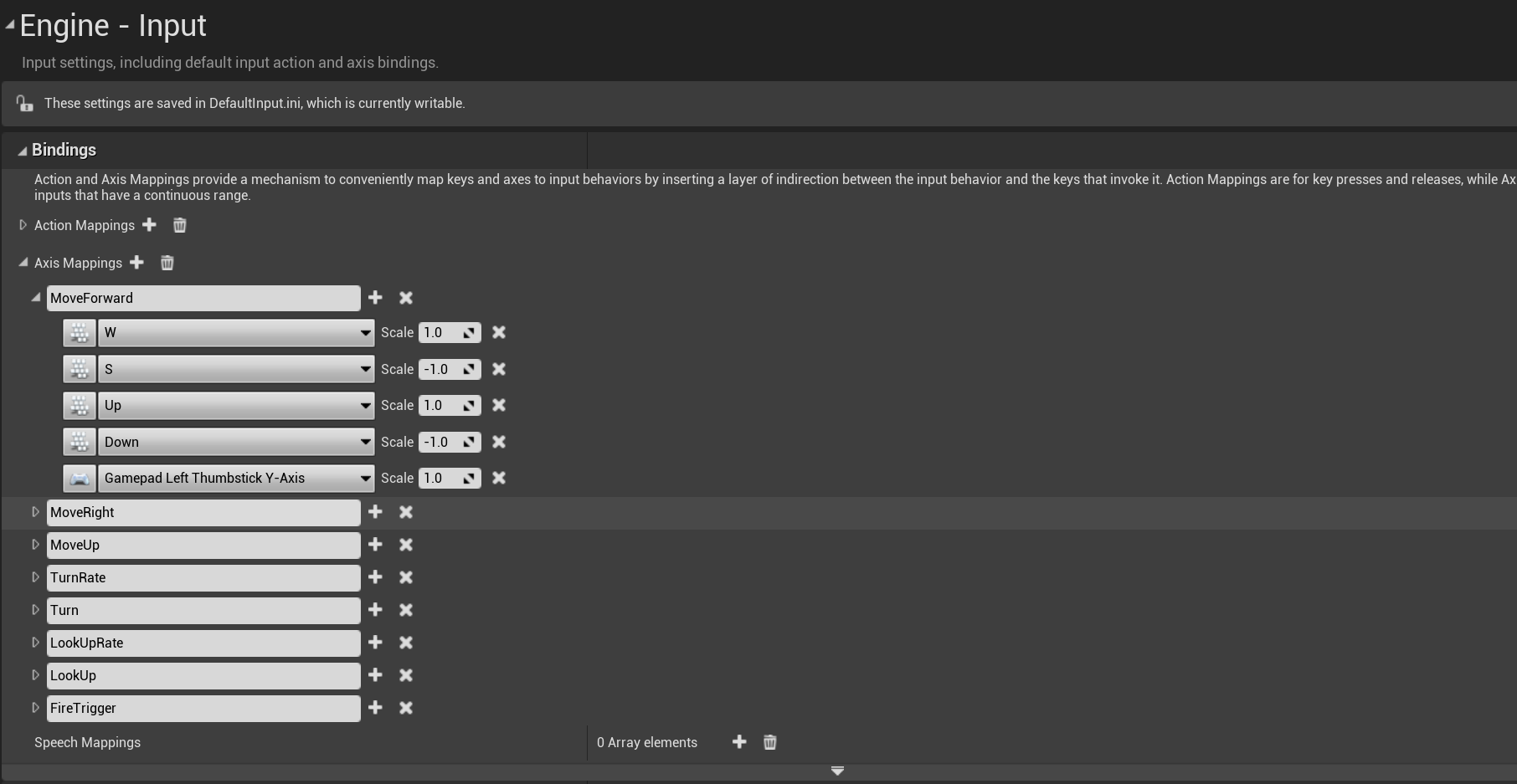

UE引擎有一个跟其他引擎非常不一样的地方,就是他的服务端与客户端源代码是混合在一起的,打包的时候使用同一份代码进行打包。引擎里自动就维护好了客户端与服务端之间的通信协议和状态同步机制,不需要去额外的做开发工作,这种设定下打包出来的服务器叫做专属服务器Dedicated Server,简称DS。如果需要在运行时区分客户端与服务端,那么就需要在代码里进行判断。以官方UE4样例项目ShooterGame的一个函数AShooterCharacter::PostInitializeComponents为例,运行时可以通过GetLocalRole()来判断当前进程是否有这个AShooterCharacter的控制权,然后GetNetMode()这个接口来判断当前进程的角色是客户端还是服务端:

void AShooterCharacter::PostInitializeComponents()

{

Super::PostInitializeComponents();

if (GetLocalRole() == ROLE_Authority)

{

Health = GetMaxHealth();

// Needs to happen after character is added to repgraph

GetWorldTimerManager().SetTimerForNextTick(this, &AShooterCharacter::SpawnDefaultInventory);

}

// 省略一些代码

// play respawn effects

if (GetNetMode() != NM_DedicatedServer)

{

// 省略一些代码

}

}

更为厉害的是在编辑器内可以通过不同的命令行参数来分别启动客户端与服务端,这样可以非常方便的在编辑器里进行开发与测试。这样前后端代码一体化开发的方式,再加上UE引擎自带的蓝图(Blueprint)这个图形化编程工具,极大提升了非程序人员对UE引擎的接受度。

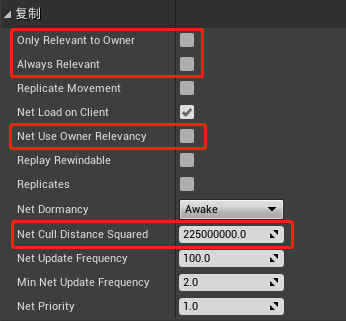

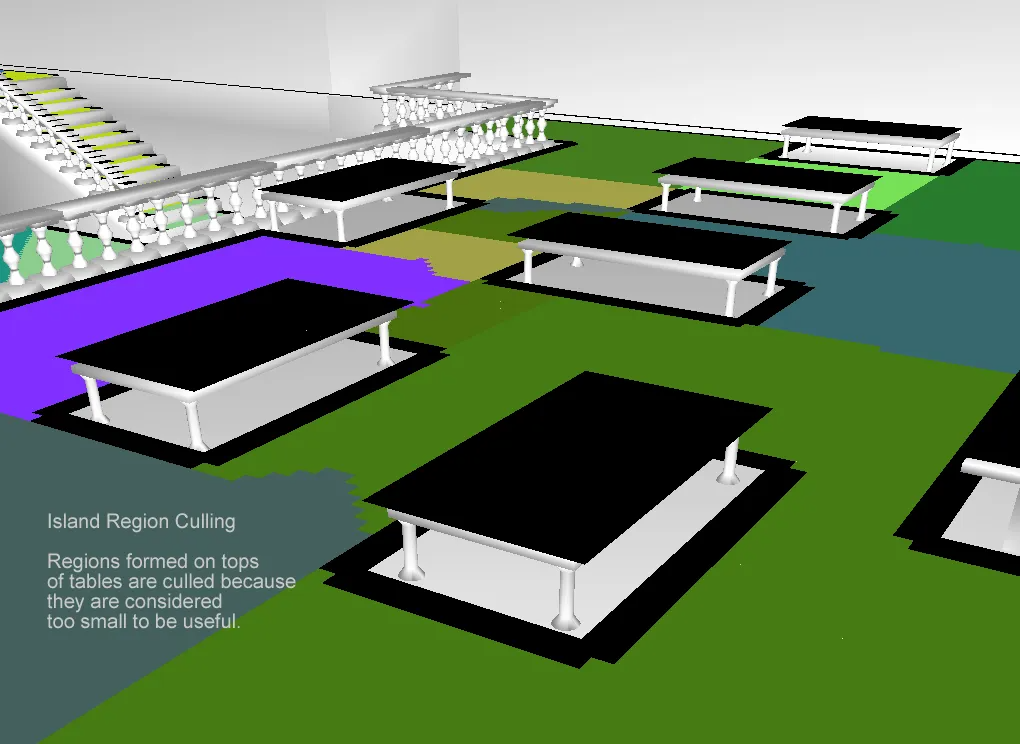

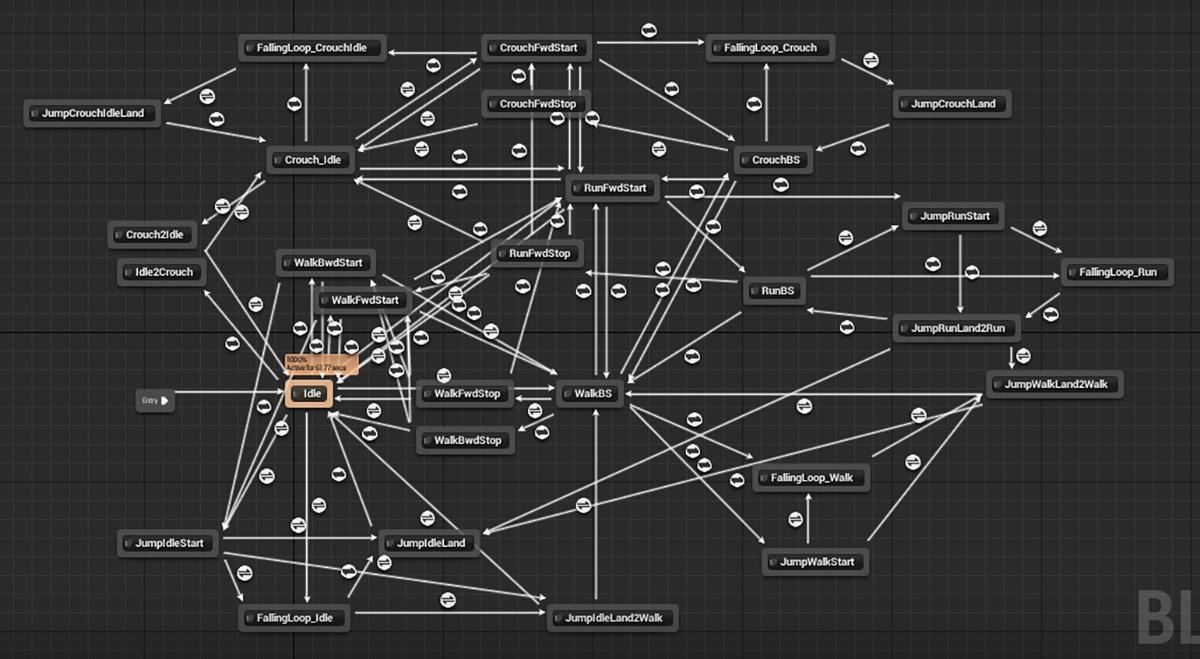

不过这样的一体化开发方式也有一些弊端,特别是服务端,因为服务端也需要处理物理、移动、骨骼动画等性能消耗非常高的任务,导致服务端的性能受到很大的限制,单进程的玩家承载量也受到了很大的限制,基本只能在100左右。正是由于这种承载量的限制,UE引擎目前适用的联网游戏类型基本都是一些匹配对战的开房间游戏,在需要大量玩家同场景的MMORPG(Massively Multiplayer Online Role-Playing Game)类型游戏中则比较少见。

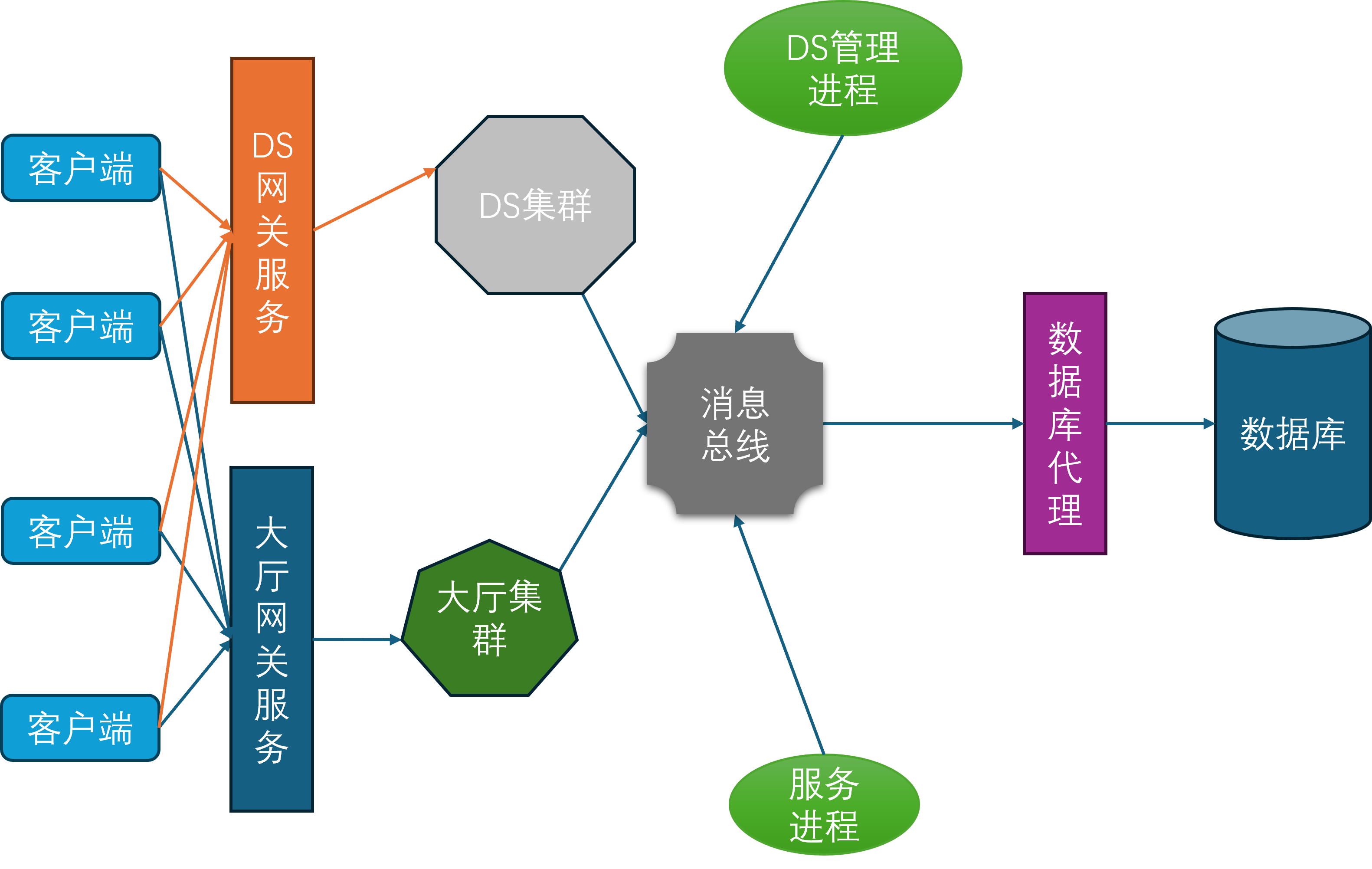

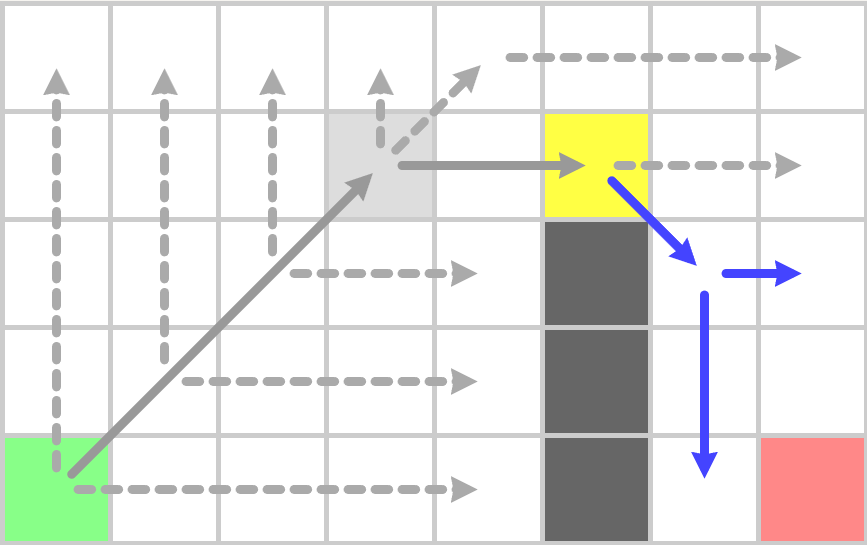

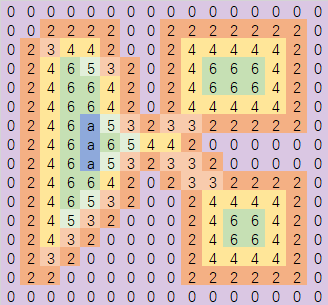

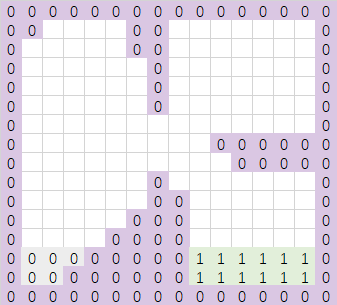

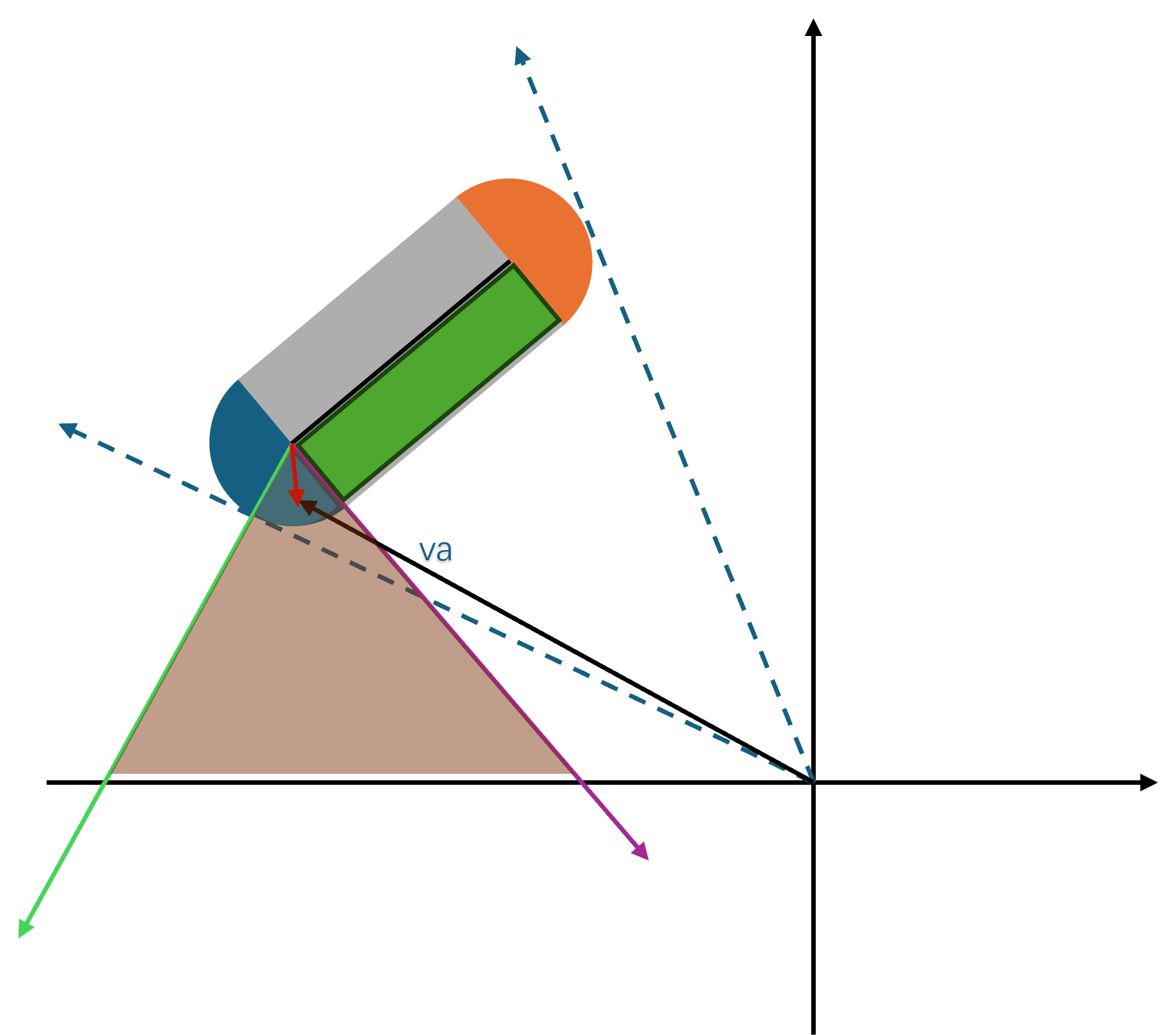

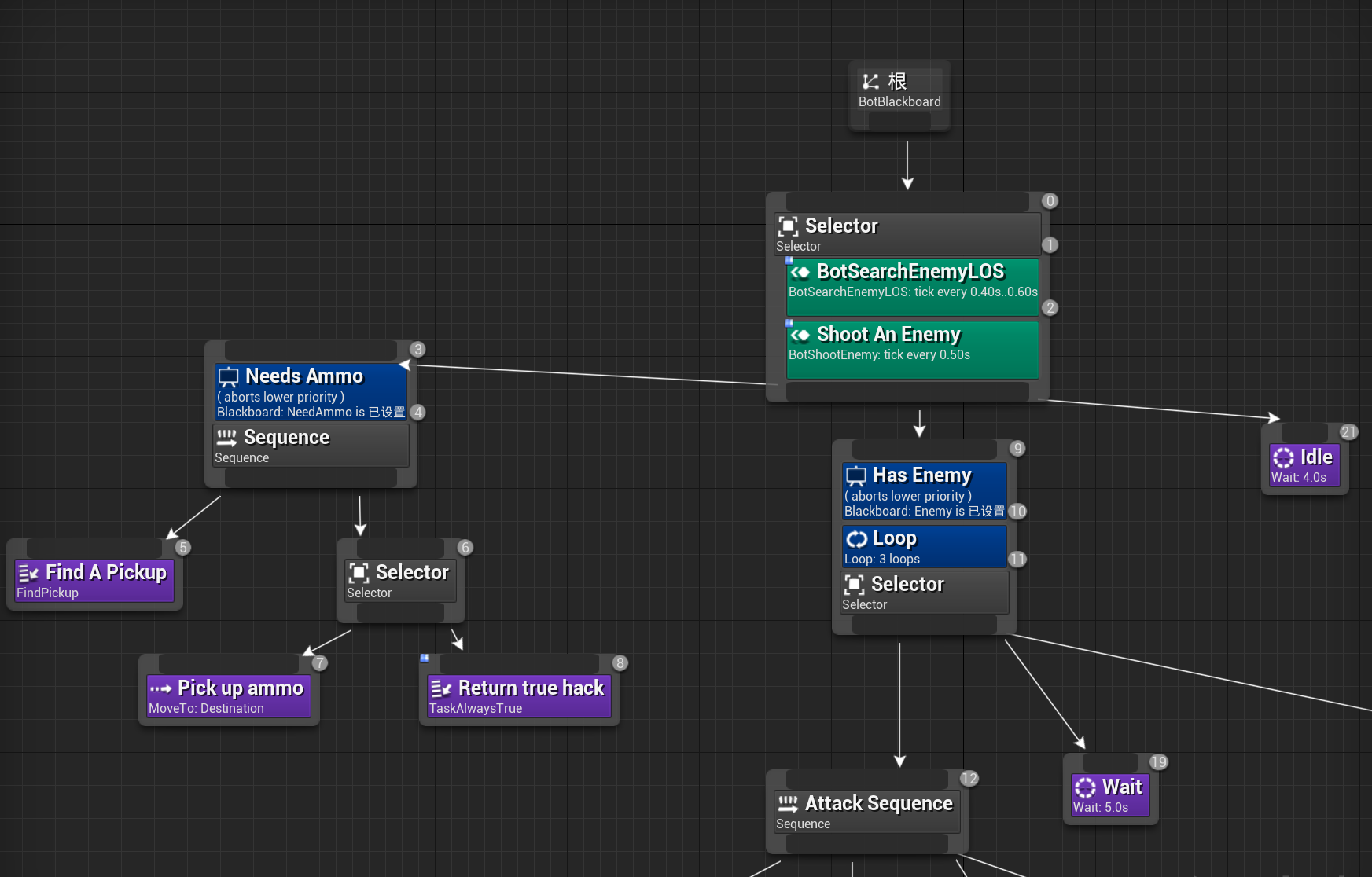

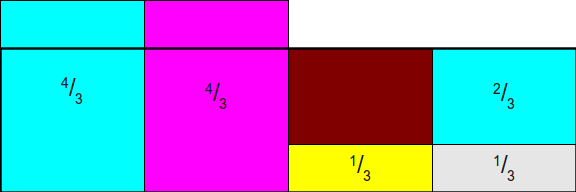

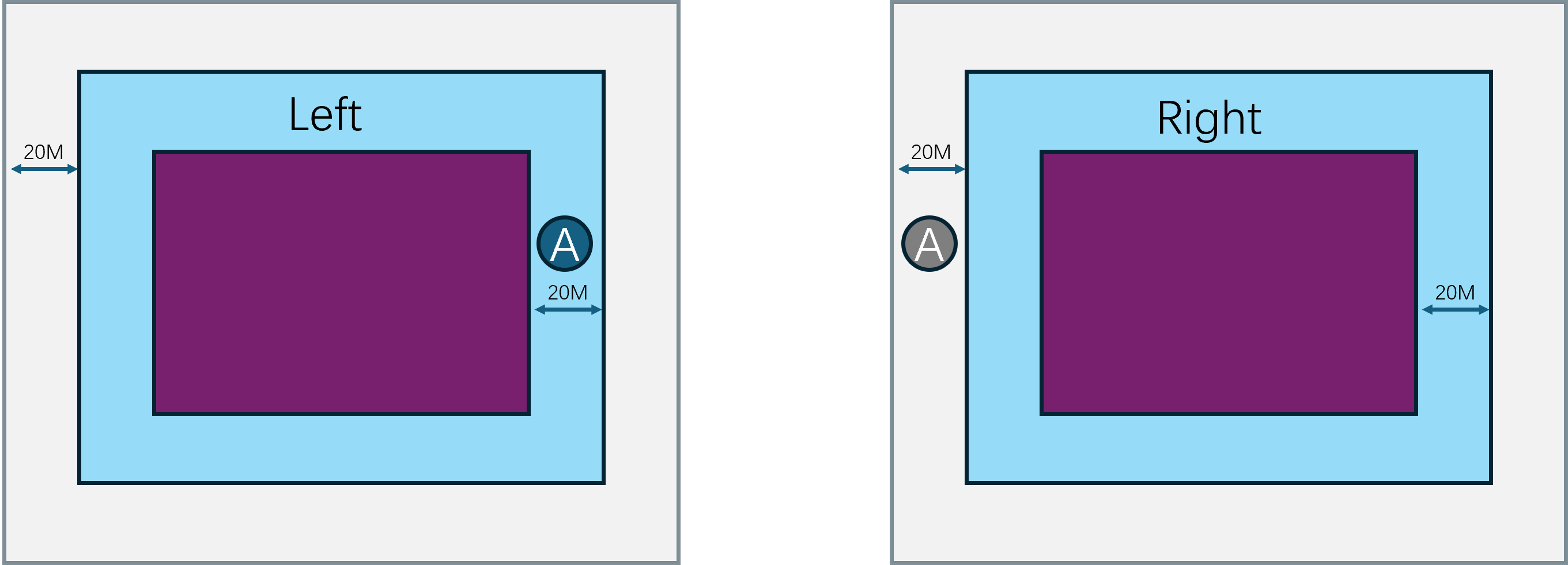

UE打包生成的DS单个进程基本只能服务一个地图场景,在不深度修改的情况下无法做到多地图共存。而且单个DS进程长时间运行下会有比较严重的内存占用,所以一般来说单个DS进程只能存活比较短的时间,粒度基本都是以小时为单位,无法像MMORPG那样单个进程可以持续运行数周直到版本维护。同时UE开发框架里基本只能写地图内的玩家交互逻辑,无法作为聊天、好友、商城的常见游戏服务的进程宿主,导致还需要使用cpp/python/go等语言额外开发一些联网服务,并修改UE引擎来接入与这些服务相通信的RPC。所以一般使用UE作为房间服务器的服务端架构基本会设计成这个样子:

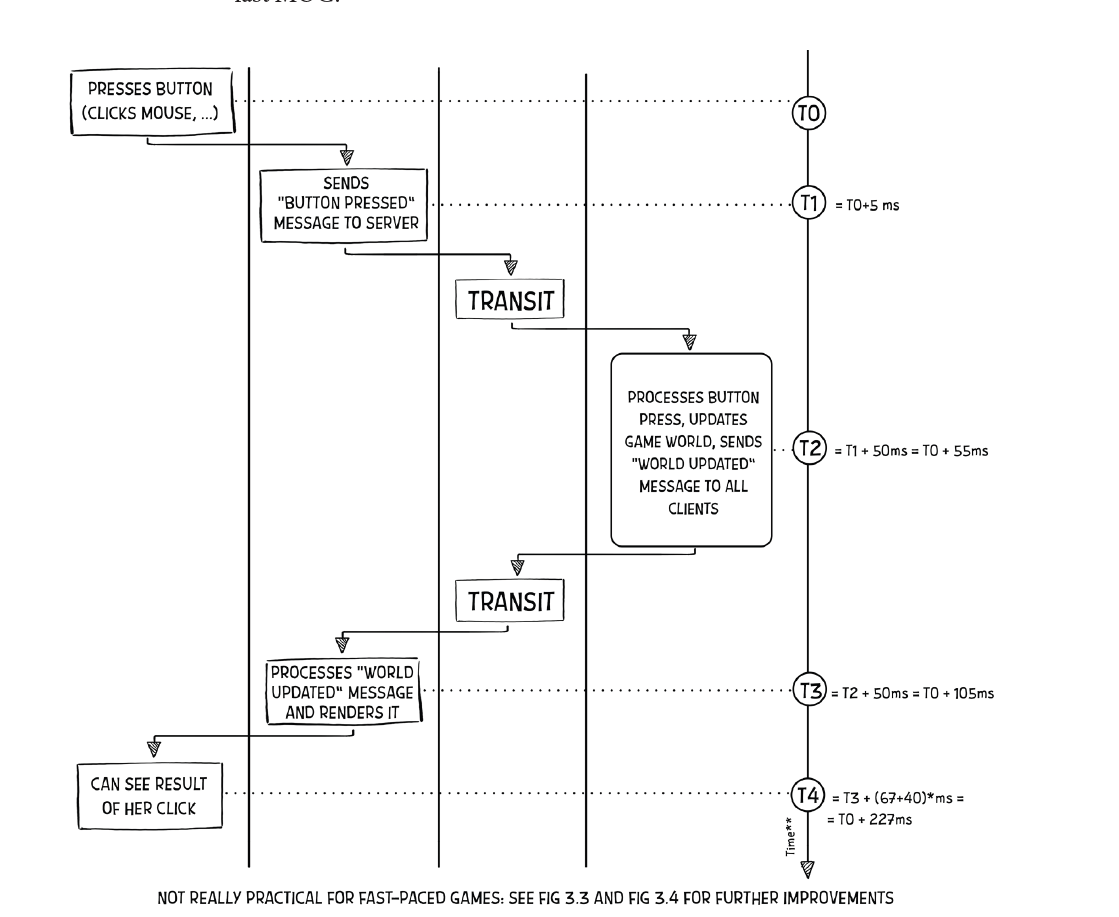

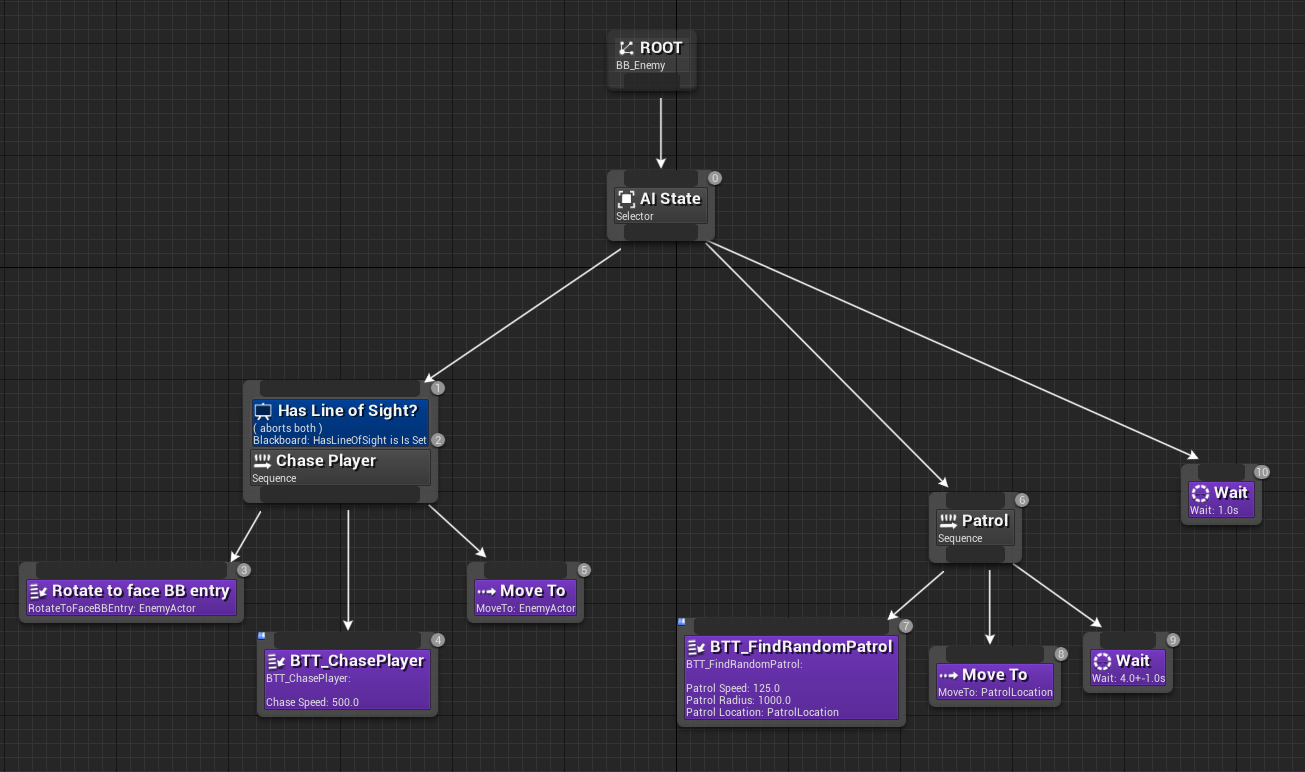

在这个架构图里,前置的网关分为了两种:一种是对接大厅服务的网关,采用TCP协议;一种是对接DS进程的网关,采用UDP协议。大厅服务主要负责玩家的注册登录、匹配、好友、组队、聊天等功能,而DS进程主要负责游戏场景的运行与玩家的交互逻辑。玩家客户端在启动之后,先连接到大厅网关服务,执行登录验证工作,登录成功之后就可以在大厅内执行一些场景无关操作。如果玩家需要进入特定的场景来进行相关玩法,则需要执行一个比较长的交互链条:

- 首先需要得到大厅服务这边的许可。当大厅服务认为可以进入特定场景的时候,会通知

DS管理进程DS Manager来获取一个可用的DS进程来加载这个场景。 - 其实这里还有一个类似于

Bigworld的BWMachined的DS Agent进程,部署在所有能承载DS的物理机器上,用来管理本机的所有DS的拉起、销毁和负载上报,这些DS Agent进程都受到接受DS Manager的管理。大厅请求DS Manager分配一个新的DS,DS Manager接收到请求之后会去找一个机器负载比较小的DS Agent,通知其创建一个新的DS进程,并加载指定的地图,开启特定的玩法。 - 当

DS Agent执行完这个DS进程的拉起操作之后,就会汇报给DS Manager,DS Manager就带上这个DS进程的地址信息通知回大厅。 - 大厅接收到目标

DS的地址信息之后,先通知到DS网关,给当前玩家加上目标DS的绑定,然后再通知当前玩家的客户端可以去发起到DS网关的连接。 - 客户端接收到

DS的连接允许信息之后,就会以UE的连接协议去连接到DS网关。注意此时客户端与大厅之间的连接是没有断的,所以此时客户端与服务器之间会同时存在两条连接通路。 DS网关此时会验证这个客户端是否有绑定的DS目标进程,如果有则开启一个UDP中转会话,用来桥接客户端与DS进程之间的UDP通信。

有的读者可能注意到UE里也有无缝迁移Seamless Travel的概念,但是这里的无缝迁移指的是一个DS进程以无缝的形式切换地图,此时客户端不需要断开与DS进程之间的连接,玩家控制的角色Character、控制器PlayerController和状态PlayerState能够自动的绑定到新的World。相对应的DS的非无缝迁移就是在切换地图之前DS通知所有客户端断开连接,然后再加载新的地图,此时客户端进程则重新执行连接与登录流程,这就会带来Character、PlayerController和PlayerState在服务端的销毁以及重新创建。

UE引擎是非常庞大的,包含了非常多的概念与模块,而且代码更新也极其频繁。在我有限的工作经历中,引擎重新编译涉及到的编译单元从UE4.23的不到2000个增长到现在UE5.5的将近6000个。不过跟服务端相关的Gameplay和网络相关功能UE4与UE5之间的差异并不大,所以本书所讲解的UE相关内容附带的代码都是基于UE4的。如果读者之前没有接触过UE,可以先去阅读一下Epic中国社区经理大钊在知乎写的系列文章Inside UE4,补充一下知识储备。当然读者如果完全对UE不感兴趣,可以在后文的阅读中直接跳过UE相关的章节。如果不是工作需要查证相关问题的话,我自己都有点看不下去这些源代码。

Mosaic Game 服务端介绍

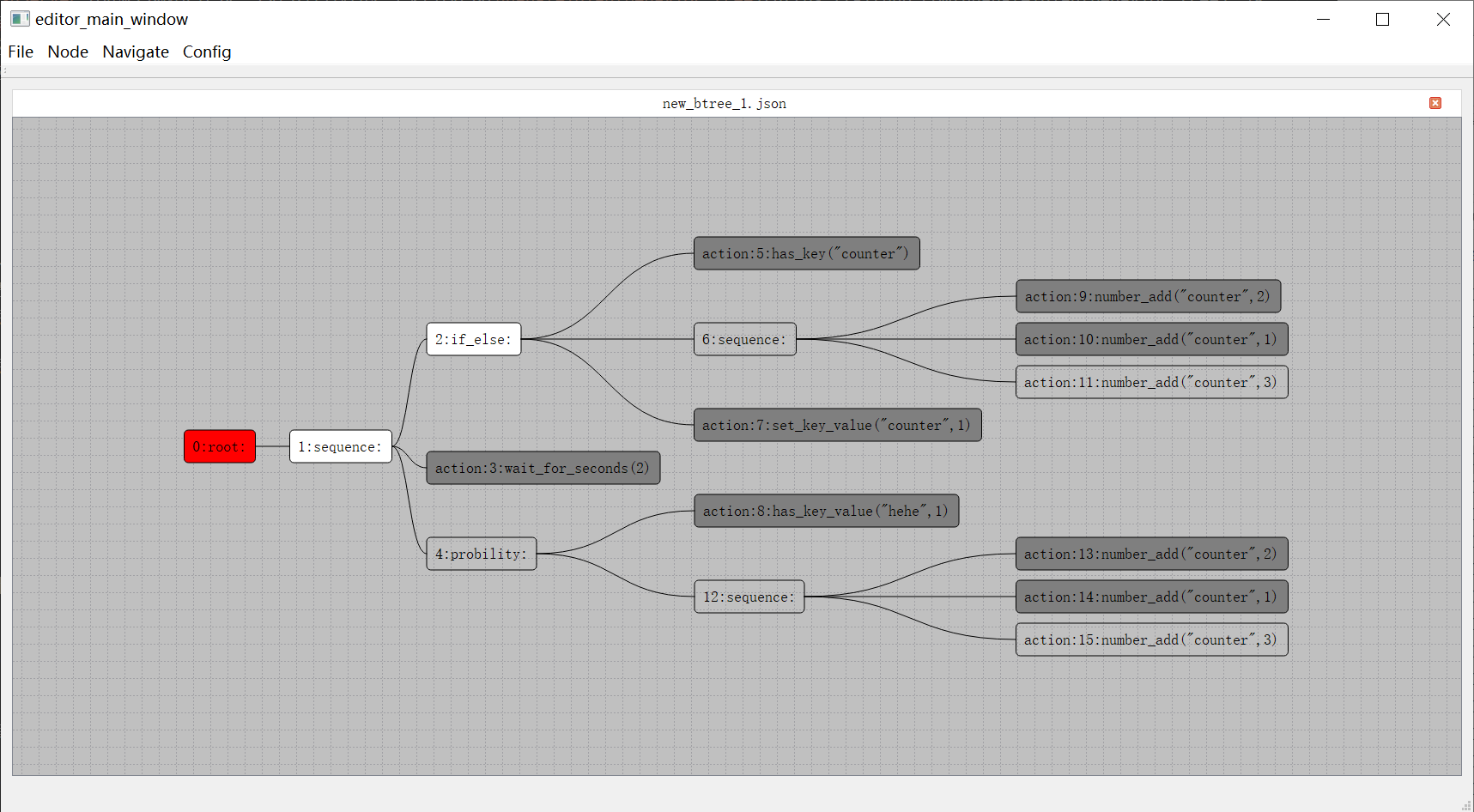

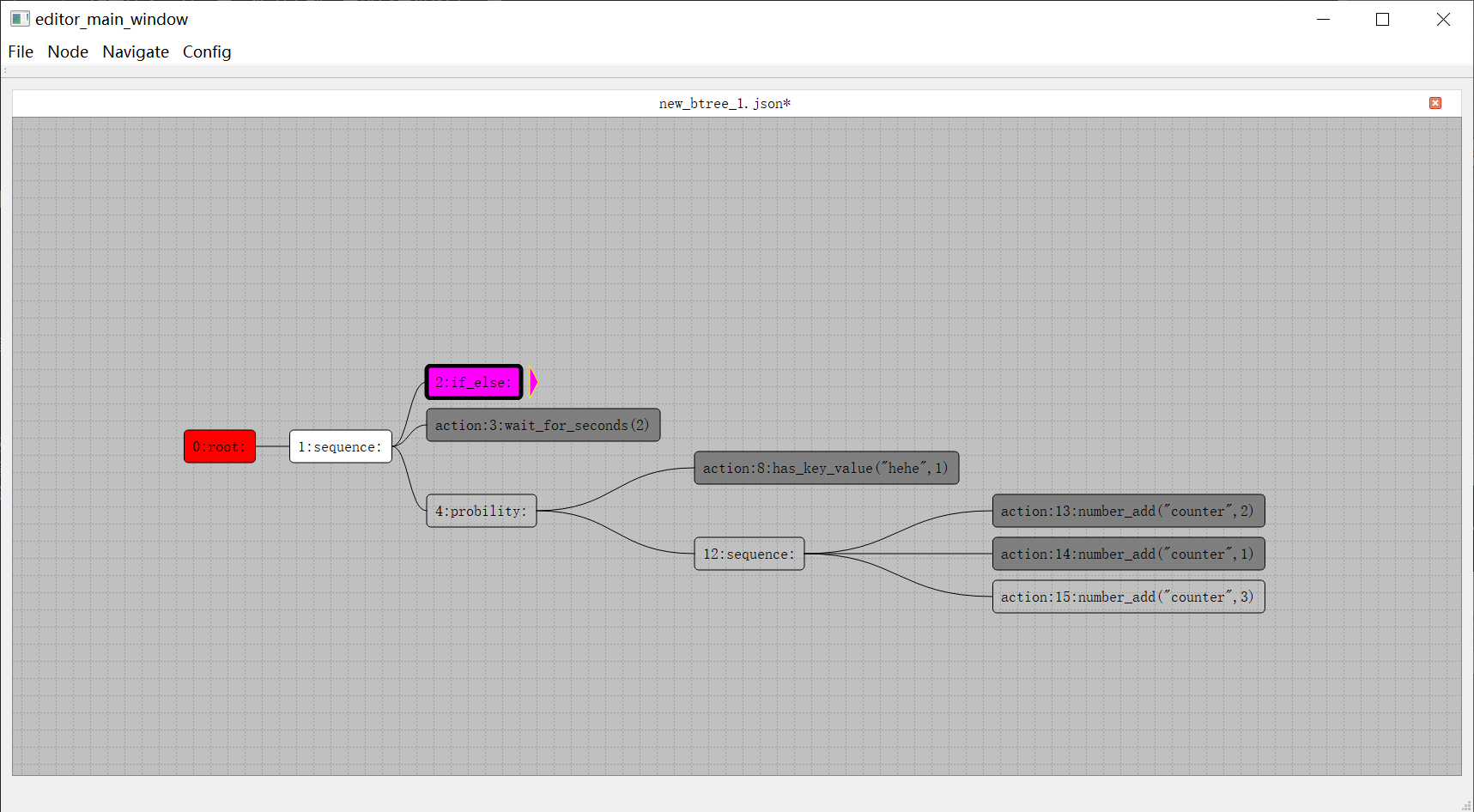

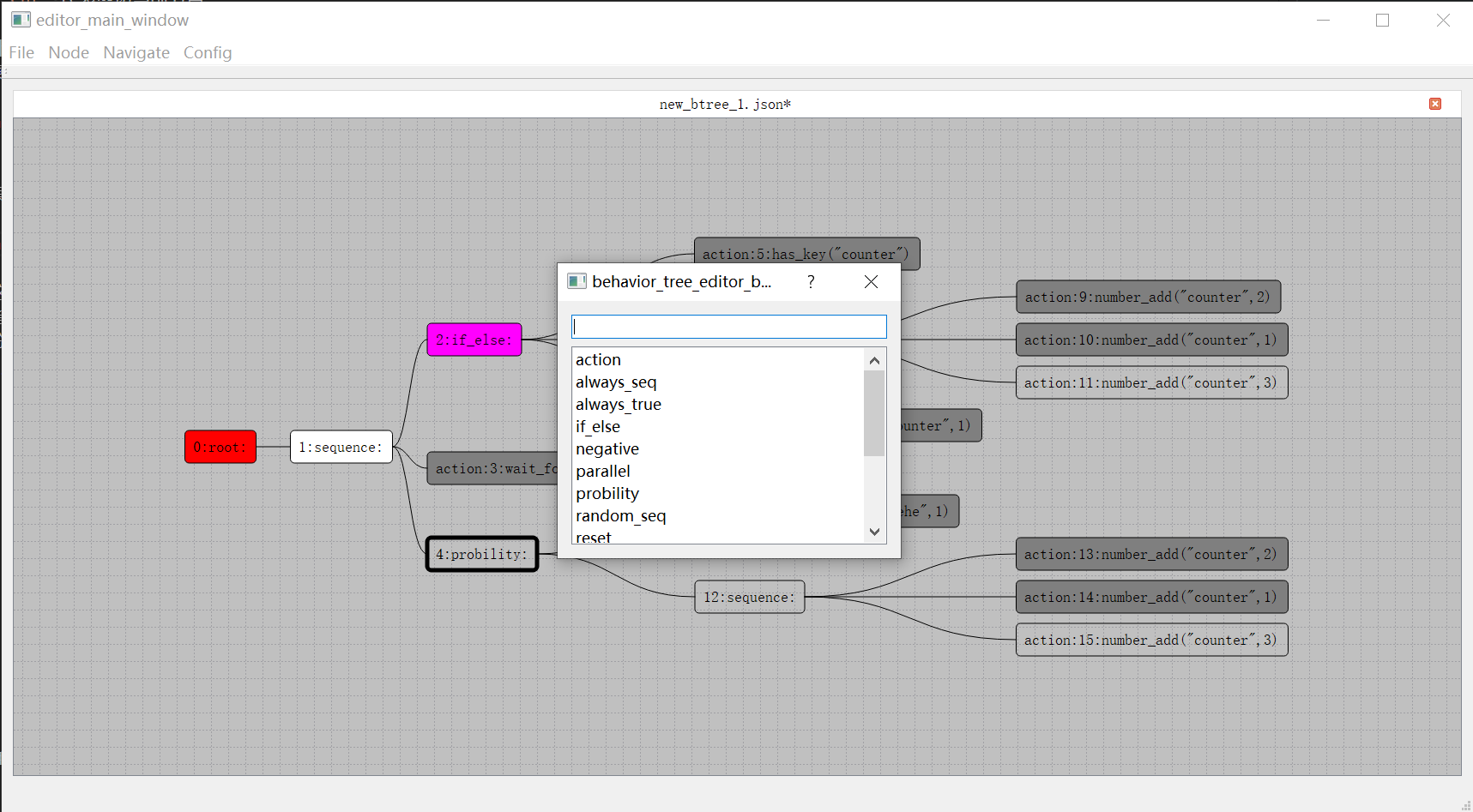

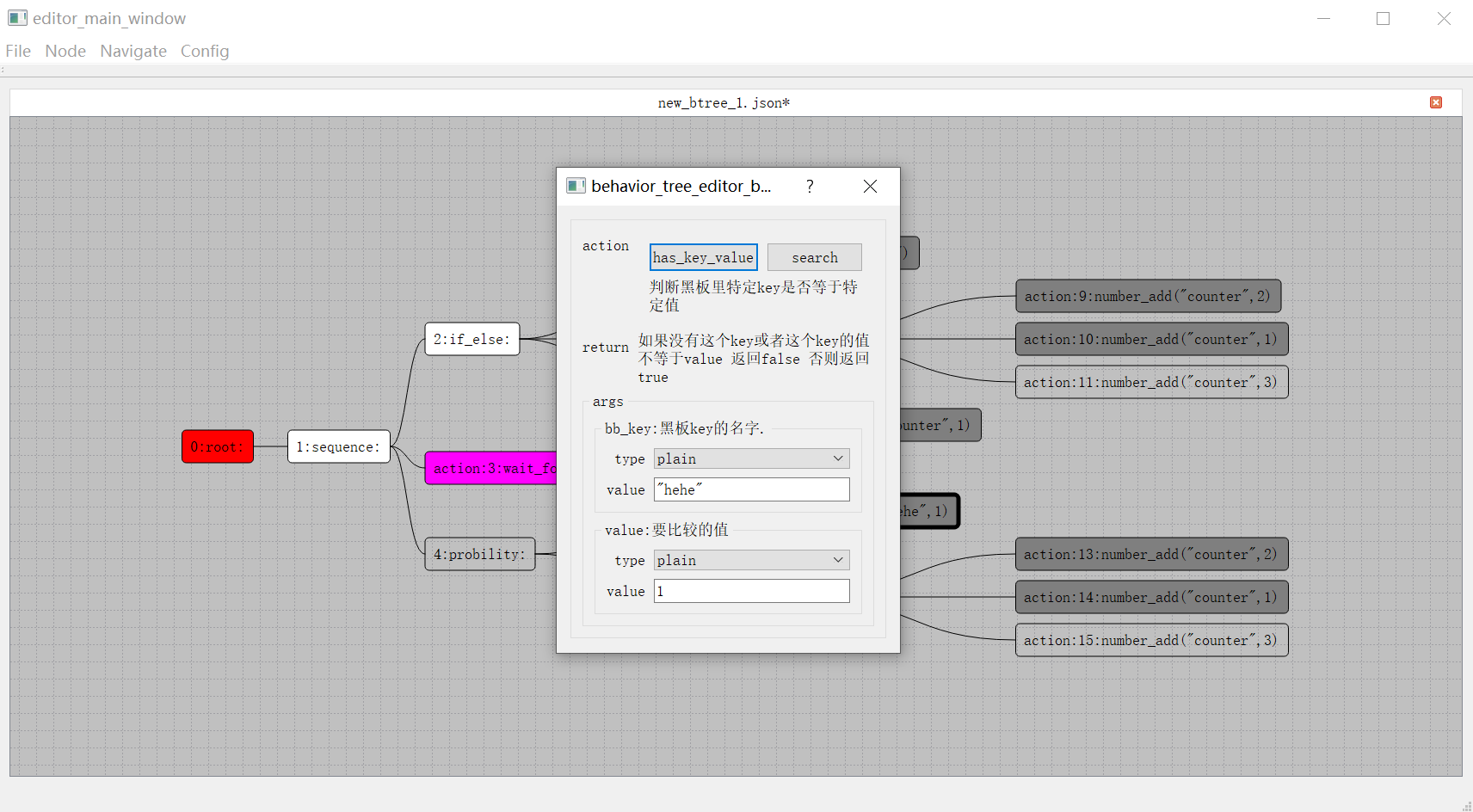

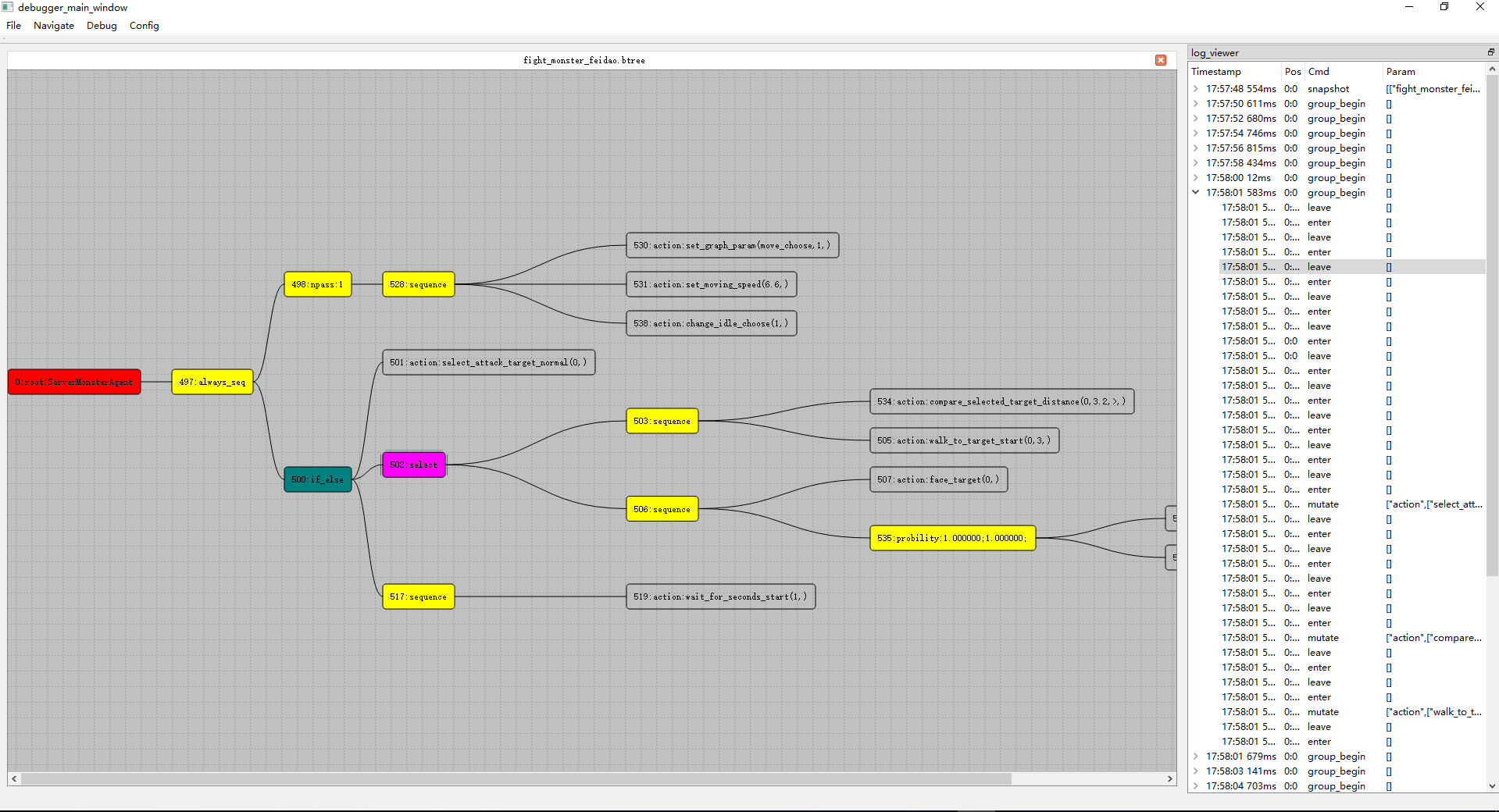

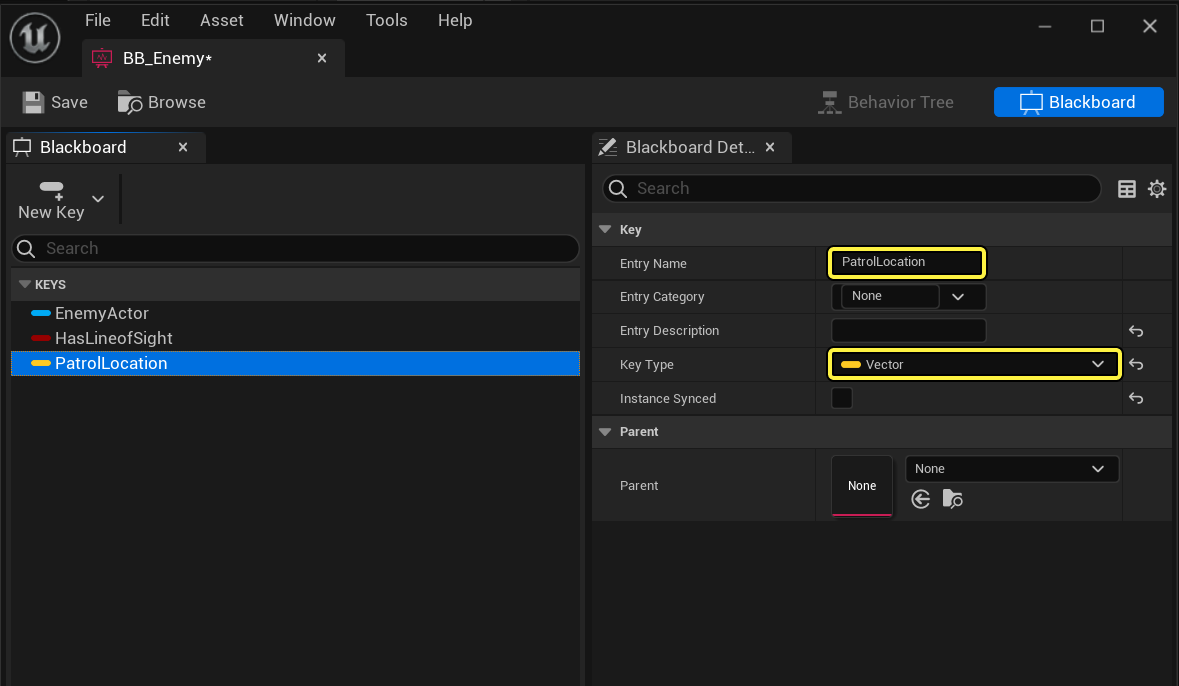

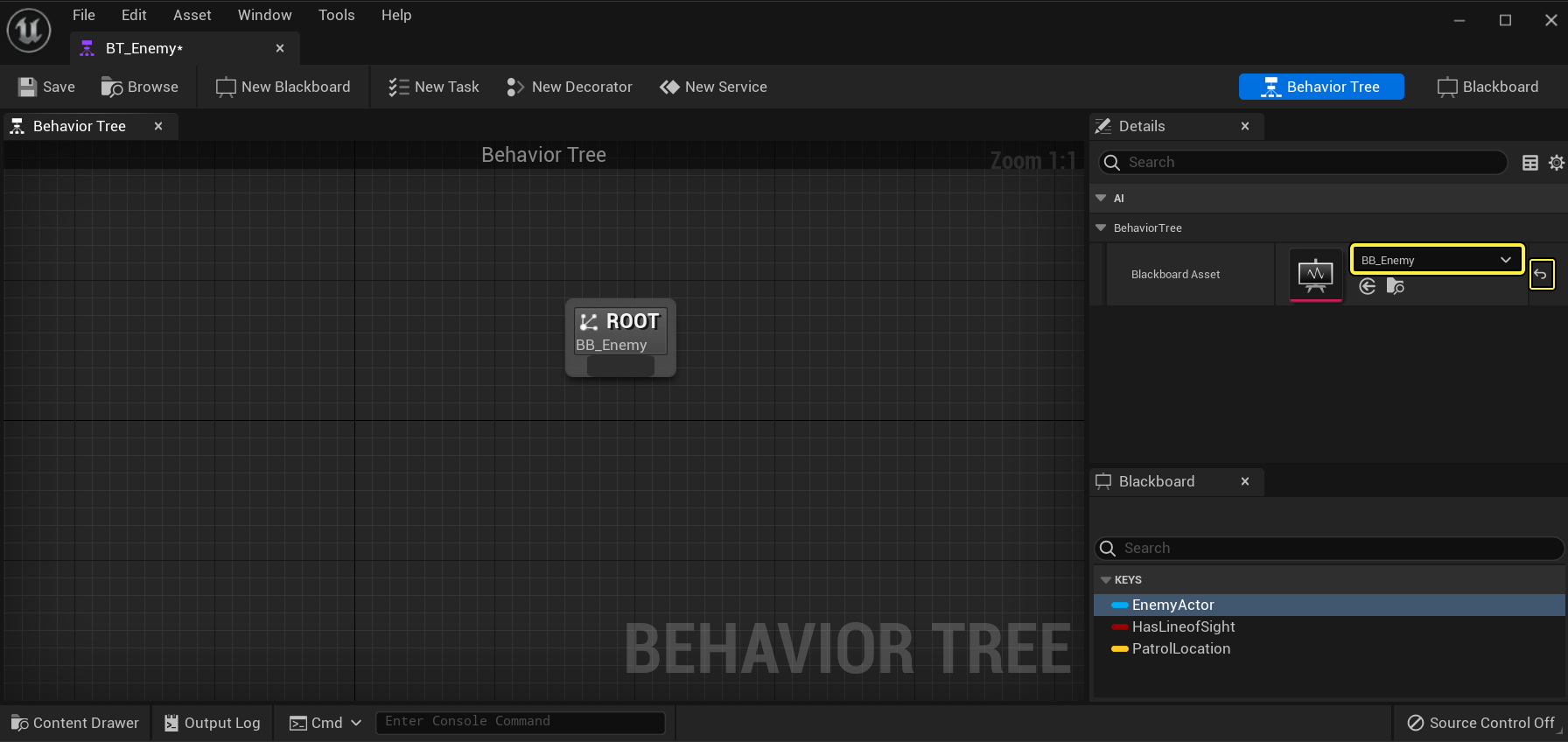

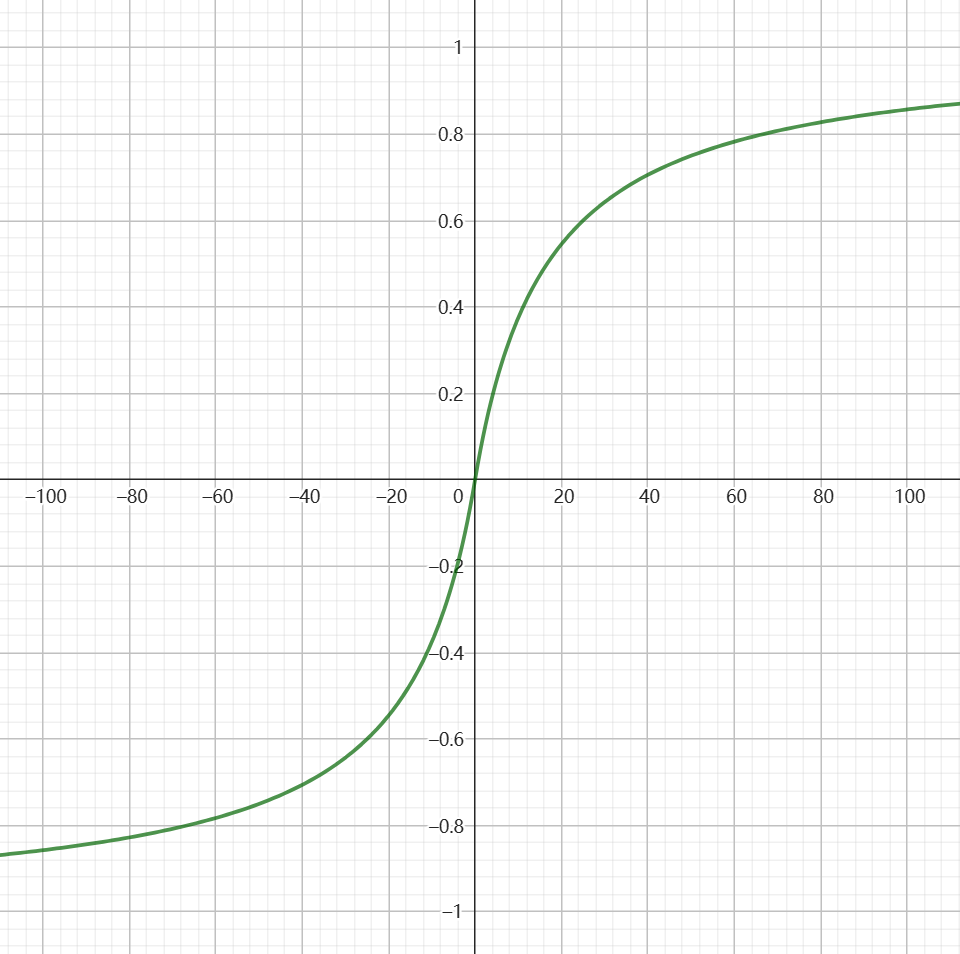

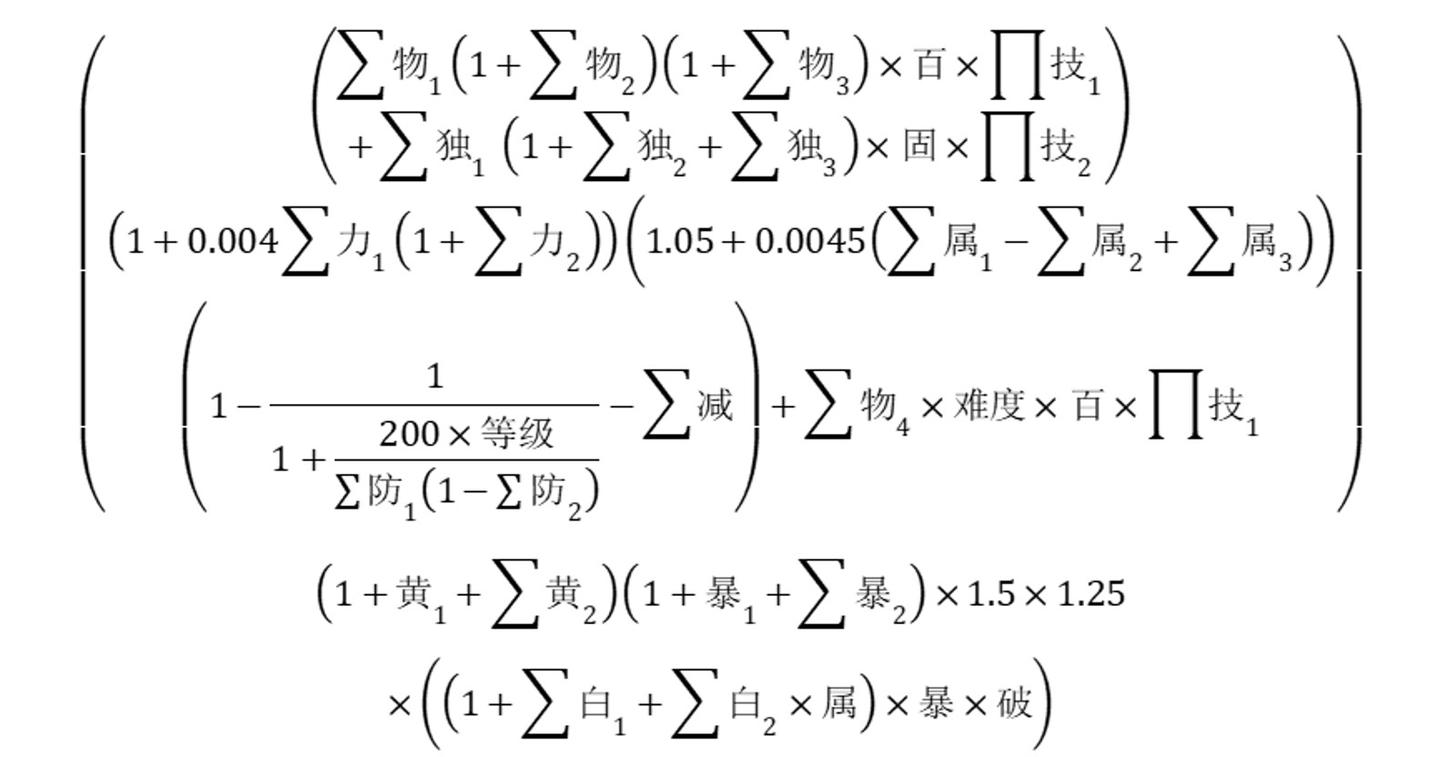

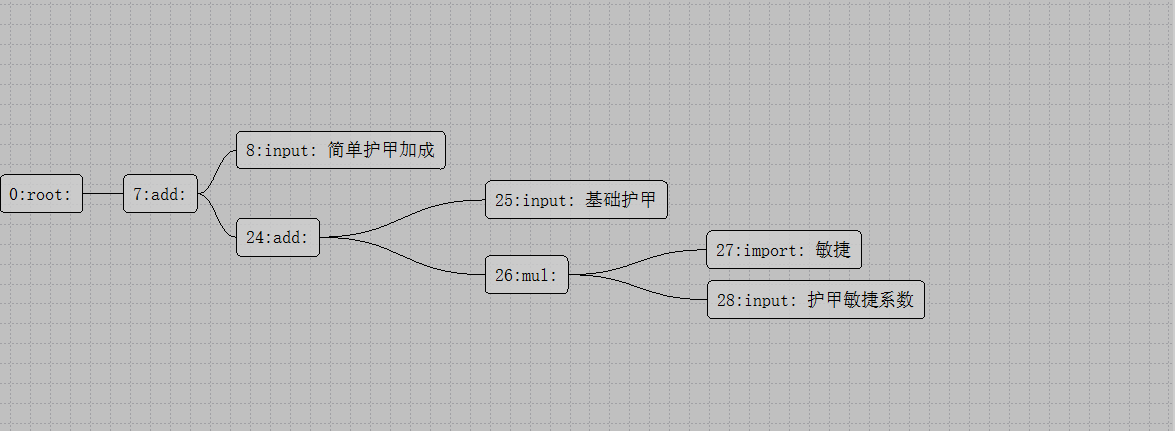

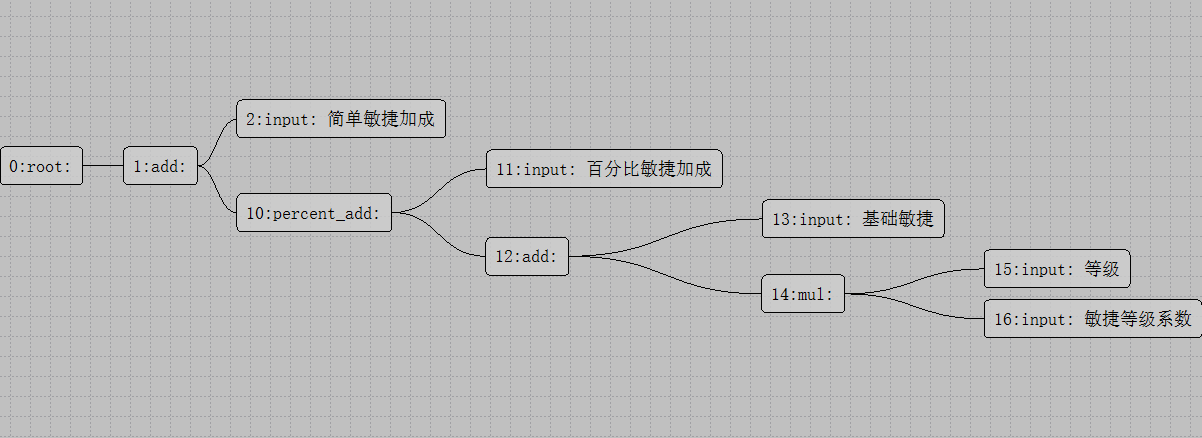

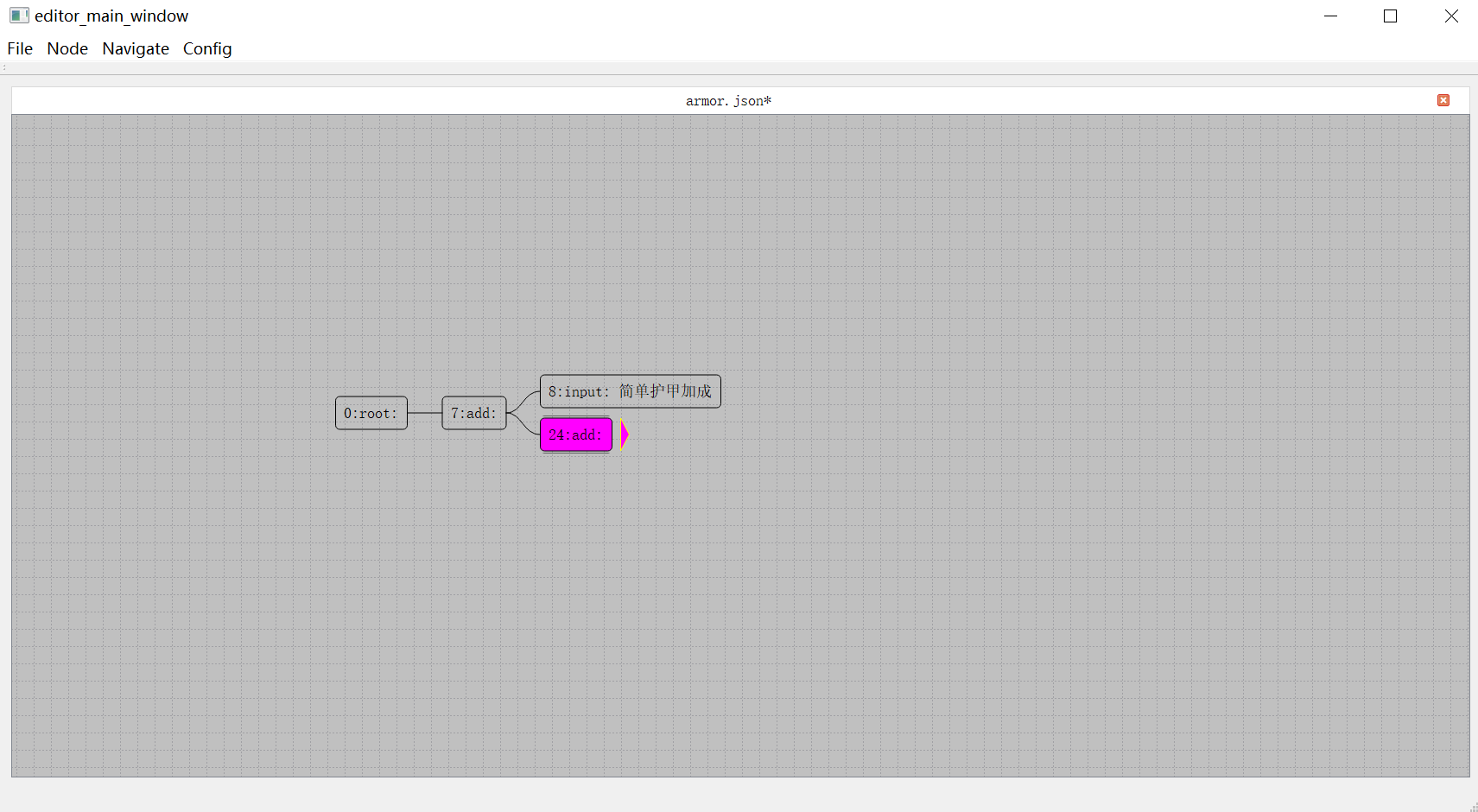

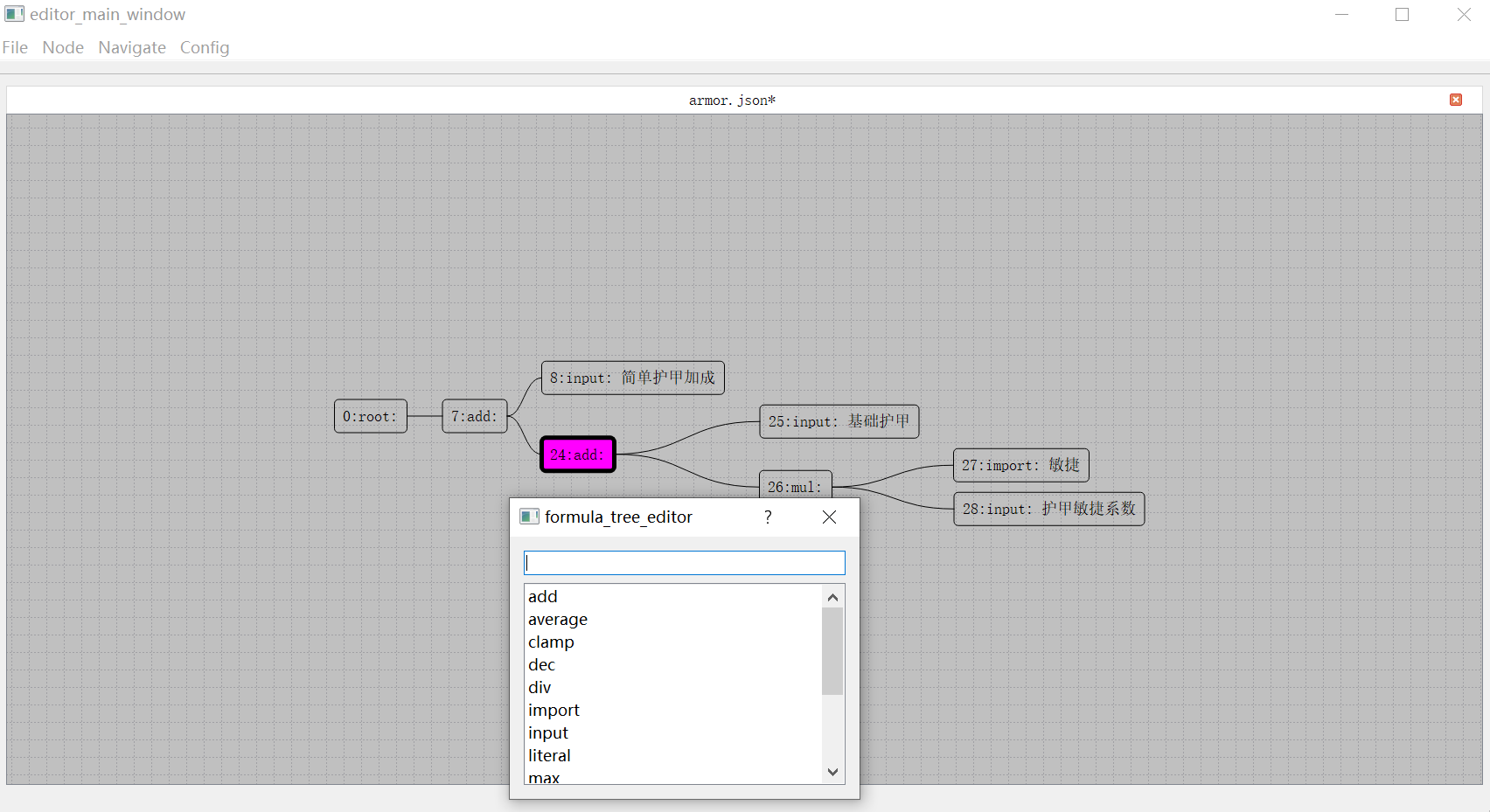

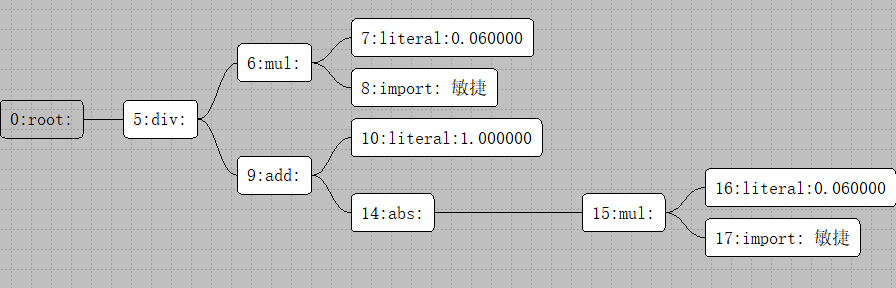

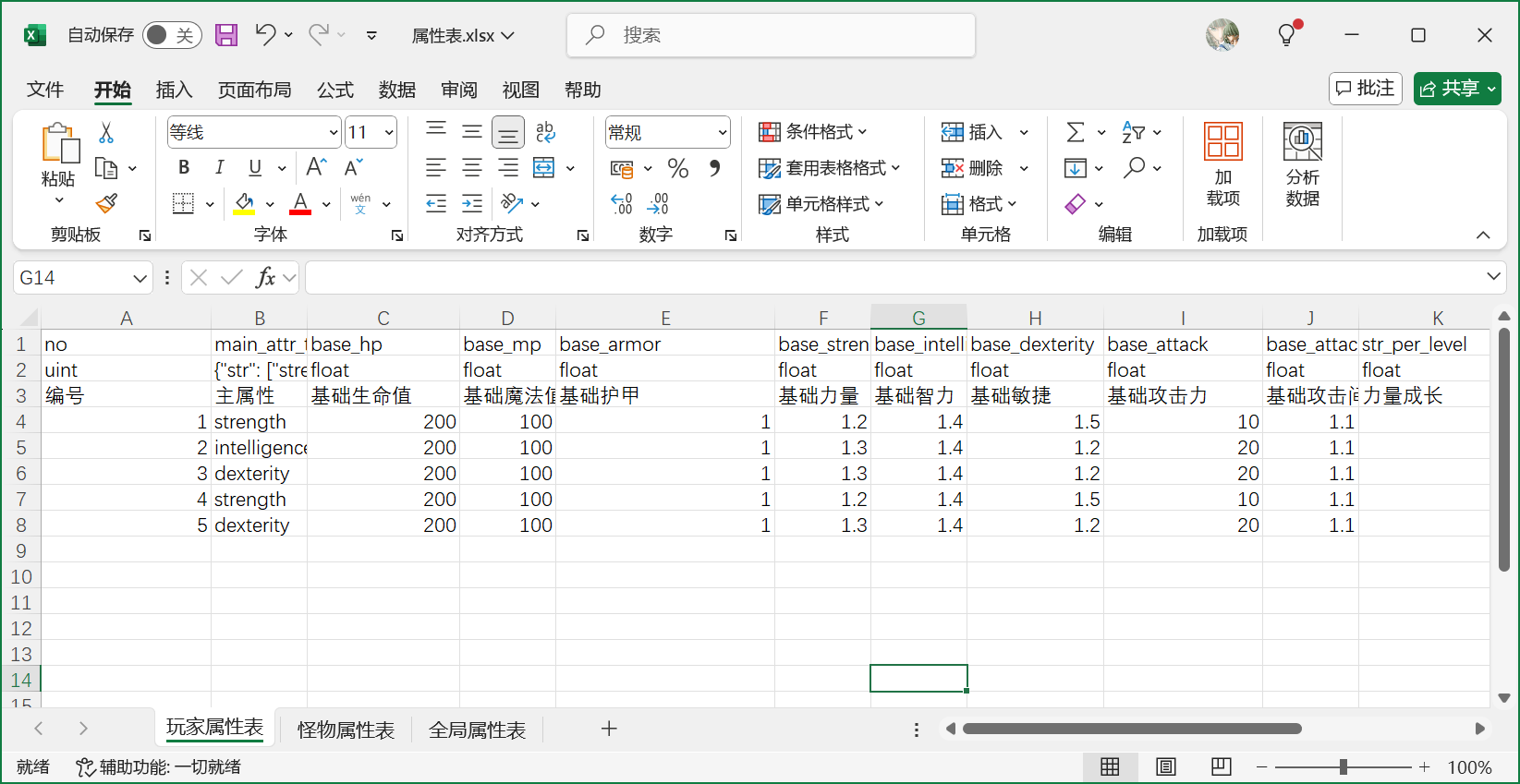

mosaic_game这个项目创建于2020年,但是其实其使用的核心组件在2018年就可以开始陆陆续续的开发了。当时我负责了项目内的行为树与AI,但是当时的编辑器是PyQt实现的,代码非常扭曲,导出的数据文件居然是基于Pickle的,更新功能难度非常大。备受折磨的我准备使用CPP + QT5来重写,将数据格式改成json,顺带体验一下什么叫做GUI开发。完成了行为树编辑器的重新迭代之后,为了方便日常开发中的行为树问题调试,就给这个编辑器添加了调试器的功能。整个行为树开发套件开源在huangfeidian/behavior_tree上。刚好项目内有一套基于树形结构的角色数值属性计算系统,就顺带以这个图形化的编辑器框架为基础,构造了属性公式编辑器,以及属性公式求值运行时,开源在huangfeidian/formula_tree。

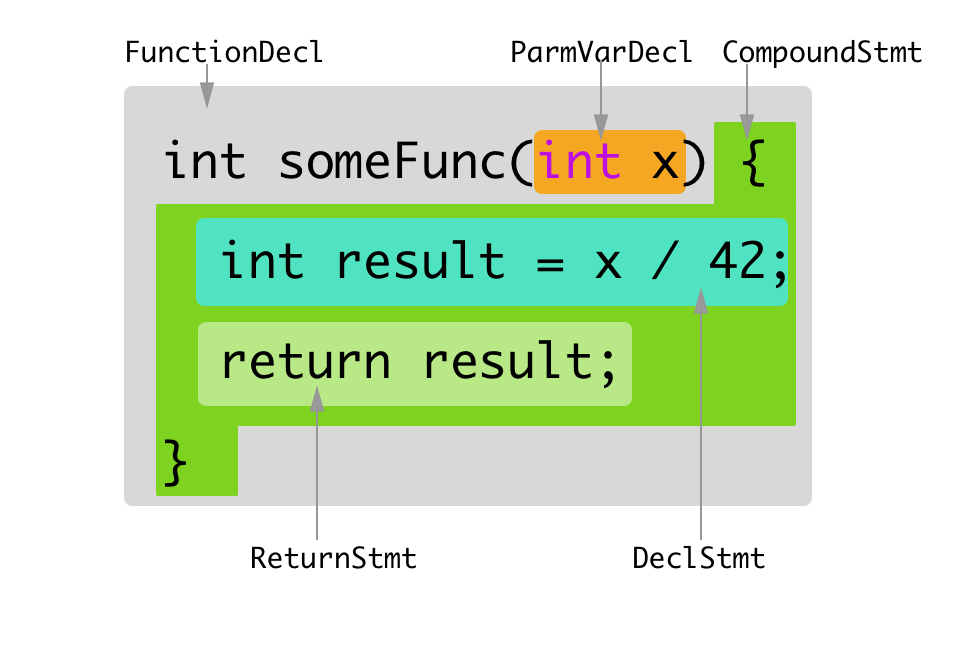

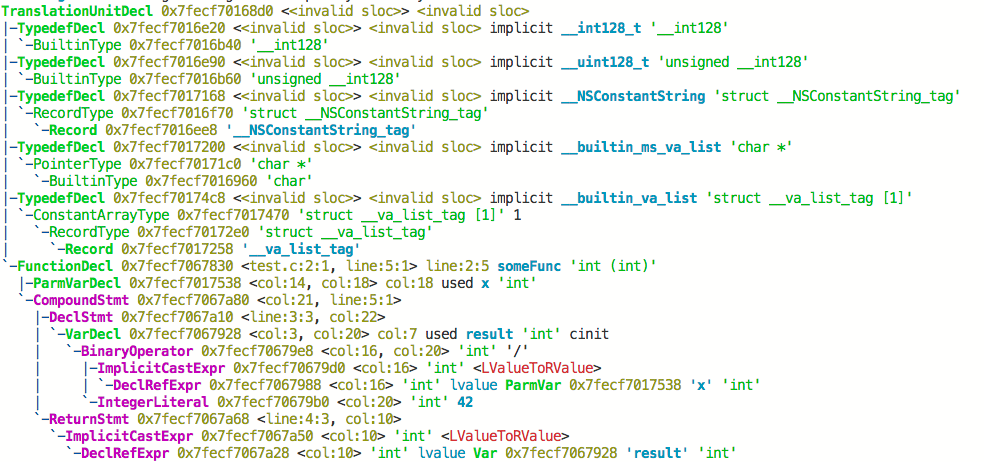

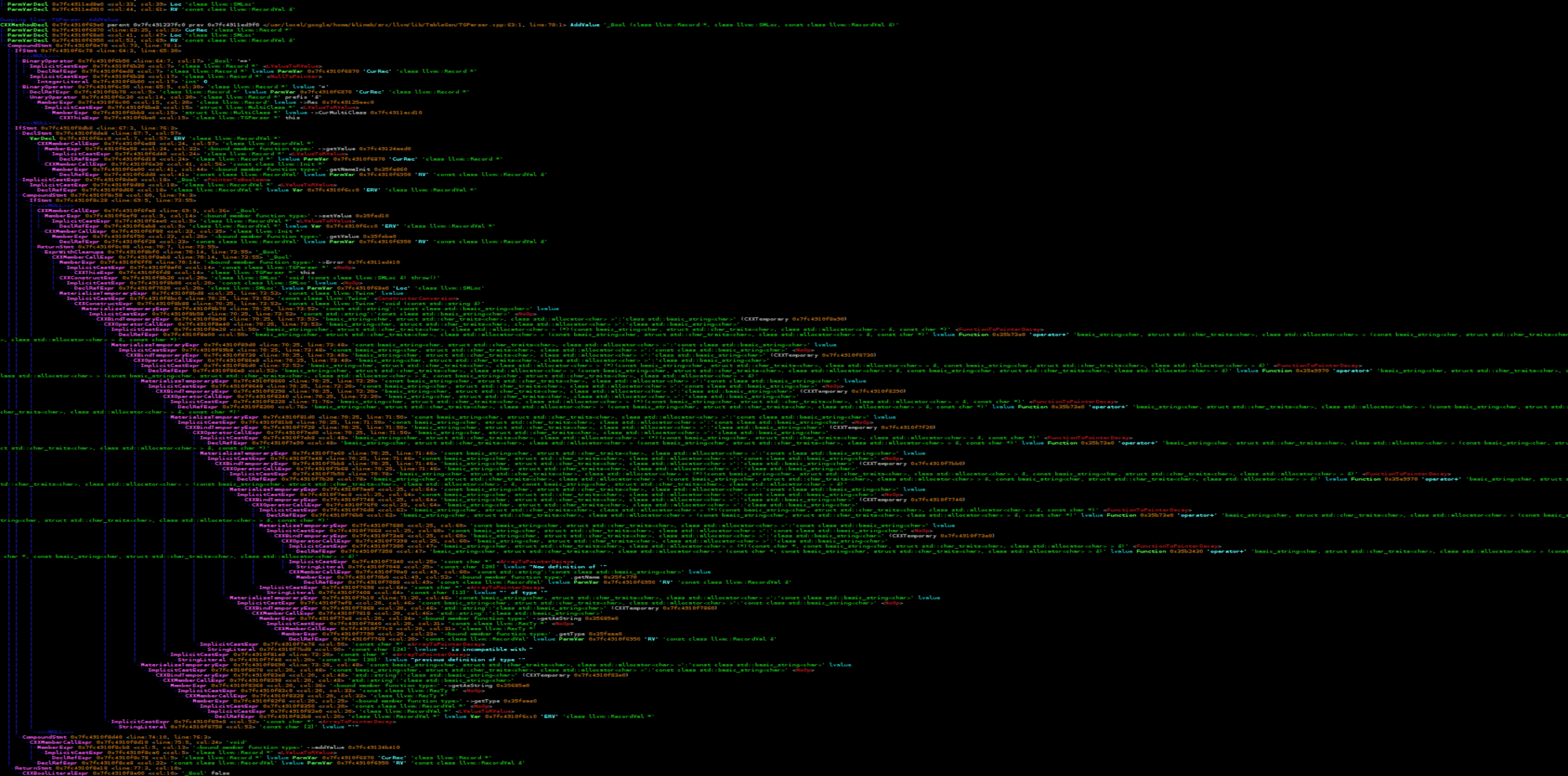

当时项目组使用的服务端引擎是没有源代码的,我们日常开发只能使用Python来写业务逻辑。写Python写烦了就开始琢磨这个引擎的底层实现,特别是玩家属性在Python脚本里修改之后是怎么自动同步到客户端的。当时Bigworld代码还未开源,同时UE4引擎还在我的认知之外,自己对CPP还很热情,因此开始从零开始用CPP来实现一个属性自动同步系统。这个属性自动同步系统还是比较复杂的,为此开发了好几个库:基于json的序列化与反序列化库huangfeidian/any_container;基于libclang的CPP反射库huangfeidian/meta;最终的属性修改自动同步库huangfeidian/property_sync。

在开发huangfeidian/meta这个CPP反射库的过程中,发现这个库提供的元数据收集加代码生成功能不仅可以为上面的属性同步系统服务,还可以来构造基于json的RPC系统,甚至还可以以此来构造基于Actor/Component的Entity系统。构造完这些系统之后发现项目组使用的游戏服务器的大半功能都被覆盖了,为什么不去整个大活,仿造一个完整的游戏服务器!刚好在网络通信上我也有一些积累,基于boost/asio实现过一个带流量加密的http代理服务器huangfeidian/http-proxy。于是就有了mosaic_game这个项目的诞生,开始了我慢慢填坑的过程。

这个mosaic_game是基于纯CPP实现的,但是CPP的包管理功能很弱,不像Python有很多开箱即用的库,因此在开发这个项目的过程中,我需要自己实现很多功能。为了方便游戏内各个功能的验证与调试,避免每次都启动整个游戏服务器通过再添加日志来定位问题,后续功能的添加基本都是以库的形式来组织的,除了上述介绍的功能之外,主要的功能库还有:

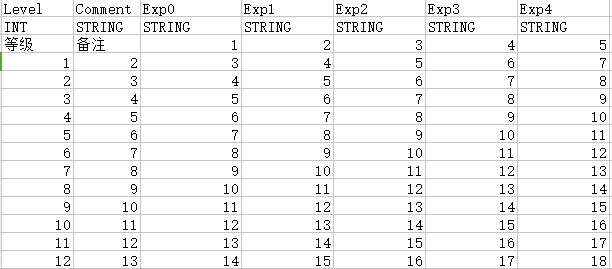

- 将

excel表格中的数据转换为json的工具库huangfeidian/typed_matrix。这个库里还引用了我写的另外两个小组件:用来规定和验证excel数据格式的huangfeidian/typed_string和用来读取excel文件的huangfeidian/xlsx_reader。 - 聚合了各种

AOI计算方法的库huangfeidian/aoi。 - 聊天系统huangfeidian/chat。

- 事件和状态机库huangfeidian/events。

- 匹配系统huangfeidian/match_maker。

- 游戏内的

Entity系统huangfeidian/entity_component_factory。 Redis桥接库huangfeidian/http_redis。MongoDB桥接库huangfeidian/http_mongo。- 基于

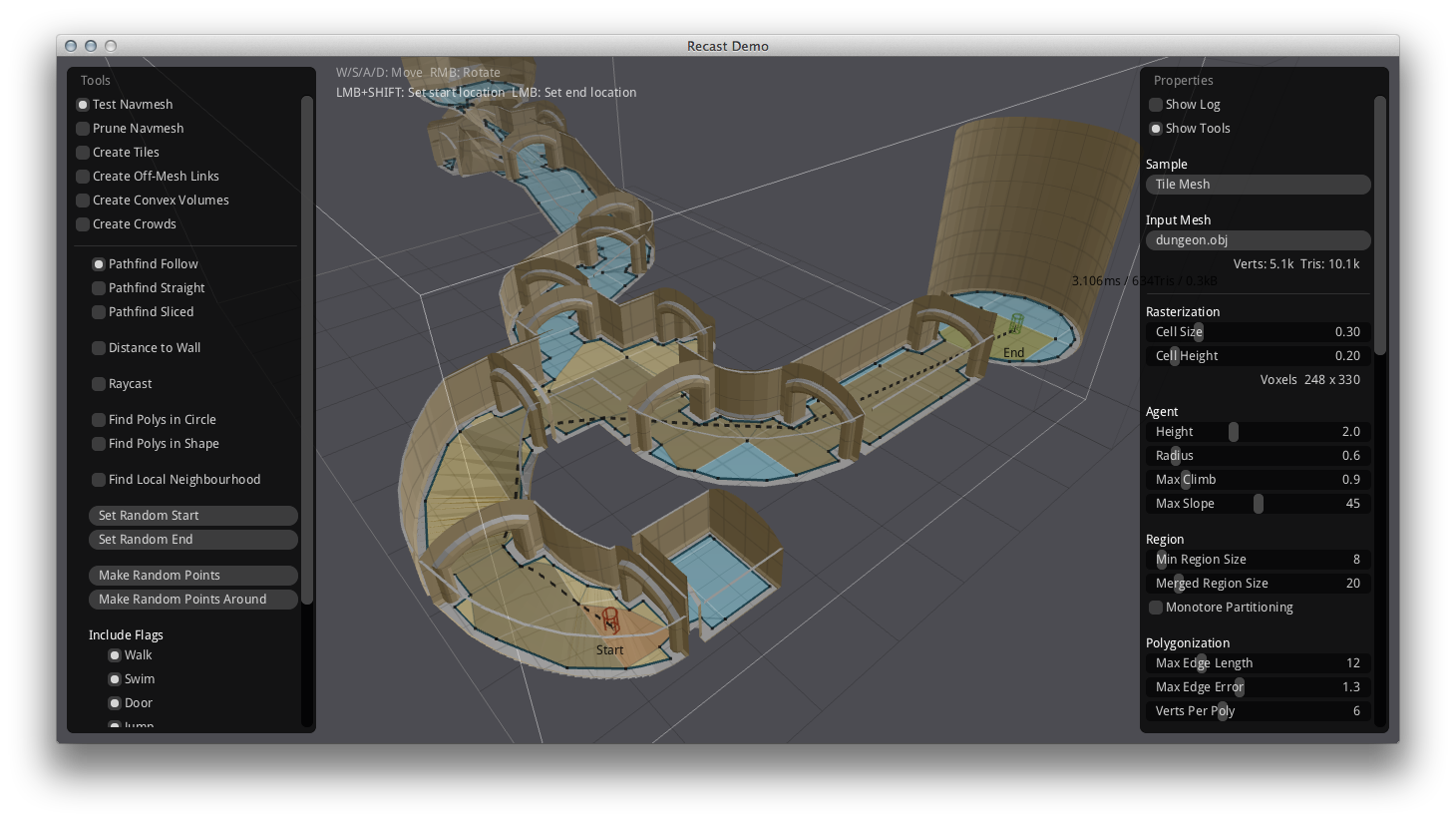

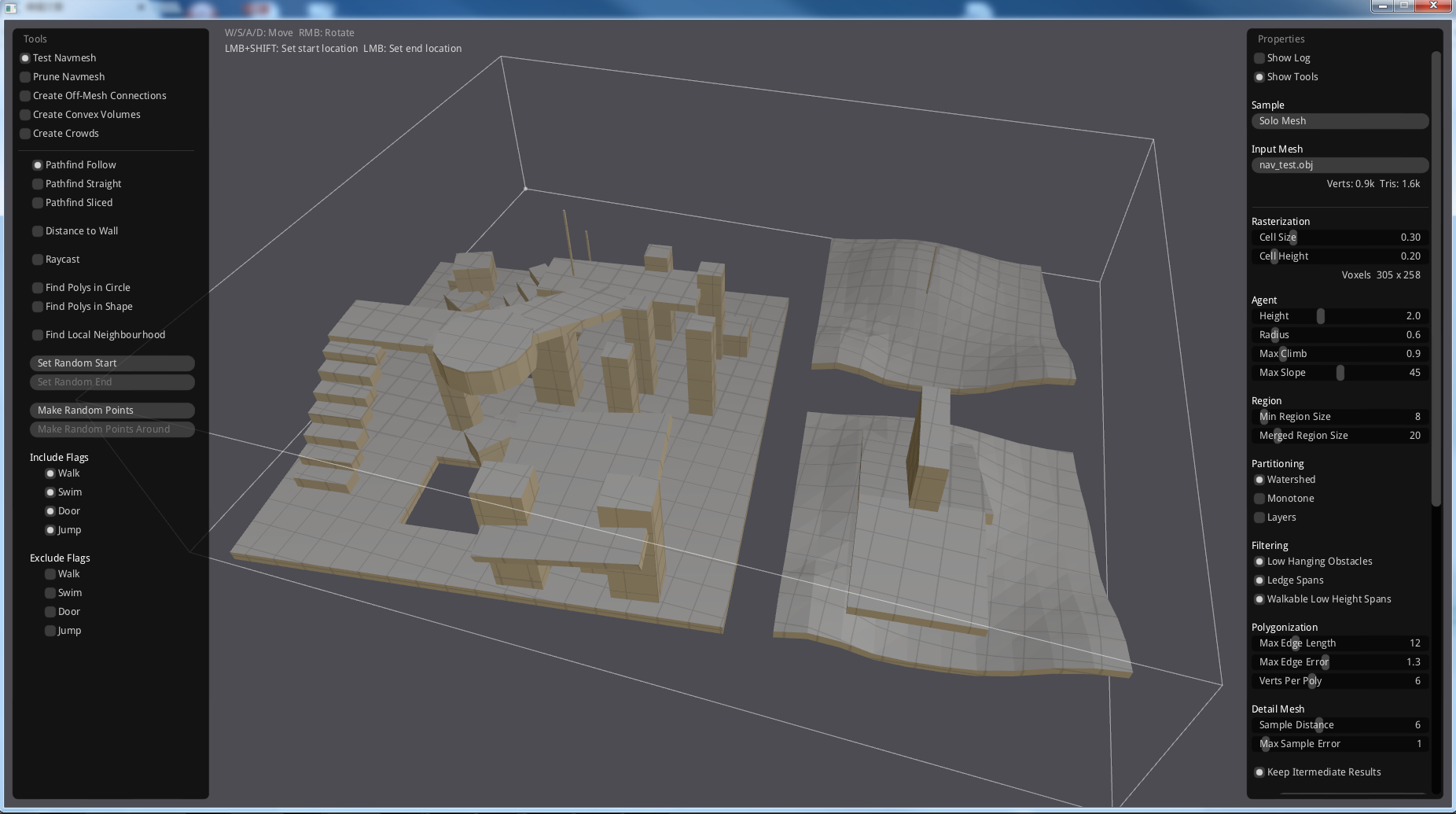

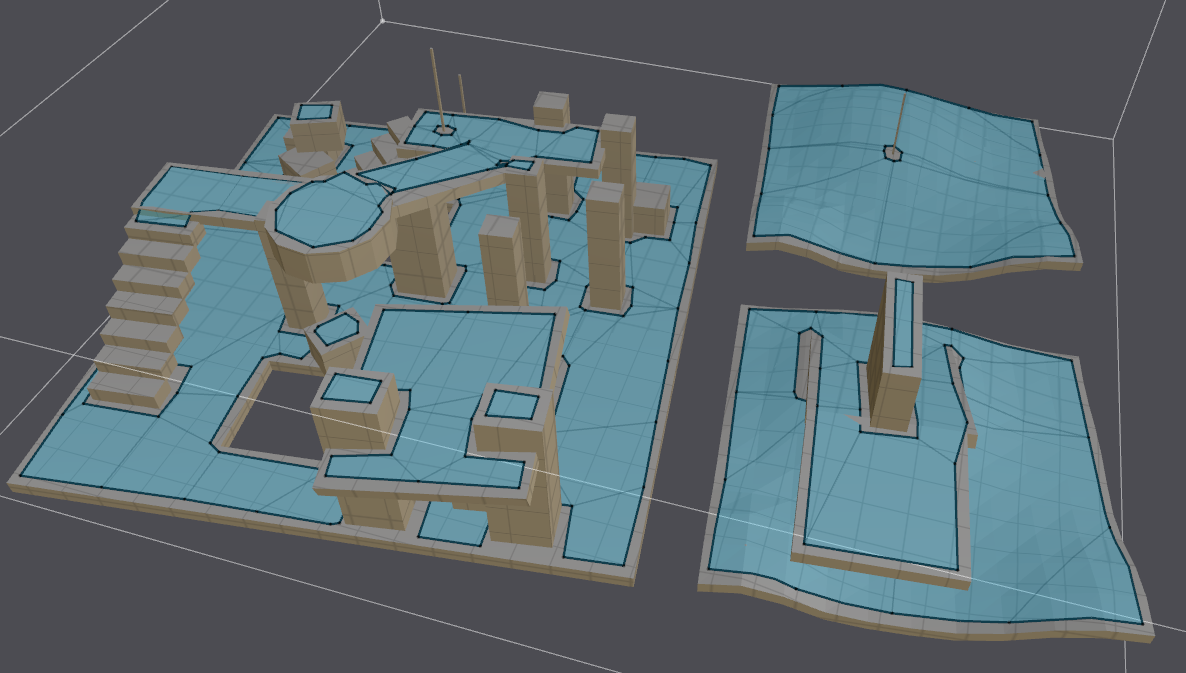

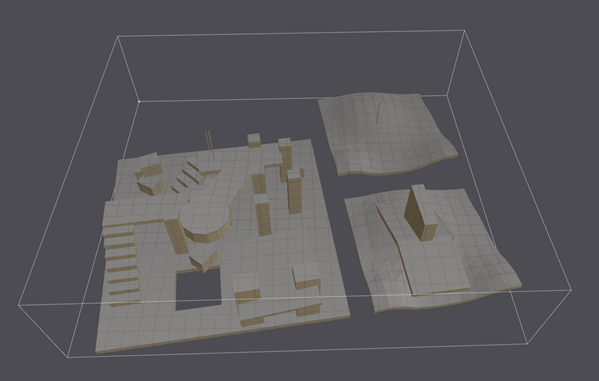

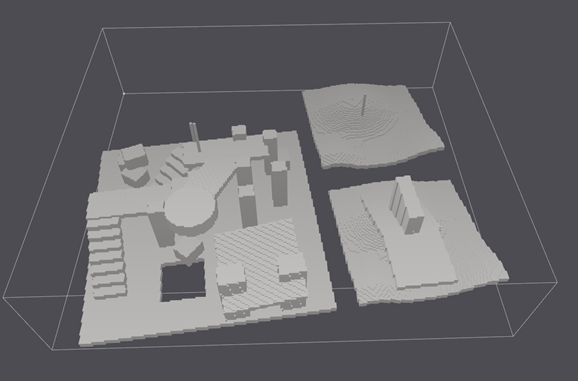

RecastNavigation的异步寻路库huangfeidian/AsyncDetourCrowd。 - 分布式场景管理库huangfeidian/distributed_scene。

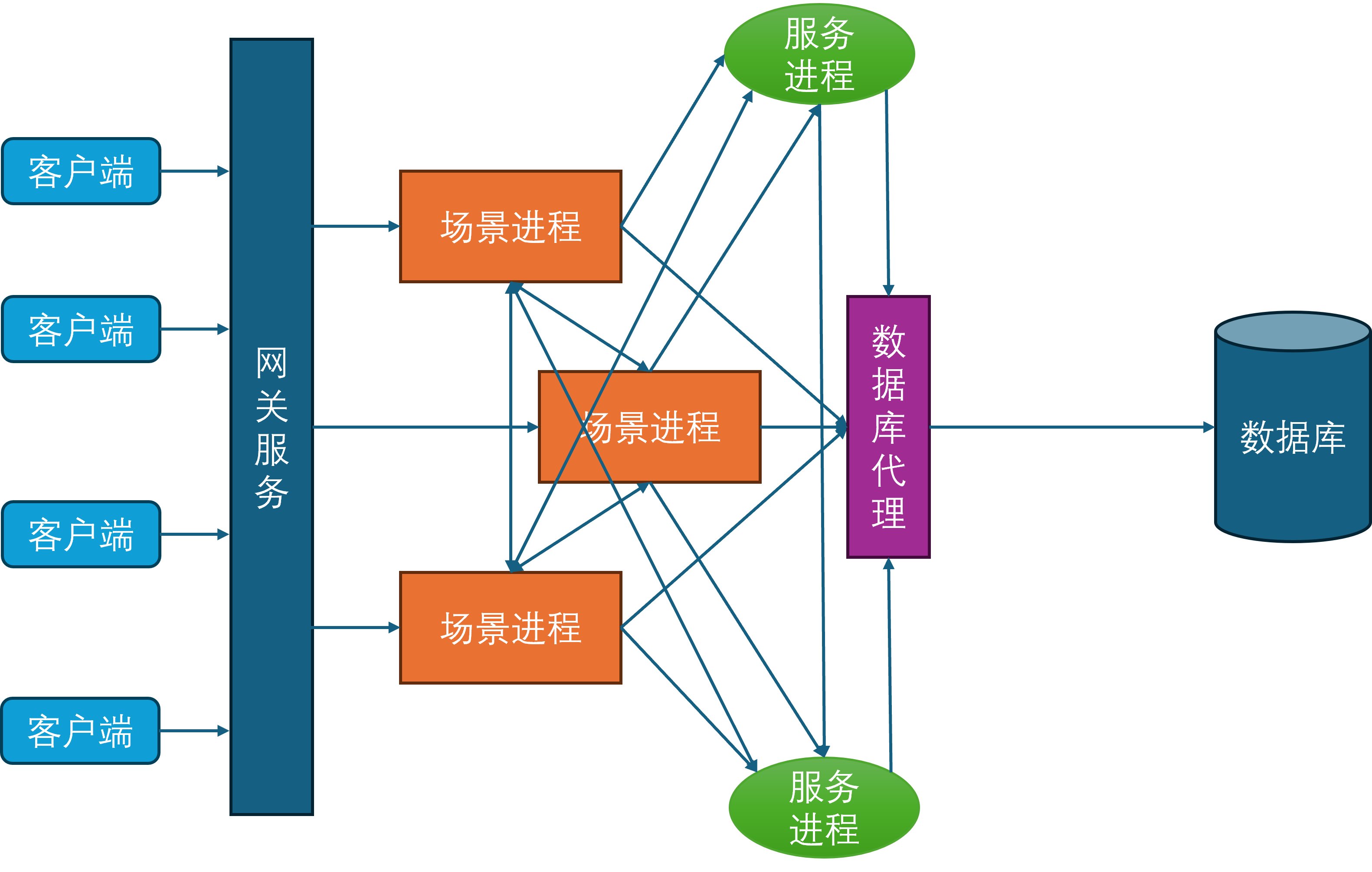

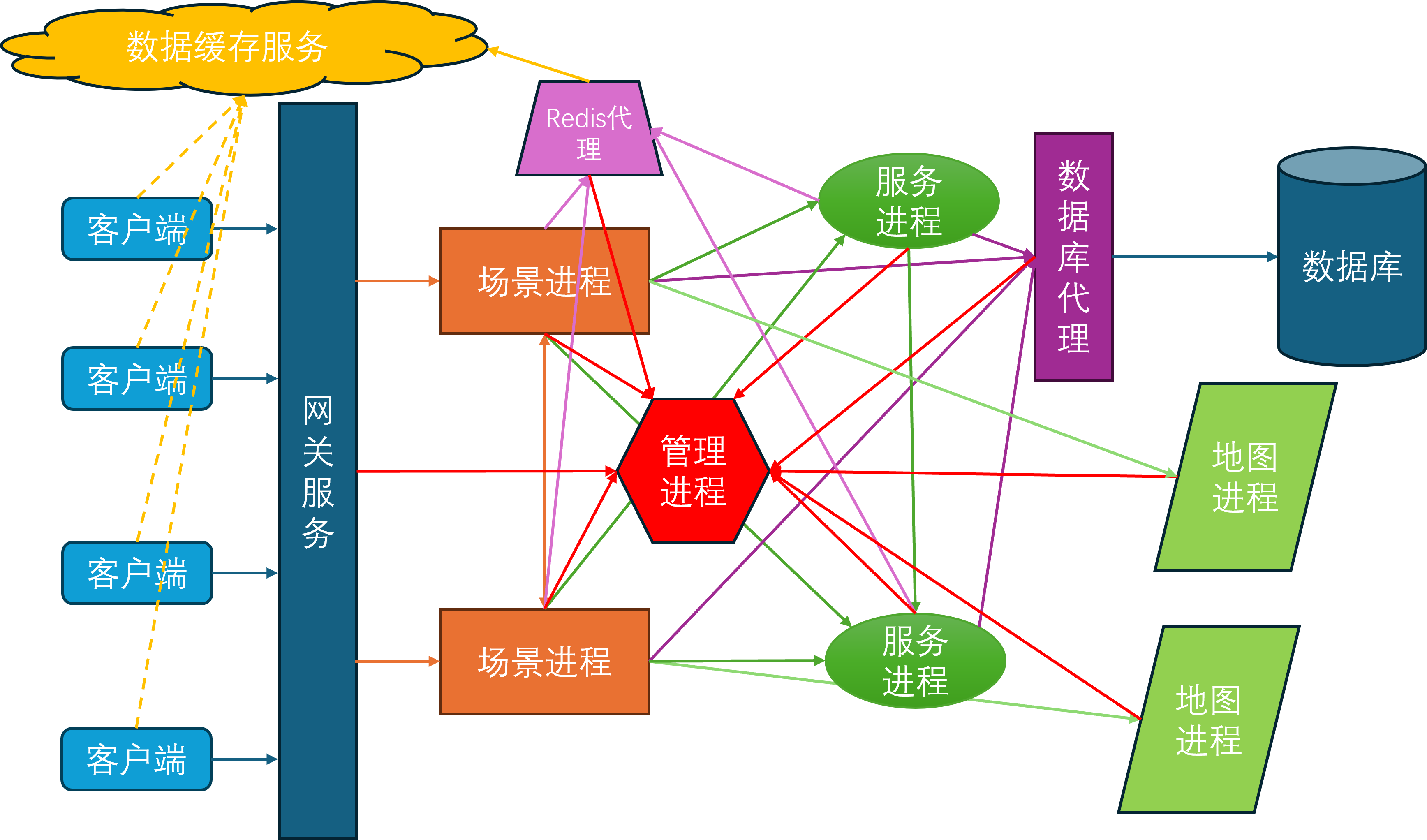

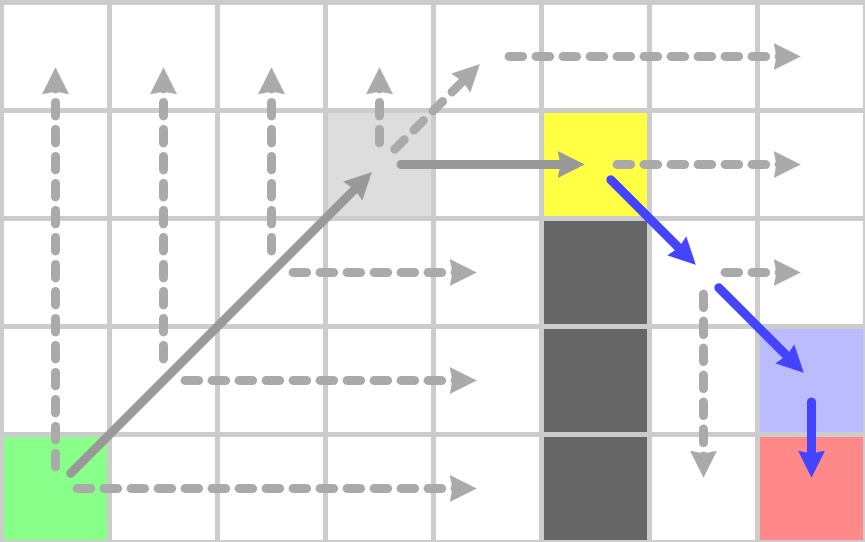

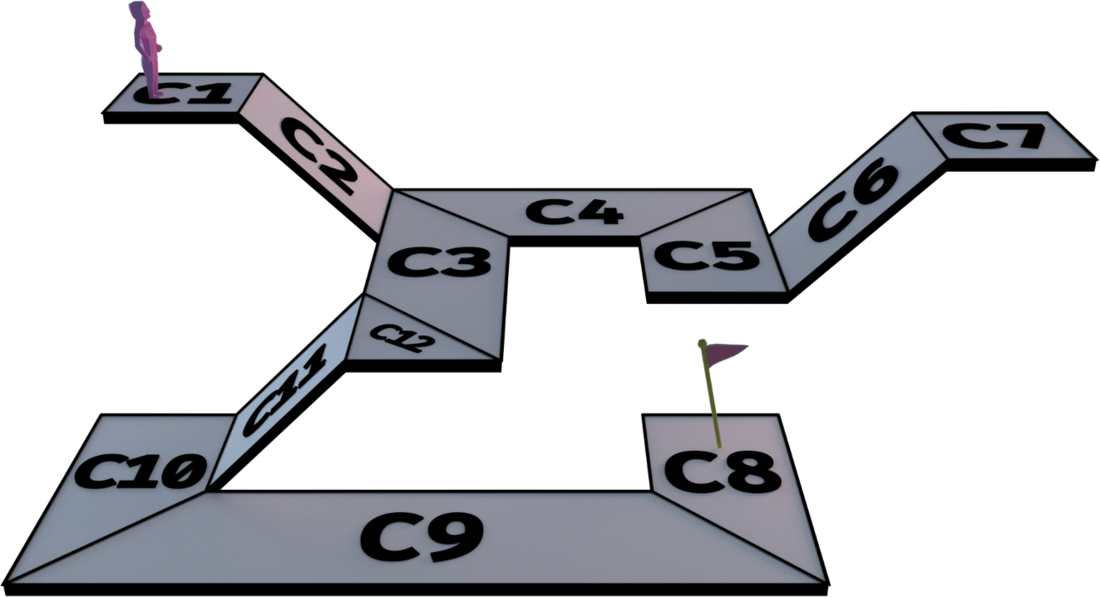

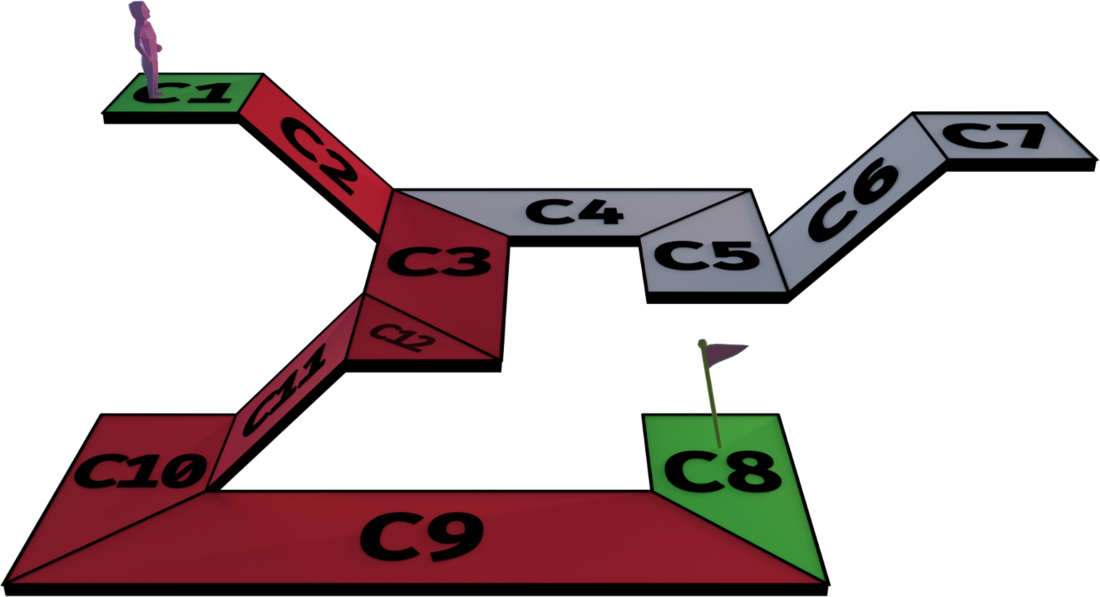

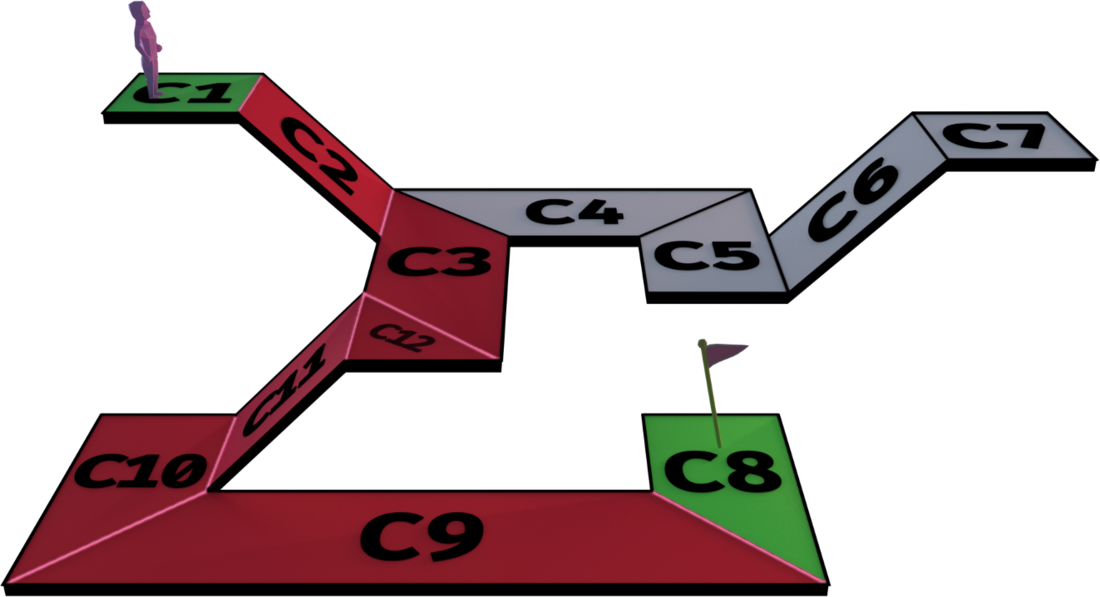

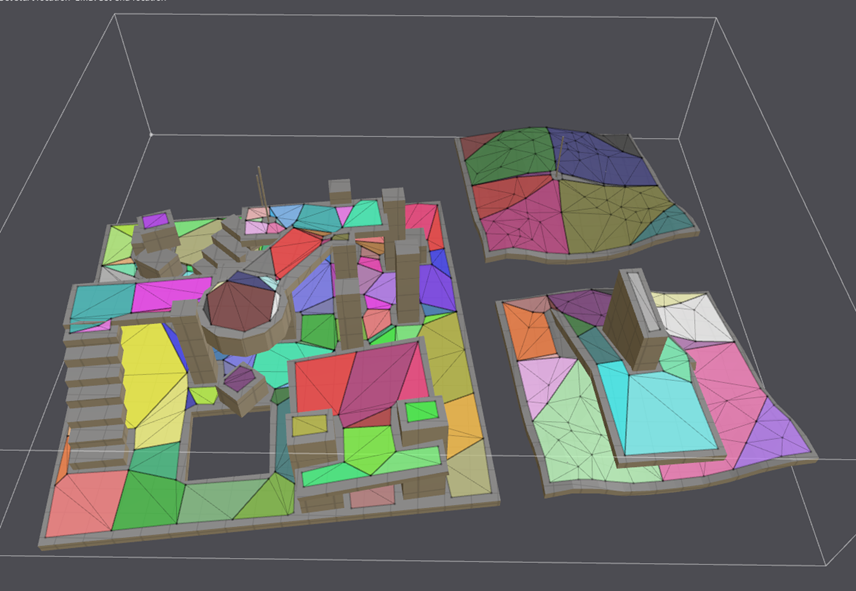

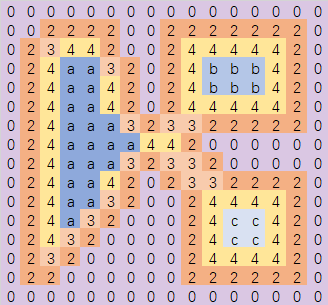

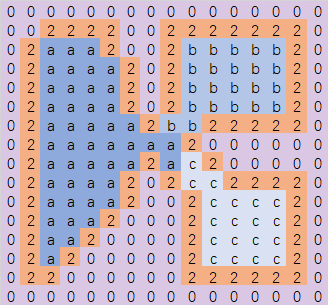

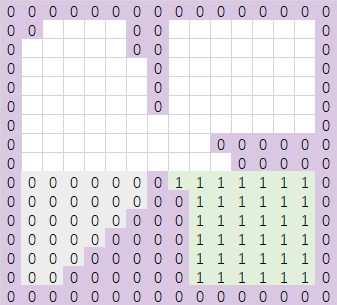

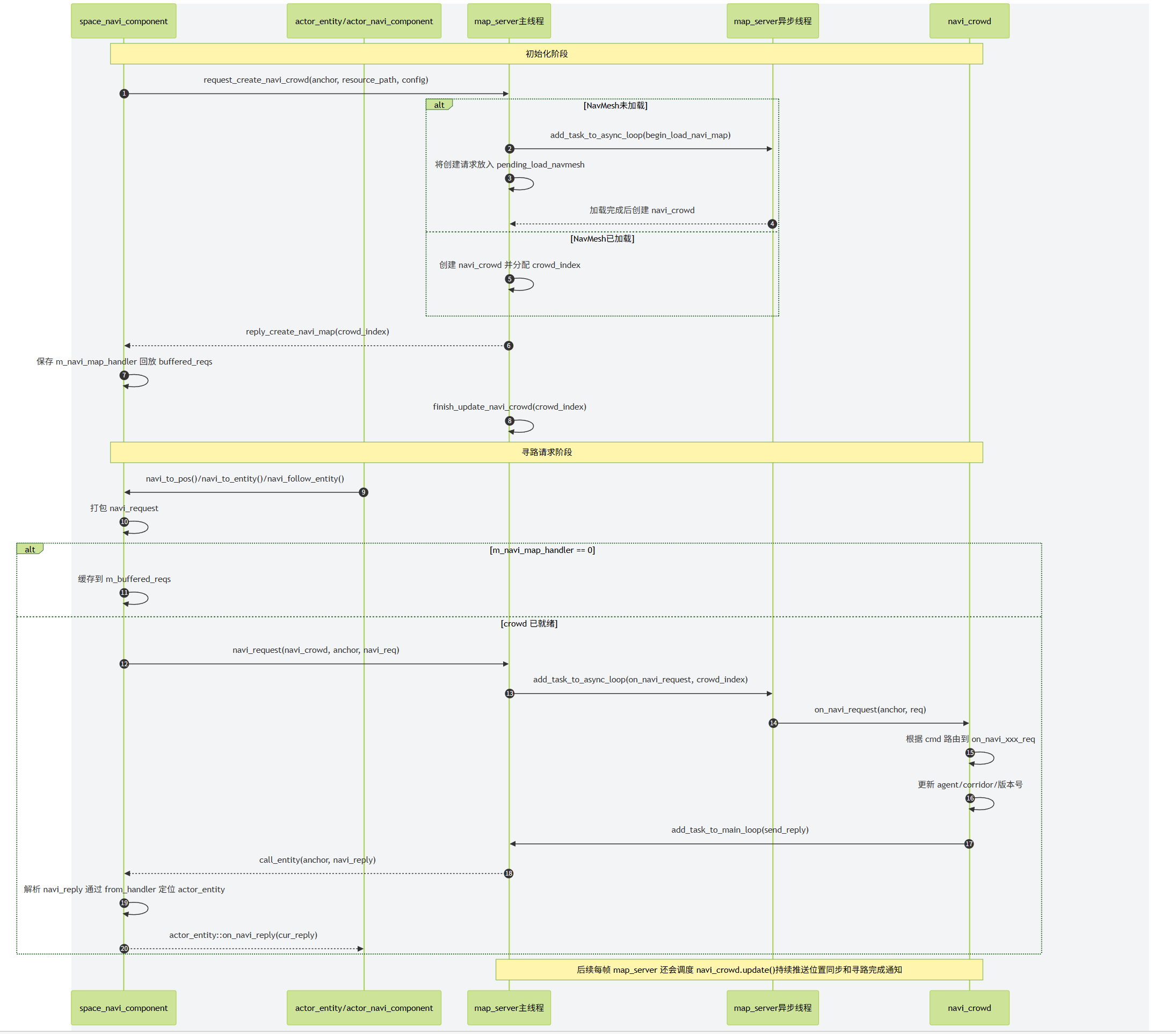

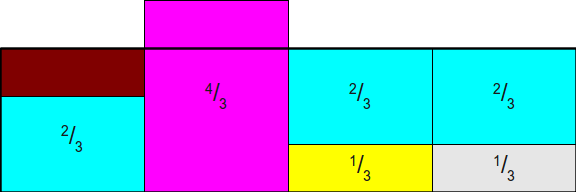

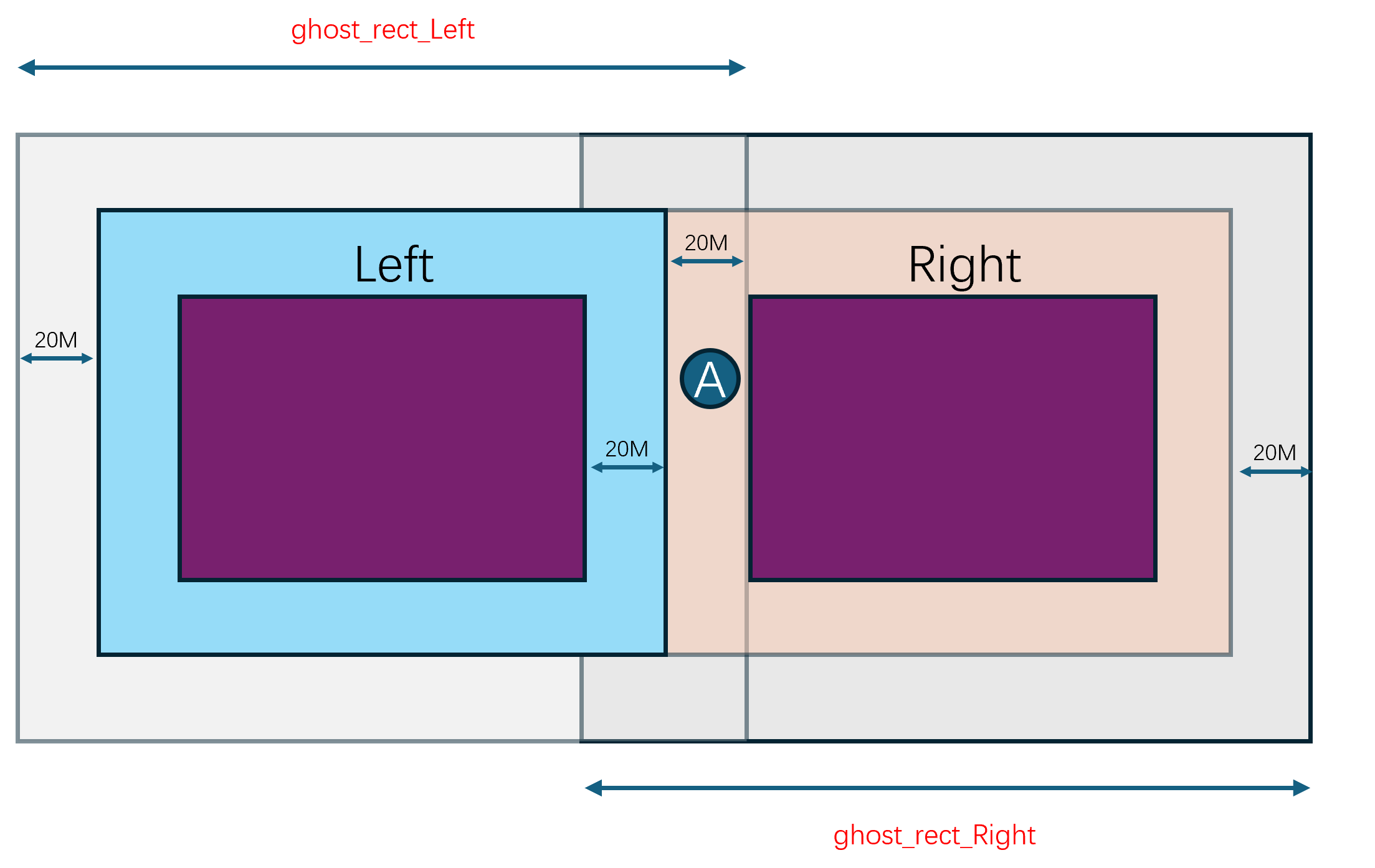

通过集成上述功能,并不断的完善基础的游戏玩法,mosaic_game逐渐有了一个完整的游戏服务器雏形,下图就是最终的服务端架构图:

在这个架构图里,除了外部提供的Mongo进程和Redis进程,主要的进程角色有:

- 管理进程

mgr_server,全局唯一,负责管理整体服务器集群的启动与退出,每个其他进程启动之后都需要向mgr_server注册。当一个进程完全可用之后,会通知mgr_server,然后mgr_server会将这个新进程的信息广播到现有的所有进程。同时mgr_server负责收集各个进程的负载信息,以方便执行一些进程角色的负载均衡 Redis代理进程redis_server,可以有多个,负责代理其他进程与Redis服务器进行交互,MongoDB代理进程db_server,可以有多个,负责代理其他进程与MongoDB数据库进行交互- 服务进程

service_server,可以有多个,作为承载社交等场景无关逻辑的容器,一个service_server内可以有多个service - 场景进程

space_server,可以有多个,作为承载游戏场景逻辑的容器,一个space_server内可以有多个space - 网关进程

gate_server,可以有多个,负责中转客户端与space_server之间的通信 - 地图进程

map_server,可以有多个,负责管理游戏场景中的地图数据,目前只承担了地图寻路功能,一个map_server可以为多个场景提供地图服务

由于当前的设计里还没有集成消息总线,所以目前的进程间互联图看上去乱糟糟的,等以后有空了去使用消息总线来简化一下连接拓扑:

- 所有进程都需要连接到

mgr_server - 每个

space_server和每个service_server都需要连接到一个redis_server和一个db_server - 每个

space_server都需要连接到所有的service_server,这样space_server上才知道任意一个service在哪一个service_server上 - 每两个

space_server之间都需要有一个连接 - 每个

space_server都需要连接到一个map_server - 每个

gate_server都需要连接到所有的space_server

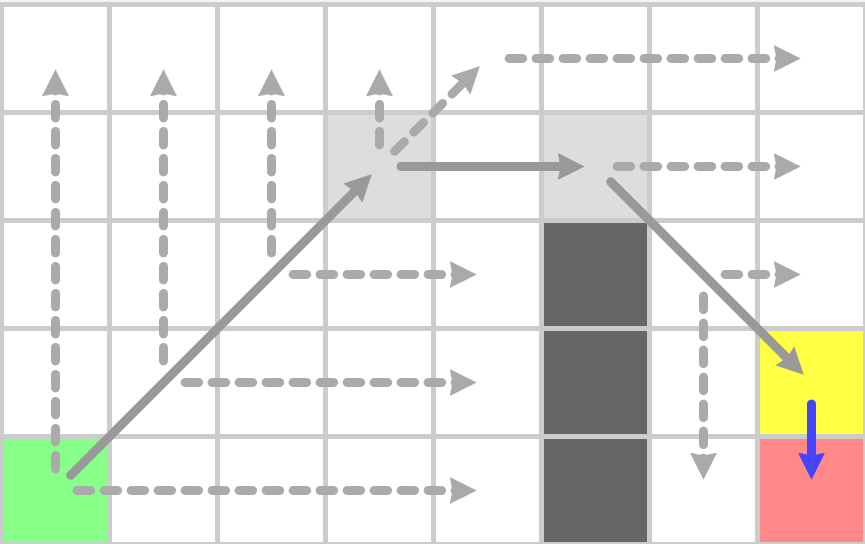

在当前的服务端架构之下,只有gate_server是外网可以直接访问的,此时客户端的登录和进入场景的流程是这样的:

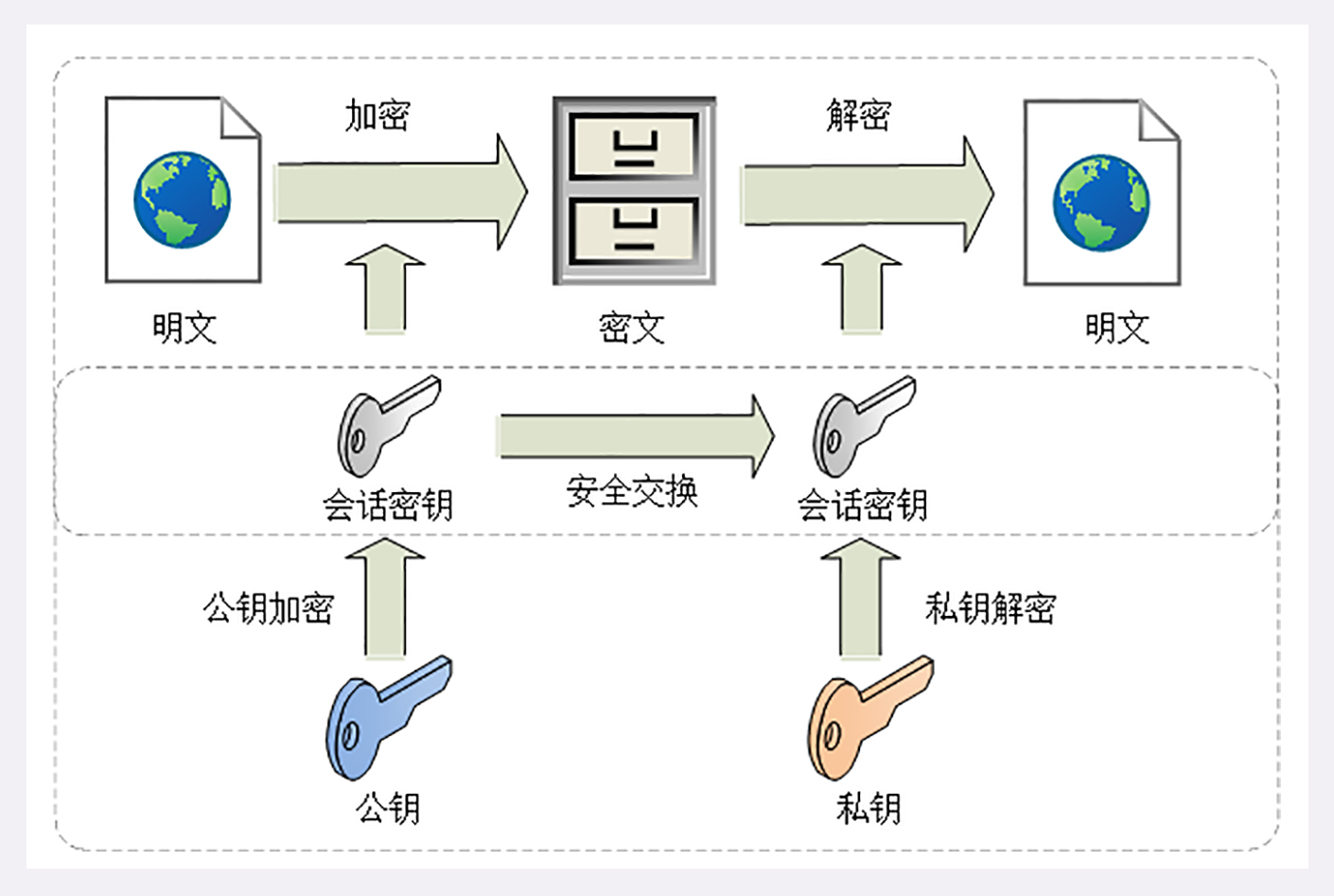

- 客户端以某种方式获取当前可用的

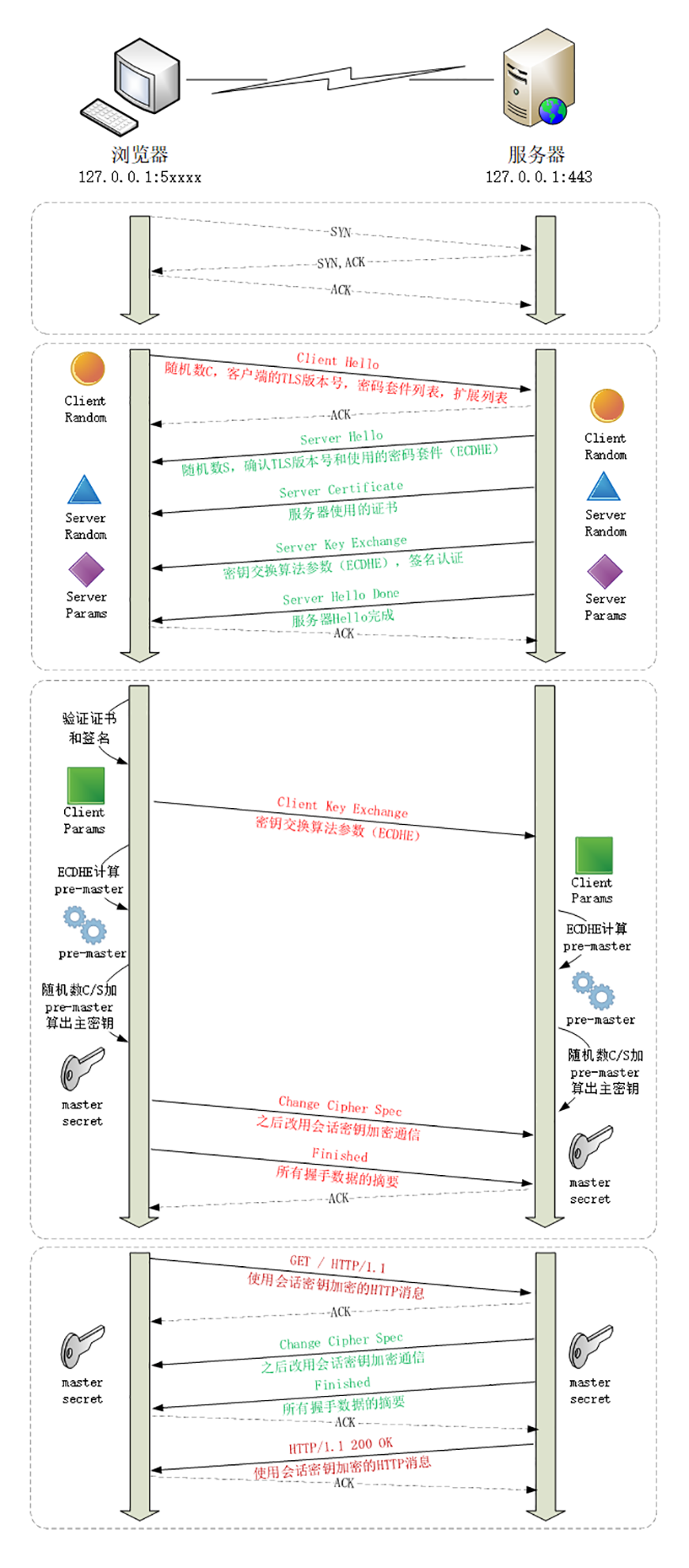

gate_server列表,随机选择一个gate_server发送会话建立请求 gate_server接受了这个连接之后,先执行加密握手,验证客户端的身份,创建一个会话,并商定后续的对称加密密钥gate_server随机选择一个space_server来创建这个客户端对应的服务端账号对象account_entity,然后属性同步系统会通知客户端来同步创建这个client_account,之后gate_server就会当作一个透明的通信中转代理的作用,因此不再提及- 客户端

client_account对象再发起一个登录请求,包含账号密码 space_server上的对应account_entity收到登录请求后,将登录请求转发到登录服务login_service上login_service收到登录请求,通过db_server查询账号密码是否正确,并将验证结果下发到account_entity,如果正确则记录当前账号已经在线space_server上的account_entity收到登录验证结果后,根据验证结果来通知客户端登录成功或失败,如果成功则开始往db_server请求这个账号的角色列表数据,并进行角色列表的下发- 客户端的

client_account收到角色列表数据后,通知服务端account_entity来选择其中一个来创建角色 space_server上的account_entity收到角色选择请求后,通过db_server查询所选角色的完整数据,并以此数据来创建角色对象player_entityspace_server上的player_entity创建完成之后,属性同步系统会将player_entity的属性同步到客户端,通知客户端创建角色对象client_player- 客户端的

client_player创建完成之后,可以发送RPC到space_server上的player_entity来申请进入特定场景 space_server上的player_entity收到进入场景请求后,会转发这个请求到场景服务space_service上space_service检查是否有对应的场景space_entity,如果没有则通过负载均衡选择一个space_server来创建一个新的场景space_entityspace_service找到合适的space_entity之后,将这个player_entity添加到space_entity的玩家列表中,同时通知player_entity开始切换到目标space_entity中,space_server上的player_entity开始执行场景切换,这个切换可能发生在同一个space_server上,也可能发生在不同的space_server上,如果是不同的space_server则还涉及到迁移流程,这里就不去展开了space_server上的player_entity进入到新的场景之后,属性同步系统会将新场景的属性同步到客户端,通知客户端切换到新的场景- 客户端的

client_player收到场景切换通知后,开始执行场景切换,并填充场景属性数据,通知space_server上的player_entity场景切换完成 space_server上的player_entity收到客户端场景切换完成通知后,AOI系统就开始工作,开始向客户端同步周围的其他entity的状态

上述流程虽然冗长,但是好在都是面条代码,逻辑复杂度都非常低,实现起来都比较简单,大概2022年就完成了这些内容。但是随着无缝迁移这个目标的引入,场景管理和属性同步系统就需要大修,这样就缝缝补补了一年多才搞好。现在看上去无缝迁移的核心问题都被解决了,但是后面我已经没有多少精力去做各种测试样例,所以不保证当前的mosaic_game能够像想象中的样子来正确支持无缝迁移, BUG应该会有很多。好在应该没有人会在实际项目中使用mosaic_game这个玩具,这样我就先心安理得的休息一段时间,等有空了再去补这些单元测试。

Mosaic Game 的进程生命周期

服务器启动流程

在之前的游戏服务器架构中我们已经初步的介绍了游戏服务器中的一些常见的进程角色,在mosaic_game中也基本复用了相关概念,在服务器集群中的进程角色有如下六种:

- 管理进程,作为全局的单点进程,第一个执行启动,用来提供进程注册和服务发现等功能,其代码在

roles/server/mgr_server目录下 - 场景进程,用来承载各种局内玩法,作为各种

entity与space的容器,其代码在roles/server/space_server目录下 - 网关进程,用来中转客户端与服务端之间的消息,其代码在

roles/server/gate_server目录下 - 服务进程,用来承载各种局外玩法,如聊天、好友、排行榜等,其代码在

roles/server/service_server目录下 - 数据库进程,用来转接游戏内对数据库的读写请求,其使用的后端数据库为

mongodb,其代码在roles/server/db_server目录下 - 缓存进程,用来承接游戏内对一些缓存数据的读写请求,其使用的后端缓存为

redis,其代码在roles/server/redis_server目录下 - 地图进程,用来处理一些场景的寻路、物理、

AOI等资源查询,其代码在roles/server/map_server下

在了解这些进程角色之后,就可以来介绍一下这些进程的启动流程了。我提供了一个非常简陋的服务器启动脚本deploy/scripts/run_servers.py,在这个python文件中,可以指定除了管理进程之外的其他进程的数量,同时还可以指定一些数据文件位置和一些外围服务所需的配置文件位置。在启动流程中,mgr_server会作为第一个进程起来,这个角色的进程只有一个实例。接下来依次会启动缓存进程、数据库进程、服务进程、网关进程、场景进程、地图进程:

cur_server_cmd = "nohup ../bin/mgr_server -c {0} -l {1} -n mgr_server > /dev/null 2>{1}/mgr_server.log &".format(options.config_path, options.log_path)

os.system(cur_server_cmd)

sleep(1)

for i in range(1):

cur_server_cmd = "nohup ../bin/redis_server -c {0} -l {1} -n redis_server_{2} -f {3} > /dev/null 2>{1}/redis_server_{2}.log &".format(options.config_path, options.log_path, i, options.redis_config)

os.system(cur_server_cmd)

for i in range(options.db_num):

cur_server_cmd = "nohup ../bin/db_server -c {0} -l {1} -n db_server_{2} -m {3} > /dev/null 2>{1}/db_server_{2}.log &".format(options.config_path, options.log_path, i, options.mongo_config)

os.system(cur_server_cmd)

for i in range(options.service_num):

cur_server_cmd = "nohup ../bin/service_server -c {0} -l {1} -n service_server_{2} -d {3}> /dev/null 2>{1}/service_server_{2}.log &".format(options.config_path, options.log_path, i, options.data_dir)

os.system(cur_server_cmd)

for i in range(options.gate_num):

cur_server_cmd = "nohup ../bin/gate_server -c {0} -l {1} -n gate_server_{2} > /dev/null 2>{1}/gate_server_{2}.log &".format(options.config_path, options.log_path, i)

os.system(cur_server_cmd)

for i in range(options.game_num):

cur_server_cmd = "nohup ../bin/space_server -c {0} -l {1} -n space_server_{2} -d {3}> /dev/null 2>{1}/space_server_{2}.log &".format(options.config_path, options.log_path, i, options.data_dir)

os.system(cur_server_cmd)

for i in range(options.map_server_num):

cur_server_cmd = "nohup ../bin/map_server -c {0} -l {1} -n map_server_{2} -d {3}> /dev/null 2>{1}/map_server_{2}.log &".format(options.config_path, options.log_path, i, options.data_dir)

os.system(cur_server_cmd)

sleep(1)

开头的第一个sleep(1)是为了保证在其他进程初始化时当前的mgr_server已经初始化好,后面的sleep(1)是为了在自动化测试的时候避免出现客户端启动了但是服务器还没有准备好的情况。

上述启动的各种server进程对应的逻辑类都继承自include/stub/json_stub.h里提供的json_stub,roles/basic_client里提供的客户端也继承自json_stub。同时这个json_stub又继承自basic_stub,这个basic_stub就是mosaic_game中所有进程角色的逻辑框架。在进程启动时,会通过相关配置文件来初始化这个逻辑框架运行所需的一些基础信息:

basic_stub::basic_stub(boost::asio::io_context& in_io_con, const stub_info& in_local_server, const stub_info& in_upstream_server, std::size_t in_timeout, utility::ts_t in_timer_check_gap_ms, std::uint32_t async_thread_num)

: m_io_context(in_io_con)

, m_local_server(in_local_server)

, m_upstream_server(in_upstream_server)

, m_logger(utility::get_logger(in_local_server.name))

, m_connection_timeout(in_timeout)

, m_timer_check_gap_ms(in_timer_check_gap_ms)

, m_local_name_ptr(std::make_shared<const std::string>(in_local_server.name))

, m_upstream_name_ptr(std::make_shared<const std::string>(in_upstream_server.name))

, m_start_ts(std::chrono::duration_cast<std::chrono::seconds>(std::chrono::system_clock::now().time_since_epoch()).count())

, m_anchor_name_prefix(in_local_server.name + utility::rpc_anchor::seperator)

, m_async_thread_num(async_thread_num)

{

m_asio_wrapper = std::make_unique<asio_wrapper>(m_io_context);

}

这里的in_local_server与in_upstream_server都是stub_info类型,用来明确一个进程的名字、类型与端口信息:

struct stub_info

{

std::string ip;

std::uint16_t port;

std::string rsa_key;

std::string name;

std::string upstream;

std::uint16_t http_port;

std::string type;

};

in_local_server代表当前进程的配置信息, in_upstream_server代表当前进程的上游进程对应的信息。除了mgr_server之外的其他进程在初始化的时候都需要传入对应的mgr_server作为上游管理进程,所以这里的ip,port字段其实只有mgr_server对应的stub_info才需要设置,其他时候保留为空。一个集群中的所有stub_info里的name不得重复,因为这个name字段就是此进程的唯一标识符。如果一个stub_info有上游,则upstream字段就是对应上游进程stub_info里的name。

在basic_stub的start函数里会使用create_router来初始化网络相关资源,然后根据upstream_server里的配置调用connect_to_server去对应的上游进程执行注册。同时调用start_accept根据local_server里的port,http_port来分别开启对正常游戏连接端口以及http调试端口的监听:

void basic_stub::start()

{

m_logger->info("server start");

create_router();

if (!m_upstream_server.name.empty())

{

add_named_server(m_upstream_server);

connect_to_server(m_upstream_server.name);

}

start_accept();

m_asio_wrapper->m_timer.expires_from_now(std::chrono::seconds(1));

m_asio_wrapper->m_timer.async_wait([this](const asio_error_code& error)

{

if(error)

{

m_logger->error("m_asio_wrapper timer error {}", error.message());

exit(1);

}

this->main_loop();

});

keep_alive_callback();

m_async_task_channels = std::vector<mutex_channel<std::function<void()>>>(m_async_thread_num);

m_async_threads.reserve(m_async_thread_num);

for(std::uint32_t i = 0; i< m_async_thread_num; i++)

{

m_async_threads.push_back(std::make_unique<std::thread>([this, i](){

this->async_worker_loop(i);

}));

}

}

第一次启动的时候先用一个1s间隔的计时器去延迟启动框架主循环,keep_alive_callback则负责定期向上游进程发送心跳包来执行进程保活,最后再按照配置去创造一些后台worker线程来作为线程池备用。注意主循环的代码是跑在asio相关线程上的,这里的worker线程并不会处理任何asio相关的任务,asio的线程是在main函数里创建的,不归basic_stub维护。下面就是gate_server在main函数里启动asio线程的相关代码,这里设置为threads为1代表只开启一个asio网络线程:

std::uint8_t const threads = 1;

std::size_t expire_time = 30000;

asio::io_context ioc{ threads };

server::gate_server cur_server = server::gate_server(ioc, local_stub_info, upstream_stub_info, expire_time, utility::ts_t(20));

cur_server.start();

std::vector<std::thread> v;

v.reserve(threads);

for (auto i = threads; i > 0; --i)

v.emplace_back(

[&ioc]

{

ioc.run();

});

for(auto& one_thread: v)

{

one_thread.join();

}

事实上除了上面介绍的这些手动创建的线程之外,还会有一个由spdlog自动创建的日志线程,由于这个线程完全由spdlog托管,所以这里就不去深究了。

不同的进程角色在启动时不仅要执行这个start函数,还有一些自己的额外逻辑去处理。所以在子类中,一般会提供一个自己的do_start函数来执行各自的初始化流程,并在这个流程中去调用basic_stub::start:

- 在

map_server的do_start最为简单,只是初始化了内部的地图设置

void map_server::do_start(const map_config& in_map_config)

{

m_map_config = in_map_config;

json_stub::start();

}

- 在

redis_server的do_start函数中,会构造线程池去连接后端的redis集群

void redis_server::do_start(const redis_config &redis_servers, std::uint8_t worker_num)

{

for (std::uint8_t i = 0; i < worker_num; i++)

{

auto cur_task_logger = utility::get_logger("redis_worker_" + std::to_string(i) );

workers.push_back(std::make_shared<redis_worker>(redis_servers, redis_task_channels, cur_task_logger));

}

for (auto &one_worker : workers)

{

work_threads.emplace_back([=]()

{ one_worker->run(); });

}

json_stub::start();

}

- 在

db_server中的do_start函数中,也会创建线程池去连接后端的mongodb集群

void db_server::do_start(const mongo_config& mongo_servers, std::uint8_t worker_num)

{

auto cur_task_logger = utility::get_logger("mongo_worker");

for (std::uint8_t i = 0; i < worker_num; i++)

{

workers.push_back(std::make_shared<mongo_worker>(mongo_servers, mongo_task_channels, cur_task_logger));

}

for (auto& one_worker : workers)

{

work_threads.emplace_back([=]()

{

one_worker->run();

});

}

json_stub::start();

}

- 在

space_server中的do_start函数,负责初始化entity系统、manager系统以及配置表系统

void space_server::do_start()

{

entity::entity_manager::instance().init();

json_stub::start();

manager_base::init_managers(this);

misc::stuff_utils::init();

global_config_mgr::instance();

}

- 在

service_server中的do_start函数,负责初始化自己的服务管理器以及本地单例manager

void service_server::do_start()

{

m_service_mgr = &service::service_manager::instance();

m_service_mgr->init();

manager_base::init_managers(this);

json_stub::start();

}

- 在

mgr_server中的do_start函数,负责启动一个创建service的计时器

void mgr_server::do_start()

{

json_stub::start();

m_service_create_check_timer = add_timer_with_gap(std::chrono::milliseconds(1000), [this]() {

check_to_create_service();

});

}

- 在

gate_server中的do_start函数, 负责启动一个计时器去定期清理无效的会话

void gate_server::do_start()

{

json_stub::start();

m_session_remove_timer = add_timer_with_gap(std::chrono::seconds(1), [this]()

{

this->check_remove_session();

});

}

服务器主循环

在框架主循环中main_loop,有五种信息需要处理,分别是:

- 普通网络消息

network::msg_task,对应的是服务器进程之间以及客户端与网关进程之间的业务消息 http消息http_utils::request,对应的是此进程开启的http服务接收的消息,目前主要作为调试命令使用- 业务计时器超时消息,对应的是游戏业务逻辑内的各种计时器,而不是

asio提供的计时器 - 连接控制消息

connection_ctrl_msg,对应的是各进程之间的tcp连接的建立与断开消息 - 主线程回调消息

std::function<void()>,对应的是一些非主线程执行的业务回调,例如http请求以及异步线程池处理的相关业务

void basic_stub::main_loop()

{

m_logger->flush();

auto cur_msg_handler = [this](std::shared_ptr<network::net_connection> con, const network::msg_task& one_msg)

{

return this->on_msg(con, one_msg);

};

auto cur_http_handler = [this](const http_utils::request& req, msg_seq_t req_seq)

{

return this->on_http_request(req, req_seq);

};

auto cur_conn_ctrl_msg_handler = [this](const network::connection_ctrl_msg& msg)

{

return this->on_conn_ctrl_msg(msg);

};

std::uint64_t loop_total_ms = 0;

do

{

on_new_frame();

poll_mainloop_tasks();

auto poll_begin_ts = utility::timer_manager::now_ts();

m_router->poll_msg(cur_msg_handler);

http::http_request_mgr::poll_request(cur_http_handler);

poll_timers(utility::timer_manager::now_ts());

m_router->poll_ctrl_msg(cur_conn_ctrl_msg_handler);

auto poll_end_ts = utility::timer_manager::now_ts();

loop_total_ms += poll_end_ts - poll_begin_ts;

if (poll_end_ts - poll_begin_ts > m_high_load_threshold * m_timer_check_gap_ms)

{

continue;

}

else

{

break;

}

}while (!m_stopped);

if(m_stopped)

{

// 暂时忽略进程退出相关代码

}

m_asio_wrapper->m_timer.expires_from_now(std::chrono::milliseconds(std::max(int64_t(1), int64_t(m_timer_check_gap_ms) - int64_t(loop_total_ms))));

m_asio_wrapper->m_timer.async_wait([this](const asio_error_code& error)

{

(void)error;

this->main_loop();

});

return;

}

在单次主循环处理完成之后,会检查此次主循环的执行时间。如果执行时间小于指定的阈值m_high_load_threshold * m_timer_check_gap_ms,则说明当前要处理的任务比较多,负载比较高,此时会继续执行一次逻辑主循环,直到单次逻辑主循环消耗的时间小于指定值或者外部发起了关服请求。在本批次的逻辑主循环执行完成之后,继续创建一个计时器去延迟执行下一次主循环,这里的延迟设置是为了尽量的保证主循环开始的间隔保持为预设的m_timer_check_gap_ms,同时避免主循环空跑造成的性能浪费。

由于网络相关逻辑由network_router管理,与basic_stub的业务逻辑相解耦,所以在处理这些网络消息的时候,外部需要提供相关的回调函数,也就是上面代码中构造的三个msg_handler。这里的on_msg负责处理进程之间的业务消息,on_ctrl_msg负责处理进程间的连接建立与断开,这两个函数的细节将在后续的网络细节相关章节中介绍。on_http_request则负责处理本地http服务器接收到的http请求,这里的http请求主要是一些运维指令相关,内部会调用on_gm_cmd去分发这些运维指令:

void json_stub::on_http_request(const http_utils::request& req, msg_seq_t req_seq)

{

std::string cmd;

json param;

try

{

auto cur_json = json::parse(req.body);

cur_json.at("cmd").get_to(cmd);

cur_json.at("param").get_to(param);

}

catch(const std::exception& e)

{

auto reply = fmt::format("fail to parse http req for req uri {} content {} req_seq {} error {}", req.uri, req.body, req_seq, e.what());

m_logger->info(reply);

on_http_reply(req_seq, reply);

return;

}

on_gm_cmd(cmd, param, req_seq);

}

服务器间连接

目前的流程中,所有的进程角色在启动后都会向其上游角色发起连接。上游连接建立成功之后,会在对应的on_connect函数里执行一些自定义的逻辑,其中最重要的就是通过set_stub_info这个指令告诉mgr_server当前连接发起方的进程角色是什么:

void json_stub::on_connect(std::shared_ptr<network::net_connection> connection)

{

basic_stub::on_connect(connection);

const auto& cur_connection_dest = get_connection_name(connection.get());

auto cur_server_iter = m_named_servers.find(*cur_connection_dest);

if (cur_server_iter == m_named_servers.end())

{

close_connection(connection);

return;

}

json request_msg_1;

request_msg_1["cmd"] = "set_stub_info";

json local_info;

local_info["stub_info"] = m_local_server;

request_msg_1["param"] = local_info;

std::shared_ptr<std::string> msg_ptr = std::make_shared<std::string>(request_msg_1.dump());

m_router->push_msg(connection.get(), m_local_name_ptr, cur_connection_dest, msg_ptr, enums::packet_cmd_helper::encode(enums::packet_cmd::server_control, 0));

}

一个进程角色向mgr_server汇报set_stub_info后并不代表这个进程角色已经可用,只有其向mgr_server发送report_server_ready之后这个进程才能作为完整的角色来提供服务。gate_server的功能比较单一,因此在其on_connect函数中会直接向mgr_server汇报当前进程已经可用

void gate_server::on_connect(std::shared_ptr<network::net_connection> connection)

{

json_stub::on_connect(connection);

auto connection_name_ptr = connection->get_connection_name();

auto cur_server_iter = m_named_servers.find(*connection_name_ptr);

if (cur_server_iter == m_named_servers.end())

{

return;

}

if (cur_server_iter->second.type == "space_server")

{

m_connected_gameservers[*connection_name_ptr] = connection;

}

else if(cur_server_iter->second.type == "mgr_server")

{

json report_ready_info, temp_param;

report_ready_info["cmd"] = "report_server_ready";

temp_param["server_name"] = *m_local_name_ptr;

temp_param["server_type"] = "gate_server";

report_ready_info["param"] = temp_param;

m_router->push_msg(connection.get(), m_local_name_ptr, {}, std::make_shared<std::string>(report_ready_info.dump()), enums::packet_cmd_helper::encode(enums::packet_cmd::server_control, 0));

}

}

与gate_server类似的进程角色还有map_server,db_server,redis_server,都是上游连接建立之后立即汇报server_ready。但是space_server和service_server就复杂了一些,他们不能在连接到mgr_server之后立即汇报就绪状态,因为这些进程角色需要与其他进程角色相配合才能提供完整的角色服务。这些角色的进程在连接建立之后会向mgr_server请求资源进程角色列表:

void service_server::request_allocate_resource_server(const std::string& resource_server_type)

{

json request_msg, request_param;

request_msg["cmd"] = "request_allocate_resource";

request_param["from_server_name"] = m_local_server.name;

request_param["from_server_type"] = m_local_server.type;

request_param["resource_server_type"] = resource_server_type;

request_msg["param"] = request_param;

auto msg_ptr = std::make_shared<std::string>(request_msg.dump());

auto remote_name_ptr = std::make_shared<std::string>(m_upstream_server.name);

if (!m_router->push_msg(m_local_name_ptr, remote_name_ptr, msg_ptr, enums::packet_cmd_helper::encode(enums::packet_cmd::server_control, 0)))

{

add_timer_with_gap(std::chrono::milliseconds(2 * 1000), [resource_server_type, this]() {

request_allocate_resource_server(resource_server_type);

});

}

}

void service_server::on_connect(std::shared_ptr<network::net_connection> connection)

{

json_stub::on_connect(connection);

auto connection_name_ptr = get_connection_name(connection.get());

if (*connection_name_ptr == m_upstream_server.name)

{

request_allocate_resource_server("db_server");

request_allocate_resource_server("redis_server");

}

}

在mgr_server中接收到这些资源申请请求之后,会选取当前资源池中连接数最少的进程来返回:

std::string mgr_server::allocate_resource_svr(std::unordered_map<std::string, resource_stub_info>& resource_svrs, const std::string& from_server_name)

{

if(resource_svrs.empty())

{

return {};

}

std::vector<std::pair<std::string, std::size_t>> resource_server_loads;

resource_server_loads.reserve(resource_svrs.size());

for (const auto& one_pair : resource_svrs)

{

if(!one_pair.second.ready)

{

continue;

}

resource_server_loads.emplace_back(one_pair.first, one_pair.second.connected_svrs.size());

}

std::sort(resource_server_loads.begin(), resource_server_loads.end(), [](const std::pair<std::string, std::size_t>& a, const std::pair<std::string, std::size_t>& b)

{

return a.second < b.second;

});

auto dest_resource_svr = resource_server_loads[0].first;

resource_svrs[dest_resource_svr].connected_svrs.insert(from_server_name);

return dest_resource_svr;

}

当space_server与service_server收到分配的资源进程列表之后,会主动的向这些资源进程发起连接:

void space_server::on_reply_allocate_resource(std::shared_ptr<network::net_connection> con, std::shared_ptr<const std::string> dest, const json& msg)

{

stub_info cur_resource_svr;

std::string resource_server_type;

std::string cur_err;

try

{

msg.at("resource_server_type").get_to(resource_server_type);

msg.at("errcode").get_to(cur_err);

if(!cur_err.empty())

{

m_logger->warn("on_reply_allocate_resource errcode {}", cur_err);

add_timer_with_gap(std::chrono::milliseconds(2 * 1000), [resource_server_type, this]() {

request_allocate_resource_server(resource_server_type);

});

return;

}

msg.at("resource_svr").get_to(cur_resource_svr);

}

catch (std::exception& e)

{

m_logger->warn("on_reply_allocate_resource msg invalid {} error {}", msg.dump(4), e.what());

return;

}

m_named_servers[cur_resource_svr.name] = cur_resource_svr;

connect_to_server(cur_resource_svr.name);

}

当各项进程角色连接数量得到满足之后,就会向mgr_server汇报自己这个进程已经可用了。

void space_server::on_connect(std::shared_ptr<network::net_connection> connection)

{

json_stub::on_connect(connection);

auto connection_name_ptr = get_connection_name(connection.get());

if (*connection_name_ptr == m_upstream_server.name)

{

request_allocate_counter("online_session");

request_allocate_resource_server("db_server");

request_allocate_resource_server("redis_server");

}

else

{

auto cur_server_iter = m_named_servers.find(*connection_name_ptr);

if (cur_server_iter == m_named_servers.end())

{

return;

}

if (cur_server_iter->second.type == "db_server")

{

m_router->link_anchor_to_connection("db_server", connection.get());

m_logger->info("m_connected_resource_servers add {}", *connection_name_ptr);

}

else if(cur_server_iter->second.type == "redis_server")

{

m_router->link_anchor_to_connection("redis_server", connection.get());

m_logger->info("m_connected_resource_servers add {}", *connection_name_ptr);

}

else

{

return;

}

if(m_router->has_anchor("redis_server") && m_router->has_anchor("db_server"))

{

json report_ready_info, temp_param;

report_ready_info["cmd"] = "report_server_ready";

temp_param["server_name"] = *m_local_name_ptr;

temp_param["server_type"] = m_local_server.type;

report_ready_info["param"] = temp_param;

m_router->push_msg( m_local_name_ptr,std::make_shared<std::string>(m_upstream_server.name), std::make_shared<std::string>(report_ready_info.dump()), enums::packet_cmd_helper::encode(enums::packet_cmd::server_control, 0));

}

}

}

当space_server变成ready之后,会向gate_server进行广播:

// void mgr_server::on_report_server_ready(std::shared_ptr<network::net_connection> con, std::shared_ptr<const std::string> dest, const json& msg)

json broadcast_msg, param;

std::vector<stub_info> temp_stub_infos;

temp_stub_infos.push_back(m_named_servers[server_name]);

broadcast_msg["cmd"] = "notify_server_ready";

param["servers"] = temp_stub_infos;

broadcast_msg["param"] = param;

auto cur_info = std::make_shared<std::string>(broadcast_msg.dump(4));

broadcast_to_space_svrs(cur_info);

if(server_type == "space_server")

{

broadcast_to_gate_svrs(cur_info);

}

gate_server收到space_server的ready消息之后,会主动发起一个连接:

void gate_server::on_notify_server_ready(std::shared_ptr<network::net_connection> con, std::shared_ptr<const std::string> dest, const json& msg)

{

std::vector<stub_info> ready_servers;

try

{

msg.at("servers").get_to(ready_servers);

}

catch (std::exception& e)

{

m_logger->error("on_notify_server_ready fail to parse {} error {}", msg.dump(4), e.what());

return;

}

for (const auto& one_server : ready_servers)

{

if (one_server.type != "space_server")

{

continue;

}

m_named_servers[one_server.name] = one_server;

if (!m_router->has_connection_with_name(one_server.name))

{

connect_to_server(one_server.name);

}

}

}

当一个service_server变成ready之后,mgr_server并不会立即往这些service_server分配service去创建,而是等待一个计时器超时再去创建:

void mgr_server::check_to_create_service()

{

if(m_stopped)

{

return;

}

if (m_service_create_check_timer.valid())

{

m_timer_mgr.cancel_timer(m_service_create_check_timer);

m_service_create_check_timer.reset();

}

std::uint32_t need_server_num = 0;

for (const auto& one_pair : m_services_to_create)

{

need_server_num = std::max(need_server_num, one_pair.second);

}

std::vector<std::string> ready_service_servers;

for (const auto& one_pair : m_service_stub_infos)

{

if (one_pair.second.ready)

{

ready_service_servers.push_back(one_pair.first);

}

}

if (need_server_num > ready_service_servers.size())

{

// m_logger->error("need_server_num {} is larger than m_service_stub_infos size {}", need_server_num, ready_service_servers.size());

add_timer_with_gap(std::chrono::milliseconds(1000), [this]() {

check_to_create_service();

});

return;

}

if (m_min_service_server_num > ready_service_servers.size())

{

// m_logger->error("m_min_service_server_num {} is larger than m_service_stub_infos size {}", m_min_service_server_num, ready_service_servers.size());

add_timer_with_gap(std::chrono::milliseconds(1000), [this]() {

check_to_create_service();

});

return;

}

std::unordered_map<std::string, std::vector<std::string>> temp_services_on_server;

std::uint32_t temp_counter = 0;

for (const auto& one_service_cluster : m_services_to_create)

{

for (std::uint32_t i = 0; i < one_service_cluster.second; i++)

{

auto cur_select_server = ready_service_servers[temp_counter % ready_service_servers.size()];

temp_services_on_server[cur_select_server].push_back(one_service_cluster.first);

request_create_service_on_server(cur_select_server, one_service_cluster.first, {});

temp_counter++;

}

}

}

这样做的目的是为了等待所有的service_server都启动好之后再去平摊各种局外服务的创建,否则就可能出现所有服务都创建在同一个service_server的情况。

服务器关闭流程

服务器关闭指令需要运维人员手动去操作,通过mgr_server的http端口进行通知,在deploy/scripts/stop_servers.py中我们通过curl指令来执行关服通知:

with open(options.config_path, "r") as config_file:

config_detail = json.load(config_file)

mgr_server_ip = config_detail["mgr_server"]["ip"]

mgr_server_http_port = config_detail["mgr_server"]["http_port"]

curl_cmd = "curl -H \"Accept: application/json\" -H \"Content-type: application/json\" -X GET -d '{\"cmd\": \"stop\", \"param\": {}}' "

curl_cmd += "http://" + mgr_server_ip + ":" + str(mgr_server_http_port) + "/gm_cmd/GET/"

os.system(curl_cmd)

mgr_server接收到这个运维指令之后,会调用notify_stop来开始执行关服逻辑:

void json_stub::on_gm_cmd(const std::string& cmd, const json& param, msg_seq_t req_seq)

{

if(cmd == "stop")

{

notify_stop();

json reply_json;

reply_json["params"] = param;

auto reply_str = reply_json.dump() + "\r\n";

http::http_request_mgr::finish_request(req_seq, reply_str);

}

}

void basic_stub::notify_stop()

{

if(m_stopped)

{

m_logger->error("notify stop while already stopping");

return;

}

m_logger->warn("notify_stop");

m_stopped = true;

m_stop_report_ts = m_stop_begin_ts = std::chrono::steady_clock::now();

stop_begin();

}

void basic_stub::stop_begin()

{

m_logger->info("stop_begin");

}

在notify_stop中我们会设置m_stopped这个bool变量为true,代表当前进程正在关服流程中,至于具体的关服细节则依赖于各个进程角色的重载实现。

在进程主循环中,如果发现自己是关服过程中,则开始定期检查关服过程是否已经执行完毕,如果执行完毕则开始关闭所有的监听端口以及计时器,等待io_service的自然退出:

// void basic_stub::main_loop()

if(m_stopped)

{

auto stop_check_ts = std::chrono::steady_clock::now();

bool with_stop_log = false;

std::chrono::duration<double> stop_elapsed_time = stop_check_ts - m_stop_report_ts;

if(stop_elapsed_time.count() > m_stop_alert_duration)

{

m_logger->warn("stop check fail after {} seconds", stop_elapsed_time.count());

with_stop_log = true;

m_stop_report_ts = stop_check_ts;

}

if(check_stop_finish(with_stop_log))

{

on_stop_finish();

return;

}

}

void basic_stub::on_stop_finish()

{

m_router->disconnect_all();

m_asio_wrapper->m_acceptor.close();

m_asio_wrapper->m_timer.cancel();

m_http_server->stop();

m_logger->warn("on_stop_finish");

}

这里的check_stop_finish主要检查其他进程发起的连接以及http连接是否为0,都是0的时候再等待所有的线程池退出:

bool basic_stub::check_stop_finish(bool with_log)

{

std::uint32_t has_upstream_server = 0;

if(!m_upstream_server.name.empty())

{

has_upstream_server = 1;

}

if(m_router->get_active_connection_count() > has_upstream_server)

{

if(with_log)

{

m_logger->debug("router->get_active_connection_count() fail remain {}", m_router->get_active_connection_count());

}

return false;

}

if(m_http_server)

{

if(m_http_server->get_session_count() != 0)

{

if(with_log)

{

m_logger->debug("_http_server->get_session_count() fail");

}

return false;

}

}

if(m_finished_async_thread_counter != m_async_thread_num)

{

if(with_log)

{

m_logger->debug("m_finished_async_thread_counter fail");

}

return false;

}

for(auto& one_thread_ptr : m_async_threads)

{

one_thread_ptr->join();

}

m_async_threads.clear();

return true;

}

从前面小章节可知各种角色的服务器的就绪状态其实是有依赖的,这种逻辑依赖不仅影响服务器的启动,还影响服务器的关闭,强制关闭所有的连接可能会导致服务器状态没有正确的保存到数据库。所以mgr_server关服的时候是分阶段来通知各个进程角色去退出的的,阶段变量存储在m_stop_stage中,首先通知的是gate_server:

void mgr_server::stop_begin()

{

json_stub::stop_begin();

json sync_msg;

sync_msg["cmd"] = "notify_stop";

sync_msg["param"] = json::object_t();

auto cur_stop_msg = std::make_shared<std::string>(sync_msg.dump());

// 先通知所有的gate 退出

broadcast_to_gate_svrs(cur_stop_msg);

m_stop_stage = stop_stage::wait_gate_server_destroy;

return;

}

gate_server在接收到这个的通知请求之后,会通知所有的客户端服务器关闭,让客户端主动的断开所有的连接,同时拒绝掉后续的所有新客户端发出来的连接:

void gate_server::stop_begin()

{

json_stub::stop_begin();

std::vector<std::string> temp_entities;

for(const auto& one_pair: m_eid_to_conn_id)

{

temp_entities.push_back(one_pair.first);

}

json::object_t notify_msg;

notify_msg["msg"] = "server_close";

for(const auto& one_dest: temp_entities)

{

request_client_close_impl(one_dest, notify_msg);

}

add_timer_with_gap(std::chrono::seconds(1), [this]()

{

m_router->disconnect_all();

});

}

这里会同时开启一个延迟计时器去强制关闭所有的连接,包括客户端、space_server、mgr_server。mgr_server会收到gate_server的断线通知,当所有的gate_server都退出之后,开始进入第二阶段,通知所有玩家都下线:

void mgr_server::on_gate_all_destroyed()

{

m_logger->warn("on_gate_all_destroyed");

if(m_stop_stage != stop_stage::wait_gate_server_destroy)

{

return;

}

m_stop_stage = stop_stage::wait_account_logout;

json sync_msg;

sync_msg["cmd"] = "request_logout_all_accounts";

sync_msg["param"] = json::object_t();

auto cur_stop_msg = std::make_shared<std::string>(sync_msg.dump());

// 通知 所有的space server account准备logout

broadcast_to_space_svrs(cur_stop_msg);

// 等待login_service汇报所有玩家都已经下线

}

当这个request_logout_all_accounts消息发送到space_server之后,所有的在线账号都会通知其对应的在线玩家去执行存库后下线操作,如果没有对应在线玩家则直接执行账号下线操作:

void space_server::on_request_logout_all_accounts(std::shared_ptr<network::net_connection> con, std::shared_ptr<const std::string> from, const json& msg)

{

// 通知所有的account 准备logout

auto cur_accounts = entity::entity_manager::instance().get_entities_by_exact_type<entity::account_entity>();

utility::rpc_msg logout_msg;

logout_msg.cmd = "request_logout_account";

for(auto one_account: cur_accounts)

{

one_account->on_rpc_msg(logout_msg);

}

utility::rpc_msg request_msg;

request_msg.cmd = "request_check_accounts_empty";

call_service("login_service", request_msg);

}

void account_entity::request_logout_account(const utility::rpc_msg& msg)

{

if(m_statem.active_state_name() == "logout_account")

{

return;

}

if(m_player_id.empty())

{

utility::rpc_msg request_msg;

request_msg.cmd = "request_logout_account";

call_service("login_service", request_msg);

dispatcher().dispatch(enums::event_category::account, "logout");

m_statem.change_to("logout_account");

}

else

{

utility::rpc_msg request_msg;

request_msg.cmd = "request_logout_player";

call_player(request_msg);

m_statem.change_to("logout_account");

}

}

当所有在线账号都下线之后,login_service会通知mgr_server下线阶段执行完成:

void service_server::report_accounts_all_logout()

{

m_logger->info("report_accounts_all_logout");

json report_info;

report_info["cmd"] = "report_accounts_all_logout";

report_info["param"] = json::object_t();

m_router->push_msg(m_local_name_ptr, std::make_shared<std::string>(m_upstream_server.name), std::make_shared<std::string>(report_info.dump()), enums::packet_cmd_helper::encode(enums::packet_cmd::server_control, 0));

}

在此之后mgr_server通知所有的service执行存库后退出操作,:

void mgr_server::on_accounts_all_logout(std::shared_ptr<network::net_connection> con, std::shared_ptr<const std::string> from, const json& msg)

{

m_logger->warn("on_accounts_all_logout");

if(m_stop_stage != stop_stage::wait_account_logout)

{

return;

}

m_stop_stage = stop_stage::wait_service_destroy;

// login_service汇报所有账号都已经下线

json sync_msg;

sync_msg["cmd"] = "notify_stop";

sync_msg["param"] = json::object_t();

auto cur_stop_msg = std::make_shared<std::string>(sync_msg.dump());

// 通知所有的service准备退出

broadcast_to_service_svrs(cur_stop_msg);

broadcast_to_space_svrs(cur_stop_msg);

}

同时由于所有的账号都下线了,所有的space_server也不再被需要,开始执行退出流程:

void space_server::stop_begin()

{

manager_base::stop_managers();

json_stub::stop_begin();

}

service_server接收到stop通知后,会遍历当前进程上的所有service,执行存库后退出操作:

void service_server::stop_begin()

{

m_logger->warn("service server {} stop_begin ", *m_local_name_ptr);

auto cur_services = m_service_mgr->get_all_servicies();

for(auto one_pair: cur_services)

{

one_pair.second->notify_stop();

}

manager_base::stop_managers();

}

当一个service完成了自己的退出逻辑之后,会通知到mgr_server:

void service_server::destroy_service(service::base_service* cur_service)

{

json report_destroy_info, temp_param;

report_destroy_info["cmd"] = "report_service_destroyed";

temp_param["service_type"] = cur_service->m_base_desc.m_type_name;

temp_param["service_id"] = cur_service->global_id();

report_destroy_info["param"] = temp_param;

m_router->push_msg( m_local_name_ptr, std::make_shared<std::string>(m_upstream_server.name), std::make_shared<std::string>(report_destroy_info.dump()), enums::packet_cmd_helper::encode(enums::packet_cmd::server_control, 0));

m_services_by_id.erase(cur_service->global_id());

m_services_to_destroy.push_back(cur_service);

}

当mgr_server记录的所有service都退出后,开始通知space_server与service_server执行连接清理并退出:

void mgr_server::on_service_all_destroyed()

{

m_logger->warn("on_service_all_destroyed");

if(m_stop_stage != stop_stage::wait_service_destroy)

{

return;

}

m_stop_stage = stop_stage::wait_space_server_destroy;

// service 都退出之后 通知 space server 与 service server主动退出

json sync_msg;

sync_msg["cmd"] = "notify_clear_connection";

sync_msg["param"] = json::object_t();

auto cur_stop_msg = std::make_shared<std::string>(sync_msg.dump());

broadcast_to_space_svrs(cur_stop_msg);

broadcast_to_service_svrs(cur_stop_msg);

// 等待 所有的 space 服务器都退出

}

当所有的space_server都下线之后,最后通知所有的资源服务器执行下线操作:

void mgr_server::on_space_server_all_destroyed()

{

m_logger->warn("on_space_server_all_destroyed");

if(m_stop_stage != stop_stage::wait_space_server_destroy)

{

return;

}

m_stop_stage = stop_stage::wait_connection_clear;

json sync_msg;

sync_msg["cmd"] = "notify_stop";

sync_msg["param"] = json::object_t();

auto cur_stop_msg = std::make_shared<std::string>(sync_msg.dump());

// 通知所有的资源服务器准备退出

broadcast_to_map_svrs(cur_stop_msg);

broadcast_to_redis_svrs(cur_stop_msg);

broadcast_to_db_svrs(cur_stop_msg);

}

由于redis与db才是最后负责数据落地的角色,所以这两种进程的退出条件优先判断是否所有的读写任务都已经完成:

bool db_server::check_stop_finish(bool with_log)

{

if (!mongo_task_channels.tasks_all_finished())

{

if(with_log)

{

m_logger->debug("mongo_task_channels check fail");

}

return false;

}

else

{

for (auto& one_worker : workers)

{

one_worker->notify_stop();

}

return json_stub::check_stop_finish(with_log);

}

}

bool redis_server::check_stop_finish(bool with_log)

{

if (!redis_task_channels.tasks_all_finished())

{

if (with_log)

{

m_logger->debug("redis_task_channels check fail");

}

return false;

}

else

{

for (auto &one_worker : workers)

{

one_worker->notify_stop();

}

return json_stub::check_stop_finish(with_log);

}

}

当所有的连接都断开之后,mgr_server的check_stop_finish就会返回true,并最终导致mgr_server进程退出。

BigWorld 的进程生命周期

启动流程

在Bigworld里,每种进程都有一个专门的main.cpp文件来定义其启动时的main函数,其文件内容大同小异,只是bwMainT< >的模板参数不同。其模板参数就是进程的App类,例如CellApp、BaseApp、LoginApp等:

// server\dbappmgr\main.cpp

int BIGWORLD_MAIN( int argc, char * argv[] )

{

return bwMainT< DBAppMgr >( argc, argv );

}

// server\loginapp\main.cpp

int BIGWORLD_MAIN( int argc, char * argv[] )

{

return bwMainT< LoginApp >( argc, argv );

}

这里的BIGWORLD_MAIN宏定义了进程的main函数,来封装一些通用的处理流程,例如初始化Bigworld的资源和配置,解析命令行参数等,最后调用bwMainT< >来启动进程的主循环:

#define BIGWORLD_MAIN \

bwMain( int argc, char * argv[] ); \

int main( int argc, char * argv[] ) \

{ \

BW_SYSTEMSTAGE_MAIN(); \

BWResource bwresource; \

BWResource::init( argc, (const char **)argv ); \

BWConfig::init( argc, argv ); \

bwParseCommandLine( argc, argv ); \

return bwMain( argc, argv ); \

} \

int bwMain

这个bwMainT< >模板函数负责进程运行环境的准备,包括初始化全局事件驱动器EventDispatcher、网络资源interface以及注册进程退出的信号处理函数signalProcessor。初始化好了这三个重要组件之后,继续利用模板函数doBWMainT< >来启动进程的主循环:

// lib\server\bwservice.hpp

template <class SERVER_APP>

int bwMainT( int argc, char * argv[], bool shouldLog = true )

{

Mercury::EventDispatcher dispatcher;

// Find the internal interface IP by querying BWMachined

if (!Mercury::MachineDaemon::queryForInternalInterface(

ServerApp::discoveredInternalIP ))

{

WARNING_MSG( "bwMainT: "

"Unable to determine internal interface via BWMachineD query.\n" );

}

BW::string internalInterfaceName =

getBWInternalInterfaceSetting( SERVER_APP::configPath() );

Mercury::NetworkInterface interface( &dispatcher,

Mercury::NETWORK_INTERFACE_INTERNAL, 0,

internalInterfaceName.c_str() );

SignalProcessor signalProcessor( dispatcher );

BW_MESSAGE_FORWARDER3( SERVER_APP::appName(), SERVER_APP::configPath(),

/*ENABLED=*/shouldLog, dispatcher, interface );

START_MSG( SERVER_APP::appName() );

if (internalInterfaceName != Mercury::NetworkInterface::USE_BWMACHINED)

{

CONFIG_WARNING_MSG( "internalInterface set to '%s' in bw.xml. "

"This option is deprecated. It is recommended to not set this "

"value. The default behaviour is to use the same interface as "

"bwmachined. This is controlled by the broadcast route.\n",

internalInterfaceName.c_str() );

}

int result = doBWMainT< SERVER_APP >( dispatcher, interface, argc, argv );

INFO_MSG( "%s has shut down.\n", SERVER_APP::appName() );

return result;

}

这个doBWMainT的实现就非常简单了,主要就是创建一个SERVER_APP的实例,然后调用其runApp方法来启动进程的主循环:

template <class SERVER_APP>

int doBWMainT( Mercury::EventDispatcher & dispatcher,

Mercury::NetworkInterface & interface,

int argc, char * argv[] )

{

if (!ServerAppConfig::init( SERVER_APP::Config::postInit ))

{

CONFIG_ERROR_MSG( "Failed to initialise configuration options. "

"See earlier error message for details.\n" );

return -1;

}

SERVER_APP serverApp( dispatcher, interface );

serverApp.setBuildDate( __TIME__, __DATE__ );

return serverApp.runApp( argc, argv ) ? EXIT_SUCCESS : EXIT_FAILURE;

}

每种进程都有一个对应的App类,这些App类都继承自ServerApp类。ServerApp类是Bigworld进程管理的核心类,ServerApp::runApp来调用init初始化相关组件:

/**

* This method runs this application.

*/

bool ServerApp::runApp( int argc, char * argv[] )

{

// calculate the clock speed

stampsPerSecond();

bool result = false;

if (this->init( argc, argv ))

{

INFO_MSG( "---- %s is running ----\n", this->getAppName() );

result = this->run();

}

else

{

ERROR_MSG( "Failed to initialise %s\n", this->getAppName() );

}

this->fini();

interface_.prepareForShutdown();

#if ENABLE_PROFILER

g_profiler.fini();

#endif

return result;

}

这里的init方法负责初始化性能监控g_profiler,退出信号处理函数pSignalHandler_,以及FD的最大打开数,并设置网络接口的日志等级:

/**

* Initialisation function.

*

* This needs to be called from subclasses' overrides.

*/

bool ServerApp::init( int argc, char * argv[] )

{

PROFILER_SCOPED( ServerApp_init );

bool runFromMachined = false;

for (int i = 1; i < argc; ++i)

{

if (strcmp( argv[i], "-machined" ))

{

runFromMachined = true;

}

}

INFO_MSG( "ServerApp::init: Run from bwmachined = %s\n",

watcherValueToString( runFromMachined ).c_str() );

#if ENABLE_PROFILER

if (ServerAppConfig::hasHitchDetection())

{

g_profiler.setProfileMode( Profiler::SORT_BY_TIME, false );

}

#endif

pSignalHandler_.reset( this->createSignalHandler() );

// Handle signals

this->enableSignalHandler( SIGINT );

this->raiseFileDescriptorLimit( ServerAppConfig::maxOpenFileDescriptors() );

interface_.verbosityLevel( ServerAppConfig::isProduction() ?

Mercury::NetworkInterface::VERBOSITY_LEVEL_NORMAL :

Mercury::NetworkInterface::VERBOSITY_LEVEL_DEBUG );

return true;

}

在完成了init之后,ServerApp::runApp接下来会调用run方法来启动进程的主循环EventDispatcher::processOnce:

/**

* This is the default implementation of run. Derived classes to override

* this to implement their own functionality.

*/

bool ServerApp::run()

{

mainDispatcher_.processUntilBreak();

this->onRunComplete();

return true;

}

/**

* This method call processContinuously until breakProcessing is called.

*/

void EventDispatcher::processUntilBreak()

{

this->processContinuously();

pErrorReporter_->reportPendingExceptions( true /* reportBelowThreshold */ );

}

/**

* This method processes events continuously until interrupted by a call to

* breakProcessing.

*

* @see breakProcessing

*/

void EventDispatcher::processContinuously()

{

breakProcessing_ = false;

while (!breakProcessing_)

{

this->processOnce( /* shouldIdle */ true );

}

}

在processOnce方法中,会先处理频繁任务processFrequentTasks,然后处理定时器processTimers,随后是性能统计processStats,最后处理网络事件processNetwork:

/**

* This method processes the current events.

*

* @param shouldIdle If set to true, this method will block until the next

* timer is due if there is nothing waiting on the network.

*

* @return The number of network events processed.

*/

int EventDispatcher::processOnce( bool shouldIdle /* = false */ )

{

breakProcessing_ = false;

this->processFrequentTasks();

if (!breakProcessing_)

{

this->processTimers();

}

this->processStats();

if (!breakProcessing_)

{

return this->processNetwork( shouldIdle );

}

return 0;

}

这个processFrequentTasks方法会通过FrequentTasks::process方法来处理所有通过mainDispatcher_.addFrequentTask注册过来的FrequentTask,目前主要用到这个FrequentTask的地方不多,只有mysql,signal和http相关的任务会注册到FrequentTasks中:

/**

* This method calls doTask on all registered FrequentTasks objects.

*/

void EventDispatcher::processFrequentTasks()

{

PROFILER_SCOPED( processFrequentTasks );

pFrequentTasks_->process();

}

// lib\db_storage_mysql\mysql_database.cpp

/**

* This method implements the FrequentTask method.

*/

void MySqlDatabase::doTask()

{

bgTaskManager_.tick();

}

// lib\server\signal_processor.cpp

void SignalProcessor::doTask()

{

this->dispatch();

}

/**

* Handle frequent task trigger.

*/

void SignalProcessor::dispatch()

{

const Signal::Set allSignals( Signal::Set::FULL );

Signal::Blocker blocker( allSignals );

int sigNum = SIGMIN;

while (sigNum <= SIGMAX)

{

if (signals_.isSet( sigNum ))

{

this->dispatchSignal( sigNum );

}

++sigNum;

}

signals_.clearAll();

}

在processTimers方法里会调用TimeQueue::process方法来处理全局计时器队列pTimeQueue_里所有到期的定时器任务,这个方法会返回处理的定时器任务数量,我们可以通过这个数量来判断是否有定时器任务到期:

/**

* This method processes outstanding timers.

*/

void EventDispatcher::processTimers()

{

PROFILER_SCOPED( processTimers );

numTimerCalls_ += pTimeQueue_->process( timestamp() );

}

在processNetwork方法中,会调用Poller::processPendingEvents方法来处理所有等待在网络上的事件,这个方法会返回处理的事件数量,我们可以通过这个数量来判断是否有网络事件发生:

/**

* This method processes any activity on the network.

*

* @param shouldIdle If set to true, this method will block until the next

* timer is due if there is nothing waiting on the network.

* @return Number of file descriptors that triggered handlers.

*/

int EventDispatcher::processNetwork( bool shouldIdle )

{

PROFILER_SCOPED( processNetwork );

// select for network activity until earliest timer

double maxWait = shouldIdle ? this->calculateWait() : 0.0;

return pPoller_->processPendingEvents( maxWait );

}

目前这个Poller的实现有三种, 就是常见的Linux系统上的select,poll和epoll:

SelectPoller调用select来等待文件描述符的相关状态改变,并执行对应的事件处理函数。PollPoller调用poll来等待文件描述符的相关状态改变,并执行对应的事件处理函数。EPoller调用epoll_wait来等待文件描述符的相关状态改变,并执行对应的事件处理函数。

运行的时候根据编译选项会选择不同的Poller实现,默认是SelectPoller,如果编译选项中定义了HAS_POLL,则会选择PollPoller,如果定义了HAS_EPOLL,则会选择EPoller。

/**

* This static method creates an appropriate EventPoller. It may use select or

* epoll.

*/

EventPoller * EventPoller::create()

{

#if defined( HAS_POLL )

return new PollPoller();

#elif defined( HAS_EPOLL )

return new EPoller();

#else // !defined( HAS_EPOLL )

return new SelectPoller();

#endif // defined( HAS_EPOLL )

}

这里的事件循环里并没有处理具体的业务逻辑,只是处理了网络事件和定时器任务。ServerApp的具体子类负责利用这里提供的Task和Timer组件来插入自身的业务逻辑。以最简单的DBApp为例,在其连接到数据库之后会调用initTimers,这里会开启一个固定间隔且重复调用的计时器来驱动GameTick,在其超时处理函数handleTimeout里用来定时调用advanceTime方法来推进时间:

/**

* This method sets up required timers.

*

* @return true on success, false otherwise.

*/

bool DBApp::initTimers()

{

MF_ASSERT( status_.status() < DBStatus::RUNNING );

// A one second timer to check all sorts of things, including whether to

// start the server running if we are waiting for other components to

// be ready.

statusCheckTimer_ = mainDispatcher_.addTimer( 1000000, this,

reinterpret_cast< void * >( TIMEOUT_STATUS_CHECK ),

"StatusCheck" );

// NOTE: DBApp's time is not synchronised with the rest of the cluster.

gameTimer_ = mainDispatcher_.addTimer( 1000000/Config::updateHertz(), this,

reinterpret_cast< void * >( TIMEOUT_GAME_TICK ),

"GameTick" );

return true;

}

/**

* This method handles timer events. It is called every second.

*/

void DBApp::handleTimeout( TimerHandle handle, void * arg )

{

switch (reinterpret_cast< uintptr >( arg ))

{

case TIMEOUT_GAME_TICK:

this->advanceTime();

break;

case TIMEOUT_STATUS_CHECK:

this->checkStatus();

break;

}

}

这个advanceTime由ServerApp这个基类来提供,主要内容就是推进游戏时间,提供游戏的Tick机制,也就是常说的游戏帧的概念。内部会调用onTickPeriod、onEndOfTick、onStartOfTick、onTickProcessingComplete等回调函数,以及调用callUpdatables方法来更新所有Updatable对象。

/**

* This method increments the game time.

*/

void ServerApp::advanceTime()

{

if (lastAdvanceTime_ != 0)

{

double tickPeriod = stampsToSeconds( timestamp() - lastAdvanceTime_ );

this->onTickPeriod( tickPeriod );

}

lastAdvanceTime_ = timestamp();

this->onEndOfTick();

++time_;

#if ENABLE_PROFILER

g_profiler.tick();

#endif

this->onStartOfTick();

this->callUpdatables();

this->onTickProcessingComplete();

}

这里的几个Tick相关函数目前基本都是空实现,主要是为了预留扩展点,方便子类来实现自己的逻辑。只有ServerApp::onTickPeriod提供了一个基础实现,就是对过长的帧时间进行警告并记录:

virtual void onTickPeriod( double tickPeriod );

/*

* This method gives subclasses a chance to act at the end of a tick

* immediately before the current server time is incremented.

*/

virtual void onEndOfTick() {};

/*

* This method gives subclasses a chance to act at the beginning of a tick

* immediately after the current server time is incremented.

*/

virtual void onStartOfTick() {};

/*

* This method gives subclassses a chance to act at the end of ServerApp's

* tick processing, immediately before control returns to the caller of

* ServerApp::advanceTime();

*/

virtual void onTickProcessingComplete() {};

/*

* This method gives subclasses the tick period each time advanceTime is

* called

*/

void ServerApp::onTickPeriod( double tickPeriod )

{

if (tickPeriod * ServerAppConfig::updateHertz() > 2.0)

{

WARNING_MSG( "ServerApp::onTickPeriod: "

"Last game tick took %.2f seconds. Expected %.2f.\n",

tickPeriod, 1.0/ServerAppConfig::updateHertz() );

}

#if ENABLE_PROFILER

if (ServerAppConfig::hasHitchDetection() &&

((tickPeriod * ServerAppConfig::updateHertz()) >

ServerAppConfig::hitchDetectionThreshold()))

{

WARNING_MSG( "Service::onTickPeriod: "

"Server hitch detected, creating JSON dump.\n" );

g_profiler.dumpThisFrame();

}

#endif

}

注册互联

Bigworld服务器集群里会存在各种角色的进程,包括DBApp、GameApp、LoginApp等。对于DBApp、BaseApp、CellApp等角色的进程,在一个集群里会有一个到多个具体的实例。为了统一管理这些不定数量的角色实例,Bigworld里设计了DBAppMgr、BaseAppMgr和CellAppMgr等管理器。在这些DBApp、BaseApp、CellApp等角色的进程启动之后,会将这些进程注册到对应的Mgr实例里。这个注册机制依赖于ServerApp上提供的一个ManagerAppGateway类型的对象,默认情况下ServerApp::pManagerAppGateway返回空,只有在这些需要被统一管理的进程角色里会对这个接口进行重载:

// lib\server\server_app.hpp

virtual ManagerAppGateway * pManagerAppGateway() { return NULL; }

// server\baseapp\baseapp.hpp

ManagerAppGateway * pManagerAppGateway() /* override */

{

return &baseAppMgr_;

}

// server\cellapp\cellapp.hpp

ManagerAppGateway * pManagerAppGateway() /* override */

{

return &cellAppMgr_;

}

// server\dbapp\dbapp.hpp

ManagerAppGateway * pManagerAppGateway() /* override */

{

return &dbAppMgr_;

}

这个ManagerAppGateway负责当前进程与对应的管理进程之间的注册与反注册,构造的时候需要提供通信用的网络通道networkInterface和反注册用的RPC retireAppIE:

ManagerAppGateway::ManagerAppGateway( Mercury::NetworkInterface & networkInterface,

const Mercury::InterfaceElement & retireAppIE ) :

channel_( networkInterface, Mercury::Address::NONE ),

retireAppIE_( retireAppIE )

{

MF_ASSERT( retireAppIE_.lengthStyle() == Mercury::FIXED_LENGTH_MESSAGE );

MF_ASSERT( retireAppIE_.lengthParam() == 0 );

}

// server\dbapp\dbappmgr_gateway.cpp

DBAppMgrGateway::DBAppMgrGateway( Mercury::NetworkInterface & interface ) :

ManagerAppGateway( interface, DBAppMgrInterface::retireApp )

{}

// server\cellapp\cellappmgr_gateway.cpp

CellAppMgrGateway::CellAppMgrGateway( Mercury::NetworkInterface & interface ) :

ManagerAppGateway( interface, CellAppMgrInterface::retireApp )

{}

// server\baseapp\baseappmgr_gateway.cpp

BaseAppMgrGateway::BaseAppMgrGateway( Mercury::NetworkInterface & interface ) :

ManagerAppGateway( interface, BaseAppMgrInterface::retireApp )

{}

这个ManagerAppGateway构造函数并不直接负责注册,依赖于外部来调用ManagerAppGateway::init来初始化底层的通道Channel。接口需要提供能与目标ManagerApp通信的interfaceName,通过这个interfaceName来查询对应的通信地址:

bool ManagerAppGateway::init( const char * interfaceName, int numRetries, float maxMgrRegisterStagger )

{

if (!almostZero( maxMgrRegisterStagger ))

{

const float MICROSECONDS_IN_SECOND = 1000000.0f;

// Spread starting time of processes within a tick to avoid possible network peaks during startup

BWRandom rand( mf_getpid() );

uint32 delay = static_cast<uint>( rand( 0.0f, maxMgrRegisterStagger ) * MICROSECONDS_IN_SECOND );

if (delay > 0)

{

DEBUG_MSG( "ManagerAppGateway::init: "

"Manager Registration Stagger mode is active: maxMgrRegisterStagger: %f s. "

"Delaying process start for %f s.\n",

maxMgrRegisterStagger, delay / MICROSECONDS_IN_SECOND );

usleep( delay );

}

}

Mercury::Address addr;

Mercury::Reason reason =

Mercury::MachineDaemon::findInterface( interfaceName,

0, addr, numRetries );

if (reason == Mercury::REASON_SUCCESS)

{

channel_.addr( addr );

}

// This channel is irregular until we start the game tick timer.

this->isRegular( false );

return reason == Mercury::REASON_SUCCESS;

}

这个Mercury::MachineDaemon::findInterface函数负责查询interfaceName对应的通信地址addr,具体做法是向当前进程所属的MachineDaemon发送一个广播消息ProcessStatsMessage,查询所有能提供interfaceName的进程的通信地址,获取第一个返回的通信地址:

/**

* This method finds a specified interface on the network.

* WARNING: This function always blocks.

*

* @param name Only interfaces with this name are considered.

* @param id Only interfaces with this ID are considered, if

* negative all are considered.

* @param addr Output address of the found interface.

* @param retries The number of retries if no interface is found.

* @param verboseRetry Flag for versbose output on retry.

* @param pHandler Handler to process ProcessStatsMessages responses. If

* NULL, the default FindFirstInterfaceHandler is used.

*

* @return A Mercury::REASON_SUCCESS if an interface was found, a

* Mercury::REASON_TIMER_EXPIRED if an interface was not found, other

* Mercury::Reasons are returned if there is an error.

*/

Reason findInterface( const char * name, int id,

Address & addr, int retries, bool verboseRetry,

IFindInterfaceHandler * pHandler )

{

ProcessStatsMessage pm;

pm.param_ = pm.PARAM_USE_CATEGORY |

pm.PARAM_USE_UID |

pm.PARAM_USE_NAME |

(id <= 0 ? 0 : pm.PARAM_USE_ID);

pm.category_ = pm.SERVER_COMPONENT;

pm.uid_ = getUserId();

pm.name_ = name;

pm.id_ = id;

IFindInterfaceHandler * pDefaultHandler = NULL;

if (!pHandler)

{

pDefaultHandler = new FindFirstInterfaceHandler();

pHandler = pDefaultHandler;

}

int attempt = 0;

retries = std::max( retries, 1 );

while (++attempt <= retries)

{

Reason reason = pm.sendAndRecv( 0, BROADCAST, pHandler );

if (reason != REASON_SUCCESS)

{

return reason;

}

if (pHandler->hasError())

{

return REASON_GENERAL_NETWORK;

}

Address result = pHandler->result();

if (result != Address::NONE)

{

addr = result;

return REASON_SUCCESS;

}

if (verboseRetry)

{

INFO_MSG( "MachineDaemon::findInterface: "

"Failed to find %s for UID %d on attempt %d.\n",

name, pm.uid_, attempt );

}

// Sleep a little because sendAndReceiveMGM() is too fast now! :)

#if defined( PLAYSTATION3 )

sys_timer_sleep( 1 );

#elif !defined( _WIN32 )

sleep( 1 );

#else

Sleep( 1000 );

#endif

}

if (pDefaultHandler)

{

bw_safe_delete( pDefaultHandler );

}

return REASON_TIMER_EXPIRED;

}

这个findInterface的核心点在于ProcessStatsMessage的广播,需要探究一下这个消息是如何通知到当前服务器所在集群的MachineDaemon的。

Mercury::Reason MachineGuardMessage::sendAndRecv( uint32 srcip, uint32 destaddr,

ReplyHandler *pHandler )

{

BW_GUARD;

// Set up socket

Endpoint ep;

ep.socket( SOCK_DGRAM );

if (!ep.good() || ep.bind( 0, srcip ) != 0)

{

return Mercury::REASON_GENERAL_NETWORK;

}

return this->sendAndRecv( ep, destaddr, pHandler );

}

/**

* This method sends this MachineGuardMessage message to the bwmachined at the

* input address. The reply messages received are handled by the provided

* handler.

*

* Note: If sending to BROADCAST, REASON_TIMER_EXPIRED will be returned if not

* all bwmachined daemons reply, even if some are successful.

*/

Mercury::Reason MachineGuardMessage::sendAndRecv( Endpoint &ep, uint32 destaddr,

ReplyHandler *pHandler )

{

BW_GUARD;

if (destaddr == BROADCAST)

{

ep.setbroadcast( true );

}

char recvbuf[ MGMPacket::MAX_SIZE ];

MachineGuardResponseChecker responseChecker;

int countdown = 3;

while (countdown--)

{

if (!this->sendto( ep, htons( PORT_MACHINED ), destaddr,

MGMPacket::PACKET_STAGGER_REPLIES ))

{

ERROR_MSG( "MachineGuardMessage::sendAndRecv: "

"Failed to send entire MGM (#%d tries left)\n",

countdown );

continue;

}

// 省略很多代码

}

ERROR_MSG( "MachineGuardMessage::sendAndRecv: timed out!\n" );

return Mercury::REASON_TIMER_EXPIRED;

}

这里的sendto函数调用的时候,第二个参数是端口号PORT_MACHINED,这个端口号是bwmachined的监听端口,用于接收MachineGuardMessage消息。第三个参数是目标机器的地址,当前执行findInterface的时候被设置为了const uint32 BROADCAST = 0xFFFFFFFF;,表示广播发送。这个广播的UDP消息包只会发送到当前本地网络里的所有设备,不会被路由器转发到其他网段。这样粗暴的使用广播数据包来执行服务发现的方法真是令人叹为观止!这种方法的成功依赖于局域网内有开启PORT_MACHINED的监听的进程,目前Bigworld里使用了一个单独的BWMachineD进程来监听这个端口

/**

* Discover the broadcast interface to use and init all the endpoints.

*/

void BWMachined::initNetworkInterfaces()

{

// Determine which network interface will be sending broadcast messages

if (broadcastAddr_ == 0 && !this->findBroadcastInterface())

{

syslog( LOG_CRIT, "Failed to determine default broadcast interface. "

"Make sure UDP ports %d and %d are not firewalled and that "

"your broadcast route is set correctly. e.g. "

"/sbin/ip route add broadcast 255.255.255.255 dev eth0",

PORT_MACHINED, PORT_BROADCAST_DISCOVERY );

exit( EXIT_FAILURE );

}

if (!ep_.good() ||

ep_.bind( htons( PORT_MACHINED ), broadcastAddr_ ) == -1)

{

syslog( LOG_CRIT, "Failed to bind socket to '%s'. %s.",

inet_ntoa((struct in_addr &)broadcastAddr_),

strerror(errno) );

exit( EXIT_FAILURE );

}

ep_.setbroadcast( true );

if (!epLocal_.good() ||

epLocal_.bind( htons( PORT_MACHINED ), LOCALHOST ) == -1)

{

syslog( LOG_CRIT, "Failed to bind socket to (lo). %s.",

strerror(errno) );

exit( EXIT_FAILURE );

}

if (!epBroadcast_.good() ||

epBroadcast_.bind( htons( PORT_MACHINED ), BROADCAST ) == -1)

{

syslog( LOG_CRIT, "Failed to bind socket to '%s'. %s.",

inet_ntoa((struct in_addr &)BROADCAST),

strerror(errno) );

exit( EXIT_FAILURE );

}

cluster_.ownAddr_ = broadcastAddr_;

}

这个BWMachineD进程在每个物理机上都有一个实例,然后多个实例之间通过PORT_MACHINED端口进行通信,从而组成一个集群cluster。

当一个ServerApp启动的时候,会将当前进程的地址、端口、pid、网络角色等信息打包成一个MachineGuardMessage消息,然后发送到本机的PORT_MACHINED端口。

// server\baseapp\baseapp.cpp

/**

* This method does the portion of the init after this app has been

* successfully added to the BaseAppMgr.

*/

bool BaseApp::finishInit( const BaseAppInitData & initData,

BinaryIStream & stream )

{

// 省略一些代码

if (isServiceApp_)

{

BaseAppIntInterface::registerWithMachinedAs( "ServiceAppInterface",

this->intInterface(), id_ );

}

else

{

BaseAppIntInterface::registerWithMachined( this->intInterface(), id_ );

}

}

// server\baseappmgr\baseappmgr.cpp

/**

* This method initialises this object.

*

* @return True on success, false otherwise.

*/

bool BaseAppMgr::init( int argc, char * argv[] )

{

// 省略一些代码

Mercury::Reason reason =

BaseAppMgrInterface::registerWithMachined( interface_, 0 );

}

// server\cellapp\cellapp.cpp

/**

* This method handles the portion of init after registering with the

* CellAppMgr.

*/

bool CellApp::finishInit( const CellAppInitData & initData )

{

// 省略一些代码

CellAppInterface::registerWithMachined( this->interface(), id_ );

}

// server\cellappmgr\cellappmgr.cpp

/**

* The initialisation method.

*/

bool CellAppMgr::init( int argc, char * argv [] )

{

// 省略一些代码

{

CellAppMgrInterface::registerWithInterface( interface_ );

Mercury::Reason reason =

CellAppMgrInterface::registerWithMachined( interface_, 0 );

}

}

// server\dbapp\dbapp.cpp

/**

* This method performs initialisation for our newly received DBApp ID.

*

* @return true on success, false otherwise.

*/

bool DBApp::initAppIDRegistration()

{

// 省略一些代码

if (DBAppInterface::registerWithMachined( interface_, id_ ) !=

Mercury::REASON_SUCCESS)

{

NETWORK_ERROR_MSG( "DBApp::initAppIDRegistration: "

"Unable to register with interface. Is machined running?\n" );

return false;

}

}

// server\dbappmgr\dbappmgr.cpp

bool DBAppMgr::init( int argc, char * argv [] )

{

// 省略一些代码

reason =

DBAppMgrInterface::registerWithMachined( interface_, 0 );

}

// server\loginapp\loginapp.cpp

/**

* This method completes initialisation after registration to DBAppMgr.

*

* @param appID The LoginApp ID.

* @param dbAppAlphaAddress The address of DBApp Alpha.

*/

bool LoginApp::finishInit( LoginAppID appID,

const Mercury::Address & dbAppAlphaAddress )

{

DEBUG_MSG( "LoginApp::finishInit: id %d (DBApp Alpha: %s)\n",

appID, dbAppAlphaAddress.c_str() );

id_ = appID;

dbAppAlpha_.addr( dbAppAlphaAddress );

Mercury::Reason reason =

LoginIntInterface::registerWithMachined( this->intInterface(), id_ );

// 省略一些代码

}

上述各种Interface::registerWithMachined执行的都是NetworkInterface::registerWithMachined:

/**

* This method is used to register or deregister an interface with the machine

* guard (a.k.a. machined).

*/

Reason NetworkInterface::registerWithMachined(

const BW::string & name, int id )

{

return this->interfaceTable().registerWithMachined( this->address(),

name, id );

}

Reason InterfaceTable::registerWithMachined( const Address & addr )

{

return MachineDaemon::registerWithMachined( addr,

name_, id_ );

}

Reason InterfaceTable::registerWithMachined( const Address & addr,

const BW::string & name, int id )

{

name_ = name;

id_ = id;

return this->registerWithMachined( addr );

}

最后这里的MachineDaemon::registerWithMachined就是往LOCALHOST的BWMachineD发送注册信息的过程:

/**

* This function registers a socket with BWMachined.

*/